Kafka Tutorial

Kafka Tutorial and Resources

Kafka is a distributed streaming platform that was created at LinkedIn and later open-sourced and donated to the Apache Software Foundation. It has a large community with active contributions from users and developers. Kafka is distributed by design: it runs as a cluster across multiple servers, so it can draw on additional processing power and storage capacity as servers are added.
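Spreading data across servers can be sketched with a toy placement function. The sketch makes several assumptions. A topic is split into partitions, which are the unit Kafka distributes across servers (brokers). Each partition is copied to `replication_factor` brokers. The simple round-robin rule below is not Kafka's actual replica-assignment algorithm. The function names are hypothetical.

```python
from __future__ import annotations


def assign_replicas(num_partitions: int, brokers: list[int], replication_factor: int) -> dict[int, list[int]]:
    """Toy placement: return {partition: [broker ids]}; the first id acts as leader here."""
    if replication_factor > len(brokers):
        raise ValueError("replication factor cannot exceed the number of brokers")
    assignment = {}
    for p in range(num_partitions):
        # Shift the starting broker per partition so leaders (and load) are spread out.
        assignment[p] = [brokers[(p + i) % len(brokers)] for i in range(replication_factor)]
    return assignment


def surviving_partitions(assignment: dict[int, list[int]], failed_broker: int) -> dict[int, list[int]]:
    """Replicas still available for each partition after one broker goes down."""
    return {p: [b for b in replicas if b != failed_broker] for p, replicas in assignment.items()}


if __name__ == "__main__":
    layout = assign_replicas(num_partitions=6, brokers=[1, 2, 3], replication_factor=2)
    for partition, replicas in layout.items():
        print(f"partition {partition}: leader={replicas[0]} followers={replicas[1:]}")
    # With two copies on distinct brokers, losing any one broker leaves every partition a copy.
    print(all(surviving_partitions(layout, failed_broker=2).values()))
```

Adding a fourth broker to the `brokers` list spreads the same partitions over more machines. This is the sense in which more servers mean more storage and processing capacity.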

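The main components of Kafka are:

- Topic: a named stream of records that data is written to and read from.
- Producer: a client application that publishes records to topics.
- Consumer: a client application that subscribes to topics and reads their records.
- Broker: a Kafka server that stores topic data and serves producers and consumers; several brokers form a cluster.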
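Internally, each topic is split into partitions. Each partition is an ordered, append-only log in which every record gets a sequential offset. The toy model below sketches that data structure under simplifying assumptions:

- The `Topic` and `Record` classes are hypothetical.
- Everything lives in memory.
- `zlib.crc32` stands in for the murmur2 hash that Kafka's default Java partitioner applies to record keys.

```python
from __future__ import annotations

import zlib
from dataclasses import dataclass


@dataclass
class Record:
    key: str
    value: str
    offset: int  # position of the record within its partition


class Topic:
    """Toy topic: a fixed number of in-memory, append-only partitions."""

    def __init__(self, name: str, num_partitions: int) -> None:
        self.name = name
        self.partitions: list[list[Record]] = [[] for _ in range(num_partitions)]

    def partition_for(self, key: str) -> int:
        # Records with the same key always map to the same partition.
        # Kafka's default Java partitioner uses murmur2; crc32 is only a stand-in.
        return zlib.crc32(key.encode("utf-8")) % len(self.partitions)

    def append(self, key: str, value: str) -> tuple[int, int]:
        """Append a record and return (partition, offset)."""
        p = self.partition_for(key)
        log = self.partitions[p]
        record = Record(key, value, offset=len(log))  # offsets grow 0, 1, 2, ... per partition
        log.append(record)
        return p, record.offset

    def read(self, partition: int, from_offset: int) -> list[Record]:
        # Reading never removes records; a consumer only remembers its next offset.
        return self.partitions[partition][from_offset:]


if __name__ == "__main__":
    topic = Topic("orders", num_partitions=3)
    for i in range(4):
        print(topic.append("user-42", f"order {i}"))
```

All four records share the key `user-42`, so they land in the same partition with offsets 0 to 3. That is how ordering is preserved per key while different keys can be spread over several partitions.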

In this article, we will go through the need for Kafka, its applications, and its prerequisites, and outline a simple Hello World program using Kafka.

Why do we need Kafka?

The following key aspects justify the need for Kafka:

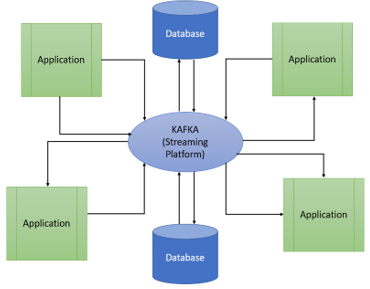

- Backend Architecture is simplified: Kafka is a streaming platform that can store huge amounts of data. This data is persistent and is replicated for fault tolerance. The figure above shows the architecture of a complex system simplified by using Kafka.

- Real-Time Processing of Data: A real-time application needs a continuous flow of data, and that data should be processed immediately with low latency. Kafka Streams is a client library for building and deploying stream-processing applications without a separate stream-processing cluster or heavy, expensive infrastructure.

- Connects to Existing Systems: Kafka provides a framework known as Kafka Connect to connect to existing systems and maintain a universal data pipeline.
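Kafka Streams itself is a Java library, so the sketch below does not use its API. It is a hypothetical, plain-Python illustration of the record-at-a-time processing described above. It is loosely modelled on the word-count example commonly used to introduce Kafka Streams.

- Each input line stands in for a record read from an input topic.
- An updated count is emitted as soon as that record is processed, rather than after a batch job finishes.

```python
from __future__ import annotations

from collections import Counter
from typing import Iterable, Iterator


def word_count_stream(lines: Iterable[str]) -> Iterator[tuple[str, int]]:
    """Yield an updated (word, count) pair as soon as each input line is processed."""
    counts = Counter()  # state kept between records, similar in role to a state store
    for line in lines:  # each line stands in for one record consumed from an input topic
        for word in line.lower().split():
            counts[word] += 1
            # A real application would write this update to an output topic.
            yield word, counts[word]


if __name__ == "__main__":
    for update in word_count_stream(["hello kafka", "hello streams"]):
        print(update)
```

Because `word_count_stream` is a generator, it behaves the same on an unbounded input as on a finite list. Results appear while data is still arriving, which is the point of stream processing.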

Applications

The following are a few applications of Kafka:

- Netflix: Netflix uses Kafka for real-time monitoring and event processing to understand user interests and predict which media a user might be interested in.

- LinkedIn: LinkedIn uses the Kafka messaging system in products such as LinkedIn Newsfeed and LinkedIn Today, and to feed offline analytics systems such as Hadoop. Kafka's strong durability is a key reason it is used at LinkedIn.

- Twitter: Twitter uses Storm-Kafka (the integration between Apache Storm and Kafka) as part of its stream-processing infrastructure.

Example

Let us take an example to understand how a message is sent over a topic in Kafka. Suppose we want to send the message 'Hello World' over a topic, starting from scratch. To do so, we follow these steps:

Note: The syntax for each step is out of scope for this blog. The steps give you an idea of the flow of execution of a program that sends a message over a topic.

Step-1: Start the Zookeeper Server

Step-2: Start the Kafka Server

Step-3: Create a topic

Step-4: Create a producer node

Step-5: Send a message using the producer node

Step-6: Create a consumer node and subscribe to the topic

After these steps, the consumer node, subscribed to the topic, can read the 'Hello World' message from it.
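Exact syntax is out of scope here, so the sketch below illustrates only the flow of Steps 3 to 6. It uses hypothetical in-memory classes (`ToyBroker`, `ToyProducer`, `ToyConsumer`) and a made-up topic name, `hello-topic`.

This is not the Kafka client API. A real program would connect to a running broker through a client library, for example the Java `KafkaProducer` and `KafkaConsumer` classes. ZooKeeper (Step 1) is not modeled; recent Kafka releases can also run without it in KRaft mode.

```python
from __future__ import annotations


class ToyBroker:
    """Stands in for a running Kafka server (Step 2); topics are in-memory lists."""

    def __init__(self) -> None:
        self.topics: dict[str, list[str]] = {}

    def create_topic(self, name: str) -> None:  # Step 3
        self.topics.setdefault(name, [])


class ToyProducer:  # Step 4
    def __init__(self, broker: ToyBroker) -> None:
        self.broker = broker

    def send(self, topic: str, message: str) -> int:  # Step 5
        log = self.broker.topics[topic]
        log.append(message)
        return len(log) - 1  # offset assigned to the new message


class ToyConsumer:  # Step 6
    def __init__(self, broker: ToyBroker) -> None:
        self.broker = broker
        self.positions: dict[str, int] = {}  # next offset to read, per topic

    def subscribe(self, topic: str) -> None:
        # Start at the earliest offset, so messages sent before subscribing are still read.
        self.positions[topic] = 0

    def poll(self) -> list[str]:
        """Return messages not yet seen and advance the stored positions."""
        messages: list[str] = []
        for topic, pos in self.positions.items():
            new = self.broker.topics[topic][pos:]
            messages.extend(new)
            self.positions[topic] = pos + len(new)
        return messages


if __name__ == "__main__":
    broker = ToyBroker()
    broker.create_topic("hello-topic")
    ToyProducer(broker).send("hello-topic", "Hello World")
    consumer = ToyConsumer(broker)
    consumer.subscribe("hello-topic")
    print(consumer.poll())  # ['Hello World']
    print(consumer.poll())  # [] (nothing new since the last poll)
```

In the steps above, the consumer is created after the message is sent. It only sees 'Hello World' because the toy consumer starts from the earliest offset. With a real consumer group, that corresponds to setting `auto.offset.reset` to `earliest` (the default is `latest`), or to the console consumer's `--from-beginning` option.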

Prerequisites

Before learning Kafka, you should be familiar with distributed messaging systems, Scala, Java, and the Linux environment.

Target Audience

This tutorial is for professionals who want to build a career in big data analytics using the Apache Kafka messaging system.
