Updated May 30, 2023

Introduction to Kafka MirrorMaker

Apache Kafka ships with a simple tool for replicating data between two data centers. It is called MirrorMaker, and at its core it is a collection of consumers (called streams) that all belong to the same consumer group and read from the set of topics you have chosen to replicate. It is little more than a Kafka consumer and producer tied together: data is read from topics in the origin cluster and written to a topic with the same name in the destination cluster. Let us explore Kafka MirrorMaker further by looking at its architecture.

Architecture of Kafka MirrorMaker

- Copying data between Kafka clusters is called “mirroring.” Mirroring is commonly used to keep a separate copy of a Kafka cluster in another data center. Kafka’s MirrorMaker reads data from topics in one or more source Kafka clusters and writes it to the corresponding topics (with the same topic names) in a destination Kafka cluster.

- The source and destination clusters are independent of each other: they can have different numbers of partitions, and the offsets of a mirrored message will generally differ between them.
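To make the point about partitions and offsets concrete, here is a minimal Python sketch. It does not use a Kafka client: `Topic` and `partition_for` are hypothetical stand-ins, and the CRC32 hash is an assumption chosen only for illustration (it is not the hash a real Kafka producer uses). The sketch writes the same keyed records to a two-partition source topic and a three-partition target topic that already holds one record.

```python
import zlib


def partition_for(key, num_partitions):
    # Stand-in partitioner (assumption: CRC32 is used here only for illustration).
    return zlib.crc32(key.encode("utf-8")) % num_partitions


class Topic:
    """A toy topic: one append-only list per partition."""

    def __init__(self, num_partitions):
        self.partitions = [[] for _ in range(num_partitions)]

    def append(self, key, value):
        p = partition_for(key, len(self.partitions))
        self.partitions[p].append((key, value))
        # Return where the record landed: (partition, offset).
        return p, len(self.partitions[p]) - 1


source = Topic(num_partitions=2)
target = Topic(num_partitions=3)

# The target already holds an older record, so its offsets need not line up
# with the source even for the same key.
target.append("user-0", "earlier event")

for i in range(1, 5):
    key, value = f"user-{i}", f"event-{i}"
    src_pos = source.append(key, value)
    dst_pos = target.append(key, value)  # what a mirror would write
    print(key, "source (partition, offset):", src_pos, "target:", dst_pos)
```

The records themselves are identical on both sides, but the (partition, offset) pair reported for a record on the source is not something you can rely on when looking it up on the target.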

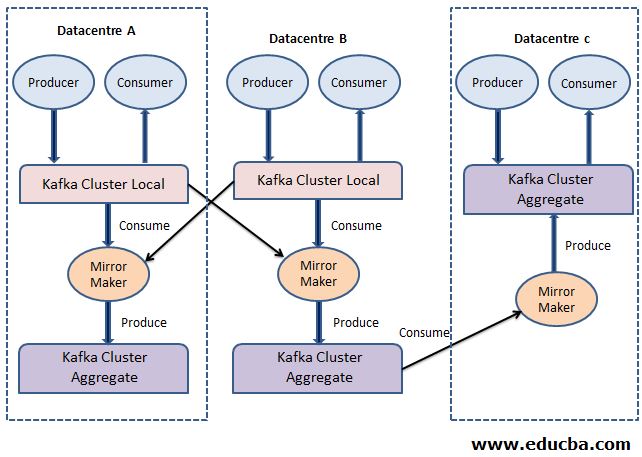

- The figure above shows an example of the architecture of Kafka MirrorMaker, which aggregates messages from two different clusters into an aggregate cluster and then copies that aggregated cluster to another data center.

- When operating multiple data centers, copying messages between them is almost always necessary so that online applications can access user activity from both sites. For instance, if a user changes the personal information in their account, the update needs to be visible regardless of which data center serves the search results.

- Kafka’s built-in replication works only within a single cluster; it cannot replicate data between different clusters. MirrorMaker fills this gap. The process is fairly simple: each MirrorMaker process has one producer and runs one thread per consumer.

- Each consumer reads events from the topics and partitions assigned to it on the source cluster and uses the shared producer to send those events to the target cluster.

- Every 60 seconds (by default), the consumers tell the producer to send all the events it holds to Kafka and wait until Kafka acknowledges them. The consumers then contact the source Kafka cluster to commit the offsets for those events. This guarantees no data loss, because messages are acknowledged by Kafka before their offsets are committed to the source. If the MirrorMaker process crashes, there will be no more than 60 seconds' worth of duplicates.

- In essence, MirrorMaker is a consumer and a producer joined together: the consumer connects to cluster A, subscribes to the topics you choose, and reads their data, and the producer writes those messages to cluster B. Every message received by a topic in cluster A becomes available in the topic of the same name in cluster B.

- Figure 1 shows an instance of architecture using MirrorMaker, which aggregates messages from two different clusters into an aggregate cluster and then copies that aggregated cluster to another data center.
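The consume, flush, and commit cycle described in the list above can be modeled with a small in-memory Python sketch. This is a toy simulation under stated assumptions, not the MirrorMaker implementation: `MirrorSketch` and its methods are hypothetical names, clusters are plain Python lists, and a "crash" simply discards in-memory state. It illustrates why committing offsets only after the target has acknowledged the events yields duplicates after a failure, but not data loss.

```python
class MirrorSketch:
    """Toy model of one mirroring process: several consumers, one shared producer."""

    def __init__(self, source_partitions, num_consumers):
        self.source = source_partitions                      # {partition: [events]}
        self.committed = {p: 0 for p in source_partitions}   # offsets stored on the source
        self.position = dict(self.committed)                 # in-memory read positions
        self.producer_buffer = []                            # shared producer, unsent events
        self.target = []                                     # events acknowledged by the target
        # Spread partitions over the consumers round-robin.
        parts = sorted(source_partitions)
        self.assignment = [parts[i::num_consumers] for i in range(num_consumers)]

    def poll(self, max_events=2):
        # Each consumer reads from its own partitions and hands events
        # to the single shared producer.
        for assigned in self.assignment:
            for p in assigned:
                start = self.position[p]
                batch = self.source[p][start:start + max_events]
                self.producer_buffer.extend(batch)
                self.position[p] = start + len(batch)

    def flush(self):
        # The producer sends everything it holds; the target acknowledges it.
        self.target.extend(self.producer_buffer)
        self.producer_buffer.clear()

    def commit(self):
        # Only after the flush are the offsets committed on the source.
        self.committed = dict(self.position)

    def restart(self):
        # A crash loses in-memory state; reading resumes from committed offsets.
        self.producer_buffer.clear()
        self.position = dict(self.committed)


source = {0: ["a0", "a1", "a2"], 1: ["b0", "b1"]}
mm = MirrorSketch(source, num_consumers=2)

mm.poll(); mm.flush(); mm.commit()   # a normal cycle
mm.poll(); mm.flush()                # "a2" reaches the target...
mm.restart()                         # ...but the process dies before the commit
mm.poll(); mm.flush(); mm.commit()   # "a2" is read and sent again

print(mm.target)
```

Every source event ends up in the target, and `a2` appears twice: the events sent since the last commit are replayed after the restart, which corresponds to the bounded window of duplicates described above.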

Benefits of Kafka MirrorMaker

Below are the benefits of Kafka MirrorMaker:

1. Global and Central Clusters

An organization sometimes has one or more data centers in different geographic regions, cities, or continents. Many applications can work by communicating only with the local cluster, but some need data from multiple data centers (otherwise, you would not be looking at approaches for cross-data-center replication). There are many situations where this is a requirement, but the classic example is a business that adjusts prices based on supply and demand. Such an organization can have a data center in each area where it has a presence, gather local supply and demand statistics, and adjust prices accordingly. Once this information is replicated to a central cluster, business analysts can report on sales across the whole company.

2. Redundancy (DR)

Even when applications run on a single Kafka cluster and do not need data from other sites, you may be concerned about the whole cluster becoming unavailable for some reason. In that case, you would want a second Kafka cluster containing all the data in the first one, so that you can redirect your applications to it in an emergency.

3. Cloud Migrations

Many companies run their business in both an on-premises data center and a cloud provider. Applications on cloud platforms often run in multiple regions for redundancy and may use more than one cloud provider. In these situations, there is often at least one Kafka cluster in each on-premises data center and each cloud region. Applications in each data center and region use those Kafka clusters to transfer data efficiently between the data centers.

For instance, if a new application is deployed in the cloud but needs data that is updated by applications running in the on-premises data center and stored in an on-premises database, you can use Kafka Connect to capture the database changes into the local Kafka cluster and then replicate those changes to the Kafka cluster where the new application runs. This helps control the impact of cross-data-center traffic and improves the governance and security of that traffic.

4. Support Data and Schema Replication

Kafka MirrorMaker supports data replication by streaming data in real time between Kafka clusters and data centers. It integrates with Confluent Schema Registry for multi-data-center data quality and governance. It supports connection replication by managing data integration across multiple data centers.

5. Ease of Topic Selection

It offers flexible topic selection: the topics to mirror can be chosen with whitelists, blacklists, and regular expressions.
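As a rough illustration of pattern-based topic selection, the sketch below filters a list of topic names with an include pattern and an optional exclude pattern. The function `select_topics` and the topic names are hypothetical, and the sketch uses Python's `re` module, whose regular-expression syntax is not identical to the Java syntax Kafka itself uses; treat it as a model of the idea rather than of MirrorMaker's exact matching rules.

```python
import re


def select_topics(all_topics, include, exclude=None):
    """Return the topics whose full name matches `include` and not `exclude`."""
    include_re = re.compile(include)
    exclude_re = re.compile(exclude) if exclude else None
    selected = []
    for topic in all_topics:
        if not include_re.fullmatch(topic):
            continue  # not on the whitelist
        if exclude_re and exclude_re.fullmatch(topic):
            continue  # explicitly blacklisted
        selected.append(topic)
    return selected


# Hypothetical topic names on the source cluster.
topics = ["orders", "orders.eu", "payments", "internal.metrics", "__consumer_offsets"]

# Whitelist only: every topic that starts with "orders".
print(select_topics(topics, include=r"orders.*"))

# Everything except internal topics.
print(select_topics(topics, include=r".*", exclude=r"internal\..*|__.*"))
```

Matching the full topic name (rather than a substring) keeps a pattern such as `orders.*` from accidentally selecting a topic like `archived-orders`.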

Conclusion

We have seen what Kafka MirrorMaker is and how its architecture works, along with the use cases and benefits that make it worth adopting. In short, it aggregates messages from two or more local clusters into an aggregate cluster and then copies that cluster to other data centers for redundancy, increasing throughput and fault tolerance.
