Updated March 23, 2023

Introduction to Kafka Tools

Kafka Tools is a collection of tools for managing a Kafka cluster. Most of them are command-line based, but UI-based tools are also available and can be downloaded and installed separately.

We can use Kafka tools for performing various operations like:

- List the available Kafka Clusters and their brokers, topics, and consumers.

- Print the messages from various topics to standard output. The UI-based tools generally offer better readability.

- Add and drop topics from the brokers.

- Add new messages to the partitions.

- View all the offsets of our consumers.

- Create partitions of our topics.

- List all Consumer Groups, describe the Consumer Groups, delete Consumer Group info and reset Consumer Group offsets.

If we want to use a UI-based tool, we can use Kafka Tool, which can be downloaded from the following webpage:

http://www.kafkatool.com/download.html

This application is free for personal use, but commercial use requires a purchased license. It is available for Mac, Windows, and Linux systems.

Top 3 Types of Kafka Tools

They are categorized into system tools, replication tools, and miscellaneous tools.

1. System Tools

The System Tools can be run using the following Syntax.

Syntax:

bin/kafka-run-class.sh package.class --options
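As a minimal sketch of how this syntax is filled in, the helper below assembles the argument list for one tool class. The helper name `build_run_class_command` is hypothetical, and the file and topic names are placeholders. The class `kafka.tools.MirrorMaker` and its flags come from older Kafka releases and may differ or be absent in your version, so treat them as assumptions to verify.

```python
import shlex


def build_run_class_command(class_name, options, script="bin/kafka-run-class.sh"):
    """Return the argument list for running a Kafka tool class with its options."""
    args = [script, class_name]
    for flag, value in options.items():
        args.append(f"--{flag}")
        if value is not None:  # flags without a value are passed bare
            args.append(str(value))
    return args


# Hypothetical file and topic names, used only for illustration.
mirror_maker = build_run_class_command(
    "kafka.tools.MirrorMaker",
    {
        "consumer.config": "consumer.properties",
        "producer.config": "producer.properties",
        "whitelist": "my-topic",
    },
)

# Print the command as it would be typed in a shell.
print(shlex.join(mirror_maker))
```

The list form can be handed to `subprocess.run` on a machine where Kafka is installed. Nothing is executed here.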

Some of the System Tools are as follows:

- Kafka Migration Tool: This tool is used for migrating Kafka Broker from one version to another.

- Consumer Offset Checker: This tool displays the Consumer Group, Topic, Partitions, Offset, logSize, and Owner for the specified set of topics and consumer group.

- Mirror Maker: This tool is used for mirroring one Kafka Cluster to another.
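The Offset and logSize fields reported by the Consumer Offset Checker are typically read together: their difference is the consumer lag, the number of messages written to a partition that the group has not yet consumed. The sketch below illustrates that arithmetic with made-up numbers. The `PartitionStatus` class is hypothetical and does not parse real tool output.

```python
from dataclasses import dataclass


@dataclass
class PartitionStatus:
    group: str
    topic: str
    partition: int
    offset: int    # last offset committed by the consumer group
    log_size: int  # offset of the next message the broker will write

    @property
    def lag(self) -> int:
        """Messages written to the partition but not yet consumed by the group."""
        return self.log_size - self.offset


# Illustrative numbers only, not real tool output.
rows = [
    PartitionStatus("group_name", "topic_name", 0, offset=120, log_size=150),
    PartitionStatus("group_name", "topic_name", 1, offset=300, log_size=300),
]

total_lag = sum(row.lag for row in rows)
for row in rows:
    print(row.partition, row.offset, row.log_size, row.lag)
print("total lag:", total_lag)
```

A lag that keeps growing on one partition usually means its consumer is slower than the producers writing to it.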

2. Replication Tools

These tools support Kafka’s replication design, which provides durability and availability.

Some of the Replication Tools are:

- Create Topic Tool: This tool is used for creating topics with the default number of partitions and replication-factor.

- List Topic Tool: This tool lists the information for a given list of topics. If no topic is specified on the command line, it first queries Zookeeper to fetch the list of topics and then prints the information about them. It shows fields such as Topic Name, Partitions, Leader, and Replicas.

- Add Partition Tool: This tool adds partitions to a topic, which is needed to handle growth in the topic’s data volume. Note that the initial number of partitions has to be specified when the topic is created. The tool also lets us manually assign replicas for the added partitions.
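One consequence of adding partitions is worth illustrating: when messages are assigned to partitions by hashing a key, changing the partition count changes where many keys land. The sketch below assumes a simple "hash modulo partition count" scheme and uses `zlib.crc32` as a stand-in hash. Kafka's own default partitioner uses a different hash function, so the exact numbers are not representative.

```python
import zlib


def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition by hashing it (crc32 is a stand-in hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


# Hypothetical keys; compare placement with 4 partitions and then with 6.
keys = [f"user-{i}" for i in range(1000)]
before = {key: partition_for(key, 4) for key in keys}
after = {key: partition_for(key, 6) for key in keys}

moved = [key for key in keys if before[key] != after[key]]
print(f"{len(moved)} of {len(keys)} keys map to a different partition")
```

Existing messages are not moved when partitions are added, so consumers that rely on per-key ordering should account for this remapping.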

3. Miscellaneous Tools

Now let’s discuss some miscellaneous tools:

a. Kafka-Topics Tool

This tool is used to create, list, alter and describe topics.

Example: Topic Creation: bin/kafka-topics.sh --zookeeper zk_host:port/chroot --create --topic topic_name --partitions 30 --replication-factor 3 --config x=y

b. Kafka-Console-Consumer Tool

This tool can be used to read data from Kafka topics and write it to standard output.

Example: bin/kafka-console-consumer --zookeeper zk01.example.com:8080 --topic topic_name

c. Kafka-console-producer Tool

This tool can be used to write data to a Kafka topic from standard input.

Example: bin/kafka-console-producer --broker-list kafka03.example.com:9091 --topic topic_name

d. Kafka-consumer-groups Tool

This tool can be used to list all consumer groups, describe a consumer group, delete consumer group info, or reset consumer group offsets. This tool is mainly used for describing consumer groups and debugging any consumer offset issues.

Example: Viewing Offsets on an unsecured cluster: bin/kafka-consumer-groups --new-consumer --bootstrap-server broker01.example.com:9092 --describe --group group_name

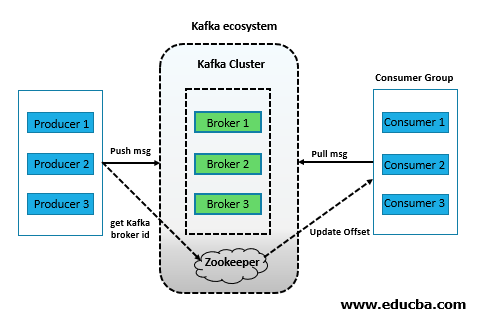

Kafka Architecture

Kafka architecture is shown below.

Various Components of Kafka Tools

The major components are as explained below:

1. Broker

Each node in a Kafka cluster is a broker that stores data. Typically, there are multiple brokers so that the load is balanced across them. A broker stores messages in topics, which producers write to and consumers read from. Topics are created to segregate one application’s data from another’s. Because brokers do not keep cluster state themselves, they need Zookeeper’s help to maintain it, and Zookeeper also handles leader election among the brokers. A single broker can handle terabytes of messages without a noticeable impact on performance.
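To make the idea of a broker storing messages in topics more concrete, here is a minimal in-memory sketch. It assumes that each topic partition behaves like an append-only list whose index serves as the message offset. The `SketchBroker` class and the topic names are hypothetical, and a real broker persists data to disk and replicates it.

```python
from collections import defaultdict


class SketchBroker:
    """In-memory stand-in for a broker: one append-only list per topic partition."""

    def __init__(self):
        self._logs = defaultdict(list)

    def append(self, topic, partition, message):
        """Write a message and return the offset it was stored at."""
        log = self._logs[(topic, partition)]
        log.append(message)
        return len(log) - 1

    def read(self, topic, partition, offset, max_messages=10):
        """Return up to max_messages starting at the given offset."""
        log = self._logs[(topic, partition)]
        return log[offset:offset + max_messages]


broker = SketchBroker()
broker.append("orders", 0, "created")
last = broker.append("orders", 0, "paid")
broker.append("billing", 0, "invoice-1")  # a separate topic keeps its own log

print(last)
print(broker.read("orders", 0, offset=0))
```

Reading does not remove messages, which is why several consumers can read the same topic independently.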

2. Producer

The producer is the unit that pushes messages to the brokers. Multiple producers can generate data at a very high rate, independently of one another. Depending on the producer’s acknowledgement setting (acks), a producer either sends messages without waiting for a broker acknowledgement or waits for one before treating a write as complete. Producers can discover brokers and start sending messages as soon as the brokers start. The producer is responsible for choosing which partition within the topic each message is assigned to. This can be done in a round-robin fashion to balance the load, or according to some semantic partition function (say, based on a key in the message).
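The two partitioning strategies described above can be sketched as follows. This is an illustration under stated assumptions rather than Kafka's actual partitioner: the `SketchPartitioner` class is hypothetical, and `zlib.crc32` stands in for whatever hash function the real client uses.

```python
import itertools
import zlib


class SketchPartitioner:
    """Choose a partition round-robin for unkeyed messages, by key hash otherwise."""

    def __init__(self, num_partitions):
        self.num_partitions = num_partitions
        self._round_robin = itertools.cycle(range(num_partitions))

    def partition(self, key=None):
        if key is None:
            # No key: spread messages evenly across partitions.
            return next(self._round_robin)
        # Keyed: the same key always lands on the same partition.
        return zlib.crc32(key.encode("utf-8")) % self.num_partitions


partitioner = SketchPartitioner(num_partitions=3)
unkeyed = [partitioner.partition() for _ in range(6)]
keyed = [partitioner.partition("order-42") for _ in range(3)]

print(unkeyed)  # [0, 1, 2, 0, 1, 2]
print(keyed)    # three identical partition numbers
```

Keyed partitioning trades even load for ordering: all messages with one key stay in one partition, so they are read in the order they were written.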

3. Zookeeper

Zookeeper is the unit that manages and coordinates the brokers. It notifies producers and consumers when a broker is added or fails. Each broker sends heartbeat requests to Zookeeper at a regular interval for as long as it is alive. Zookeeper also maintains information about the topics and consumer offsets.
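The heartbeat mechanism can be pictured as a coordinator that remembers when it last heard from each broker and treats a broker as failed once a timeout has passed. The sketch below assumes exactly that. The `SketchCoordinator` class, the timeout value, and the broker names are hypothetical, and real Zookeeper sessions involve more than this.

```python
class SketchCoordinator:
    """Tracks the last heartbeat per broker and reports which brokers are alive."""

    def __init__(self, session_timeout):
        self.session_timeout = session_timeout
        self._last_heartbeat = {}

    def heartbeat(self, broker_id, now):
        """Record that a broker checked in at time `now` (in seconds)."""
        self._last_heartbeat[broker_id] = now

    def live_brokers(self, now):
        """Brokers whose last heartbeat is within the session timeout."""
        return sorted(
            broker_id
            for broker_id, seen in self._last_heartbeat.items()
            if now - seen <= self.session_timeout
        )


coordinator = SketchCoordinator(session_timeout=6.0)
coordinator.heartbeat("broker-1", now=0.0)
coordinator.heartbeat("broker-2", now=0.0)
coordinator.heartbeat("broker-1", now=5.0)  # broker-2 stops sending heartbeats

alive = coordinator.live_brokers(now=10.0)
print(alive)  # ['broker-1']
```

Passing the current time in explicitly keeps the example deterministic. A real implementation would read a clock.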

4. Consumer

The consumer is the unit that reads messages from the topics. A consumer can subscribe to and read from more than one topic. Consumers can also work in parallel as a consumer group, in which case each partition is read by only one consumer in the group. Consumers do not work in synchronization with the producers. Each consumer keeps track of how many messages it has read by using the partition offset. If a consumer acknowledges a particular partition offset, it implies that it has already consumed all prior messages in that partition.
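Both ideas in this section, one reader per partition within a group and offset-based progress tracking, can be sketched briefly. The assignment below is a simple round-robin spread chosen for illustration. The function and class names are hypothetical, and Kafka's real assignment strategies are configurable and more involved.

```python
def assign_partitions(partitions, consumers):
    """Spread partitions over consumers so each partition has exactly one reader."""
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(sorted(partitions)):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


class OffsetTracker:
    """Remembers, per partition, the offset of the next message to read."""

    def __init__(self):
        self._next_offset = {}

    def commit(self, partition, last_read_offset):
        # Committing offset N implies messages 0..N were all consumed.
        self._next_offset[partition] = last_read_offset + 1

    def position(self, partition):
        return self._next_offset.get(partition, 0)


assignment = assign_partitions([0, 1, 2, 3], ["consumer-a", "consumer-b"])
tracker = OffsetTracker()
tracker.commit(partition=0, last_read_offset=41)

print(assignment)           # {'consumer-a': [0, 2], 'consumer-b': [1, 3]}
print(tracker.position(0))  # 42
```

After a restart, a consumer would resume from `position(partition)` instead of rereading the partition from the beginning.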

Conclusion

In this article, we have learned how to use various Kafka tools to manage a Kafka cluster effectively. We have also learned about the various components of the Kafka ecosystem and how they interact with one another.
