Updated September 13, 2023

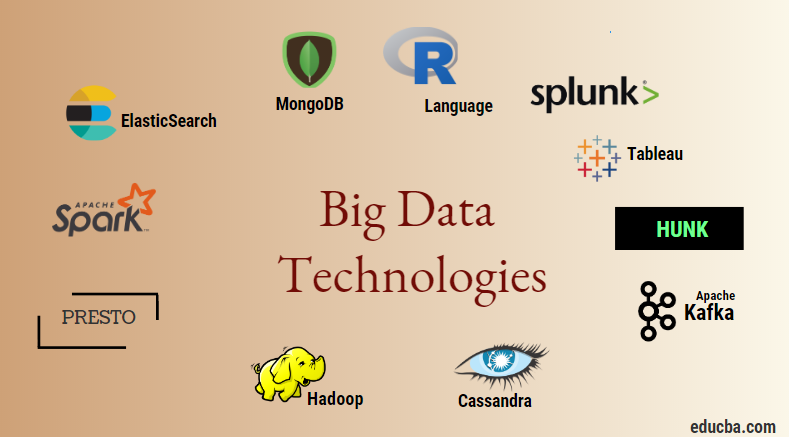

Introduction to Big Data Technologies

Big data and Hadoop are familiar buzzwords, but the need behind them is real. As the volume of data generated across every industry has grown sharply, it has become important to establish efficient techniques that meet the needs of the clients and large organizations responsible for generating that data. Previously, general-purpose programming languages and plain structured query language were enough to handle the data, but these systems and tools struggle at big data scale.

Big data technology encompasses the software utilities designed for analyzing, processing, and extracting information from data sets that are too large or too structurally complex for traditional systems to manage. It handles both real-time and batch data. Because machine learning has become a critical part of everyday life and of every industry, managing data with big data tools has become very important.

Types of Big Data Technologies

Before starting with the list of technologies, let us first see the broad classification of all these technologies.

They can mainly be classified into four domains.

- Data storage

- Analytics

- Data mining

- Visualization

Let us first cover the technologies that fall under data storage.

1. Hadoop: When it comes to big data, Hadoop is usually the first technology that comes to mind. It is based on the MapReduce architecture and is mainly used for batch processing. It was designed to store and process data in a distributed environment, using commodity hardware and a straightforward programming model. It can store and analyze data spread across many machines, with large storage capacity, high speed, and low cost. Hadoop is written in Java and is developed under the Apache Software Foundation as one of the core components of big data technology; version 1.0 was released in 2011.
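The MapReduce model mentioned above can be illustrated with a minimal single-machine sketch. It is plain Python, not Hadoop's Java API; the function names `map_phase`, `shuffle`, and `reduce_phase` are chosen here for illustration, and a real cluster would run the map and reduce steps in parallel on many nodes.

```python
from collections import defaultdict


def map_phase(line):
    """Mapper: emit a (word, 1) pair for every word in one input line."""
    return [(word.lower(), 1) for word in line.split()]


def shuffle(pairs):
    """Shuffle: group emitted values by key, as the framework does between phases."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reducer: combine all values for one key into a single count."""
    return key, sum(values)


def word_count(lines):
    """Run map, shuffle, and reduce in sequence on a list of text lines."""
    mapped = [pair for line in lines for pair in map_phase(line)]
    return dict(reduce_phase(key, values) for key, values in shuffle(mapped).items())


word_totals = word_count(["big data big clusters", "data on commodity hardware"])
```

Because each mapper only sees its own line and each reducer only sees one key, the same logic can be split across machines without the steps needing to share state.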

2. MongoDB: Another core component of big data technology in terms of storage is MongoDB. It is a NoSQL database, meaning it does not follow the relational model and the related properties of an RDBMS, which is queried with structured query language. Instead, it is a cross-platform, document-oriented database program that stores JSON-like documents with a flexible schema, a storage structure that helps it hold large amounts of varied data. This makes it a useful operational data store in many financial institutions, where it is used to replace traditional mainframes. MongoDB handles a wide variety of data types at high volumes and across distributed architectures.
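To make the document model concrete, here is a small sketch of a collection whose documents do not share the same fields. It is a toy in-memory model, not the MongoDB driver; the `find` helper and the sample `accounts` data are hypothetical.

```python
def find(collection, query):
    """Return documents whose fields equal every key/value pair in the query."""
    return [
        doc for doc in collection
        if all(doc.get(field) == value for field, value in query.items())
    ]


# Documents in one collection may carry different fields (flexible schema).
accounts = [
    {"_id": 1, "owner": "Ada", "type": "savings", "balance": 1200},
    {"_id": 2, "owner": "Lin", "type": "checking", "overdraft_limit": 500},
    {"_id": 3, "owner": "Ada", "type": "checking", "tags": ["joint"]},
]

ada_docs = find(accounts, {"owner": "Ada"})
ada_checking = find(accounts, {"owner": "Ada", "type": "checking"})
```

No table definition had to change to add `overdraft_limit` or `tags` to individual documents, which is the flexibility the paragraph above describes.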

3. Hunk: Hunk gives access to data in remote Hadoop clusters through virtual indexes, and it uses the Splunk Search Processing Language for data analysis. Hunk can report on and visualize huge amounts of data from Hadoop and NoSQL databases and sources. It was developed by the Splunk team in 2013 and was written in Java.

4. Cassandra: Cassandra is a top choice among popular NoSQL databases. It is a free, open-source, distributed wide-column store that can efficiently handle data on large commodity clusters, providing high availability with no single point of failure. Its main features include a distributed design, scalability, fault tolerance, MapReduce support, tunable consistency, its own query language, multi-datacenter replication, and eventual consistency.
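Tunable consistency is easiest to see as arithmetic. The sketch below applies the standard overlap rule for replicated stores: a read is guaranteed to see the latest write when the number of replicas written plus the number of replicas read exceeds the replication factor. The function names are illustrative and are not part of any Cassandra driver.

```python
def quorum(replication_factor):
    """Smallest number of replicas that forms a majority."""
    return replication_factor // 2 + 1


def reads_see_latest_write(replication_factor, write_replicas, read_replicas):
    """True when every read replica set must overlap every write replica set."""
    return write_replicas + read_replicas > replication_factor


rf = 3
strong = reads_see_latest_write(rf, quorum(rf), quorum(rf))  # 2 + 2 > 3
eventual = reads_see_latest_write(rf, 1, 1)                  # 1 + 1 is not > 3
```

Choosing one replica for both reads and writes trades that guarantee for lower latency, which is the eventual consistency end of the dial.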

Next, let us look at another field of big data technology: data mining.

5. Presto: Presto is a popular open-source, SQL-based distributed query engine that runs interactive queries against data sources of every scale, from gigabytes to petabytes. With its help, we can query data in Cassandra, Hive, proprietary data stores, and relational database systems. This Java-based query engine was created at Facebook and open-sourced in 2013. A few companies making good use of Presto are Netflix, Airbnb, Checkr, Repro, and Facebook.

6. Elasticsearch: This is a very important tool for search today. It is an essential component of the ELK stack, i.e., Elasticsearch, Logstash, and Kibana. Elasticsearch is a search engine based on the Lucene library; it resembles Solr and serves as a fully distributed, multi-tenant-capable full-text search engine. It stores schema-free JSON documents and exposes an HTTP web interface. It is written in Java and is developed by the company Elastic, which was founded in 2012. A few of the companies that use Elasticsearch are LinkedIn, StackOverflow, Netflix, Facebook, Google, and Accenture.
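Full-text search engines built on Lucene rely on an inverted index, which maps each term to the documents that contain it. The following is a minimal sketch of that idea in plain Python; it is not the Elasticsearch API, and real engines add analysis, scoring, and sharding on top. The sample documents are made up.

```python
from collections import defaultdict


def build_index(docs):
    """Map each lowercase term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in text.lower().split():
            index[term].add(doc_id)
    return index


def search(index, query):
    """Return ids of documents containing every term in the query (AND semantics)."""
    terms = query.lower().split()
    if not terms:
        return set()
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())
    return result


docs = {
    1: "distributed search engine",
    2: "distributed message broker",
    3: "full text search",
}
index = build_index(docs)
hits = search(index, "distributed search")
```

Looking up a term is a dictionary access rather than a scan of every document, which is why this structure suits search over large collections.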

Now let us look at the big data technologies that are part of data analytics:

7. Apache Kafka: Known for its publish-subscribe (pub-sub) model, Kafka is an asynchronous message broker used to ingest and process real-time streaming data. It lets you set a retention period for messages, and data flows through it using a producer-consumer mechanism. It is one of the most popular streaming platforms and is similar to an enterprise messaging system or a message queue. The Kafka ecosystem has seen many enhancements: one major addition is the Confluent Platform, which layers components such as Schema Registry and KSQL on top of Kafka, while the Kafka Streams library adds abstractions such as KTables. Kafka was originally developed at LinkedIn, was open-sourced in 2011, and is now an Apache Software Foundation project written in Scala and Java. Companies using this technology include Twitter, Spotify, Netflix, LinkedIn, and Yahoo.
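The producer-consumer flow and the retention period can be sketched with a toy append-only log. This is a conceptual model only: the `TopicLog` class and its methods are hypothetical and are not Kafka's client API, and timestamps are passed in explicitly to keep the example deterministic.

```python
class TopicLog:
    """Toy append-only log with offsets and time-based retention."""

    def __init__(self, retention_seconds):
        self.retention_seconds = retention_seconds
        self.records = []      # each record is (offset, timestamp, message)
        self.next_offset = 0

    def publish(self, message, now):
        """Producer side: append a message and assign it the next offset."""
        self.records.append((self.next_offset, now, message))
        self.next_offset += 1

    def expire(self, now):
        """Drop records older than the retention period."""
        self.records = [
            record for record in self.records
            if now - record[1] <= self.retention_seconds
        ]

    def poll(self, from_offset):
        """Consumer side: return (messages, next offset to read from)."""
        batch = [(offset, msg) for offset, _, msg in self.records if offset >= from_offset]
        messages = [msg for _, msg in batch]
        new_offset = batch[-1][0] + 1 if batch else from_offset
        return messages, new_offset


log = TopicLog(retention_seconds=60)
log.publish("click:home", now=0)
log.publish("click:cart", now=30)
log.publish("click:pay", now=90)

first_batch, next_offset = log.poll(0)   # consumer reads everything so far
log.expire(now=100)                      # records older than 60 seconds are dropped
late_batch, _ = log.poll(0)              # a new consumer only sees what was retained
```

Consumers track their own offset, so publishing and reading stay decoupled: the producer never waits for a consumer, and a late consumer simply starts from whatever the retention policy has kept.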

8. Splunk: Splunk captures, correlates, and indexes real-time streaming data in a searchable repository, from which it can generate reports, graphs, dashboards, alerts, and data visualizations. It is also used for security, compliance, and application management, as well as for web analytics, generating business insights, and business analysis. Splunk developed it using Python, XML, and Ajax.

9. Apache Spark: Now comes one of the most important technologies in big data, Apache Spark. It is among the most in-demand tools today and can be programmed in Java, Scala, or Python. Spark Streaming handles real-time streaming data using batching and windowing operations. Spark SQL provides DataFrames and Datasets on top of RDDs, and the rich set of transformations and actions they support is integral to Spark Core. Other components, such as MLlib, SparkR, and GraphX, are useful for analysis, machine learning, and data science. Its in-memory computing technique differentiates it from other tools and supports a wide range of applications. Spark originated at UC Berkeley's AMPLab, is now maintained by the Apache Software Foundation, and is written primarily in Scala.
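The windowing idea behind stream processing can be shown without a cluster. The sketch below groups timestamped events into fixed, non-overlapping (tumbling) windows and counts them per key. It is plain Python rather than the Spark API, and the function name and sample events are assumptions made for illustration.

```python
from collections import Counter


def tumbling_window_counts(events, window_seconds):
    """Count events per key inside fixed, non-overlapping time windows."""
    windows = {}
    for timestamp, key in events:
        # Every timestamp falls into exactly one window, identified by its start time.
        window_start = (timestamp // window_seconds) * window_seconds
        windows.setdefault(window_start, Counter())[key] += 1
    return windows


events = [(1, "error"), (4, "ok"), (9, "error"), (12, "error"), (18, "ok")]
window_counts = tumbling_window_counts(events, window_seconds=10)
```

A streaming engine applies the same grouping continuously as small batches arrive, emitting each window's result once the window closes.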

10. R language: R is a programming language and a free software environment used for statistical computing and graphics. It is one of the most important languages in data analytics and one of the most popular among data scientists, data miners, and data practitioners for developing statistical software.

11. Apache Oozie

It is a workflow scheduler system for managing Hadoop jobs. Workflows are defined as Directed Acyclic Graphs (DAGs) of actions, and scheduled workflow jobs run those actions in dependency order. It’s a scalable and organized solution for big data activities.
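A workflow DAG boils down to running each action only after the actions it depends on. As a minimal sketch, Python's standard `graphlib` module (Python 3.9+) can compute such an order; the workflow and its action names below are hypothetical, and this is not how Oozie itself is configured.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each action maps to the set of actions it depends on.
workflow = {
    "ingest": set(),
    "clean": {"ingest"},
    "validate": {"ingest"},
    "load": {"clean", "validate"},
}


def execution_order(dag):
    """Return the actions in an order that respects every dependency."""
    return list(TopologicalSorter(dag).static_order())


order = execution_order(workflow)
```

Here `clean` and `validate` have no dependency on each other, so a scheduler is free to run them in parallel. The graph must stay acyclic: `graphlib` raises `CycleError` if two actions depend on each other.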

12. Apache Airflow

This is a platform for scheduling and monitoring workflows. Smart scheduling helps in organizing and executing a project efficiently. Airflow can rerun a DAG instance when a failure occurs. Its rich user interface makes it easy to visualize pipelines running in production, monitor their progress, and troubleshoot issues when needed.

13. Apache Beam

It’s a unified model for defining and executing data processing pipelines, including ETL and continuous streaming. The Apache Beam framework provides an abstraction between your application logic and the big data ecosystem, since no single API otherwise spans frameworks such as Hadoop and Spark.

Let us now discuss the technologies related to Data Visualization.

14. Tableau: Tableau is one of the fastest-growing and most powerful data visualization tools used in business intelligence. It is built for rapid data analysis and creates visualizations in the form of worksheets and dashboards. It is developed by Tableau Software, a company founded in 2003, and its programming languages include Python, C++, Java, and C. Comparable business intelligence products include Qlik, Oracle Hyperion, and Cognos.

15. Plotly: Plotly is mainly used for building graphs and related components quickly and efficiently. It offers libraries and APIs for MATLAB, Python, R, Arduino, Julia, and others. It can be used in Jupyter Notebook and PyCharm to create and style interactive graphs. It was first developed in 2012 and is written in JavaScript. A few of the companies using Plotly are Paladins and bitbank.

16. Docker & Kubernetes

These are the emerging technologies that help applications run in Linux containers. Docker is an open-source collection of tools that help you “Build, Ship, and Run Any App, Anywhere”.

Kubernetes is an open-source container orchestration platform that allows large numbers of containers to work together in harmony. This ultimately reduces the operational burden.

17. TensorFlow

It’s an open-source machine learning library used to design, build, and train deep learning models. TensorFlow expresses computations as data flow graphs. A graph comprises nodes and edges: nodes represent mathematical operations, while edges represent the data that flows between them.
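The node-and-edge picture can be illustrated with a tiny graph evaluator. This sketch is plain Python and does not use TensorFlow; the `Node` class and `constant` helper are hypothetical names, and the graph computes `w * x + b` with made-up values.

```python
import operator


class Node:
    """One operation in the graph; its inputs are the incoming edges."""

    def __init__(self, op, *inputs):
        self.op = op
        self.inputs = inputs

    def evaluate(self):
        # Data flows along the edges: evaluate the inputs, then apply this operation.
        values = [node.evaluate() for node in self.inputs]
        return self.op(*values)


def constant(value):
    """A node with no inputs that simply produces a value."""
    return Node(lambda: value)


x = constant(3.0)
w = constant(2.0)
b = constant(1.0)

# Build the graph first, then run it.
y = Node(operator.add, Node(operator.mul, w, x), b)
result = y.evaluate()
```

Separating graph construction from execution is what lets a framework analyze the graph and place different nodes on different devices.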

TensorFlow is helpful for both research and production. Its developers built it to run on multiple CPUs, GPUs, and even mobile operating systems. It can be used from Python, C++, R, and Java.

18. Polybase

PolyBase works on top of SQL Server and PDW (Parallel Data Warehouse), letting SQL queries reach data stored outside the database, such as in Hadoop. PDW is built to process large volumes of relational data, and PolyBase integrates it with Hadoop.

19. Hive

Hive is a platform for querying and analyzing large datasets. It provides a SQL-like query language called HiveQL, whose queries are converted internally into MapReduce jobs and then executed.

With the rapid growth of data and organizations’ strong drive to analyze it, so many mature technologies have come onto the market that knowing them is of huge benefit. Nowadays, big data technology addresses many business needs and problems by increasing operational efficiency and predicting relevant behavior. A career in big data and its related technologies can open many doors for individuals as well as for businesses.

Hence, it’s high time to adopt big data technologies.

Conclusion

Big Data technologies have revolutionized data management and analytics, enabling organizations to harness vast information for valuable insights. They offer scalability, real-time processing, and diverse data storage and analysis tools. Embracing these technologies is essential for staying competitive in the data-driven era, driving innovation and informed decision-making.

FAQs

Q1. How can I get started with Big Data technologies?

Ans: You can start by learning programming languages such as Python and Java, studying the relevant frameworks and tools, taking online courses, and working on personal or open-source projects. Additionally, cloud providers often offer free tiers for experimentation.

Q2. What are the future trends in Big Data technologies?

Ans: In the future, we may see AI and machine learning being integrated with Big Data, edge computing being used for real-time processing, an increase in the use of graph databases, and more automation in data management and analysis.

Q3. What industries benefit the most from Big Data technologies?

Ans: Big Data technologies have applications in various industries, including finance, healthcare, retail, e-commerce, marketing, logistics, and manufacturing.

Q4. How does cloud computing relate to Big Data?

Ans: Companies can utilize cloud providers for their Big Data needs, as they offer flexible and affordable infrastructure for storing and processing large amounts of data. Some popular cloud services for this include Amazon S3 and EC2 from AWS, Azure Data Lake and HDInsight from Microsoft’s Azure, and Google Cloud’s Dataprep and BigQuery. Big Data solutions often make use of these services.
