Introduction to Kafka Architecture

This article provides an outline of Kafka architecture. Kafka was developed at LinkedIn and later donated to the Apache Software Foundation. It acts as a publish-subscribe messaging system, but unlike a conventional messaging system, it is designed for building real-time data pipelines and streaming applications that can handle huge volumes of data.

The primary goal of a messaging system is to deliver messages from a sender to a receiver. In Kafka, the sender is called the producer and the receiver is called the consumer. Note that the producer does not send messages directly to the consumer. Instead, the producer pushes messages to a Kafka server, or broker, on a Kafka topic. An interested consumer subscribes to the required topic and starts consuming messages from the Kafka server.

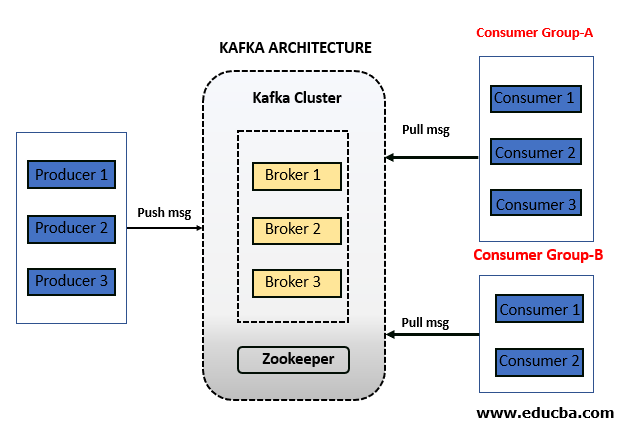

Kafka Architecture

Kafka has a straightforward but powerful architecture. A producer pushes messages to a Kafka broker on a given topic. The Kafka cluster contains one or more brokers, which store the messages that producers publish to a topic. Consumers, or consumer groups, then subscribe to the topic and receive messages from the broker. Because Kafka is a distributed system with multiple components, ZooKeeper helps manage and coordinate them.

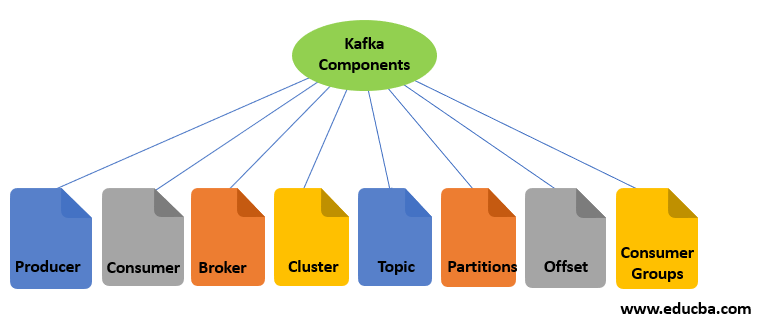

Kafka Components

Let us go through the functionality of each Kafka component one by one:

1. Kafka Producer

The producer acts as a sender. It is responsible for sending a message or data. It does not send messages directly to consumers. It pushes messages to Kafka Server or Broker. The messages or data are stored in the Kafka Server or Broker. Multiple producers can send a message to the same Kafka topic or different Kafka topics.

2. Kafka Consumer

The consumer acts as the receiver. It is responsible for receiving or consuming a message. But it does not consume or receive a message directly from Kafka Producer. Kafka Producer pushes messages to the Kafka server or broker. The consumer can request a message from the Kafka broker. If the Kafka Consumer has enough permissions, it gets a message from the Kafka Broker.

3. Kafka Broker

A Kafka broker is simply a server. Put another way, a broker is an intermediary that exchanges messages between a producer and a consumer. For a Kafka producer, it acts as a receiver; for a Kafka consumer, it acts as a sender. A Kafka cluster can contain one or more brokers.

4. Kafka Cluster

First, what is a cluster? Cluster is a common term in distributed computing. It is simply a group of computers working together for a common purpose. Kafka is also a distributed system, so it has a cluster made up of a group of servers called brokers.

There can be one or more brokers in the Kafka cluster.

- Single Broker Cluster: A Kafka cluster that has only one broker is called a Single Broker Cluster.

- Multi-Broker Cluster: A Kafka cluster that has two or more brokers is called a Multi-Broker Cluster.

5. Kafka Topic

It is one of the most important components of Kafka. Kafka Topic is a unique name given to a data stream or message stream.

Now let us understand the need for this. As you know, Kafka Producer sends a message stream to the broker, and Kafka Consumer receives a message stream from that broker.

Suppose a consumer wants to consume messages from the broker. The question is, from which message stream? The same broker can hold many different message streams coming from different Kafka producers. This is where the topic comes in: it is the unique identity of a message stream. The producer sends each message to the topic that names its stream, and multiple producers can send to the same topic. A consumer that wants to read the messages subscribes to the topic on the Kafka broker and then receives all the messages arriving on that topic.
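The role of a topic can be illustrated with a small in-memory model. This is only a sketch: `ToyBroker`, `publish`, and `read` are hypothetical names invented for this example and are not part of any Kafka client API. The topic names and messages are likewise made up.

```python
from collections import defaultdict


class ToyBroker:
    """In-memory stand-in for a broker; not the Kafka client API."""

    def __init__(self):
        # topic name -> ordered list of messages published to that topic
        self._topics = defaultdict(list)

    def publish(self, topic, message):
        """Called by a producer: append a message to the named topic."""
        self._topics[topic].append(message)

    def read(self, topic):
        """Called by a consumer: return a copy of all messages in a topic."""
        return list(self._topics[topic])


broker = ToyBroker()

# Two producers write to the same topic; a third writes to a different one.
broker.publish("orders", "order-1 from producer A")
broker.publish("orders", "order-2 from producer B")
broker.publish("payments", "payment-1 from producer C")

# A consumer subscribed to "orders" sees only that message stream.
orders = broker.read("orders")
print(orders)  # ['order-1 from producer A', 'order-2 from producer B']
```

The topic name is the only thing the producers and the consumer share; neither side needs to know about the other.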

6. Kafka Partitions

We now know that the producer sends data to the broker under a unique identity called a topic, and the broker stores the messages for that topic. With a huge volume of data, however, it becomes very challenging for a broker to store a whole topic on a single machine. Because Kafka is a distributed system, we can break the topic into partitions and distribute those partitions across different machines for storage. The number of partitions for a topic is chosen when the topic is created, based on the use case and the data volume.
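A minimal sketch of how messages might be spread over partitions is shown here. It assumes that each message carries a key and that the partition is picked by hashing that key; the function `choose_partition`, the CRC32 hash, and the sample keys are illustrative assumptions, and real Kafka clients use their own partitioning logic.

```python
import zlib


def choose_partition(key: str, num_partitions: int) -> int:
    """Map a message key to a partition index (CRC32 is only a stand-in hash)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


NUM_PARTITIONS = 3  # chosen when the hypothetical topic is created

# One list per partition; in a real cluster these could live on different machines.
partitions = [[] for _ in range(NUM_PARTITIONS)]

messages = [("user-1", "login"), ("user-2", "login"), ("user-1", "logout")]
for key, value in messages:
    partitions[choose_partition(key, NUM_PARTITIONS)].append((key, value))

for index, stored in enumerate(partitions):
    print(index, stored)
```

Because the hash is deterministic, messages with the same key always land in the same partition, while different keys can be stored on different machines.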

7. Offsets

In Kafka, each message in a partition of a topic is assigned a sequence number, called the offset. The number is assigned as soon as the message arrives in the partition. Offsets are always local to a partition: each partition of a topic numbers its own messages independently, and there is no offset that is global to the topic. A consumer's offset pointer initially points to the first message. As the consumer reads each message, the pointer moves to the next one in sequence.
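The per-partition numbering can be sketched with a toy model in which each partition is an append-only list and the list index plays the role of the offset. `ToyPartition` and its methods are hypothetical names for illustration only, not Kafka APIs.

```python
class ToyPartition:
    """One partition: an append-only log whose list indexes serve as offsets."""

    def __init__(self):
        self._log = []

    def append(self, message):
        """Store a message and return the offset assigned to it."""
        self._log.append(message)
        return len(self._log) - 1

    def read(self, offset):
        """Return the message stored at the given offset."""
        return self._log[offset]

    def end_offset(self):
        """Offset that the next appended message would receive."""
        return len(self._log)


# A hypothetical topic with two partitions.
partitions = [ToyPartition(), ToyPartition()]

first = partitions[0].append("a")   # offset 0 in partition 0
second = partitions[0].append("b")  # offset 1 in partition 0
other = partitions[1].append("c")   # offset 0 again: partition 1 counts separately

# A consumer's pointer into partition 0 starts at 0 and advances per message read.
position = 0
consumed = []
while position < partitions[0].end_offset():
    consumed.append(partitions[0].read(position))
    position += 1

print(first, second, other)  # 0 1 0
print(consumed, position)    # ['a', 'b'] 2
```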

So, the combination of topic name, partition number, and offset number uniquely identifies any message. In other words, you can locate any message using the following three components.

[Topic Name] -> [Partition Number] -> [Offset Number]

8. Kafka Consumer Group

As the name suggests, a Kafka consumer group is a group of consumers that combine to share the workload, much like dividing a large task among several people. Multiple consumer groups can subscribe to the same or different topics. Within a single consumer group, two consumers do not receive the same message: each partition is read by only one consumer in the group, and the group's offset pointer for that partition moves forward once a message is consumed.
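One way to picture how a group divides the work is by partition: each partition of a topic is read by exactly one consumer in the group. The sketch below uses a simple round-robin rule to hand out partitions. The function `assign_partitions` and the consumer names are hypothetical, and the assignment strategies used by real Kafka clients are more involved.

```python
def assign_partitions(partitions, consumers):
    """Round-robin assignment: each partition goes to exactly one consumer."""
    assignment = {consumer: [] for consumer in consumers}
    for index, partition in enumerate(partitions):
        owner = consumers[index % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# Four partitions shared by a group of two consumers.
assignment = assign_partitions([0, 1, 2, 3], ["consumer-a", "consumer-b"])
print(assignment)  # {'consumer-a': [0, 2], 'consumer-b': [1, 3]}
```

Since no partition appears under two consumers, no message is delivered twice within the group. A second group would get its own independent assignment over the same partitions.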

9. Zookeeper

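ZooKeeper has traditionally been a prerequisite for Kafka. As a distributed system, Kafka uses ZooKeeper for coordination and to track the status of the cluster's nodes. ZooKeeper also keeps track of metadata such as Kafka topics and partitions, and older Kafka clients stored consumer offsets there as well. Newer Kafka releases can instead run without ZooKeeper by using the built-in KRaft mode.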

Conclusion

As we have seen, Kafka is a compelling distributed streaming platform. It acts as a publish-subscribe messaging system, but it differs from conventional messaging systems in that it helps organizations build big data streaming pipelines and streaming applications. We have also seen that the producer does not send messages directly to the consumer. Instead, the broker sits between them and exchanges messages between producers and consumers.
