Updated February 24, 2023

Introduction to Spiking Neural Network

Spiking neural networks (SNNs) are often described as the third generation of neural networks. They aim to bridge the gap between biology and machine learning. SNNs operate using spikes, which are discrete events that take place at points in time, rather than continuous values. Temporal coding suggests that a single spiking neuron can replace many hidden units in a sigmoidal neural network. An SNN also takes temporal information into account. In an SNN, a neuron's current state is treated as its level of activation, and its output is binary (spike or no spike) rather than continuous.

An input pulse in an SNN causes this state value to rise for a period of time and then gradually decline. Encoding schemes have been designed to interpret the resulting output pulse sequences as a number, taking into account both the pulse frequency and the pulse interval. Modeling the time at which pulses are generated allows the network to be described accurately. SNNs are considered more powerful than second-generation neural networks, but because of training difficulties and hardware requirements they are used less often.
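The rise-and-decay behavior of the neuron state can be illustrated with a leaky integrate-and-fire (LIF) neuron. This is a minimal sketch, not a reference implementation: the LIF model itself, the function name `simulate_lif`, and the parameters `tau`, `threshold`, `v_reset`, and `dt` are assumptions chosen for illustration and do not come from the article.

```python
def simulate_lif(input_current, tau=10.0, threshold=1.0, v_reset=0.0, dt=1.0):
    """Simulate one leaky integrate-and-fire neuron (illustrative parameters).

    Returns the state after every time step and the binary spike train.
    """
    v = v_reset
    potentials, spikes = [], []
    for current in input_current:
        # Leak back toward the resting value, then integrate this step's input.
        v += dt * (-(v - v_reset) / tau + current)
        if v >= threshold:
            spikes.append(1)  # binary output: the neuron fires
            v = v_reset       # and its state is reset
        else:
            spikes.append(0)
        potentials.append(v)
    return potentials, spikes


# A single input pulse: the state jumps up, then decays gradually.
potentials, spikes = simulate_lif([0.5] + [0.0] * 9)

# A constant drive repeatedly pushes the state over the threshold.
_, driven_spikes = simulate_lif([0.3] * 20)
print(potentials[:3], sum(driven_spikes))
```

With the single pulse the state never reaches the threshold, so the neuron stays silent; with the constant drive it fires at regular intervals, which is the kind of pulse sequence an encoding scheme would then interpret.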

Software

A wide range of application software can simulate SNNs. This software can be classified according to its use:

- SNN simulation

- Data processing

- Hybrid neural simulation

The SNN architecture consists of spiking neurons and interconnecting synapses that are modeled by adjustable scalar weights. The first step in implementing an SNN is to encode the analog input data into spike trains using either temporal coding or population coding.
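As a rough illustration of turning analog values into spike trains, the sketch below uses latency coding, one simple form of temporal coding in which larger values spike earlier. The function name `latency_encode`, the `n_steps` parameter, and the assumption that inputs are already scaled to the range 0 to 1 are all hypothetical choices made for this example.

```python
def latency_encode(values, n_steps=10):
    """Encode values in [0, 1] as spike trains: larger values spike earlier."""
    trains = []
    for x in values:
        x = min(max(x, 0.0), 1.0)  # clamp to the assumed input range
        train = [0] * n_steps
        # x = 1.0 fires at step 0; x = 0.0 fires at the last step.
        spike_step = round((1.0 - x) * (n_steps - 1))
        train[spike_step] = 1
        trains.append(train)
    return trains


trains = latency_encode([1.0, 0.5, 0.0], n_steps=11)
for train in trains:
    print(train)
```

Each value produces exactly one spike, and the information is carried entirely by when that spike occurs.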

Spike trains in a network of spiking neurons are propagated through synaptic connections. A synapse can be either excitatory, which increases the neuron's membrane potential upon receiving input, or inhibitory, which decreases the neuron's membrane potential.
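A simple way to picture the two synapse types is to treat the sign of the weight as the synapse type: a positive weight raises the membrane potential when a spike arrives, and a negative weight lowers it. The helper `update_potential` below is a hypothetical name, and the sign convention is an assumption used only for this sketch.

```python
def update_potential(v, input_spikes, weights):
    """Add the weighted input spikes to the membrane potential v.

    Positive weights act as excitatory synapses and negative weights
    as inhibitory ones (an illustrative convention).
    """
    return v + sum(w * s for w, s in zip(weights, input_spikes))


weights = [0.5, 0.9, -0.25]   # two excitatory synapses, one inhibitory
v_after = update_potential(0.0, [1, 0, 1], weights)
print(v_after)
```

Only the first and third inputs spike here, so the potential rises by 0.5 and falls by 0.25.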

Architecture of SNN

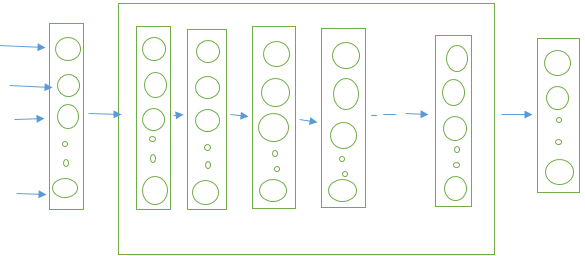

Below is the architecture:

- The top of the figure shows the architecture of a spiking neural network. There are three kinds of layers in the SNN: input, hidden, and output.

- The input layer learns to perform preprocessing on the input. The information is then sent to a series of hidden layers, the number of which can vary. As the data propagates through the hidden layers, more complex features are extracted and learned. The output layer performs classification and determines the label of the input stimulus, usually in software.
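The flow from input spikes through a hidden layer to an output label can be sketched as follows. This is a toy example under stated assumptions: the functions `lif_layer` and `classify`, the hand-picked weights, the `decay` and `threshold` values, and the rule of choosing the output neuron with the most spikes are all illustrative and not taken from the article.

```python
def lif_layer(spike_trains, weights, threshold=1.0, decay=0.9):
    """Run one layer of leaky integrate-and-fire neurons over time.

    spike_trains: one input spike vector per time step.
    weights[j][i]: weight from input i to neuron j.
    Returns one output spike vector per time step.
    """
    potentials = [0.0] * len(weights)
    outputs = []
    for spikes_in in spike_trains:
        spikes_out = []
        for j, row in enumerate(weights):
            drive = sum(w * s for w, s in zip(row, spikes_in))
            potentials[j] = decay * potentials[j] + drive
            if potentials[j] >= threshold:
                spikes_out.append(1)
                potentials[j] = 0.0  # reset after firing
            else:
                spikes_out.append(0)
        outputs.append(spikes_out)
    return outputs


def classify(input_trains, hidden_weights, output_weights):
    """Propagate spikes through hidden and output layers; label = most active output."""
    hidden = lif_layer(input_trains, hidden_weights)
    output = lif_layer(hidden, output_weights)
    counts = [sum(step[k] for step in output) for k in range(len(output_weights))]
    return counts.index(max(counts)), counts


# Input 0 spikes on every step, input 1 stays silent (6 time steps).
input_trains = [[1, 0]] * 6
hidden_weights = [[0.6, 0.0], [0.0, 0.6]]
output_weights = [[1.0, 0.0], [0.0, 1.0]]
label, counts = classify(input_trains, hidden_weights, output_weights)
print(label, counts)
```

In a trained network the weights would be learned rather than set by hand, and more hidden layers could be chained by calling `lif_layer` repeatedly.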

Learning in Spiking Neural Networks

In any network, learning is accomplished by adjusting the scalar-valued synaptic weights. Spiking enables a type of bio-plausible learning rule that cannot be directly replicated in non-spiking networks. Neuroscientists have identified many variants of this learning rule, which fall under the term spike-timing-dependent plasticity (STDP).

The following are the learning mechanisms in spiking neural networks, both supervised and unsupervised.

Unsupervised Learning via STDP

Unsupervised learning in SNNs often involves STDP as part of the learning algorithm. The most common form of biological STDP has a very intuitive interpretation: if a presynaptic neuron fires shortly before the postsynaptic neuron, the weight connecting them is strengthened, and if it fires shortly after, the weight is weakened. The strengthening is called long-term potentiation (LTP), and the weakening is called long-term depression (LTD). The term long-term is used to distinguish these changes from the very transient effects, on a scale of a few milliseconds, that are observed in experiments. Using this approach, more complex networks with multiple output neurons have been developed.
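A common textbook form of STDP is the pair-based rule with exponential time windows: a synapse is strengthened when the presynaptic spike arrives shortly before the postsynaptic spike, and weakened when it arrives shortly after, with the size of the change shrinking as the two spikes move further apart. The sketch below assumes that form; the function name `stdp_delta` and the constants `a_plus`, `a_minus`, and `tau` are illustrative values, not figures from the article.

```python
import math


def stdp_delta(t_pre, t_post, a_plus=0.01, a_minus=0.012, tau=20.0):
    """Weight change for one pre/post spike pair (times in milliseconds)."""
    dt = t_post - t_pre
    if dt > 0:
        # Pre fired before post: potentiation (weight increases).
        return a_plus * math.exp(-dt / tau)
    if dt < 0:
        # Pre fired after post: depression (weight decreases).
        return -a_minus * math.exp(dt / tau)
    return 0.0


weight = 0.5
weight += stdp_delta(t_pre=10.0, t_post=12.0)   # strengthened
weight += stdp_delta(t_pre=30.0, t_post=25.0)   # weakened
print(weight)
```

No labels are involved: the update depends only on the relative timing of spikes at the two ends of the synapse, which is why the rule suits unsupervised learning.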

Probabilistic Characterization of Unsupervised STDP

Researchers have considered the possible role of probabilistic computation as a primary information-processing step in the brain in terms of STDP. When a form of STDP is used with Poisson spiking input neurons together with an appropriate stochastic winner-take-all (WTA) circuit, it can approximate a stochastic online expectation-maximization algorithm that learns the parameters of a multinomial mixture distribution. This model was intended to have some biological plausibility.
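The stochastic WTA step can be pictured as a competition in which exactly one neuron fires, chosen at random with a probability that grows with its membrane potential. The sketch below assumes a softmax over the potentials for that probability; this choice, along with the function names `wta_firing_probabilities` and `sample_winner`, is an assumption made for illustration, and the code does not implement the expectation-maximization learning itself.

```python
import math
import random


def wta_firing_probabilities(potentials):
    """Softmax over membrane potentials: P(neuron k fires) is proportional to exp(u_k)."""
    peak = max(potentials)  # subtract the maximum for numerical stability
    exps = [math.exp(u - peak) for u in potentials]
    total = sum(exps)
    return [e / total for e in exps]


def sample_winner(potentials, rng):
    """Pick the single neuron that fires, at random, according to the softmax."""
    probs = wta_firing_probabilities(potentials)
    return rng.choices(range(len(potentials)), weights=probs, k=1)[0]


rng = random.Random(0)  # seeded so repeated runs give the same draw
probs = wta_firing_probabilities([1.0, 2.0, 0.5])
winner = sample_winner([1.0, 2.0, 0.5], rng)
print(probs, winner)
```

In a full model, the winning neuron would then have its input weights updated by an STDP-style rule.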

Supervised Learning

- Supervised learning always uses a label of some kind. It typically adjusts weights via gradient descent on a cost function that compares observed and desired network outputs. In SNNs, supervised learning tries to minimize the error between the desired and the actual output spike trains.

- SpikeProp was the first algorithm to train SNNs by backpropagating errors. Its cost function took spike timing into account, and SpikeProp was able to classify non-linearly separable data for a temporally encoded XOR problem using a three-layer architecture.

- Later, more advanced versions of SpikeProp, such as Multi-SpikeProp, were applicable to multiple-spike coding. More recent approaches to supervised training of SNNs include ReSuMe (remote supervised method), Chronotron, and SPAN (spike pattern association neuron).
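A cost function based on spike timing, of the kind used by SpikeProp, can be written as a sum of squared differences between actual and desired firing times of the output neurons. The notation below is an assumption introduced here for illustration: $J$ is the set of output neurons, $t_j^{a}$ and $t_j^{d}$ are the actual and desired spike times of output neuron $j$, $w_{ij}$ is a synaptic weight, and $\eta$ is a learning rate.

```latex
E = \frac{1}{2} \sum_{j \in J} \left( t_j^{a} - t_j^{d} \right)^{2},
\qquad
\Delta w_{ij} = -\eta \, \frac{\partial E}{\partial w_{ij}}
```

The second expression is the generic gradient descent step: each weight moves in the direction that reduces the timing error.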

Applications of SNN

In principle, SNNs can be applied to the same problems as traditional ANNs.

- SNNs can model the central nervous system of biological organisms, such as an insect seeking food without prior knowledge of the environment.

- SNNs have proved useful in neuroscience, but not yet in engineering.

- It is easy to build an SNN model and observe its dynamics.

Some of the applications of SNNs are listed below:

- Visual Pattern Recognition

- Auditory Pattern Recognition

- Audio-Visual Pattern Recognition

- Taste Recognition

- Ecological Modeling

- Sign Language Recognition

- Object Movement Recognition

Conclusion

In this article, we have looked at spiking neural networks: what a spiking neural network is, the software and architecture of SNNs, learning in SNNs, and applications of SNNs.
