Updated April 7, 2023

Introduction to PyTorch gather

In deep learning, we often need to extract values from specific positions of a tensor, for example one column from each row of a matrix. PyTorch's gather() function does exactly this: it creates a new tensor by picking values from the input tensor along a specified dimension, using an index tensor to say which element to take in each position. A typical use case is multi-class classification. When a network has computed output probabilities for every class, gather() can extract one value from each row, namely the probability that belongs to the target class.
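As a minimal sketch of the classification use case, assume a batch of already-computed class probabilities and one target class per sample (the numbers below are made up for illustration). gather() along dim 1 picks the target-class probability from each row:

```python
import torch

# Hypothetical output probabilities: 3 samples, 4 classes
probs = torch.tensor([[0.1, 0.6, 0.2, 0.1],
                      [0.3, 0.3, 0.3, 0.1],
                      [0.05, 0.05, 0.1, 0.8]])
# Hypothetical target class of each sample
targets = torch.tensor([1, 0, 3])

# The index must have as many dimensions as probs, so reshape (3,) -> (3, 1)
picked = probs.gather(1, targets.unsqueeze(1))  # shape (3, 1)
print(picked.squeeze(1))  # tensor([0.6000, 0.3000, 0.8000])
```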

What is PyTorch gather?

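gather() collects values along an axis specified by dim. The input and index tensors must have the same number of dimensions, and for every dimension d other than dim, index.size(d) must not be larger than input.size(d). The output has the same shape as index. The gather() function takes the following parameters.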

- input: The source tensor.

- dim: The dimension (axis) along which to index.

- index: An integer (int64) tensor holding the indices of the elements to gather.
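A small sketch of these shape rules, using an arbitrary 3-by-4 input built with torch.arange: the index has the same number of dimensions as the input, and the result takes the shape of the index, not of the input.

```python
import torch

inp = torch.arange(12).reshape(3, 4)  # [[0, 1, 2, 3], [4, 5, 6, 7], [8, 9, 10, 11]]
# index has shape (2, 3): same number of dimensions as inp,
# index.size(0) <= inp.size(0), and every value is a valid column (< 4)
index = torch.tensor([[3, 0, 3], [1, 1, 2]])
out = torch.gather(inp, 1, index)
print(out.shape)     # torch.Size([2, 3])
print(out.tolist())  # [[3, 0, 3], [5, 5, 6]]
```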

Let's go through the semantics of these arguments. The first argument, input, is the source tensor we want to select elements from. The second, dim, is the dimension (or axis, in TensorFlow/NumPy terms) along which we gather.

Lastly, index holds the positions, along dim, of the elements to take from input. For a 3-D tensor, each output element is computed as follows:

out[a][b][c] = input[index[a][b][c]][b][c] # if dimension == 0

out[a][b][c] = input[a][index[a][b][c]][c] # if dimension == 1

out[a][b][c] = input[a][b][index[a][b][c]] # if dimension == 2
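To make the rules above concrete, here is a minimal sketch that spells out the 2-D versions (dim 0 and dim 1) as plain Python loops. The helper name gather_2d is hypothetical and only meant for illustration; the block checks it against torch.gather.

```python
import torch

def gather_2d(inp, dim, index):
    # Hypothetical reference helper: a loop version of the rules above for 2-D tensors
    out = torch.empty(index.shape, dtype=inp.dtype)
    rows, cols = index.shape
    for a in range(rows):
        for b in range(cols):
            i = int(index[a, b])
            if dim == 0:
                out[a, b] = inp[i, b]  # out[a][b] = input[index[a][b]][b]
            else:
                out[a, b] = inp[a, i]  # out[a][b] = input[a][index[a][b]]
    return out

inp = torch.tensor([[10, 20, 30], [40, 50, 60]])
index = torch.tensor([[1, 0, 1], [0, 0, 1]])
for dim in (0, 1):
    assert torch.equal(gather_2d(inp, dim, index), torch.gather(inp, dim, index))

print(gather_2d(inp, 0, index).tolist())  # [[40, 20, 60], [10, 20, 60]]
print(gather_2d(inp, 1, index).tolist())  # [[20, 10, 20], [40, 40, 50]]
```

The loop is far slower than torch.gather; its only purpose is to show which element each output position reads.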

Let's walk through an example.

Suppose the input tensor is [[1, 2], [3, 4]] and dim is 1, that is, we gather along the second dimension. The index tensor is [[0, 0], [1, 0]].

Because we gather along dimension 1, the first (row) coordinate of each output element is not looked up; it is simply the row position in the index tensor. In other words, the values stored in the index tensor are column indices, not row indices, and the row is given by the row of the index tensor itself. For this example, the first row of the output selects from the first row of the input, using the first row of the index, [0, 0], as column indices. Both are 0, so we take the first element of the input's first row twice, giving [1, 1]. Likewise, the second row of the output comes from indexing the second row of the input, [3, 4], with the second row of the index, [1, 0], giving [4, 3]. The result is [[1, 1], [4, 3]].
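The walkthrough can be reproduced directly; this sketch uses exactly the input and index tensors described above:

```python
import torch

inp = torch.tensor([[1, 2], [3, 4]])
index = torch.tensor([[0, 0], [1, 0]])
# dim=1: the values in index are column positions within each row
out = torch.gather(inp, 1, index)
print(out.tolist())  # [[1, 1], [4, 3]]
```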

Using PyTorch gather

Now let's see how to use PyTorch gather.

As discussed above, gather() extracts values from the input tensor along a specified dimension. In deep learning code we frequently need to pick particular elements out of a tensor, such as per-sample scores or per-position values, and reshape or reorganize tensors to fit the next step of a model. PyTorch provides functions like gather() to reduce this work and keep the code simple: once we know the indices we want, gather() fetches the corresponding values along the chosen dimension without explicit loops.
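As an illustrative sketch (the scores and item ids below are hypothetical), indices computed from one tensor can be used with gather() to fetch matching values from another tensor of the same shape, here the ids of the two highest-scoring items in each row:

```python
import torch

scores = torch.tensor([[0.2, 0.9, 0.4, 0.7],
                       [0.8, 0.1, 0.3, 0.6]])
# Hypothetical ids associated with each score
item_ids = torch.tensor([[10, 11, 12, 13],
                         [20, 21, 22, 23]])

# Positions of the two highest scores in each row, shape (2, 2)
top_idx = scores.topk(2, dim=1).indices
# Use those positions to fetch the matching ids, with no Python loop
top_ids = item_ids.gather(1, top_idx)
print(top_ids.tolist())  # [[11, 13], [20, 23]]
```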

PyTorch gather Function

Now let's look at the syntax of the gather() function.

Syntax

torch.gather(input, dim, index, *, sparse_grad=False, out=None)

Explanation

The gather() function takes the source tensor (input), the dimension to gather along (dim), and the index tensor (index). It also accepts two optional keyword-only arguments:

sparse_grad (bool, optional): If True, the gradient with respect to input will be a sparse tensor. Defaults to False.

out (Tensor, optional): The output tensor in which to store the result.
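A short sketch of the keyword arguments in action, reusing the small tensors from the walkthrough: out= writes into a preallocated tensor of matching dtype, and with the default sparse_grad=False the gradient with respect to input is an ordinary dense tensor, where an element gathered twice receives a gradient of 2.

```python
import torch

idx = torch.tensor([[0, 0], [1, 0]])

# out=: write the result into a preallocated tensor of matching dtype
result = torch.empty(2, 2, dtype=torch.long)
torch.gather(torch.tensor([[1, 2], [3, 4]]), 1, idx, out=result)
print(result.tolist())  # [[1, 1], [4, 3]]

# Default sparse_grad=False: dense gradient flowing back to the gathered positions
src = torch.tensor([[1., 2.], [3., 4.]], requires_grad=True)
torch.gather(src, 1, idx).sum().backward()
print(src.grad.tolist())  # [[2.0, 0.0], [1.0, 1.0]]
```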

PyTorch gather Examples

Now let's look at some examples of the gather() function.

import torch

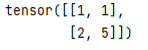

ten = torch.tensor([[2, 1], [2, 5]])

# dim=1: each row of the index selects columns from the same row of ten
a = torch.gather(ten, 1, torch.tensor([[1, 1], [0, 1]]))

print(a)

Explanation

In the above example, we first import torch and create a 2-by-2 tensor. Next, we call gather() with dim=1 and the index tensor [[1, 1], [0, 1]]. Row 0 of the index selects column 1 of ten twice, giving [1, 1]; row 1 selects columns 0 and 1, giving [2, 5]. The program therefore prints tensor([[1, 1], [2, 5]]).

Now let's use the gather() function on a different tensor.

import torch

ten = torch.tensor([[5, 7], [1, 3]])

# Same index and dim as before; only the tensor values change
a = torch.gather(ten, 1, torch.tensor([[1, 1], [0, 1]]))

print(a)

Explanation

In this example, we follow the same process and only change the values of the tensor; the import and the gather() call stay the same. Row 0 of the index selects column 1 twice, giving [7, 7], and row 1 selects columns 0 and 1, giving [1, 3], so the program prints tensor([[7, 7], [1, 3]]).
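A quick sketch on the same tensor and index showing two equivalent call forms and how the result changes when gathering along the other dimension: ten.gather(dim, index) is the Tensor-method form, and a negative dim counts from the last dimension.

```python
import torch

ten = torch.tensor([[5, 7], [1, 3]])
idx = torch.tensor([[1, 1], [0, 1]])

print(ten.gather(1, idx).tolist())          # [[7, 7], [1, 3]]  method form
print(torch.gather(ten, -1, idx).tolist())  # [[7, 7], [1, 3]]  dim=-1 is dim 1 here
print(torch.gather(ten, 0, idx).tolist())   # [[1, 3], [5, 3]]  same index, along rows
```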

In this way, gather() works on 2-D and 3-D tensors alike whenever we need to extract values at specified indices along a dimension. Without gather(), fetching such values would typically require explicit loops or more involved indexing code, so gather() is the simpler and faster choice.
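As a 3-D sketch, assume a padded batch of sequence features with shape (batch, time, features) and a hypothetical tensor of true sequence lengths. gather() can then pick the last valid time step of each sequence; the index is expanded so it has the same number of dimensions as the input.

```python
import torch

# Hypothetical padded batch: 2 sequences, up to 4 time steps, 3 features each
hidden = torch.arange(24).reshape(2, 4, 3)
# Hypothetical true lengths of the two sequences
lengths = torch.tensor([2, 4])

# Index of each sequence's last valid step, expanded to shape (2, 1, 3)
idx = (lengths - 1).view(-1, 1, 1).expand(-1, 1, hidden.size(2))
last = hidden.gather(1, idx).squeeze(1)  # shape (2, 3)
print(last.tolist())  # [[3, 4, 5], [21, 22, 23]]
```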

Conclusion

We hope this article helped you learn more about PyTorch gather. We covered the basic idea behind gather(), how it indexes along a dimension, and examples of using it, as well as how and when to use it.

Recommended Articles

We hope that this EDUCBA information on “PyTorch gather” was beneficial to you. You can view EDUCBA’s recommended articles for more information.