Model Risk Management in Enterprise AI Systems: An Engineering Approach

Artificial intelligence adoption across industries is accelerating rapidly. AI systems are increasingly integrated into core enterprise workflows, handling tasks ranging from financial forecasting and fraud detection to predictive maintenance and customer personalization. Gartner predicts that a majority of enterprises will rely on AI-driven decision-making within mission-critical business processes over the next few years. Yet models that excel in controlled settings often underperform in production because data patterns change, user behavior evolves, and governance gaps widen. As highlighted by engineering teams at Radixweb, responsible AI adoption begins with architectural discipline. Model risk management in enterprise AI must therefore be integrated into system architecture rather than treated as a compliance afterthought.

What is Model Risk in Enterprise AI?

Model risk in enterprise AI systems refers to the potential for adverse outcomes resulting from errors, misuse, or failures in AI models.

It includes:

- Incorrect predictions

- Data drift

- Concept drift

- Bias amplification

- Regulatory exposure

- Security vulnerabilities

According to McKinsey, more than half of AI projects fail to deliver long-term value after deployment. This usually happens because of operational and governance gaps, not because of problems with the model design.

It is important to understand the difference between model development risk and production system risk.

Model development risk relates to the quality of training, the choice of algorithms, and the suitability of the dataset. Production system risk arises from real-world conditions after the model is deployed. This can include system integration failures, inconsistent data, scaling issues, and gaps in monitoring.

As organizations recognize the growing importance of AI across industries, they integrate AI models into mission-critical systems, which makes reliability, transparency, and governance essential. Yet many enterprises focus mainly on improving model accuracy during development and spend far less effort on making the system reliable in production.

That imbalance creates systemic vulnerability.

Why Traditional Risk Frameworks Fail for AI Systems

Traditional software risk management assumes that systems behave deterministically: given the same input, the system produces the same output every time.

AI systems work differently.

Their results are based on probabilities. They depend on data that can change over time. They may also retrain automatically and operate in complex and unpredictable environments.

Key challenges include:

- Non-deterministic outputs

- Continuous learning dynamics

- Opaque decision-making processes

- High data dependency

Because of these characteristics, conventional risk checklists are not enough.

Engineering discipline must adapt.

AI risk management requires system-level thinking that extends beyond testing for functional correctness. It demands continuous validation across data pipelines, model logic, infrastructure layers, and governance controls.

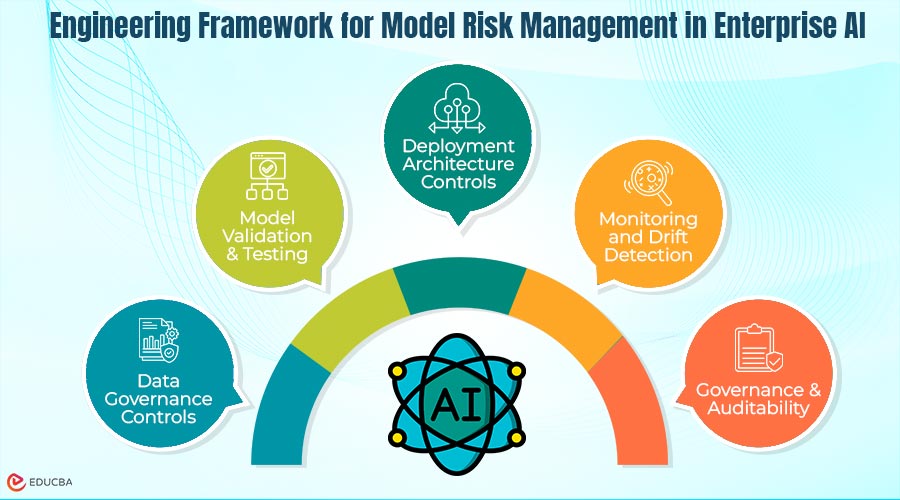

Engineering Framework for Model Risk Management in Enterprise AI

Effective model risk management requires a structured engineering framework. It cannot rely solely on governance documents or compliance audits; it must be operationalized across multiple layers of the AI system.

Layer 1: Data Governance Controls

Data is the foundation of any AI model. Weak data governance introduces risk before modeling even begins.

Core controls include:

- Data validation pipelines

- Training data documentation

- Bias detection mechanisms

- Version control for datasets

The NIST AI Risk Management Framework emphasizes traceability and documentation as foundational practices. Enterprises must track where data originates, how it transforms, and how it evolves.

Without careful data management, downstream validation and monitoring become unreliable.
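As a minimal sketch of two of the controls listed above (a data validation step and dataset versioning), the Python below checks each record against a schema and then fingerprints the accepted rows so that a training run can be tied to an exact dataset state. The schema, column names, and value ranges are hypothetical and chosen only for illustration.

```python
import hashlib
import json
import math

# Hypothetical schema: column -> (expected type, minimum, maximum).
SCHEMA = {
    "age": (int, 18, 120),
    "income": (float, 0.0, 1e7),
}

def validate_row(row, schema=SCHEMA):
    """Return a list of human-readable problems found in one record."""
    problems = []
    for column, (expected_type, low, high) in schema.items():
        if column not in row or row[column] is None:
            problems.append(f"{column}: missing")
            continue
        value = row[column]
        if not isinstance(value, expected_type):
            problems.append(f"{column}: expected {expected_type.__name__}")
            continue
        if isinstance(value, float) and math.isnan(value):
            problems.append(f"{column}: NaN")
            continue
        if not low <= value <= high:
            problems.append(f"{column}: {value} outside [{low}, {high}]")
    return problems

def dataset_fingerprint(rows):
    """Hash a canonical JSON form of the rows so a dataset version can be pinned."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

rows = [
    {"age": 34, "income": 52000.0},
    {"age": 17, "income": 18000.0},  # below the assumed minimum age
    {"age": 45, "income": None},     # missing income
]
report = {i: validate_row(r) for i, r in enumerate(rows)}
clean_rows = [r for i, r in enumerate(rows) if not report[i]]

print(report)
print(dataset_fingerprint(clean_rows))
```

Storing the fingerprint next to the trained model artifact gives a simple traceability link between a model and its data. The hash depends on row order, so a real pipeline would likely sort or partition the data consistently before hashing.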

Layer 2: Model Validation and Testing

Accuracy metrics alone do not guarantee robustness.

Model validation must include:

- Cross-validation across diverse datasets

- Stress testing under extreme conditions

- Edge case simulation

- Adversarial testing

Testing should check more than just prediction accuracy. It should also examine whether the model is stable, fair, and able to handle unusual inputs.
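One way to probe stability, sketched here under simplifying assumptions, is to perturb each input slightly and measure how often the prediction stays the same. The `toy_model` function is a hypothetical stand-in for a trained classifier, and the noise scale and trial count are arbitrary illustrative values.

```python
import random

def toy_model(features):
    """Hypothetical stand-in for a trained classifier over (amount, risk_score)."""
    amount, risk_score = features
    return 1 if 0.002 * amount + 2.0 * risk_score > 3.0 else 0

def prediction_stability(model, inputs, noise_scale=0.01, trials=50, seed=0):
    """Fraction of perturbed inputs whose prediction matches the unperturbed one."""
    rng = random.Random(seed)
    unchanged = 0
    total = 0
    for features in inputs:
        baseline = model(features)
        for _ in range(trials):
            # Apply small multiplicative Gaussian noise to every feature.
            noisy = [x * (1.0 + rng.gauss(0.0, noise_scale)) for x in features]
            unchanged += int(model(noisy) == baseline)
            total += 1
    return unchanged / total

typical = [(200.0, 0.1), (5000.0, 0.9)]  # far from the decision boundary
borderline = [(1000.0, 0.5)]             # sits on the decision boundary

print(prediction_stability(toy_model, typical))
print(prediction_stability(toy_model, borderline))
```

Inputs far from the decision boundary keep their predictions under small noise, while the borderline case flips frequently. A low stability score on realistic inputs is a signal worth investigating before deployment, even when headline accuracy looks good.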

In industries like finance and healthcare, testing standards need to be stricter than normal machine learning benchmarks.

Engineering teams should also treat validation as an ongoing process, not something done only once before deployment.

Layer 3: Deployment Architecture Controls

Deployment architecture significantly influences systemic risk exposure.

Key controls include:

- Canary releases to test models on limited traffic

- Shadow deployments to compare predictions without affecting users

- Model versioning for rollback capability

- Feature store management to ensure consistency

Deployment safeguards help reduce the impact if a model fails.

Instead of releasing a new model across the entire system at once, organizations can introduce it gradually in stages. This approach helps avoid major disruptions to operations.
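A staged rollout of this kind can be sketched as a deterministic traffic split. The example below is an illustration rather than a production router: `route_request`, `should_roll_back`, and the 2% tolerance are hypothetical names and values.

```python
import hashlib

def route_request(request_id, canary_percent):
    """Deterministically assign a request to the 'canary' or 'stable' model.

    Hashing the ID keeps a given caller on the same model between requests,
    which makes canary metrics easier to interpret.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"

def should_roll_back(canary_error_rate, stable_error_rate, tolerance=0.02):
    """Hypothetical guardrail: roll back if the canary is worse by more than `tolerance`."""
    return canary_error_rate - stable_error_rate > tolerance

ids = [f"user-{i}" for i in range(10_000)]
share = sum(route_request(i, 5) == "canary" for i in ids) / len(ids)

print(f"canary share: {share:.3f}")
print(should_roll_back(canary_error_rate=0.08, stable_error_rate=0.03))
```

Because the bucket for an ID never changes, raising `canary_percent` only moves additional callers onto the new model, and setting it back to 0 acts as an immediate rollback.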

As a result, system architecture plays an important role in reducing risk.

Layer 4: Monitoring and Drift Detection

A model that performs well today may fail tomorrow.

Data distributions shift. Market conditions evolve. User behavior changes.

Real-time monitoring is therefore essential.

Monitoring should include:

- Performance thresholds and alerting systems

- Concept drift detection

- Data drift analysis

- Feedback loop integration

IDC reports that most AI system failures happen after deployment because monitoring is not strong enough.

Drift detection tools should be built directly into the production environment instead of being treated as separate analytics tools.
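As one illustration of data drift analysis, the sketch below computes the Population Stability Index (PSI) between a reference sample and a production sample of a single numeric feature. PSI is a commonly used drift statistic, but it is an assumption here rather than a method the article prescribes. The equal-width binning and the synthetic data are also illustrative choices.

```python
import math
import random

def population_stability_index(reference, current, bins=10):
    """PSI between two numeric samples, using equal-width bins over the reference range."""
    low, high = min(reference), max(reference)
    width = (high - low) / bins or 1.0  # fall back to 1.0 if the reference is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            index = int((v - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1  # clamp out-of-range values
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))

rng = random.Random(42)
training = [rng.gauss(50, 10) for _ in range(5000)]
same = [rng.gauss(50, 10) for _ in range(5000)]     # same distribution
shifted = [rng.gauss(58, 10) for _ in range(5000)]  # mean has drifted

print(f"PSI, no drift:   {population_stability_index(training, same):.3f}")
print(f"PSI, with drift: {population_stability_index(training, shifted):.3f}")
```

A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, though suitable thresholds depend on the feature and the business context. Running a check like this inside the serving pipeline lets it raise alerts directly.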

Continuous monitoring helps AI systems adjust to changes and work more reliably over time.

Layer 5: Governance and Auditability

Technical safeguards must align with governance requirements.

Core governance mechanisms include:

- Explainability layers for decision transparency

- Decision logging for traceability

- Compliance mapping aligned with regulatory standards

- Human oversight checkpoints

As regulations continue to evolve, including frameworks such as the EU AI Act, enterprises must demonstrate not only that their AI models perform well but also that they follow proper processes and maintain accountability.

Governance should not exist separately from engineering work. It needs to be built into everyday workflows, system infrastructure, and documentation practices.
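Decision logging for traceability can be sketched as an append-only log in which each entry records the model version, a hash of the input, the prediction, and any human reviewer. The field names and the hash-chaining scheme below are illustrative assumptions, not a regulatory standard.

```python
import hashlib
import json
from datetime import datetime, timezone

def build_decision_record(model_name, model_version, features, prediction, reviewer=None):
    """Assemble one audit record; field names are illustrative, not a standard."""
    payload = json.dumps(features, sort_keys=True)
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model": model_name,
        "model_version": model_version,
        # Store a hash rather than raw inputs to limit sensitive data in logs.
        "input_hash": hashlib.sha256(payload.encode("utf-8")).hexdigest(),
        "prediction": prediction,
        "human_reviewer": reviewer,
    }

def append_record(log_lines, record):
    """Append a record as a JSON line, chained to the hash of the previous line."""
    previous = log_lines[-1] if log_lines else ""
    chained = dict(record, prev_hash=hashlib.sha256(previous.encode("utf-8")).hexdigest())
    log_lines.append(json.dumps(chained, sort_keys=True))
    return chained

def verify_chain(log_lines):
    """Return True if every line's prev_hash matches the line before it."""
    previous = ""
    for line in log_lines:
        expected = hashlib.sha256(previous.encode("utf-8")).hexdigest()
        if json.loads(line)["prev_hash"] != expected:
            return False
        previous = line
    return True

log = []
append_record(log, build_decision_record(
    "credit-model", "1.4.2", {"income": 52000, "age": 34}, "approve"))
append_record(log, build_decision_record(
    "credit-model", "1.4.2", {"income": 18000, "age": 51}, "refer", reviewer="analyst-17"))

print(verify_chain(log))
```

Chaining each line to its predecessor means that editing an earlier entry breaks verification for the entries after it. The most recent line is not protected by this scheme alone, so a real system would also need to anchor or sign the latest hash.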

The Cost of Ignoring Model Risk

Ignoring model risk can have significant consequences.

Financial losses may occur when predictive systems generate inaccurate forecasts. Reputational damage may result when biased models harm customers. Regulatory penalties can result from non-compliant AI deployments.

Deloitte research indicates that trust has become one of the strongest differentiators for AI-driven enterprises. Organizations that demonstrate responsible AI practices build stronger stakeholder confidence and long-term competitive advantage.

Model risk is therefore not merely a technical concern. It is a strategic business risk.

Integrating Model Risk Management Into Enterprise Architecture

AI systems must not exist in isolation.

Model risk management must integrate with:

- Data engineering pipelines

- Security architecture frameworks

- Cloud infrastructure management

- DevOps processes

This systems-thinking approach ensures that AI governance aligns with the broader enterprise technology strategy.

Building risk management into architectural design makes AI systems stronger, more scalable, and more reliable.

Adding rules after deployment, by contrast, leads to fragmented controls and reactive fixes.

Engineering maturity determines AI sustainability.

Practical Roadmap for Model Risk Management in Enterprise AI

Enterprises can operationalize model risk management through a structured roadmap.

- Establish Risk Classification Framework: Categorize AI systems based on impact, regulatory exposure, and operational criticality.

- Define Validation Standards: Standardize testing protocols, documentation requirements, and performance thresholds.

- Design Deployment Safeguards: Implement canary releases, rollback mechanisms, and staged integration strategies.

- Implement Monitoring Automation: Deploy automated drift detection, anomaly alerts, and real-time performance dashboards.

- Build Cross-Functional Governance Teams: Combine engineering, compliance, security, and business leadership to ensure holistic oversight.
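The first roadmap step, risk classification, can be sketched as a small scoring rule that maps each AI system to a tier and each tier to a set of required controls. The 1-to-3 scale, the tier thresholds, and the control lists are hypothetical. An organization would define its own.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AISystem:
    name: str
    impact: int                   # each dimension scored 1 (low) to 3 (high)
    regulatory_exposure: int
    operational_criticality: int

# Hypothetical mapping from tier to the controls discussed in this article.
REQUIRED_CONTROLS = {
    "low": ["performance monitoring"],
    "medium": ["performance monitoring", "drift detection", "canary release"],
    "high": ["performance monitoring", "drift detection", "canary release",
             "decision logging", "human oversight checkpoint"],
}

def risk_tier(system):
    """Hypothetical rule: any dimension scored 3 makes the system high risk."""
    scores = (system.impact, system.regulatory_exposure, system.operational_criticality)
    if any(not 1 <= s <= 3 for s in scores):
        raise ValueError("scores must be between 1 and 3")
    if max(scores) == 3:
        return "high"
    if sum(scores) >= 5:  # at least two dimensions scored 2
        return "medium"
    return "low"

portfolio = [
    AISystem("credit-scoring", impact=3, regulatory_exposure=3, operational_criticality=2),
    AISystem("product-recommendations", impact=2, regulatory_exposure=1, operational_criticality=2),
    AISystem("internal-search", impact=1, regulatory_exposure=1, operational_criticality=1),
]
for system in portfolio:
    tier = risk_tier(system)
    print(system.name, tier, REQUIRED_CONTROLS[tier])
```

Letting the worst single dimension drive the tier is a deliberately conservative choice: a system with high regulatory exposure is treated as high risk even if its operational footprint is small.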

This roadmap transforms model risk management from an abstract principle into an executable engineering practice.

Engineering Discipline Is the Foundation of Responsible AI

AI success depends on more than just advanced algorithms.

It also requires reliable systems, strong architecture, and clear governance practices.

Model risk management in enterprise AI is essential for long-term and sustainable AI adoption.

Organizations that add validation, monitoring, deployment safeguards, and governance into their system design can build AI systems that work responsibly and scale over time.

In enterprise AI, strong engineering practices are not just useful; they help build trust in the system.
