Updated March 17, 2023

Introduction to Azure Data Factory Connectors

Azure Data Factory connectors make it easier to connect to source and destination data stores. Keep in mind that linked services use these connectors. To learn more about linked services and how to construct them, see Azure Data Factory Linked Services and Parameters. That article also covers how linked services let prebuilt procedures be used as steps in workflows.

Key Takeaways

- Azure Data Factory needs four essential components: pipelines, activities, datasets, and linked services. Together they define the input and output data, the processing events, and the schedule and resources needed to carry out the intended data flow.

- Datasets represent data structures within the data stores.

- A pipeline activity’s input is represented by an input dataset.

- Activities specify the data-related actions to be taken.

- The details required for Azure Data Factory to connect to external resources are defined by linked services.
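The components above refer to each other by name. A pipeline contains activities, activities read and write datasets, and each dataset points at the linked service that holds the connection details. The following is a minimal sketch of that chain, written as Azure Data Factory style JSON objects in Python. All names, the container, the table, and the connection strings are hypothetical placeholders. The `missing_references` helper is our own illustration and is not part of Azure Data Factory.

```python
import json

# Hypothetical linked services: connection details for two external data stores.
linked_services = [
    {"name": "BlobStorageLS",
     "properties": {"type": "AzureBlobStorage",
                    "typeProperties": {"connectionString": "<storage-connection-string>"}}},
    {"name": "AzureSqlLS",
     "properties": {"type": "AzureSqlDatabase",
                    "typeProperties": {"connectionString": "<sql-connection-string>"}}},
]

def ls_ref(name):
    # Datasets point at linked services by name.
    return {"referenceName": name, "type": "LinkedServiceReference"}

def ds_ref(name):
    # Activities point at datasets by name.
    return {"referenceName": name, "type": "DatasetReference"}

# Hypothetical datasets: the input file in blob storage and the output SQL table.
datasets = [
    {"name": "InputCsv",
     "properties": {"type": "DelimitedText", "linkedServiceName": ls_ref("BlobStorageLS"),
                    "typeProperties": {"location": {"type": "AzureBlobStorageLocation",
                                                    "container": "raw", "fileName": "sales.csv"},
                                       "columnDelimiter": ","}}},
    {"name": "OutputTable",
     "properties": {"type": "AzureSqlTable", "linkedServiceName": ls_ref("AzureSqlLS"),
                    "typeProperties": {"schema": "dbo", "table": "Sales"}}},
]

# The pipeline groups the activities; this Copy activity moves data from input to output.
pipeline = {
    "name": "CopySalesPipeline",
    "properties": {"activities": [{
        "name": "CopyCsvToSql",
        "type": "Copy",
        "inputs": [ds_ref("InputCsv")],
        "outputs": [ds_ref("OutputTable")],
        "typeProperties": {"source": {"type": "DelimitedTextSource"},
                           "sink": {"type": "AzureSqlSink"}},
    }]},
}

def missing_references(pipeline, datasets, linked_services):
    """Return dataset or linked service names that are referenced but not defined."""
    ds_names = {d["name"] for d in datasets}
    ls_names = {svc["name"] for svc in linked_services}
    missing = []
    for act in pipeline["properties"]["activities"]:
        for ref in act.get("inputs", []) + act.get("outputs", []):
            if ref["referenceName"] not in ds_names:
                missing.append(ref["referenceName"])
    for d in datasets:
        ls_name = d["properties"]["linkedServiceName"]["referenceName"]
        if ls_name not in ls_names:
            missing.append(ls_name)
    return missing

print(json.dumps(pipeline, indent=2))
print(missing_references(pipeline, datasets, linked_services))  # prints []
```

An empty list means every activity's datasets and every dataset's linked service are defined.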

Overview of Azure Data Factory Connectors

The Copy, Data Flow, Lookup, Get Metadata, and Delete activities in Azure Data Factory and Azure Synapse Analytics pipelines support a wide range of data stores and formats. To discover more about the supported capabilities and the configuration required to access a particular data store, consult that connector's documentation. These connectors handle the connection to the source and destination data stores. Linked services use the same connectors; to learn more about linked services and how to construct them, see Azure Data Factory Linked Services and Parameters. Because Azure Data Factory is a fully managed service, the built-in connectors can bring data sources together in one place without any connector infrastructure to maintain.
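Several of the activities named above can work through the same dataset, and therefore through the same connector. The following is a minimal sketch of three such activities in one pipeline. A Get Metadata activity inspects a file, a Lookup activity reads its first row, and a Delete activity removes it afterwards. The activities are chained with `dependsOn` so that each one runs only after the previous one succeeds. The dataset name `SalesFiles`, the activity names, and the chosen field list are hypothetical.

```python
import json

def dataset_ref(name):
    # All three activities reference the same (hypothetical) delimited-text dataset.
    return {"referenceName": name, "type": "DatasetReference"}

get_metadata = {
    "name": "GetFileInfo",
    "type": "GetMetadata",
    "typeProperties": {"dataset": dataset_ref("SalesFiles"),
                       "fieldList": ["itemName", "lastModified", "size"]},
}

lookup = {
    "name": "LookupFirstRow",
    "type": "Lookup",
    "typeProperties": {"source": {"type": "DelimitedTextSource"},
                       "dataset": dataset_ref("SalesFiles"),
                       "firstRowOnly": True},
}

delete = {
    "name": "DeleteProcessedFile",
    "type": "Delete",
    "typeProperties": {"dataset": dataset_ref("SalesFiles"),
                       "recursive": False,
                       "enableLogging": False},
}

def chain(activities):
    """Make each activity depend on the successful completion of the previous one."""
    for prev, cur in zip(activities, activities[1:]):
        cur["dependsOn"] = [{"activity": prev["name"], "dependencyConditions": ["Succeeded"]}]
    return activities

pipeline = {"name": "InspectReadCleanUp",
            "properties": {"activities": chain([get_metadata, lookup, delete])}}

print(json.dumps(pipeline, indent=2))
```

Only the dataset ties these activities to a particular store. Pointing `SalesFiles` at a different linked service would switch the connector without changing the activities.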

How to Create Connectors in Azure Data Factory?

The data stores covered by the built-in connectors are not the only ones that Azure Data Factory and Synapse pipelines can access. If we need to transfer data to or from a data store that the service's built-in connectors do not support, there are several extensible options. Every pipeline depends on data transfer logic for its data stores, and the service cannot perform a transfer that no connector supports. In that case, we can write custom code logic and run it with the Custom activity on a pool of virtual machines in Azure Batch.
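The following is a minimal sketch of the Custom activity route. It has two parts:

- A Custom activity definition. It points at an Azure Batch linked service and at a storage linked service that holds the program files.
- The entry point of the program that Batch would run.

Azure Data Factory writes the activity definition to an `activity.json` file in the task's working directory, and the program reads its `extendedProperties` from that file. The linked service names, the `command`, the `folderPath`, and the `sourceEndpoint` property are all hypothetical. The command also assumes that the Batch pool nodes have Python installed.

```python
import json

# Hypothetical Custom activity: runs "python transfer.py" on an Azure Batch pool.
custom_activity = {
    "name": "TransferFromUnsupportedStore",
    "type": "Custom",
    "linkedServiceName": {"referenceName": "AzureBatchLS", "type": "LinkedServiceReference"},
    "typeProperties": {
        "command": "python transfer.py",
        "folderPath": "customactivity/transfer",  # where the program files are stored
        "resourceLinkedService": {"referenceName": "StorageLS",
                                  "type": "LinkedServiceReference"},
        # Hypothetical value passed from the pipeline to the custom program.
        "extendedProperties": {"sourceEndpoint": "https://example.invalid/api/export"},
    },
}

def read_extended_properties(activity_json_text):
    """Return the extendedProperties the pipeline passed to the custom program."""
    activity = json.loads(activity_json_text)
    return activity["typeProperties"].get("extendedProperties", {})

def main(path="activity.json"):
    # Intended to run on the Batch node, where activity.json exists in the working directory.
    with open(path, encoding="utf-8") as f:
        props = read_extended_properties(f.read())
    # Custom transfer logic for the unsupported data store would go here.
    print("Would read from", props["sourceEndpoint"])

# main() is not called here because activity.json only exists on the Batch node.
```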

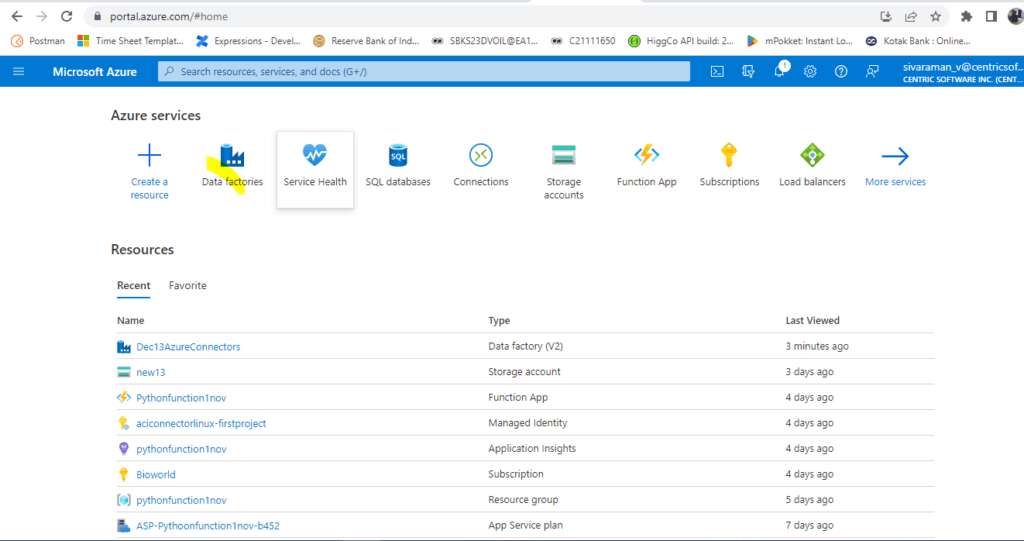

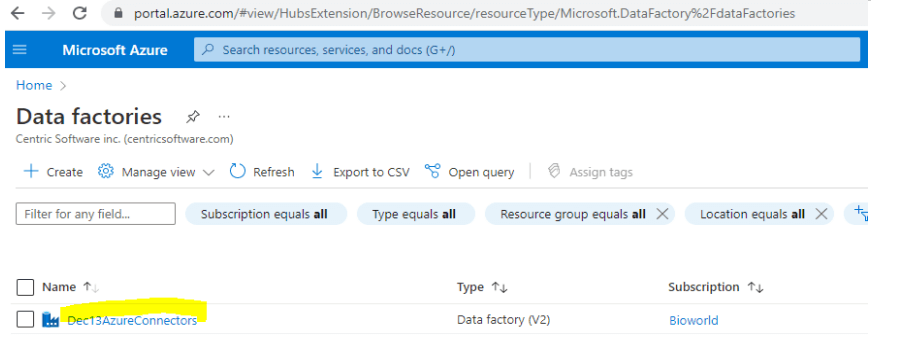

1. Log in to the Azure portal and choose Data factories.

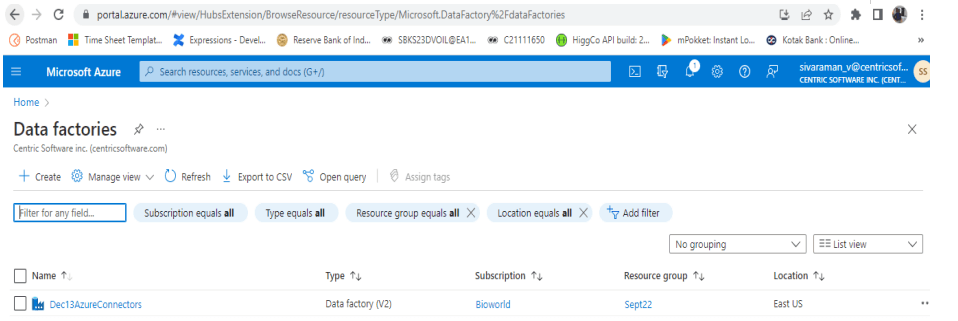

2. Create a data factory with the required subscription and other details, such as the location.

3. Navigate to the data factory and click the New option to create factory resources.

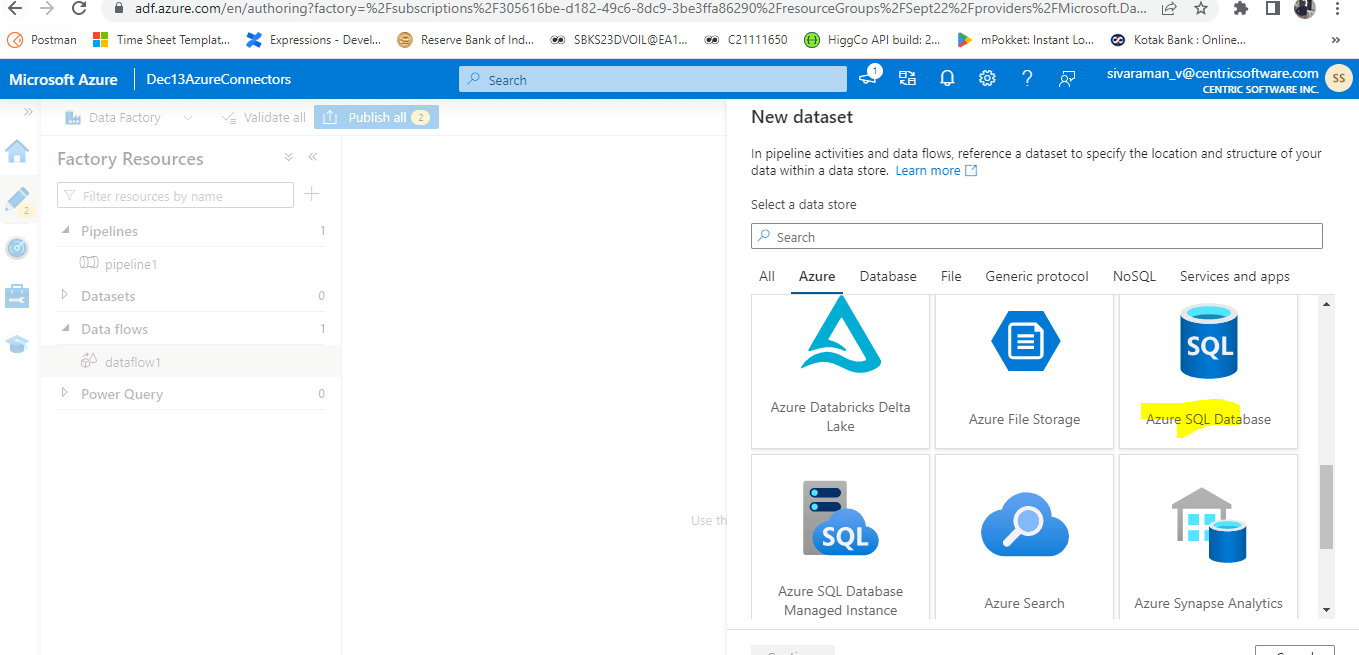

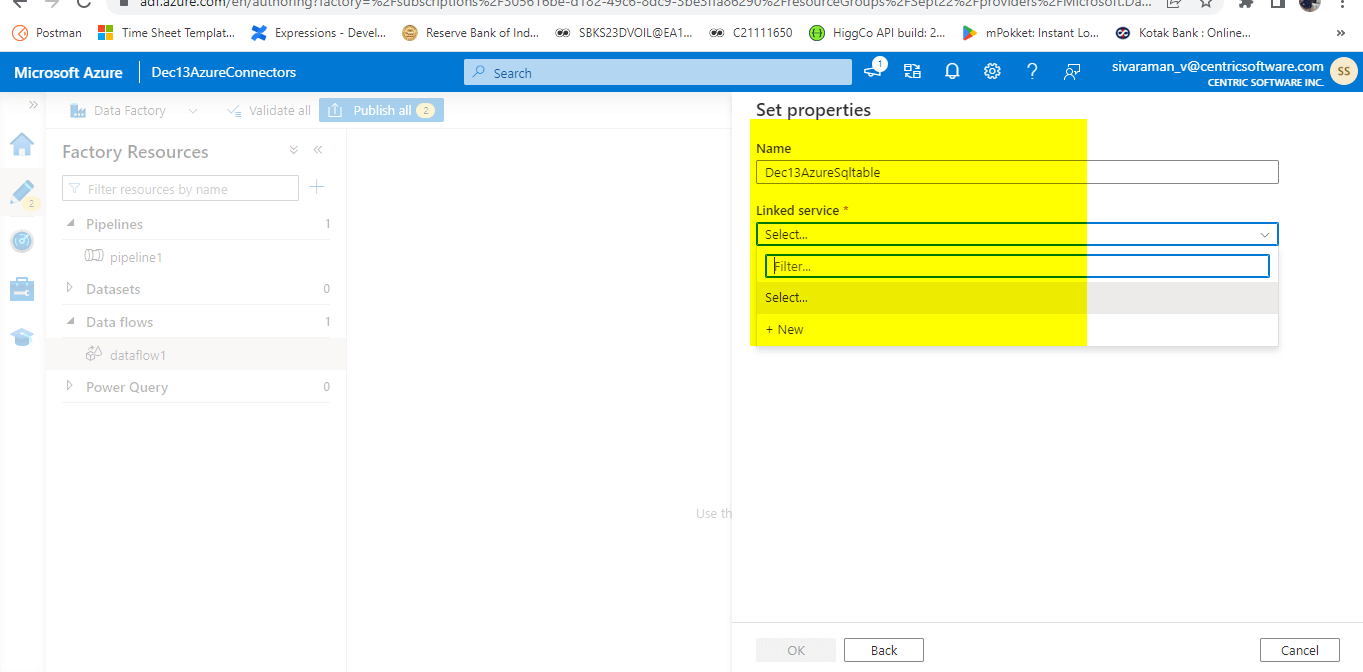

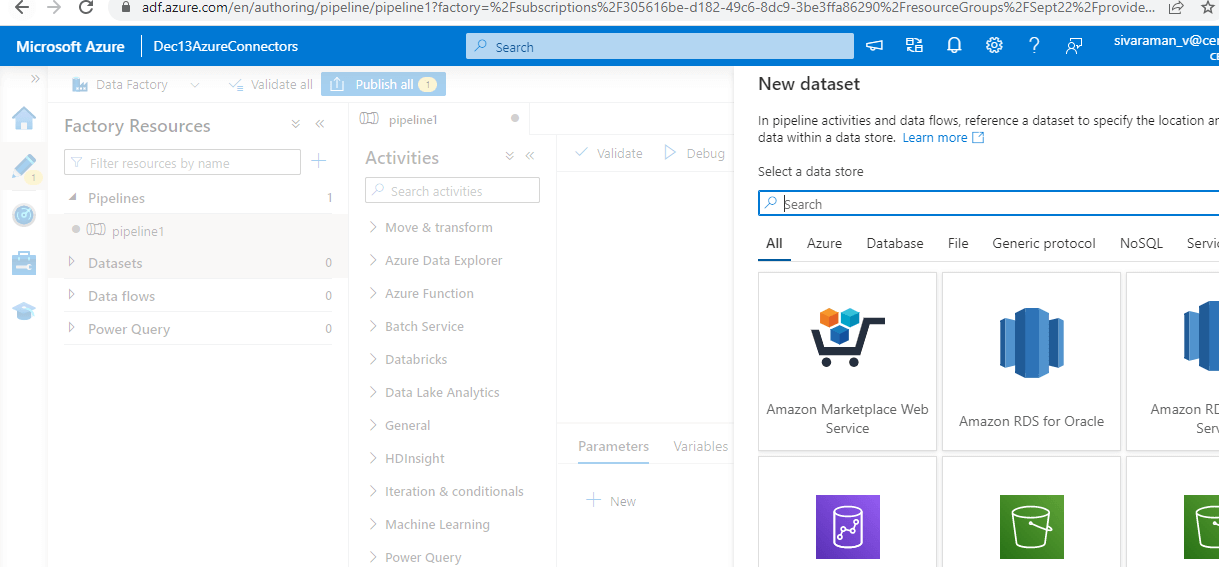

4. Here, I am creating an Azure SQL Database dataset; then click Continue.

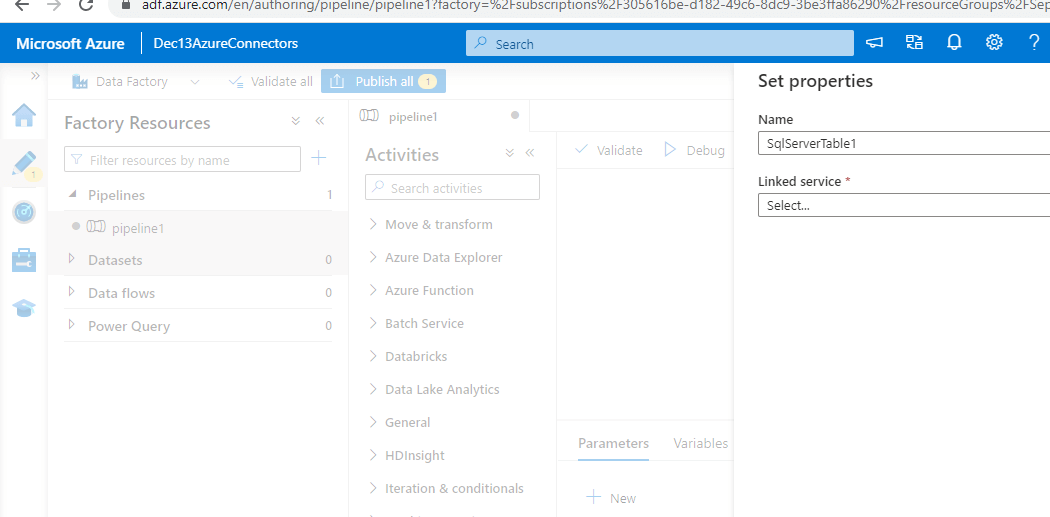

5. Enter the database name and linked service as shown below.

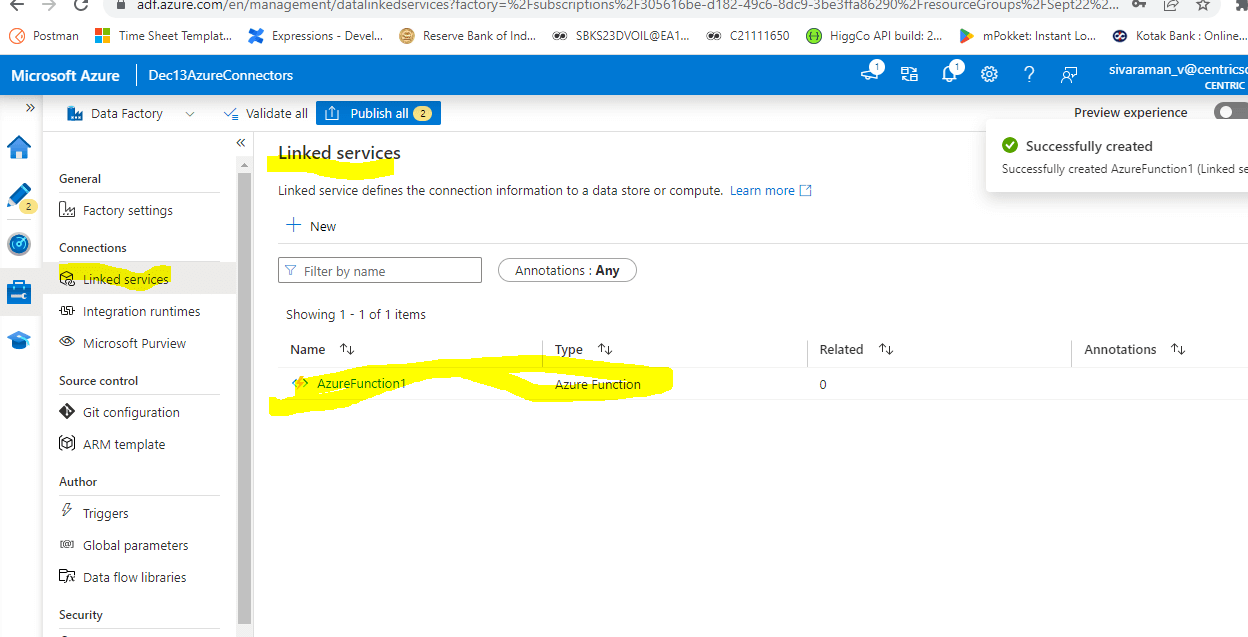

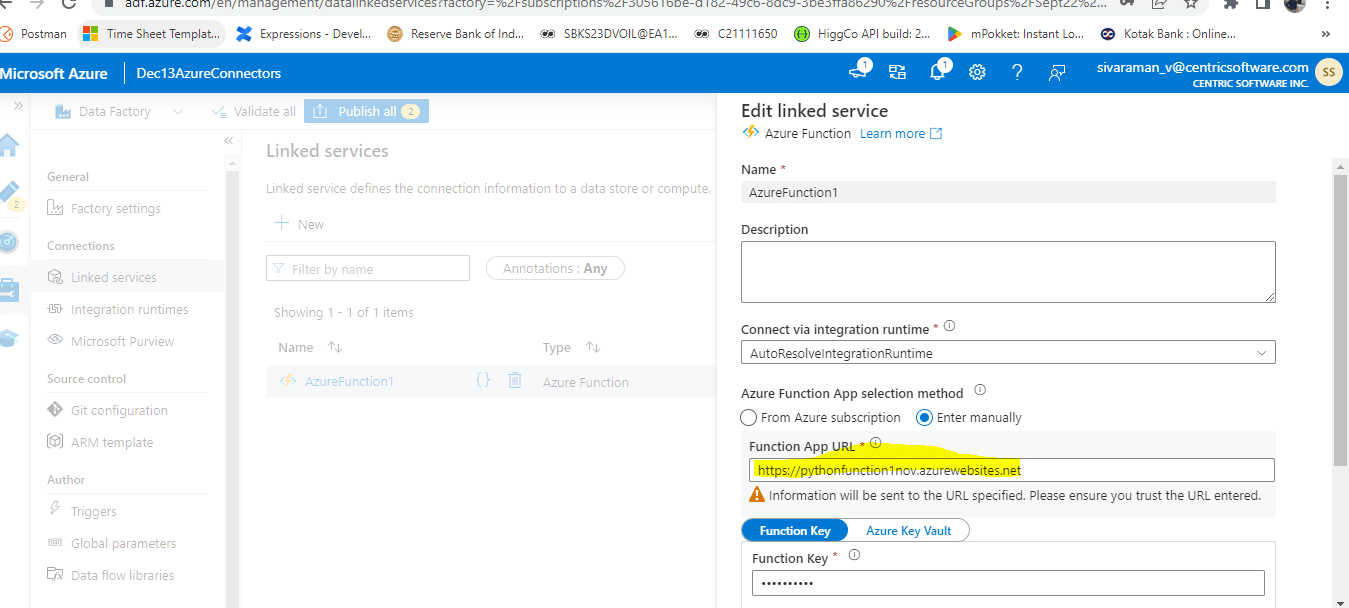

6. Here, I can add an Azure Function as a linked service.

7. Please enter the Function App URL.

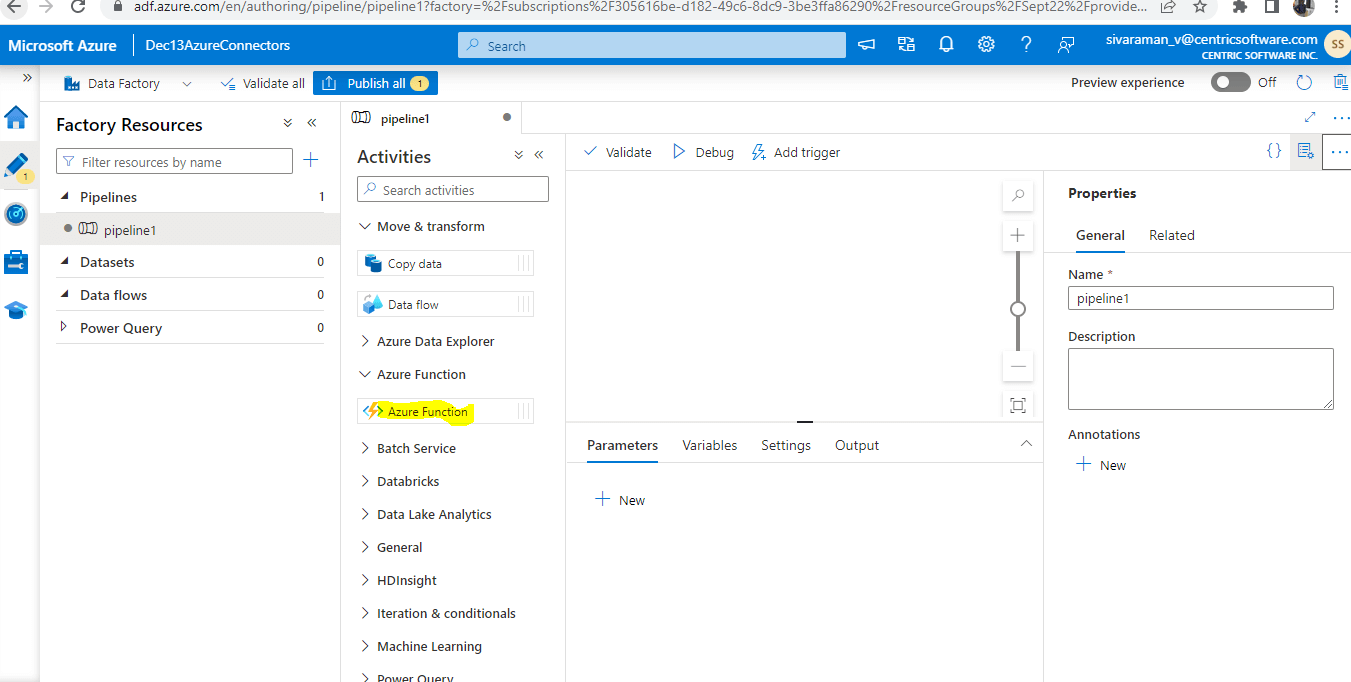

8. We can create pipelines, datasets, data flows, and Power Query resources based on our requirements.
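The portal steps above produce JSON definitions behind the scenes. The following is a minimal sketch of what such definitions could look like for this walkthrough: an Azure SQL Database linked service, a dataset on one of its tables, and an Azure Function linked service. The server, database, table, Function App URL, and key are hypothetical placeholders, and credentials are left out of the SQL connection string. The `https://` check is our own safeguard, not an Azure Data Factory rule.

```python
import json

def azure_sql_linked_service(name, server, database):
    # Credentials are intentionally omitted from this placeholder connection string.
    conn = (f"Data Source=tcp:{server}.database.windows.net,1433;"
            f"Initial Catalog={database};Encrypt=True;Connection Timeout=30")
    return {"name": name,
            "properties": {"type": "AzureSqlDatabase",
                           "typeProperties": {"connectionString": conn}}}

def azure_sql_dataset(name, linked_service, schema, table):
    # The dataset names a table and points at the linked service that reaches it.
    return {"name": name,
            "properties": {"type": "AzureSqlTable",
                           "linkedServiceName": {"referenceName": linked_service,
                                                 "type": "LinkedServiceReference"},
                           "typeProperties": {"schema": schema, "table": table}}}

def azure_function_linked_service(name, function_app_url, function_key):
    # Our own sanity check on the Function App URL entered in step 7.
    if not function_app_url.startswith("https://"):
        raise ValueError("Function App URL should use https://")
    return {"name": name,
            "properties": {"type": "AzureFunction",
                           "typeProperties": {
                               "functionAppUrl": function_app_url,
                               "functionKey": {"type": "SecureString", "value": function_key}}}}

sql_ls = azure_sql_linked_service("AzureSqlLS", "myserver", "salesdb")
sql_ds = azure_sql_dataset("SalesTable", "AzureSqlLS", "dbo", "Sales")
func_ls = azure_function_linked_service("FunctionLS",
                                        "https://my-func-app.azurewebsites.net",
                                        "<function-key>")

for obj in (sql_ls, sql_ds, func_ls):
    print(json.dumps(obj, indent=2))
```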

How to Add New Connectors?

To add a new connector, select Create a resource from the Azure services menu in the Azure portal. In the New dialog, enter Logic Apps Custom Connector in the search box and choose it from the dropdown list. Then select Create in the Logic Apps Custom Connector dialog.

Azure AD Connect syncs user accounts, group memberships, and credential hashes from an on-premises AD DS environment to Azure AD. User account attributes, such as the UPN and the on-premises security identifier (SID), are synced as well. We can set up Azure connectors to access the resource information of the Azure account. In the Connectors application, we can combine connectors built for third-party applications, such as Current Cloud's View connectors, or build a new one using the app.

Here, I have created an Azure Cosmos DB account to perform the activities.

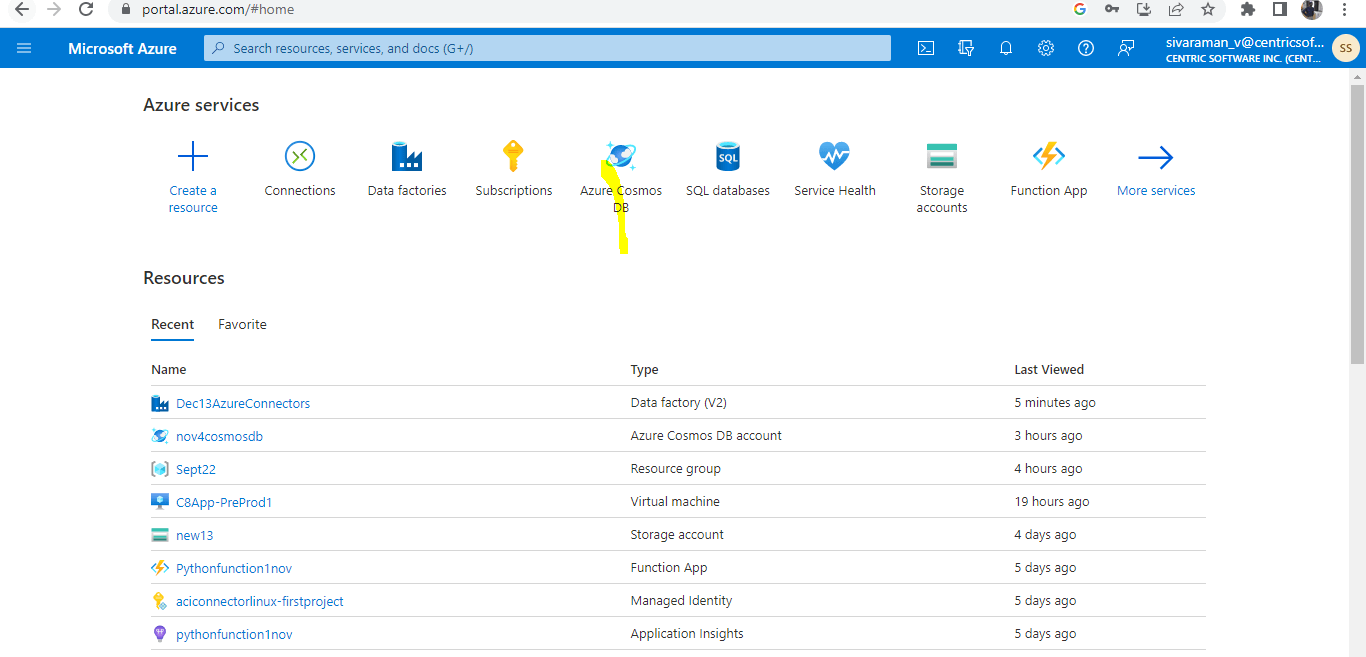

1. Search for Azure Cosmos DB on the Home tab and create it.

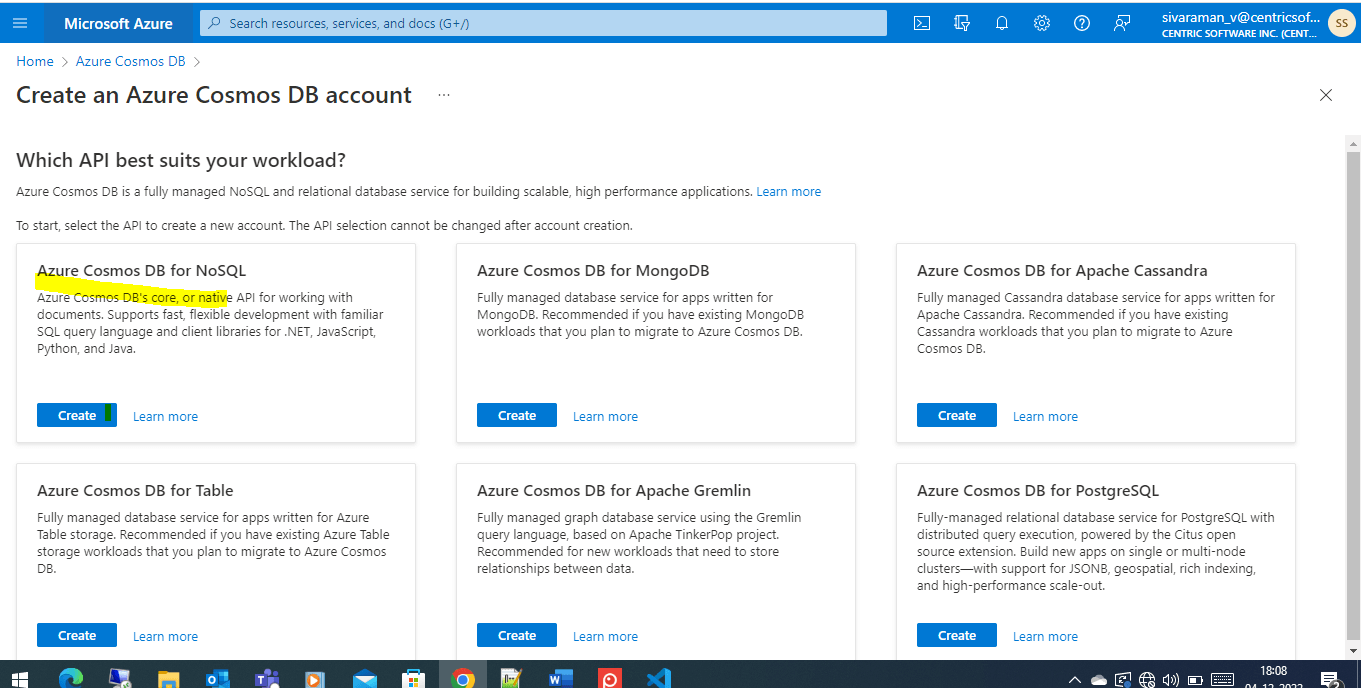

2. Here, I am creating Azure Cosmos DB for NoSQL.

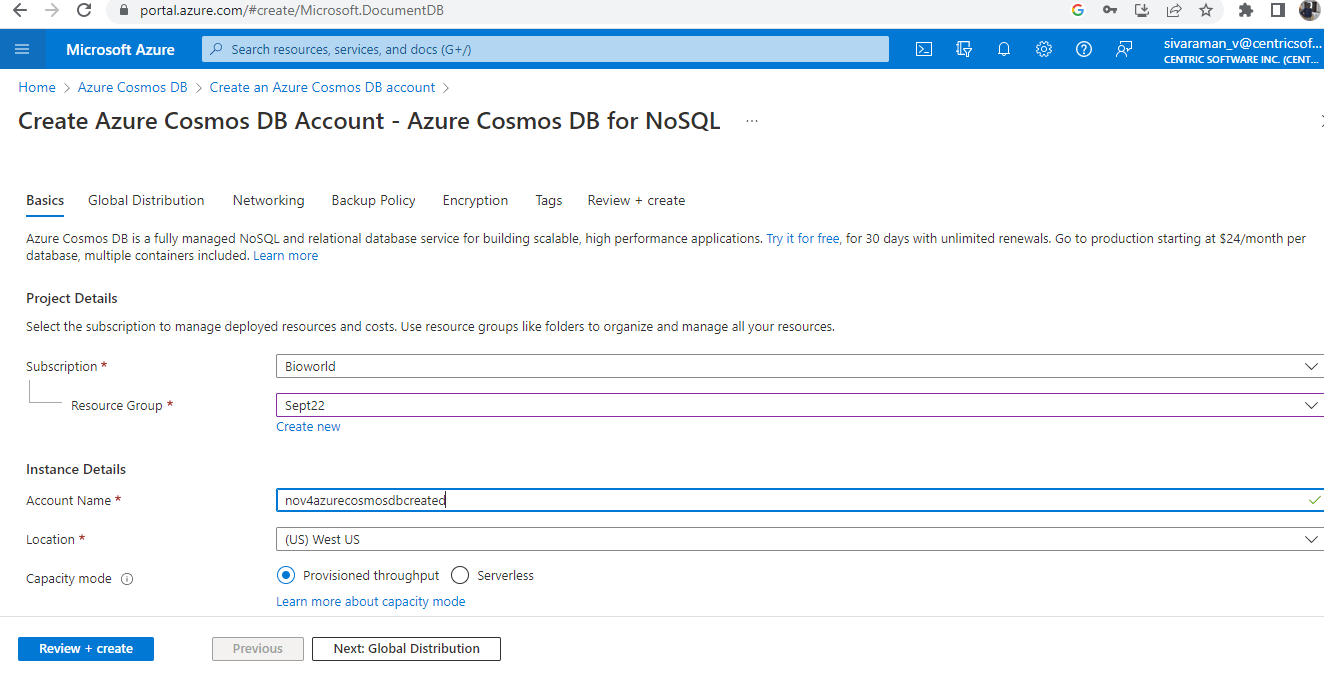

3. Enter the required details, such as the account name, resource group, and subscription.

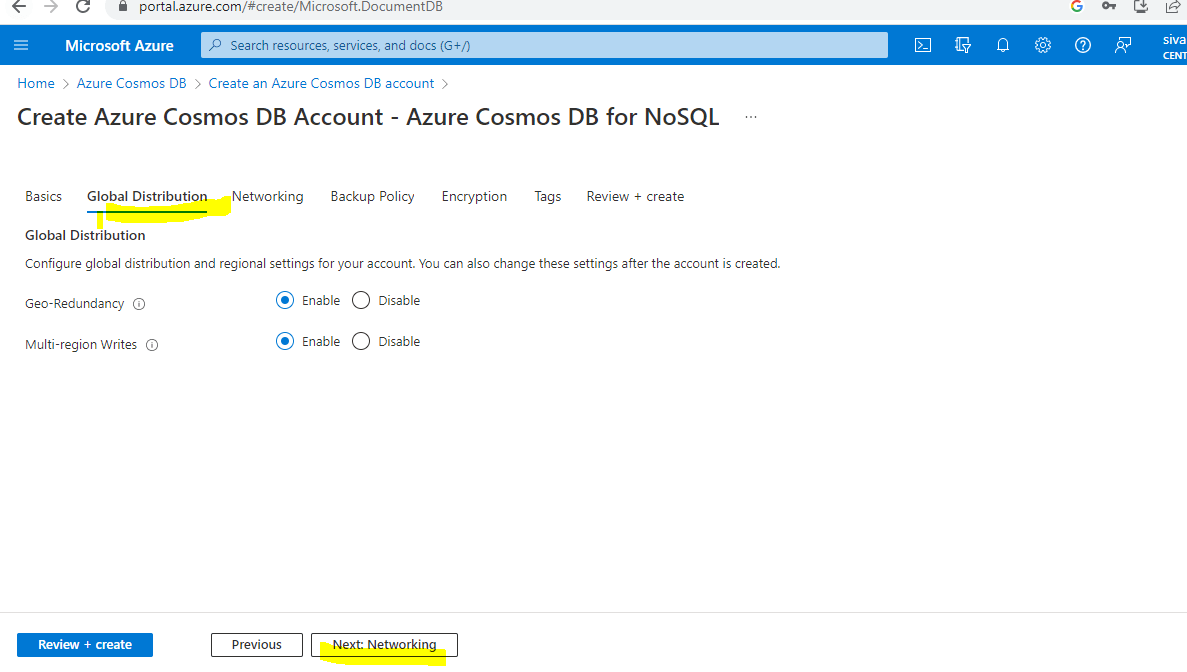

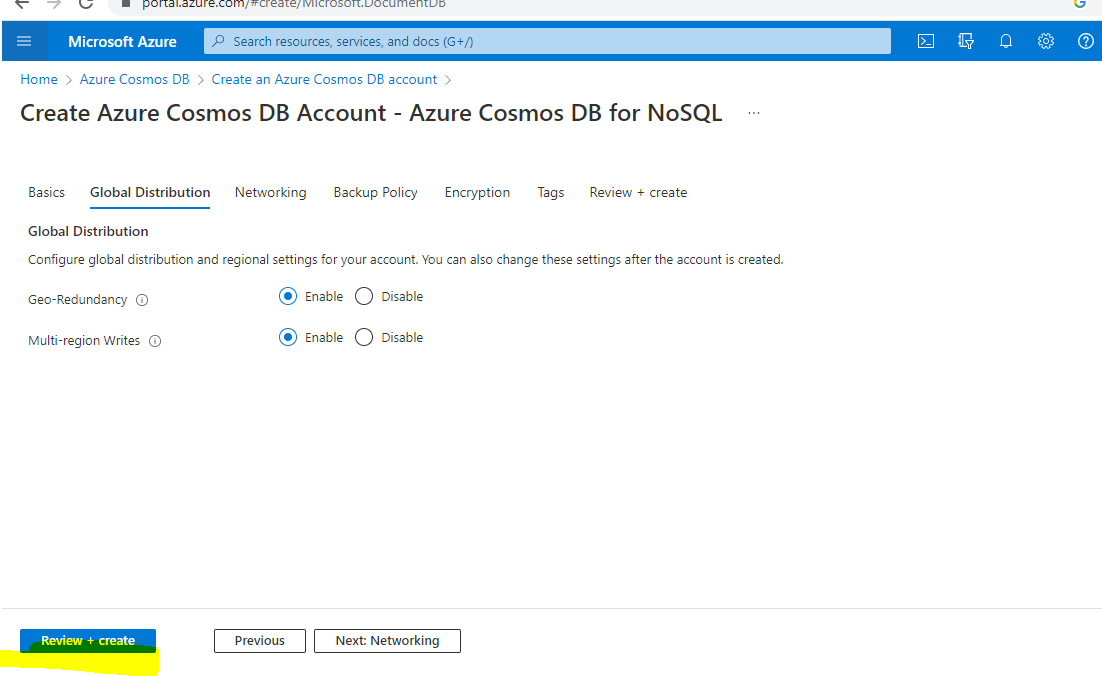

4. Then click the Next: Global Distribution button to fill in the details on the other tabs.

5. Finally, click Review + create to create the Cosmos DB account.
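Once the account exists, Azure Data Factory can reach it through a linked service of type `CosmosDb`. A dataset of type `CosmosDbSqlApiCollection` then names a container. The following is a minimal sketch of both definitions. The account name, key, database, and container are hypothetical placeholders; in practice the key would normally be kept out of plain text, for example in Azure Key Vault.

```python
import json

def cosmos_connection_string(account, key, database):
    # Azure Cosmos DB for NoSQL endpoint format: https://<account>.documents.azure.com:443/
    endpoint = f"https://{account}.documents.azure.com:443/"
    return f"AccountEndpoint={endpoint};AccountKey={key};Database={database}"

cosmos_ls = {
    "name": "CosmosNoSqlLS",
    "properties": {
        "type": "CosmosDb",
        "typeProperties": {
            "connectionString": cosmos_connection_string(
                "my-cosmos-account", "<account-key>", "SalesDb")}}}

cosmos_ds = {
    "name": "OrdersContainer",
    "properties": {
        "type": "CosmosDbSqlApiCollection",
        "linkedServiceName": {"referenceName": "CosmosNoSqlLS",
                              "type": "LinkedServiceReference"},
        "typeProperties": {"collectionName": "Orders"}}}  # container name

print(json.dumps(cosmos_ls, indent=2))
print(json.dumps(cosmos_ds, indent=2))
```

In a Copy activity that reads from or writes to this dataset, the source and sink types would be `CosmosDbSqlApiSource` and `CosmosDbSqlApiSink`.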

Configuring

For instructions on configuring authentication for the Azure Data Factory Connector, consult Microsoft’s website.

To set up the connector, configure the following properties:

- Tenant ID: The Microsoft Azure tenant ID. Enter the tenant in which the service principal was created, for instance: 11234cae-6ge9-2345-frga1-3b23452.

- Client ID: Also known as the Application ID. A client ID for an application can look like this: 11234cae-6ge9-2345-frga1-3b23452.

- Client Secret: The client secret that belongs to the same application (service principal) as the client ID.

- Resource Name: The name of the resource to request access to. This should be set to https://management.azure.com/.

- Subscription IDs: Optionally, provide a comma-separated list of subscription IDs if the connector needs to operate only on those subscriptions.

- Resource Groups: Optionally, provide a comma-separated list of resource groups if the connector needs to operate only on those resource groups.

- API Version: The Azure Data Factory REST API version, designated as api-version.
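The following is a minimal sketch of how these properties could map onto Azure REST calls. It shows two things:

- The tenant ID, client ID, and client secret form a client-credentials token request to the Microsoft identity platform. The resource name becomes the `scope`.
- The subscription IDs, resource groups, and API version form Azure Resource Manager URLs that list data factories.

Nothing is sent over the network. The IDs are placeholders, and `2018-06-01` is assumed as the Microsoft.DataFactory API version. The way the code pairs every listed resource group with every listed subscription is our own simplification.

```python
from urllib.parse import urlencode

def token_request(tenant_id, client_id, client_secret,
                  resource="https://management.azure.com/"):
    """Return (url, form-encoded body) for a v2.0 client-credentials token request."""
    url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    body = urlencode({
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        "scope": resource.rstrip("/") + "/.default",  # resource name -> v2.0 scope
    })
    return url, body

def split_ids(csv_text):
    """Parse an optional comma-separated list, ignoring spaces and empty entries."""
    return [item.strip() for item in (csv_text or "").split(",") if item.strip()]

def factory_list_urls(subscription_ids, resource_groups="", api_version="2018-06-01",
                      resource="https://management.azure.com/"):
    """Build ARM URLs that list data factories per subscription or per resource group."""
    base = resource.rstrip("/")
    groups = split_ids(resource_groups)
    urls = []
    for sub in split_ids(subscription_ids):
        if groups:
            for rg in groups:
                urls.append(f"{base}/subscriptions/{sub}/resourceGroups/{rg}"
                            f"/providers/Microsoft.DataFactory/factories?api-version={api_version}")
        else:
            urls.append(f"{base}/subscriptions/{sub}"
                        f"/providers/Microsoft.DataFactory/factories?api-version={api_version}")
    return urls

for u in factory_list_urls("sub-1, sub-2", "rg-data"):
    print(u)
```

The token from the first request would be sent as a bearer token with GET requests to the URLs from the second function.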

Pane

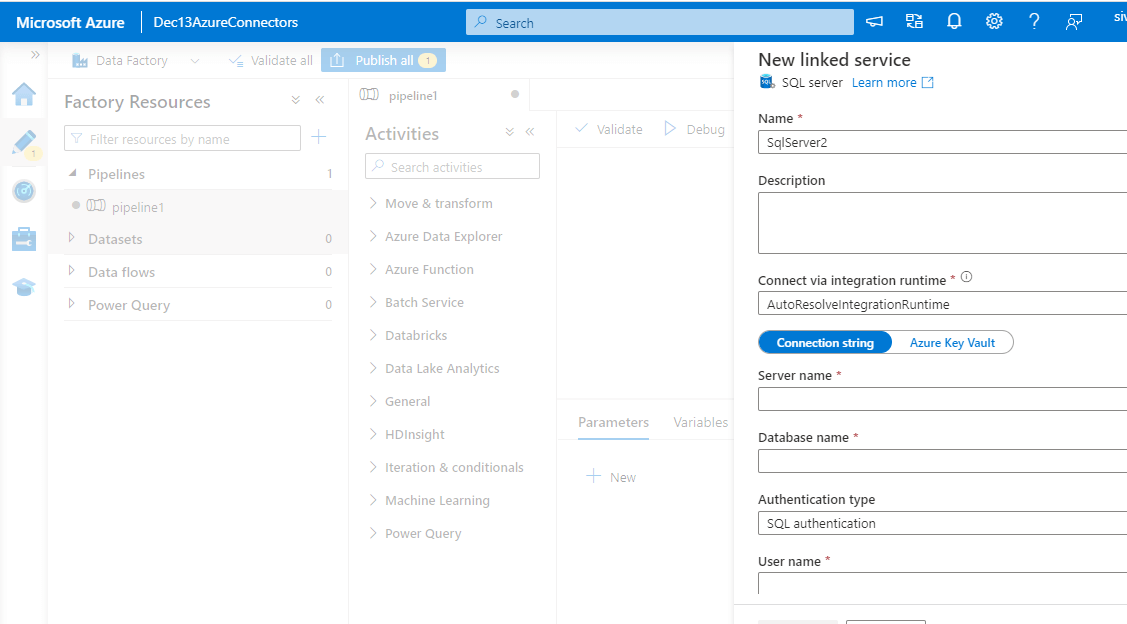

Azure Data Factory has several panes, such as the New linked service pane, which opens when we create a new linked service. The Data store tab lists all the data stores that a linked service can connect to, which helps identify the right one for reading the data. Because many data stores are supported, they are divided into sub-categories that can be browsed through the tab navigation.

Screenshots of some of the Azure Data Factory connector panes are as follows:

New dataset pane.

New Linked Service Pane.

Conclusion

Azure Data Factory connectors make it easier to connect to source and destination data stores, and linked services use the same set of connectors. To learn more about linked services, their parameters, and how to construct them, see Azure Data Factory Linked Services and Parameters.
