Updated March 13, 2023

What is Kernel in Machine Learning?

Machine learning is a broad field in which a machine learns from data without being explicitly programmed. It covers regression, classification, and pattern recognition problems. For classification, where the task is to assign inputs to classes based on labeled examples (supervised learning), several methods are available. One of them is the Support Vector Machine (SVM), which relies on kernel methods (the kernel trick). A kernel in machine learning is used to handle nonlinearity in the dataset. A user-specified kernel function (a similarity function) implicitly maps the data into a higher-dimensional space, where the classes can be separated by a linear hyperplane.

Why do we need Kernel Methods?

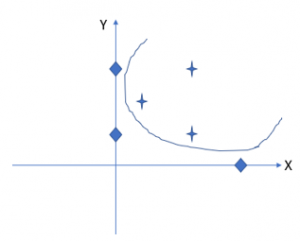

To address this question, consider a simple classification problem with two classes. The dataset has two categories, stars and diamonds, described by two features X and Y. It is easy to separate the two categories with a straight line. See Figure A: (Source: self-drafted)

Figure A: Linearly separable data set, separated with a linear function (line)

Now consider another case with the same two categories, stars and diamonds, but where they are not linearly separable. In such cases, we use a kernel function whose output makes the dataset linearly separable.

For our analysis, we will take only 6 data points.

Figure B: Dataset that cannot be separated using a linear function

Co-ordinates of all the star points: (1, 2) ; (2, 1) ; (2, 3)

Co-ordinates of all diamond points: (0, 1) ; (0, 3) ; (4, 0)

Here we can see that no linear function separates the two categories. To address this, we introduce a kernel function (φ).

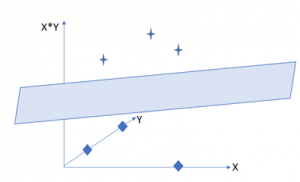

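For our case, the kernel function we will use is: φ(X, Y) = X*Y

This function adds another dimension to the existing data points: the coordinates of a point become (X, Y, X*Y). Strictly speaking, a function that adds coordinates like this is called a feature map; the kernel itself is the dot product between two mapped points.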

Now Co-ordinates for star points are: (1, 2, 2) ; (2, 1, 2) ; (2, 3, 6)

Co-ordinates of all diamond points are: (0, 1, 0) ; (0, 3, 0) ; (4, 0, 0)

We observe that the third coordinate is zero for every diamond point and positive for every star point. With this added dimension, the two categories can be separated by a linear hyperplane.

Figure C: Linear hyperplane separating nonlinear spread dataset
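The lifting step above can be sketched in a few lines of Python. This is only an illustration using the six points from the example; the names `lift` and `classify` and the threshold value of 1 are assumptions chosen for this sketch, not part of any library.

```python
# The six data points from the example above.
stars = [(1, 2), (2, 1), (2, 3)]
diamonds = [(0, 1), (0, 3), (4, 0)]


def lift(point):
    """Add the product X*Y as a third coordinate: (X, Y) -> (X, Y, X*Y)."""
    x, y = point
    return (x, y, x * y)


stars_3d = [lift(p) for p in stars]
diamonds_3d = [lift(p) for p in diamonds]

# Hypothetical threshold on the third coordinate. Any value between 0 and 2
# works for these six points; the plane z = threshold is a linear separator.
threshold = 1


def classify(point):
    """Label a 2-D point by which side of the plane z = threshold it lands on."""
    return "star" if lift(point)[2] > threshold else "diamond"


print(stars_3d)     # [(1, 2, 2), (2, 1, 2), (2, 3, 6)]
print(diamonds_3d)  # [(0, 1, 0), (0, 3, 0), (4, 0, 0)]
```

The separating surface here is a flat plane in the lifted space, even though no straight line separates the same points in the original 2-D plane.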

This is why it is worth understanding what a kernel is in machine learning. The original dataset was 2-D and lay in a plane (like a sheet of paper). By using a kernel, we can embed this 2-D plane into a higher-dimensional space.

What is Kernel in Machine learning?

A kernel in machine learning is a function that lets a linear algorithm work on nonlinear data. Basically, we map the input dataset into a higher-dimensional space with the help of a kernel method or trick and then apply a linear classification algorithm in that higher-dimensional space. This is how we get a hyperplane that linearly separates the two categories. We can see in Figure C that this hyperplane easily distinguishes both categories.

In other terms, a kernel in machine learning is a measure of similarity between two points, and the right choice of kernel depends on the task. For example, if the task is to recognize different categories, the kernel should assign a high value to a pair of objects from the same category and a low value to a pair of objects from different categories. The thing to notice is that the kernel computes this similarity faster than explicitly comparing the points coordinate by coordinate in the higher-dimensional space.

For instance, a kernel used for text processing assigns a high value to similar strings and a low value to dissimilar strings.
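As a rough illustration of a similarity function on strings, the sketch below counts shared character bigrams. The article does not name a specific text kernel, so the bigram features and the function names `bigram_counts` and `bigram_kernel` are assumptions made for this example.

```python
from collections import Counter


def bigram_counts(text):
    """Count every pair of adjacent characters in the string."""
    return Counter(text[k:k + 2] for k in range(len(text) - 1))


def bigram_kernel(s, t):
    """Dot product of the two bigram-count vectors: larger means more similar."""
    counts_s, counts_t = bigram_counts(s), bigram_counts(t)
    # Counter returns 0 for bigrams that do not occur in t.
    return sum(counts_s[gram] * counts_t[gram] for gram in counts_s)


print(bigram_kernel("kernel", "kernels"))  # 5
print(bigram_kernel("kernel", "banana"))   # 0
```

The two similar strings share five bigrams and get a high score, while the unrelated pair shares none and scores zero.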

A kernel function takes two data points in the original space and returns a scalar equal to the dot product of their images in a higher-dimensional space. Because that scalar is computed directly from the original coordinates, we never have to construct the high-dimensional vectors, and we avoid high-dimensional computation when classifying categories. This is the magic of the kernel trick.

Let's see a simple example:

i = (i1, i2, i3);

j = (j1, j2, j3).

A simple function to address nonlinearity, where a stands for either i or j:

f(a) = (a1a1, a1a2, a1a3, a2a1, a2a2, a2a3, a3a1, a3a2, a3a3)

The kernel is K(i, j) = (i·j)^2
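To see why this kernel agrees with the dot product of the mapped vectors, the square of the dot product can be expanded term by term. The index names p and q below are introduced only for this derivation:

$$
K(i, j) = (i \cdot j)^2
= \Big(\sum_{p=1}^{3} i_p j_p\Big)\Big(\sum_{q=1}^{3} i_q j_q\Big)
= \sum_{p=1}^{3}\sum_{q=1}^{3} (i_p i_q)(j_p j_q)
= f(i) \cdot f(j)
$$

Each of the nine terms in the double sum is the product of one entry of f(i) with the matching entry of f(j).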

We will use some arbitrary data:

i = (1, 2, 3);

j = (4, 5, 6).

Then:

f(i) = (1, 2, 3, 2, 4, 6, 3, 6, 9)

f(j) = (16, 20, 24, 20, 25, 30, 24, 30, 36)

f(i)·f(j) = 16 + 40 + 72 + 40 + 100 + 180 + 72 + 180 + 324 = 1024

That is a lot of calculation, because f maps from a 3-D space to a 9-D space.

Now if we use the kernel trick, then:

K(i, j) = (4 + 10 + 18)^2 = 32^2 = 1024

Same output, with far less calculation.
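The comparison above can be checked with a short Python sketch. The helper names `explicit_map`, `dot`, and `kernel` are made up for this illustration.

```python
from itertools import product


def explicit_map(a):
    """Map a 3-D vector to the 9-D vector of all pairwise products a_p * a_q."""
    return [p * q for p, q in product(a, repeat=2)]


def dot(u, v):
    """Plain dot product of two equal-length sequences."""
    return sum(p * q for p, q in zip(u, v))


def kernel(i, j):
    """Kernel trick: square the 3-D dot product without ever building 9-D vectors."""
    return dot(i, j) ** 2


i = (1, 2, 3)
j = (4, 5, 6)

explicit_result = dot(explicit_map(i), explicit_map(j))  # works in 9-D
kernel_result = kernel(i, j)                             # works in 3-D

print(explicit_map(i))                 # [1, 2, 3, 2, 4, 6, 3, 6, 9]
print(explicit_result, kernel_result)  # 1024 1024
```

The explicit route builds two 9-D vectors before taking their dot product, while the kernel needs only one 3-D dot product and a squaring. The gap widens quickly as the input dimension grows.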

A kernel cannot be applied blindly to every high-dimensional, nonlinear classification problem; some extra effort is needed to choose it. Using a kernel for complex, higher-dimensional feature spaces makes the model more flexible, but more flexibility increases the chance of overfitting the training set, and an overfitted model performs poorly on new data. So the idea is to prefer simple kernels, which also reduce computation time and complexity.

It is hard to know in advance which kernel should be used for a specific problem. Generally, it is recommended to try several candidate kernels on a small training set and use the one that performs best on validation data.
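One possible way to carry out this comparison is sketched below with scikit-learn, which the article itself does not mention. The synthetic `make_moons` dataset, the list of candidate kernels, and the 5-fold cross-validation are all assumptions picked for illustration.

```python
from sklearn.datasets import make_moons
from sklearn.model_selection import cross_val_score
from sklearn.svm import SVC

# Small synthetic dataset that is not linearly separable (illustrative only).
X, y = make_moons(n_samples=200, noise=0.2, random_state=0)

# Kernels built into scikit-learn's SVC; other problems may need other candidates.
candidate_kernels = ["linear", "poly", "rbf", "sigmoid"]

mean_accuracy = {}
for name in candidate_kernels:
    # 5-fold cross-validation gives a validation accuracy for each kernel.
    scores = cross_val_score(SVC(kernel=name), X, y, cv=5)
    mean_accuracy[name] = scores.mean()

best_kernel = max(mean_accuracy, key=mean_accuracy.get)
print(mean_accuracy)
print("best kernel:", best_kernel)
```

Scoring on held-out folds rather than on the training data itself helps guard against picking a kernel that merely overfits.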

Benefits

Here are some key benefits of using the kernel trick in ML.

- A kernel reduces the cost of the calculation and makes it faster.

- A kernel lets us work implicitly in very high-dimensional, even infinite-dimensional, feature spaces.

- A kernel helps to identify similar objects easily.

- A kernel helps in dealing with nonlinear data by making it linearly separable in a higher-dimensional space.

- A kernel gives an output that is a scalar.

Conclusion

The kernel trick handles nonlinearity in a dataset by implicitly mapping the data into a space where it becomes linearly separable, while keeping the amount of calculation low. The kernel provides a similarity function with a scalar output, which helps in categorizing data easily. This article gives a brief introduction to why the kernel is so important and how to use the kernel trick in machine learning.
