Introduction to Python Unicode Error

Python represents text as Unicode strings, which lets a program handle characters from virtually every writing system. Ordinary values such as a directory path or a link address are strings too. When such a string is written as a literal or passed as a parameter to a function, an error can occur. Such an error is known as a Unicode error in Python. A typical case on Windows is a path such as “C:\Users\name”: inside a normal Python 3 string literal, “\U” (or “\u”) starts a Unicode escape sequence, and the characters that follow are not the hexadecimal digits Python expects, so the literal is rejected.
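
As a minimal sketch of the Windows case, assuming Python 3 and a made-up path, the snippet below compiles a line of source text that contains such a literal and captures the resulting error. It then shows two spellings of the same path that avoid the problem. The path and the variable names are illustrative only.

```python
# Source text for an ordinary string literal holding a Windows path.
# The path is hypothetical; the "\U" in it is what triggers the problem.
bad_source = r'path = "C:\Users\alice\notes.txt"'

try:
    compile(bad_source, "<example>", "exec")
    error_message = None
except SyntaxError as exc:
    # Python 3 rejects the literal: "\U" must be followed by 8 hex digits.
    error_message = str(exc)

# Two common ways to write the same path safely.
raw_path = r"C:\Users\alice\notes.txt"        # raw string: backslashes stay literal
escaped_path = "C:\\Users\\alice\\notes.txt"  # doubled backslashes

print(error_message)
print(raw_path == escaped_path)
```

Both safe spellings produce the same string, so the choice between them is mostly a matter of readability.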

Working of Unicode Error in Python with Examples

The Unicode standard assigns every character a unique number called a code point. The standard exists to remove ambiguity between characters that could otherwise be confused and lead to Unicode errors. For example, “I” can be the Roman numeral one or the capital letter “i”; the two look the same but are different characters with different meanings. Unicode avoids the ambiguity by giving each its own code point.
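
The look-alike example can be checked with a short sketch, assuming Python 3 and the standard unicodedata module. The Roman numeral is written as the escape \u2160 so that the two characters are easy to tell apart in the source; the variable names are illustrative.

```python
import unicodedata

latin_i = "I"         # capital letter i
roman_one = "\u2160"  # Roman numeral one, written as an escape

# Print the code point and official Unicode name of each character.
for ch in (latin_i, roman_one):
    print(f"U+{ord(ch):04X} {unicodedata.name(ch)}")

# The characters look alike but compare as different.
print(latin_i == roman_one)
```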

Python has two main Unicode error types: UnicodeEncodeError and UnicodeDecodeError. Python also has the concept of Unicode error handlers, which are invoked whenever a problem occurs while encoding or decoding a string or given text. To include Unicode characters in a Python program, we can put the prefix u before a string literal, which makes it a Unicode string, or use the Unicode escape sequence \u inside the literal.

Syntax:

Unicode characters in Python programs can be written as follows:

u"dfskgfkdsg"

Or

U"sakjhdxhj"

Or

"\u1232hgdsa"

In the above syntax, we can see three ways of declaring Unicode characters. The first two write a Unicode literal with the prefix “u” or “U” followed by a string containing letters and digits. The last one uses the “\u” escape sequence, which must be followed by exactly four hexadecimal digits that name a code point; the rest of the string can contain any letters or digits. To give a character by a short hex value, we use the “\x” escape sequence, which takes exactly two hex digits. An octal escape takes up to three octal digits, with 777 as the largest value.

Example #1

Now let us see an example below for declaring Unicode characters in the program.

Code:

#!/usr/bin/env python
# -*- coding: latin-1 -*-
a = u'dfsf\xac\u1234'
print("The value of the above unicode literal is as follows:")
print(ord(a[-1]))

Output:

The value of the above unicode literal is as follows:
4660

The program above shows Unicode literals in a Python program. Before using them, we declare the source file encoding, which we can see in the first two lines of the program. The default differs between Python versions: Python 2 assumes ASCII source files, while Python 3 assumes UTF-8, so the declaration is needed only when the file uses a different encoding.
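
The escape sequences used in the syntax section and in Example #1 can be checked directly. The following is a small sketch, assuming Python 3, where every string is Unicode and the u prefix is optional. The variable names are chosen only for illustration.

```python
prefixed = u"abc"  # the u prefix is accepted but optional in Python 3
plain = "abc"

hex_escape = "\xac"        # exactly two hex digits, code point U+00AC
unicode_escape = "\u1234"  # exactly four hex digits, code point U+1234
octal_escape = "\101"      # up to three octal digits, here the letter A

print(prefixed == plain)
print(ord(hex_escape), ord(unicode_escape), octal_escape)
```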

Next, we look at what happens when encoding or decoding fails: the Unicode error handler is invoked as soon as the problem arises. There are three typical error handlers in Python: strict, ignore, and replace.

The strict handler, which is the default, raises UnicodeEncodeError and UnicodeDecodeError for encoding and decoding failures, respectively.
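
To make the difference between the handlers concrete, here is a minimal sketch, assuming Python 3, that encodes the same string to ASCII under three standard handlers (strict, ignore, and replace). The sample string and variable names are illustrative only.

```python
text = "caf\u00e9"  # "café", with the accented letter written as an escape

# strict (the default): the failure surfaces as an exception.
try:
    text.encode("ascii")
    strict_outcome = "encoded"
except UnicodeEncodeError as exc:
    strict_outcome = type(exc).__name__

# ignore: characters that cannot be encoded are dropped.
ignored = text.encode("ascii", errors="ignore")

# replace: characters that cannot be encoded become "?".
replaced = text.encode("ascii", errors="replace")

print(strict_outcome, ignored, replaced)
```

The ignore and replace handlers avoid the exception at the cost of losing information, so strict is usually the safer default while debugging.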

Example #2

UnicodeEncodeError demonstration and its example.

In Python 2, str() is not told which encoding to use for the non-ASCII characters in a Unicode string, so it falls back to the default ASCII codec and throws an encoding error because it cannot encode the given Unicode string.

Code:

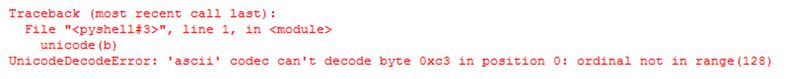

str(u'éducba')Output:

In the above program, we pass a Unicode string as the argument to the str() function. In Python 2, this function encodes its argument with the default codec, ASCII, because no other encoding is specified. ASCII is a 7-bit encoding that covers only code points 0 to 127, so it cannot represent “é”. Therefore, we get the error that is displayed in the above screenshot.

The above program can be fixed by manually encoding the Unicode string, for example with .encode('utf8'), before providing it to the str() function.

Example #3

In this program, we encode the Unicode string ourselves before calling the str() function, so under Python 2 the call no longer throws a UnicodeEncodeError.

Code:

a = u'café'
b = a.encode('utf8')
r = str(b)
print("The unicode string after fixing the UnicodeEncodeError is as follows:")
print(r)

Output:

The unicode string after fixing the UnicodeEncodeError is as follows:
café

The above program shows how to avoid UnicodeEncodeError manually by applying .encode('utf8') to the Unicode string.
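
The examples above rely on Python 2's implicit ASCII conversion in str(). As a rough Python 3 counterpart, the sketch below triggers the same UnicodeEncodeError with an explicit .encode('ascii') call and then applies the same fix of encoding to UTF-8. It is an illustration under that assumption, not a translation of the original programs, and the variable names are made up.

```python
text = "\u00e9ducba"  # "éducba", with the first letter written as an escape

# Encoding to ASCII fails on the accented letter at position 0.
try:
    text.encode("ascii")
    ascii_error = None
except UnicodeEncodeError as exc:
    ascii_error = exc

# Naming a codec that covers the character avoids the error.
utf8_bytes = text.encode("utf-8")

print(ascii_error.reason)
print(utf8_bytes)
```

The exception object records where the failure happened (its start attribute) and why (its reason attribute), which helps locate the offending character in longer strings.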

Example #4

Now we will see a demonstration of UnicodeDecodeError with an example, and how to avoid it.

Code:

a = u'éducba'
b = a.encode('utf8')
unicode(b)

Output:

In the above program, we first encode the Unicode string and then try to convert the encoded string back into Unicode characters, that is, to decode it into the string given at the start. In Python 2, unicode(b) decodes with the default ASCII codec, which cannot handle the bytes that UTF-8 produced for “é”, so we get a UnicodeDecodeError when we run the program. To avoid this error, we have to decode the byte string “b” manually.

We can fix it with the statement below, which names the encoding explicitly:

b.decode('utf8')

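
The unicode() function exists only in Python 2. A comparable sketch for Python 3, where the wrong codec has to be named explicitly to provoke the error, might look like the following. The variable names are illustrative.

```python
# UTF-8 bytes for "éducba"; the accented letter takes two bytes.
encoded = "\u00e9ducba".encode("utf-8")

# Decoding with the wrong codec fails on the first non-ASCII byte.
try:
    encoded.decode("ascii")
    decode_error = None
except UnicodeDecodeError as exc:
    decode_error = exc

# Decoding with the codec that produced the bytes restores the text.
decoded = encoded.decode("utf-8")

print(decode_error.reason)
print(decoded == "\u00e9ducba")
```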

Conclusion

In this article, we saw that in Python, Unicode literals are a string type for representing characters from many different writing systems. We also saw how UnicodeEncodeError and UnicodeDecodeError arise and how to fix them manually by encoding or decoding the string explicitly before passing it to a function.
