Updated April 6, 2023

Introduction to Kubernetes Control Plane

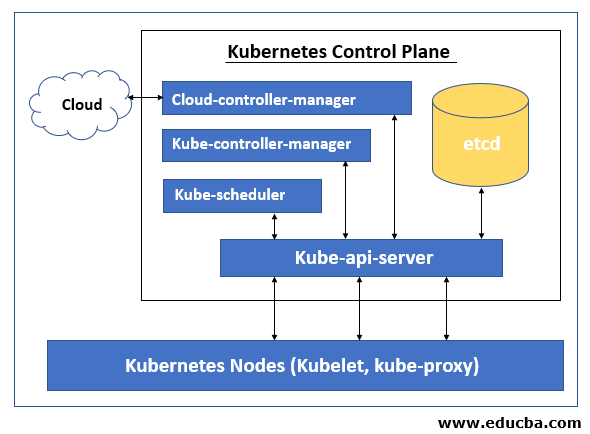

The Kubernetes control plane is responsible for maintaining the desired state of every object in the cluster. It also manages the worker nodes and the pods. It comprises five components: kube-apiserver, etcd, kube-scheduler, kube-controller-manager, and cloud-controller-manager. A node on which these components run is traditionally called a ‘master node’ (newer Kubernetes documentation calls it a control plane node). The components can run on a single node or across multiple nodes; in production, running them on multiple nodes is recommended for high availability and fault tolerance. Each control plane component has its own responsibility, but together they make global decisions about the cluster and detect and respond to cluster events generated by users or by integrated third-party applications.

How does Kubernetes Control Plane work?

Let’s look at how the Kubernetes control plane works using an example:

$ kubectl get nodes: kubectl is the command-line tool we use to interact with and manage a Kubernetes cluster. When we run this command, kubectl makes an API call over HTTPS to the cluster, where it is handled by ‘kube-apiserver’. ‘kube-apiserver’ communicates with another control plane component, the ‘etcd’ data store, fetches the data, and sends it back over HTTPS, and we see the node details in our terminal.
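To make the request path above more concrete, here is a minimal Python sketch of the same flow. It is a toy model, not how kubectl or kube-apiserver are implemented: `FAKE_ETCD`, `fake_apiserver_get`, and `fake_kubectl_get_nodes` are hypothetical names, the key layout of the dictionary is invented for the illustration, and no network or HTTPS is involved. The only real detail is the REST path `/api/v1/nodes`, which is the path used to list nodes.

```python
import json

# Stand-in for the etcd data store: a plain dict of key -> JSON string.
# The key layout is hypothetical and chosen only for this illustration.
FAKE_ETCD = {
    "/nodes/worker-1": json.dumps({"name": "worker-1", "status": "Ready"}),
    "/nodes/worker-2": json.dumps({"name": "worker-2", "status": "NotReady"}),
    "/pods/default/web": json.dumps({"name": "web"}),
}


def fake_apiserver_get(path):
    """Toy kube-apiserver handler: the only code that reads FAKE_ETCD."""
    if path != "/api/v1/nodes":
        return 404, {"message": "not found"}
    items = [
        json.loads(value)
        for key, value in sorted(FAKE_ETCD.items())
        if key.startswith("/nodes/")
    ]
    return 200, {"kind": "NodeList", "items": items}


def fake_kubectl_get_nodes():
    """Toy client: calls the API server and formats the reply as a table."""
    status, body = fake_apiserver_get("/api/v1/nodes")
    if status != 200:
        raise RuntimeError(body["message"])
    lines = ["NAME      STATUS"]
    for node in body["items"]:
        lines.append(f"{node['name']:<10}{node['status']}")
    return "\n".join(lines)


print(fake_kubectl_get_nodes())
```

Note that the client function never touches the dictionary directly. It only talks to the API server function, which mirrors the rule that everything goes through kube-apiserver.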

Components of Kubernetes Control Plane

Let’s look at the different components of the Kubernetes control plane. It has the following five components:

- kube-apiserver

- Kube-scheduler

- Kube-controller-manager

- etcd

- cloud-controller-manager

1. kube-apiserver

kube-apiserver is the main component of the control plane because all traffic goes through it. Other control plane components also connect to kube-apiserver when they need to read or write cluster data, since only kube-apiserver communicates with the ‘etcd’ data store. It services REST operations and provides the front end of the control plane, exposing the Kubernetes API through which users and other components communicate with the cluster. kube-apiserver scales horizontally: we can deploy more than one instance and balance traffic between them with a load balancer.

2. Kube-scheduler

Kube-scheduler is responsible for assigning newly created pods to the best available node in the cluster. It is also possible to schedule a pod or a group of pods on a specific node, in a specific zone, or according to node labels by specifying affinity, anti-affinity, or other constraints in the YAML file before deploying a pod or a deployment. If no node meets the specified requirements, the pod is not deployed and remains unscheduled until kube-scheduler finds a feasible node. A feasible node is a node that fulfills all the scheduling requirements of the pod.

Kube-scheduler selects a node for the pod in two steps: filtering and scoring. In filtering, kube-scheduler finds the feasible nodes by running checks such as whether a node has enough available resources to satisfy the pod's requests. Once it has the list of feasible nodes, it assigns a score to each one based on the active scoring rules and assigns the pod to the node with the highest score. If more than one node has the same highest score, it chooses one of them at random.
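The filtering and scoring steps can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: the `Node` and `Pod` classes, the label-matching check, and the scoring rule (prefer the node with the most CPU left over) are made up for the example and are not the real kube-scheduler plugins.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    free_cpu_millis: int
    free_memory_mib: int
    labels: dict = field(default_factory=dict)


@dataclass
class Pod:
    name: str
    cpu_millis: int
    memory_mib: int
    node_selector: dict = field(default_factory=dict)


def is_feasible(pod, node):
    """Filtering: enough free resources and all requested labels present."""
    fits = (node.free_cpu_millis >= pod.cpu_millis
            and node.free_memory_mib >= pod.memory_mib)
    labels_match = all(node.labels.get(k) == v
                       for k, v in pod.node_selector.items())
    return fits and labels_match


def score(pod, node):
    """Scoring: a made-up rule that prefers the node with most CPU left over."""
    return node.free_cpu_millis - pod.cpu_millis


def pick_node(pod, nodes, rng=random):
    """Return the chosen node name, or None if the pod stays unscheduled."""
    feasible = [n for n in nodes if is_feasible(pod, n)]
    if not feasible:
        return None
    best = max(score(pod, n) for n in feasible)
    top = [n for n in feasible if score(pod, n) == best]
    return rng.choice(top).name  # random tie-break among equal scores


nodes = [
    Node("node-a", 500, 1024, {"zone": "east"}),
    Node("node-b", 2000, 4096, {"zone": "east"}),
    Node("node-c", 4000, 8192, {"zone": "west"}),
]
pod = Pod("web", cpu_millis=1000, memory_mib=512, node_selector={"zone": "east"})
print(pick_node(pod, nodes))
```

Here node-a is filtered out because it lacks CPU and node-c because its zone label does not match, so only node-b is left to score. A pod that no node can satisfy gets `None`, which corresponds to staying unscheduled.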

3. Kube-controller-manager

Kube-controller-manager is responsible for running controller processes. Logically each controller is a separate process, but to reduce complexity they are all compiled into a single binary and run as a single process. Each controller ensures that the current state matches the desired state; if it does not, the controller makes the appropriate changes to the cluster to reach the desired state.

Its controllers include the node controller, replication controller, endpoints controller, and service account and token controllers.

- Node controller: It manages nodes, keeps an eye on the available nodes in the cluster, and responds if any node goes down.

- Replication controller: It ensures that the correct number of pods is running for every replication controller object in the cluster.

- Endpoints controller: It populates the Endpoints object, that is, it joins services and pods. For example, to expose a pod externally we need to join it to a service.

- Service account and token controllers: They create default accounts and API access tokens. For example, whenever we create a new namespace, these controllers create the default service account and API access token for it.
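The idea of comparing the current state with the desired state, as the replication controller does for pod counts, can be sketched as a small reconcile loop. This is a simplified illustration, not the actual controller code: `reconcile`, `apply_actions`, and the pod naming scheme are hypothetical, and a real controller watches the API server for changes instead of looping over a local list.

```python
import itertools

_ids = itertools.count(1)  # hypothetical source of unique pod name suffixes


def reconcile(desired_replicas, running_pods):
    """Return the actions needed to move the current state to the desired one."""
    diff = desired_replicas - len(running_pods)
    if diff > 0:
        return [("create", f"pod-{next(_ids)}") for _ in range(diff)]
    if diff < 0:
        # Remove the surplus pods (here: simply the last ones in the list).
        return [("delete", name) for name in running_pods[diff:]]
    return []


def apply_actions(actions, running_pods):
    """Apply create/delete actions and return the new list of running pods."""
    pods = list(running_pods)
    for verb, name in actions:
        if verb == "create":
            pods.append(name)
        else:
            pods.remove(name)
    return pods


running = ["pod-a"]
for _ in range(2):  # a real controller keeps looping for as long as it runs
    actions = reconcile(3, running)
    running = apply_actions(actions, running)
    print(actions, running)
```

On the first pass the loop creates the two missing pods. On the second pass the current state already matches the desired state, so there is nothing to do.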

4. etcd

etcd is the default data store for Kubernetes and holds all cluster data. It is a consistent, distributed, and highly available key-value store. etcd is accessed only by kube-apiserver; any other control plane component that needs cluster data has to go through kube-apiserver. etcd is not a part of Kubernetes itself. It is a separate open-source project backed by the Cloud Native Computing Foundation. We need to set up a proper backup plan for etcd so that, if something happens to the cluster, we can restore the backup and get back to business quickly.

5. cloud-controller-manager

cloud-controller-manager embeds cloud-specific control logic and lets us link our cluster to the cloud provider's API. It is a separate component that interacts only with the cloud platform. Which controllers it runs depends on the cloud provider that hosts our workload. A cluster that runs on-premises, or one installed on our own PC for learning purposes, does not have a cloud-controller-manager. It also runs three controllers in a single process: the node controller, route controller, and service controller.

- Node controller: It keeps checking the status of nodes hosted by the cloud provider. For example, if a node stops responding, it checks whether the node has been deleted in the cloud.

- Route controller: It sets up and controls routes in the underlying cloud infrastructure.

- Service controller: It creates, updates, and deletes cloud provider load balancers.

Conclusion

The Kubernetes control plane is the heart of the Kubernetes cluster. If a cluster has multiple master nodes, kube-scheduler and kube-controller-manager must be active on only one node at a time; on the other nodes they remain in standby mode. etcd is the default data store for Kubernetes; however, some Kubernetes distributions let us use a different data store if we want.
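The "one active instance, others on standby" behaviour is usually achieved through leader election. The Python sketch below models the idea with a lease that has a holder and an expiry. It is a single-process toy under stated assumptions: the `Lease` class, `try_acquire_or_renew`, the 15-second duration, and the instance names are hypothetical, and a real cluster would also need the update to be atomic across instances, which this sketch does not attempt.

```python
from dataclasses import dataclass


@dataclass
class Lease:
    holder: str = ""
    renewed_at: float = 0.0
    duration: float = 15.0  # seconds the lease stays valid without renewal


def try_acquire_or_renew(lease, candidate, now):
    """Return True if `candidate` is the leader after this attempt."""
    expired = now - lease.renewed_at > lease.duration
    if lease.holder in ("", candidate) or expired:
        lease.holder = candidate
        lease.renewed_at = now
        return True
    return False  # someone else holds a valid lease: stay on standby


lease = Lease()
# First candidate finds the lease free and becomes the leader.
print(try_acquire_or_renew(lease, "scheduler-1", now=0.0))
# Second candidate tries while the lease is still valid and stays on standby.
print(try_acquire_or_renew(lease, "scheduler-2", now=5.0))
# The leader stopped renewing; once the lease has expired the standby takes over.
print(try_acquire_or_renew(lease, "scheduler-2", now=30.0))
```

As long as the active instance keeps renewing the lease, the standby instances do nothing. If it stops renewing, for example because its node went down, another instance takes over after the lease expires.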
