Updated July 5, 2023

Introduction to Feedforward Neural Networks

A feedforward neural network is an artificial neural network in which the connections between nodes do not form a cycle. In this network, information moves in only one direction, passing through the layers until it reaches the output layer. It goes through the input layer, then the hidden layers, and finally the output layer, where we get the desired output.
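The one-directional flow described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the sigmoid activation, the 2-3-1 layer sizes, and all weight values are assumptions chosen for the example.

```python
import math

def sigmoid(z):
    """Logistic activation (an assumed choice; the article does not name one)."""
    return 1.0 / (1.0 + math.exp(-z))

def dense(inputs, weights, biases):
    """One fully connected layer: each unit computes sigmoid(w . x + b)."""
    return [
        sigmoid(sum(w * x for w, x in zip(unit_weights, inputs)) + b)
        for unit_weights, b in zip(weights, biases)
    ]

def forward(x, layers):
    """Pass x through each (weights, biases) pair in order.

    Values only ever move from one layer to the next; nothing is fed back.
    """
    activation = x
    for weights, biases in layers:
        activation = dense(activation, weights, biases)
    return activation

# Hypothetical 2-3-1 network with made-up weights.
hidden = ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1])
output = ([[0.7, -0.5, 0.2]], [0.05])
y = forward([1.0, 2.0], [hidden, output])
```

Because `forward` is a plain loop over the layers, adding or removing hidden layers only changes the list that is passed in.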

These networks are mostly used for supervised learning, where we already know the desired output for each input.

Applications of Feedforward Neural Networks

- Physiological feedforward system: Here, feedforward control is exemplified by the normal anticipatory regulation of the heartbeat before exercise, under central autonomic control.

- Gene regulation and feedforward: Here, one motif appears predominantly in all the known networks, and this motif is a feedforward system for detecting non-temporary changes in the environment.

- Automation and machine control: Feedforward control is a discipline within automation control.

- Parallel feedforward compensation with derivative: This relatively new technique changes the phase of the open-loop transfer function of a non-minimum phase system into minimum phase.

The main purpose of a feedforward network is to approximate a function. If we add feedback from the last hidden layer to the first hidden layer, the result is a recurrent neural network.

A feedforward neural network is also commonly called a multilayer perceptron. It is a network in which the directed graph describing the interconnections has no closed paths or loops. These networks have significant processing power but no internal dynamics.

To develop a feedforward neural network, we need a few components that are used to build the training algorithm.

- Optimizer: An optimizer is used to minimize the cost function. It updates the values of the weights and biases after each training cycle until the cost function reaches a minimum, ideally the global minimum. Common optimizers include the following.

- Stochastic gradient descent: An iterative method for optimizing an objective function with suitable smoothness properties.

- Adagrad

- Adam

- RMSprop
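As a rough illustration of how an optimizer updates weights and biases after each training step, here is a minimal stochastic gradient descent sketch. It fits a single linear unit `w * x + b` rather than a full network, and the learning rate, epoch count, and toy data are all assumptions made for the example.

```python
import random

def sgd_fit(samples, lr=0.05, epochs=200, seed=0):
    """Fit y = w * x + b with per-sample (stochastic) gradient steps."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    data = list(samples)
    for _ in range(epochs):
        rng.shuffle(data)  # visit the samples in a new random order each epoch
        for x, target in data:
            error = (w * x + b) - target
            # For the cost 0.5 * error**2, the gradient with respect to w
            # is error * x and the gradient with respect to b is error.
            w -= lr * error * x
            b -= lr * error
    return w, b

# Toy data generated from y = 2x + 1 (hypothetical example values).
samples = [(x, 2.0 * x + 1.0) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]
w, b = sgd_fit(samples)
```

Adagrad, Adam, and RMSprop follow the same loop but scale each step using running statistics of past gradients.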

Optimization algorithms come in two types:

- First-order optimization algorithm: The first derivative tells us whether the function is decreasing or increasing at a particular point. It gives a line that is tangent to the error surface at that point.

- Second-order optimization algorithm: The second-order derivative gives us a quadratic surface that matches the curvature of the error surface.
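The difference between the two types can be seen on a one-dimensional example. The sketch below assumes the toy function f(x) = (x - 3)^2 and a learning rate of 0.1; the gradient step uses only the slope, while the Newton step (a standard second-order method, chosen here for illustration) also uses the curvature.

```python
def grad(x):
    """First derivative of f(x) = (x - 3)**2."""
    return 2.0 * (x - 3.0)

def hess(x):
    """Second derivative of f(x) = (x - 3)**2 (constant curvature)."""
    return 2.0

def gradient_step(x, lr=0.1):
    """First-order update: move against the slope by a fixed fraction."""
    return x - lr * grad(x)

def newton_step(x):
    """Second-order update: rescale the slope by the local curvature."""
    return x - grad(x) / hess(x)

x0 = 0.0
x_first_order = gradient_step(x0)   # moves a short way toward the minimum
x_second_order = newton_step(x0)    # lands on the minimum of this quadratic
```

On an exactly quadratic function the Newton step reaches the minimum in one update; on a real error surface it is only a local approximation, and computing the curvature is usually far more expensive.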

Cost Function

A cost function is a measure of how well the neural network performed with respect to its training samples and the expected output. It may also depend on the weights and biases.

Some possible cost functions are:

- Quadratic cost

- Cross-entropy cost

- Exponential cost

- Hellinger distance
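Two of the cost functions listed above can be written out as a short sketch. The function names are hypothetical, the formulas are the common textbook forms, and the cross-entropy version assumes every output activation lies strictly between 0 and 1.

```python
import math

def quadratic_cost(output, target):
    """Quadratic cost for one sample: 0.5 * sum of squared differences."""
    return 0.5 * sum((a - y) ** 2 for a, y in zip(output, target))

def cross_entropy_cost(output, target):
    """Cross-entropy cost for one sample.

    Assumes each activation a satisfies 0 < a < 1 so the logarithms exist.
    """
    return -sum(
        y * math.log(a) + (1.0 - y) * math.log(1.0 - a)
        for a, y in zip(output, target)
    )

def mean_cost(cost_fn, outputs, targets):
    """Average a per-sample cost over a set of training samples."""
    costs = [cost_fn(a, y) for a, y in zip(outputs, targets)]
    return sum(costs) / len(costs)

# Hypothetical network outputs and expected outputs for two samples.
outputs = [[0.5], [1.0]]
targets = [[1.0], [1.0]]
average_quadratic = mean_cost(quadratic_cost, outputs, targets)
```

Both functions take only the output-layer activations and the expected output as arguments.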

A cost function should satisfy two properties:

- The cost function can be written as an average over the costs of the individual training samples.

- The cost function should not depend on any activation values of the network other than those of the output layer.
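In symbols, the two properties can be stated as follows. The notation is an assumption for illustration: n is the number of training samples, C_x is the cost for a single sample x, and a^L denotes the output-layer activations.

```latex
% Property 1: the total cost is an average of per-sample costs.
C = \frac{1}{n} \sum_{x} C_x

% Property 2: each per-sample cost depends on the network only
% through the output-layer activations a^{L}.
C_x = C_x\left(a^{L}\right)
```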

Architecture for Feedforward Neural Network

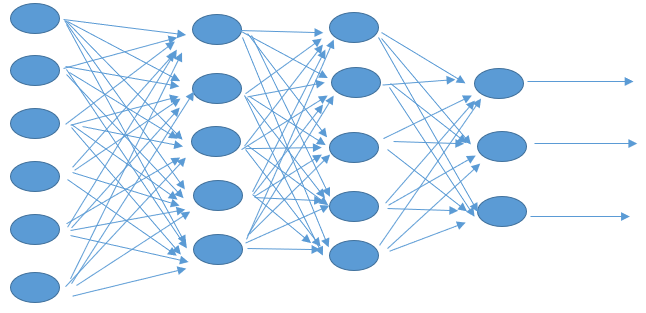

The figure above shows the architecture of a multilayer feedforward neural network. It shows the hidden layers and the hidden units of each layer, from the input layer to the output layer.

The function of the hidden neurons is to intervene between the input and the network output. Higher-order statistics are extracted by adding more hidden layers to the network.

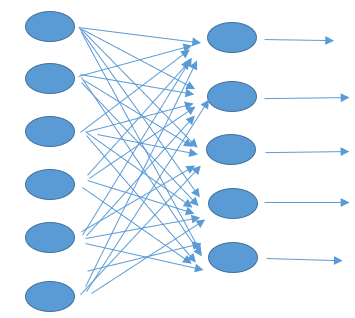

The figure above shows the architecture of a single-layer feedforward neural network. The input passes through the weights to the neurons in the output layer, which compute the output signals.

Both networks shown in the figures above are fully connected, because every neuron in each layer is connected to every neuron in the next forward layer. If any connections were missing, we would call the network partially connected.
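One way to picture the difference between fully and partially connected layers is a 0/1 connection mask over the weights. The sketch below is an assumption-laden illustration: the mask representation, the function names, and the weight values are hypothetical, and the activation function is omitted to keep the arithmetic visible.

```python
def layer_output(inputs, weights, mask):
    """Weighted sum for each output neuron.

    mask[j][i] == 0 removes the connection from input i to output neuron j.
    """
    return [
        sum(w * m * x for w, m, x in zip(w_row, m_row, inputs))
        for w_row, m_row in zip(weights, mask)
    ]

def is_fully_connected(mask):
    """True when every input is connected to every output neuron."""
    return all(m == 1 for row in mask for m in row)

inputs = [1.0, 2.0]
weights = [[0.5, 0.5], [1.0, -1.0]]   # made-up values
full_mask = [[1, 1], [1, 1]]
partial_mask = [[1, 0], [1, 1]]       # second input no longer feeds neuron 0

full = layer_output(inputs, weights, full_mask)
partial = layer_output(inputs, weights, partial_mask)
```

With the partial mask, the first output neuron no longer sees the second input, so only its value changes.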

An important feature of a neural network, and one that distinguishes it from a traditional computer, is its ability to learn.

Conclusion

In this article, we have discussed feedforward neural networks, covering:

- What a feedforward neural network is

- Applications of feedforward neural networks

- The architecture of a feedforward neural network

- Cost function
