Updated March 8, 2023

Introduction to BigQuery vs Bigtable

BigQuery vs Bigtable compares two Google Cloud data services. BigQuery is an enterprise data warehouse that enables very fast SQL queries by using the processing power of Google’s infrastructure. Bigtable is a high-performance storage system that stores large amounts of data as key-value pairs and supports high read and write throughput at low latency. The two services have a number of similarities but support different kinds of use cases in the big data ecosystem: BigQuery is an enterprise data warehouse for huge amounts of relational data, whereas Bigtable is a NoSQL wide-column database optimized for heavy read and write traffic. Here, we compare BigQuery with Bigtable and look at their key differences and similarities.
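The key-value storage model mentioned above can be sketched in a few lines of Python. This is a toy, in-memory stand-in and not the Bigtable client API: the class name `ToyWideColumnTable`, its methods, and the `family:qualifier` column naming are assumptions made purely for illustration.

```python
class ToyWideColumnTable:
    """Hypothetical in-memory model of a wide-column, key-value table."""

    def __init__(self):
        # row key -> {column name: value}
        self._rows = {}

    def write(self, row_key, column, value):
        # Storage is mutable: writing to an existing cell overwrites it.
        self._rows.setdefault(row_key, {})[column] = value

    def read_row(self, row_key):
        # Point lookup by row key; no other rows are touched.
        return dict(self._rows.get(row_key, {}))


table = ToyWideColumnTable()
table.write("user#1001", "profile:name", "Ada")
table.write("user#1001", "profile:city", "London")
table.write("user#1001", "profile:city", "Paris")  # overwrites the earlier value

row = table.read_row("user#1001")
missing = table.read_row("user#9999")
```

Each row is addressed by a single key and holds a set of named values, which is why reads and writes by key stay cheap even as the table grows.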

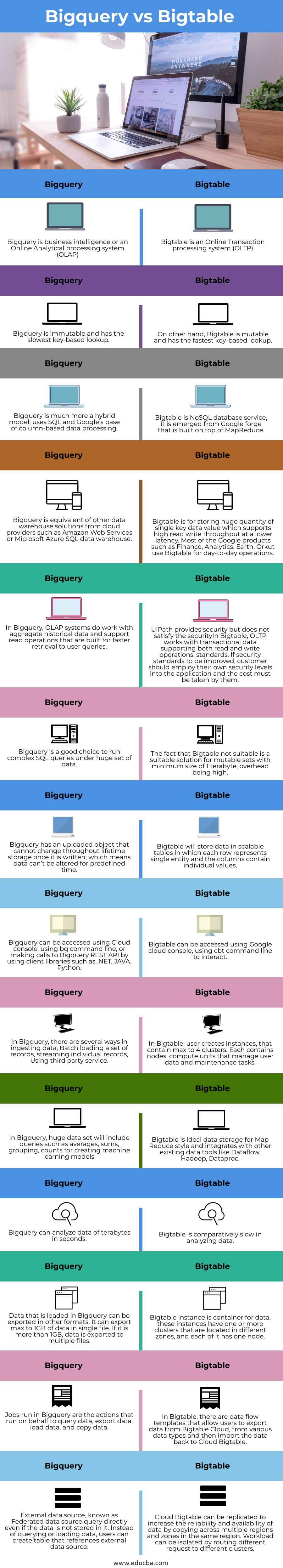

Head to Head Comparison Between BigQuery vs Bigtable (Infographics)

Below are the top 14 differences between BigQuery and Bigtable:

Comparison Table (BigQuery vs Bigtable)

| BigQuery | Bigtable |
| --- | --- |
| BigQuery is a business intelligence, or online analytical processing (OLAP), system. | Bigtable serves operational, online transaction processing (OLTP) style workloads, although its transactions are limited to a single row. |
| BigQuery storage is geared toward append-and-scan workloads rather than in-place updates, so key-based lookups are slow. | Bigtable, on the other hand, is mutable and offers fast key-based lookups. |
| BigQuery is more of a hybrid model: it exposes SQL on top of Google’s column-based data processing technology. | Bigtable is a NoSQL database service that emerged from Google and was originally built on top of the Google File System (GFS). |
| BigQuery is comparable to data warehouse solutions from other cloud providers, such as those from Amazon Web Services or Microsoft’s Azure SQL Data Warehouse. | Bigtable stores huge quantities of single-keyed data and supports high read and write throughput at low latency. Google products such as Finance, Analytics, Earth, and (formerly) Orkut have used Bigtable for day-to-day operations. |
| As an OLAP system, BigQuery works with aggregated historical data and is built for fast, read-heavy retrieval in response to user queries. | As an OLTP-style system, Bigtable works with transactional data and supports both read and write operations. |
| BigQuery is a good choice for running complex SQL queries over huge data sets. | Bigtable is a suitable solution for mutable data sets of at least 1 terabyte; for smaller data sets, the overhead is too high. |
| Data written to BigQuery is treated as largely immutable: it is meant to be appended to and queried rather than altered in place. | Bigtable stores data in scalable tables in which each row represents a single entity and the columns contain individual values. |
| BigQuery can be accessed through the Cloud console, the bq command-line tool, or calls to the BigQuery REST API via client libraries for languages such as .NET, Java, and Python. | Bigtable can be accessed through the Google Cloud console or the cbt command-line tool. |
| BigQuery offers several ways to ingest data: batch loading a set of records, streaming individual records, or using a third-party service. | In Bigtable, the user creates instances that contain up to 4 clusters. Each cluster contains nodes, the compute units that manage user data and perform maintenance tasks. |
| On huge data sets, BigQuery handles aggregate queries such as averages, sums, counts, and groupings, and can be used to create machine learning models. | Bigtable is an ideal data store for MapReduce-style processing and integrates with existing data tools such as Dataflow, Hadoop, and Dataproc. |
| BigQuery can analyze terabytes of data in seconds. | Bigtable is comparatively slow at analyzing data. |
| Data loaded into BigQuery can be exported in other formats. A single file can hold at most 1 GB of exported data; anything larger is exported to multiple files. | A Bigtable instance is a container for data. An instance has one or more clusters, located in different zones, and each cluster has at least one node. |
| Jobs are actions that BigQuery runs on the user’s behalf to query, export, load, and copy data. | Bigtable has Dataflow templates that allow users to export data from Cloud Bigtable in various formats and then import the data back into Cloud Bigtable. |
| BigQuery can query an external data source, also known as a federated data source, directly even though the data is not stored in BigQuery. Instead of loading the data, users create a table that references the external source. | Cloud Bigtable can be replicated to increase the reliability and availability of data by copying it across multiple regions, or across multiple zones in the same region. Workloads can be isolated by routing different requests to different clusters. |
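The 1 GB per-file export limit in the table above implies some simple arithmetic when planning an export. The sketch below estimates the minimum number of files for a given table size. The function name `min_export_files` is hypothetical, 1 GB is assumed to mean 2**30 bytes, and the service itself decides the actual number and size of the files, so the result is only a lower bound.

```python
# Assumption: 1 GB is taken as 2**30 bytes for this estimate.
GB = 1024 ** 3
MAX_BYTES_PER_FILE = 1 * GB


def min_export_files(table_bytes: int) -> int:
    """Return a lower bound on the number of export files for a table."""
    if table_bytes < 0:
        raise ValueError("table_bytes must be non-negative")
    # Integer ceiling division; even an empty export produces one file here.
    return max(1, -(-table_bytes // MAX_BYTES_PER_FILE))


small = min_export_files(300 * 1024 ** 2)   # 300 MB table
exact = min_export_files(1 * GB)            # exactly at the limit
large = min_export_files(5 * GB // 2)       # 2.5 GB table
```

Anything above the limit therefore has to be planned as a multi-file export rather than a single output file.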

Key Differences in BigQuery vs Bigtable

- BigQuery is a SQL-based big data warehouse, whereas Bigtable is a NoSQL database.

- Development of Bigtable began in 2004, and it was built upon the Google File System (GFS).

- BigQuery is much faster at scanning data and scales to petabytes, which makes it a good enterprise data warehouse for analytics.

- Both are hosted services. BigQuery supports user-defined functions as server-side scripts, whereas Bigtable does not.

- BigQuery supports programming languages such as Java, .NET, Objective-C, PHP, Ruby, Python, and JavaScript.

- Bigtable supports programming languages such as Go, C++, C#, JavaScript (Node.js), Python, and Java.
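The first difference in the list, SQL versus NoSQL access, can be illustrated with a small, self-contained sketch. Python's built-in `sqlite3` module stands in for a SQL warehouse and a plain dictionary stands in for a key-value store; neither is the BigQuery or Bigtable API, and the `sales` table, row keys, and values are made up for the example.

```python
import sqlite3

# SQL side: sqlite3 is only a stand-in for a warehouse that accepts SQL.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO sales VALUES (?, ?)",
    [("east", 10.0), ("east", 30.0), ("west", 5.0)],
)

# The aggregation is declared in the query; the engine does the scanning.
sql_totals = dict(
    conn.execute("SELECT region, SUM(amount) FROM sales GROUP BY region")
)
conn.close()

# Key-value side: rows are fetched by key, so the application
# has to walk the rows and aggregate them itself.
kv_rows = {
    "sale#001": {"region": "east", "amount": 10.0},
    "sale#002": {"region": "east", "amount": 30.0},
    "sale#003": {"region": "west", "amount": 5.0},
}
kv_totals = {}
for row in kv_rows.values():
    kv_totals[row["region"]] = kv_totals.get(row["region"], 0.0) + row["amount"]
```

Both sides arrive at the same totals, but the SQL version expresses the analysis in one declarative statement, while the key-value version pushes that logic into application code.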

Conclusion

With this, we conclude the topic “BigQuery vs Bigtable”. We have seen what the comparison means and reviewed the individual definitions of BigQuery and Bigtable. We have also compared their similarities and differences in the comparison table above and listed a few key differences to keep in mind. Thanks! Happy Learning!
