What are AI Chips?

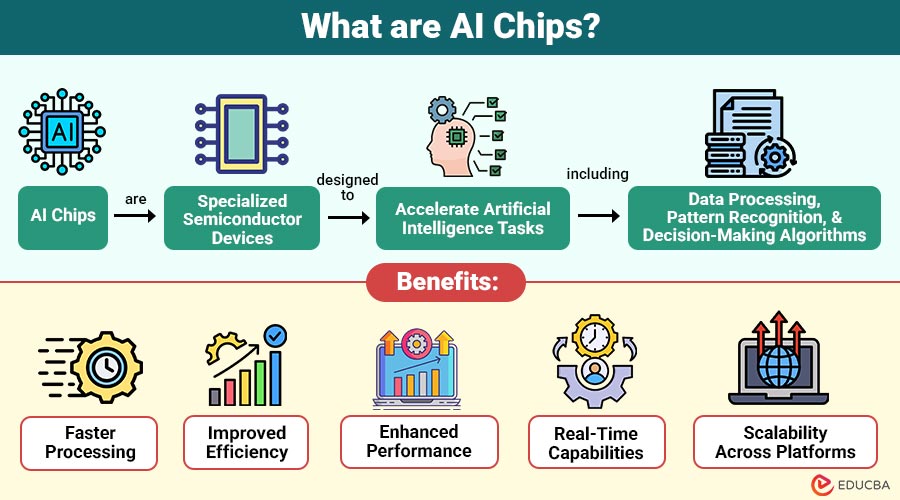

AI chips are specialized semiconductor devices designed to accelerate artificial intelligence tasks, including data processing, pattern recognition, and decision-making algorithms. These chips are built to handle large volumes of data and perform multiple operations simultaneously, which is important for training and deploying AI models.

Companies like NVIDIA, Intel, AMD, and Google have developed AI-specific processors to meet growing computational demands.

Key Takeaways:

- AI chips accelerate machine learning tasks through parallel processing and optimized computational architectures.

- Specialized AI processors improve performance, reduce latency, and enhance energy efficiency across diverse applications.

- They enable real-time intelligence in industries including healthcare, robotics, autonomous vehicles, and data centers.

- They support scalable deployment across cloud and edge environments for flexible computing solutions.

Key Features of AI Chips

Here are the key features that make them essential for modern artificial intelligence applications:

- Parallel Processing Capability: AI chips execute many calculations simultaneously, which speeds up the processing of large datasets.

- High Computational Power: They handle the complex calculations behind deep learning, which shortens AI model training time.

- Energy Efficiency: They typically use less energy per AI operation than general-purpose processors, delivering high performance within a tighter power budget.

- Low Latency: These processors handle data quickly enough to support real-time decision-making in applications such as analytics and autonomous driving.

- Scalability: They scale across cloud and edge environments, supporting diverse workloads as AI applications expand.
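
To make the parallel processing idea concrete, here is a minimal Python sketch, not a description of how any particular chip is built. It relies on the fact that each row of a matrix product can be computed independently of the others, which is the kind of work AI chips spread across many hardware units. The function names `dot` and `matmul_parallel` and the sample matrices are illustrative assumptions, and Python threads stand in for hardware units only to show that the work is independent, not to demonstrate a real speedup.

```python
from concurrent.futures import ThreadPoolExecutor


def dot(row, col):
    """Multiply-accumulate over one row/column pair."""
    return sum(a * b for a, b in zip(row, col))


def matmul_parallel(a, b, workers=4):
    """Compute the matrix product a @ b, one output row per worker task."""
    cols = list(zip(*b))  # columns of b

    def compute_row(row):
        # Each output row depends only on its own input row,
        # so rows can be computed in any order or at the same time.
        return [dot(row, col) for col in cols]

    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(compute_row, a))


# Illustrative 3x2 and 2x3 matrices (assumed values).
a = [[1, 2], [3, 4], [5, 6]]
b = [[7, 8, 9], [10, 11, 12]]
result = matmul_parallel(a, b)
print(result)  # [[27, 30, 33], [61, 68, 75], [95, 106, 117]]
```

An AI chip applies the same principle in hardware, with thousands of arithmetic units working on different parts of the matrix at once.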

Types of AI Chips

Here are the major types used across different applications and industries:

- Graphics Processing Units (GPUs): GPUs were first developed for graphics rendering, but their superior parallel processing capabilities make them ideal for training intricate AI models.

- Tensor Processing Units (TPUs): TPUs are chips developed by Google specifically for deep learning workloads, speeding up both model training and inference.

- Application-Specific Integrated Circuits (ASICs): ASICs are designed for a specific AI task, trading flexibility for higher performance and lower power consumption on that task.

- Field Programmable Gate Arrays (FPGAs): FPGAs are reconfigurable processors whose logic can be reprogrammed after manufacturing, allowing flexible and adaptable processing of AI workloads.

- Neural Processing Units (NPUs): NPUs efficiently process neural networks on smartphones and IoT devices, providing fast, smooth on-device AI.

How Do AI Chips Work?

Here is a step-by-step explanation of how they process data and generate intelligent outputs:

- Step 1 – Data Input: Data is fed into the AI chip as images, text, or numerical datasets for further processing and analysis tasks.

- Step 2 – Parallel Computation: The chip runs many calculations at the same time using parallel units, increasing speed and handling large tasks efficiently.

- Step 3 – Model Execution: Neural network models process input data through multiple layers, applying mathematical transformations to extract patterns and meaningful insights.

- Step 4 – Output Generation: The AI chip generates predictions, classifications, or decisions based on processed data, enabling intelligent responses in various applications.

- Step 5 – Optimization: Techniques such as quantization (storing values at lower numeric precision) and pruning (removing low-impact weights) reduce the computational load while largely preserving accuracy.
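
The steps above can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the way any real chip or framework implements them: the two-layer network, its weights (`W1`, `B1`, `W2`, `B2`), the input `X`, and the helper names are all made up for the example. It runs a forward pass, then applies symmetric 8-bit quantization and magnitude pruning to the first layer and checks that the predicted class does not change.

```python
def dense(x, weights, bias, relu=True):
    """Fully connected layer: y = W x + b, optionally followed by ReLU."""
    out = [sum(w * xi for w, xi in zip(row, x)) + b
           for row, b in zip(weights, bias)]
    return [max(0.0, v) for v in out] if relu else out


def quantize(weights, bits=8):
    """Symmetric quantization: floats -> signed integers plus one scale."""
    qmax = 2 ** (bits - 1) - 1  # 127 for 8 bits
    largest = max(abs(w) for row in weights for w in row)
    scale = largest / qmax if largest else 1.0
    return [[round(w / scale) for w in row] for row in weights], scale


def dequantize(q, scale):
    """Map quantized integers back to approximate float weights."""
    return [[v * scale for v in row] for row in q]


def prune(weights, threshold):
    """Magnitude pruning: zero out weights whose size is below threshold."""
    return [[w if abs(w) >= threshold else 0.0 for w in row]
            for row in weights]


# Step 1: data input (assumed example values).
X = [0.5, -1.0, 2.0]
W1 = [[0.2, -0.5, 0.1], [0.7, 0.3, -0.2]]
B1 = [0.1, 0.0]
W2 = [[1.0, -1.0], [-0.5, 0.8]]
B2 = [0.0, 0.1]


def predict(x, w1):
    """Steps 2-4: run both layers and return the highest-scoring class."""
    hidden = dense(x, w1, B1)
    scores = dense(hidden, W2, B2, relu=False)
    return scores.index(max(scores))


baseline = predict(X, W1)

# Step 5: optimize the first layer, then check the prediction again.
q_w1, scale = quantize(W1)
quantized_class = predict(X, dequantize(q_w1, scale))
pruned_class = predict(X, prune(W1, 0.15))

print(baseline, quantized_class, pruned_class)
```

In this small case all three predictions agree, which is the goal of these optimizations: less data to store and compute, with little or no change in the result. On real models the accuracy impact has to be measured rather than assumed.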

Applications of AI Chips

Here are the major applications where they play a crucial role in transforming industries:

- Autonomous Vehicles: AI chips process sensor data so that autonomous vehicles can detect objects, navigate roads, and drive safely.

- Healthcare: AI chips help healthcare systems analyze medical images, assist with diagnoses, accelerate drug discovery, and improve patient outcomes.

- Consumer Electronics: AI chips power smartphones and wearables, enabling voice recognition, personalization, and efficient on-device processing.

- Data Centers: In data centers, AI chips train models and serve predictions, supporting cloud computing, data analysis, and business applications.

- Robotics: AI chips enable robots to automate tasks, make decisions, and perform precise movements in industrial applications.

Benefits of AI Chips

Here are the key benefits that make AI chips essential for modern computing and intelligent systems:

- Faster Processing: AI chips accelerate training and inference, significantly reducing computation time for complex AI models.

- Improved Efficiency: They use hardware resources efficiently, reduce power usage and costs, and still deliver high performance for AI tasks.

- Enhanced Performance: Specialized AI chips work faster than regular processors by efficiently handling tasks like matrix calculations and deep learning.

- Real-Time Capabilities: They process data with minimal delay and make quick decisions, which systems such as robots, self-driving cars, and healthcare monitoring depend on.

- Scalability Across Platforms: They support deployment from edge devices to cloud servers, ensuring flexibility and scalability for diverse AI applications and environments.

Limitations of AI Chips

Here are the key limitations associated with AI chips:

- High Development Cost: Designing and producing AI chips requires substantial investment, specialized expertise, and complex manufacturing processes, increasing overall costs.

- Limited Flexibility: Specialized AI chips such as ASICs perform specific tasks efficiently but cannot easily adapt to changing workloads.

- Rapid Obsolescence: Rapid AI progress makes chips outdated quickly, requiring frequent upgrades and raising long-term costs.

- Heat Generation: High performance generates heat, requiring cooling systems to maintain stability and prevent damage.

- Complex Integration: Engineers face challenges integrating AI chips into existing systems, which requires compatible hardware and software expertise.

- Limited Availability: Advanced AI chips are in limited supply due to high demand and complex production, causing delays and higher costs.

Real-World Examples

Given below are some prominent examples used across industries:

- AMD Instinct MI Series: These AMD accelerators speed up heavy AI workloads and large-scale computations through parallel processing.

- Qualcomm AI Engine: The Qualcomm AI Engine in Snapdragon processors enables on-device AI features such as voice recognition, camera enhancements, and translation.

- Amazon AWS Inferentia: Amazon built AWS Inferentia, a custom chip optimized for cloud machine learning inference that delivers high performance at lower cost.

- Tesla Dojo Chip: Developed by Tesla, this AI chip is designed to efficiently train autonomous driving models using massive video datasets.

Final Thoughts

AI chips are the backbone of modern artificial intelligence systems, enabling faster, smarter, and more efficient computation. These specialized processors will become increasingly important in shaping the direction of technology as AI develops. From powering intelligent devices to transforming industries, AI chips are not just components; they are the driving force behind the next wave of innovation.

Frequently Asked Questions (FAQs)

Q1. Are AI chips expensive?

Answer: Yes, they can be costly due to their advanced design and specialized functionality.

Q2. Can AI chips work without the cloud?

Answer: Yes, AI chips in edge devices process data locally, reducing latency, improving privacy, and enabling real-time decision-making without cloud dependency.

Q3. Do AI chips require special software?

Answer: Yes, for best performance they rely on frameworks such as TensorFlow or PyTorch, together with the chip vendor's drivers and libraries.
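
As a small illustration of this software layer, the sketch below checks whether PyTorch can see a CUDA-capable accelerator and falls back to the CPU otherwise. `torch.cuda.is_available()` is part of PyTorch; the helper name `pick_device` is a hypothetical one chosen for this example, and the import is wrapped so that the snippet still runs where PyTorch is not installed.

```python
try:
    import torch  # optional: only needed to detect an accelerator
except ImportError:
    torch = None


def pick_device():
    """Return "cuda" if PyTorch reports a usable accelerator, else "cpu"."""
    if torch is not None and torch.cuda.is_available():
        return "cuda"
    return "cpu"


device = pick_device()
print(f"Running on: {device}")
```

A model or tensor would then be moved to the chosen device before training or inference, so the same code can run with or without an AI chip present.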

Q4. Are AI chips energy efficient?

Answer: Yes, they are designed to perform complex tasks with lower power consumption.
