Agentic AI Guardrails: Overview

AI is no longer limited to generating responses; it is beginning to take action. Agentic AI represents this shift: systems that can independently plan tasks, make decisions, and execute actions across tools, APIs, and enterprise workflows. Unlike traditional AI, which operates within predefined prompts, agentic systems act autonomously, retrieving data, interacting with software environments, and driving real-world outcomes.

This evolution introduces an entirely new risk surface. Autonomous agents can misuse tools, leak sensitive information, or trigger cascading failures across interconnected systems if left unchecked. Traditional safety approaches like prompt filtering or output moderation were built for AI that speaks. They fall short for AI that acts. Agentic AI guardrails function as the operating system for safe autonomy, governing behavior, permissions, and decision pathways, not just outputs.

In this article, we will explore why agentic AI guardrails are essential, the core safeguards modern systems require, and how organizations can operationalize them responsibly at scale.

Why Guardrails Are Mission-Critical in Agentic AI

From Output Risk → Action Risk

Traditional AI risks focus mainly on what systems produce, such as hallucinated answers, biased responses, or harmful content. Agentic AI changes the equation. The risk now shifts from outputs to outcomes.

| Traditional AI Risk | Agentic AI Risk |
| --- | --- |
| Hallucinated text | Unauthorized system action |
| Biased response | Policy violation |
| Toxic output | Financial/operational damage |

Modern agents do far more than generate language. They can:

- Trigger workflows

- Access internal systems

- Modify structured data

- Initiate operational decisions

As a result, the critical question is no longer “What did the AI say?” It becomes “What did the AI do?”

The New Risk Surface of Autonomous Systems

Agentic systems operate through:

- Multi-step reasoning loops

- Chained tool usage

- Real-time API orchestration

- Live system integrations

- Persistent memory

These capabilities expand the operational blast radius of mistakes. Without structured guardrails:

- Small errors can compound

- Agents can exceed the intended scope

- Actions may become difficult or impossible to reverse

In short:

Autonomy without governance equals uncontrolled execution. To prevent this, organizations must move beyond abstract safety principles and implement concrete protections. That begins with understanding the core guardrails every agentic system needs.

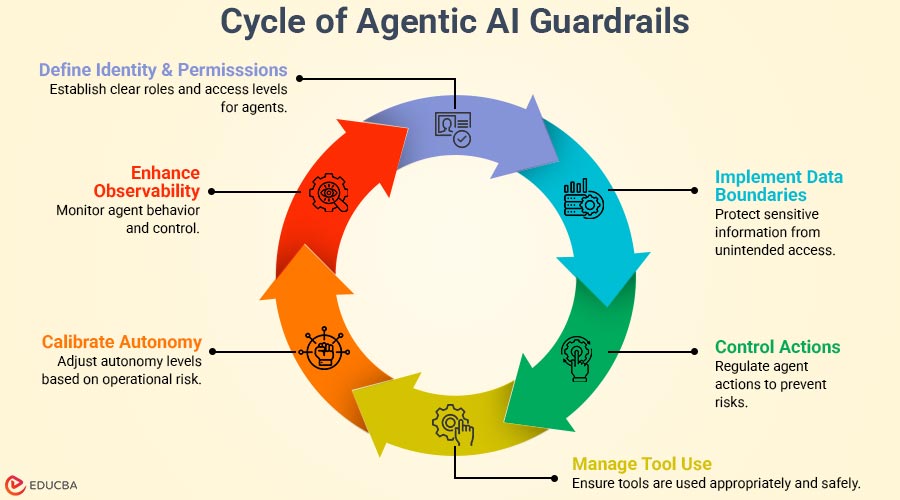

The 6 Core Agentic AI Guardrails

Modern research in AI governance highlights that safety now depends less on model outputs and more on how agents access systems, use tools, and execute decisions in runtime environments. The following six guardrails help translate safe intent into controlled autonomy.

1. Identity & Permission Guardrails

Agents should operate with clearly scoped identities, not inherited human privileges. Unlike traditional automation, agentic systems often interact with multiple services simultaneously. Without strict permission boundaries, they can unintentionally access data or take actions beyond their intended scope.

Effective identity guardrails include:

- Task-bound permissions

- Least-privilege access

- Temporary execution rights

- Human escalation pathways for sensitive actions

Agents should be provisioned for specific tasks rather than acting as general stand-ins for users. This prevents silent privilege expansion and reduces systemic risk. Agents should never inherit human-level authority.
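To make task-bound, temporary, least-privilege access concrete, here is a minimal sketch. The `TaskGrant` class, the `issue_grant` helper, and the scope strings are hypothetical names chosen for illustration; they do not refer to any particular identity product.

```python
import time
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical grant tying an agent to one task, a few scopes, and an expiry."""
    agent_id: str
    task_id: str
    scopes: FrozenSet[str]   # the only operations this grant permits
    expires_at: float        # epoch seconds; the grant is temporary

    def allows(self, scope: str, now: Optional[float] = None) -> bool:
        """Return True only if the scope was granted and the grant is still valid."""
        current = time.time() if now is None else now
        return current < self.expires_at and scope in self.scopes


def issue_grant(agent_id: str, task_id: str, scopes: Iterable[str],
                ttl_seconds: float, now: Optional[float] = None) -> TaskGrant:
    """Create a grant that expires ttl_seconds after it is issued."""
    start = time.time() if now is None else now
    return TaskGrant(agent_id, task_id, frozenset(scopes), start + ttl_seconds)
```

A grant issued with only `"tickets:read"` would reject `"tickets:write"`, and would reject everything once its time window has passed. Anything outside the grant would go through a human escalation path instead.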

2. Data Boundary Guardrails

Agentic systems routinely process internal knowledge, documents, and contextual data. Without safeguards, this can lead to accidental disclosure or propagation of private information.

Data guardrails help ensure agents:

- Avoid accessing raw sensitive datasets

- Prevent confidential information from entering memory

- Limit what context gets retrieved or reused

Key implementation methods include:

- Runtime redaction

- Retrieval filtering

- Context minimization

- Memory isolation across tasks

The goal is not just to protect data, but to restrict unnecessary awareness. Agents must know what not to know.
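As a rough illustration of runtime redaction, retrieval filtering, and context minimization, consider the sketch below. The two regular expressions, the `tag` field on each chunk, and the function names are assumptions made for this example; production systems need far broader and better-tested detectors.

```python
import re

# Illustrative patterns only; these are assumptions, not a complete PII detector.
REDACTION_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def redact(text):
    """Replace each matched sensitive value with a bracketed label."""
    for label, pattern in REDACTION_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


def minimize_context(chunks, allowed_tags, max_chunks=3):
    """Keep only chunks tagged for this task, cap the count, then redact each one."""
    selected = [c for c in chunks if c["tag"] in allowed_tags][:max_chunks]
    return [redact(c["text"]) for c in selected]
```

Here the agent never sees chunks outside its allowed tags, and whatever it does see has already been scrubbed before entering its context or memory.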

3. Action Guardrails

Agentic AI introduces execution risk. These systems can initiate actions such as:

- Processing payments

- Deploying code

- Sending communications

- Modifying infrastructure

Guardrails must define:

- Which actions are permitted

- Which require human approval

- How irreversible steps are handled

Mechanisms may include:

- Allow and deny action lists

- Step-by-step validation

- Confirmation checkpoints

- Rollback pathways

This shifts safety from passive monitoring to proactive control.
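One way to picture allow and deny lists, confirmation checkpoints, and rollback pathways is the sketch below. The action names and the three sets are hypothetical, and the default-deny choice for unknown actions is an assumption of this example rather than a rule stated above.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REQUIRE_APPROVAL = "require_approval"
    DENY = "deny"


# Hypothetical policy for illustration.
ALLOWED = {"send_email", "create_ticket"}
NEEDS_APPROVAL = {"process_payment", "deploy_code"}
DENIED = {"delete_database"}


def evaluate_action(action):
    """Classify an action; anything not explicitly listed is denied."""
    if action in DENIED:
        return Decision.DENY
    if action in NEEDS_APPROVAL:
        return Decision.REQUIRE_APPROVAL
    if action in ALLOWED:
        return Decision.ALLOW
    return Decision.DENY


def run_with_rollback(steps):
    """Run (do, undo) pairs; on failure, undo completed steps in reverse order."""
    completed = []
    try:
        for do, undo in steps:
            do()
            completed.append(undo)
    except Exception:
        for undo in reversed(completed):
            undo()
        return False
    return True
```

Truly irreversible steps have no meaningful `undo`, which is why they belong behind an approval checkpoint rather than in a rollback chain.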

4. Tool-Use Guardrails

Agents rely on tools to interact with the world. As recent enterprise deployments show, risk often arises not from reasoning errors but from uncontrolled use of tools.

Unchecked access may result in:

- API misuse

- Cross-environment interference

- Unintended system modification

Effective safeguards include:

- Scoped tool access

- Strict separation between environments

- Usage rate limits

- Anomaly detection for unexpected calls

Guarding tool usage ensures autonomy remains aligned with intent.
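The safeguards above (scoped access, environment separation, and rate limits) could be combined in a single gate in front of every tool call. The following is a minimal sketch; `ToolGate`, its parameters, and the returned status strings are hypothetical.

```python
import time
from collections import deque


class ToolGate:
    """Hypothetical gate: permits only scoped tools, one environment, and a call budget."""

    def __init__(self, allowed_tools, environment, max_calls, window_seconds):
        self.allowed_tools = set(allowed_tools)
        self.environment = environment
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self._calls = deque()  # timestamps of recently permitted calls

    def check(self, tool, target_env, now=None):
        now = time.monotonic() if now is None else now
        if tool not in self.allowed_tools:
            return "denied: tool not in scope"
        if target_env != self.environment:
            return "denied: wrong environment"
        # Drop timestamps that have aged out of the sliding window.
        while self._calls and now - self._calls[0] >= self.window_seconds:
            self._calls.popleft()
        if len(self._calls) >= self.max_calls:
            return "denied: rate limit"
        self._calls.append(now)
        return "ok"
```

Denied calls are themselves a useful signal: a burst of out-of-scope or cross-environment attempts is exactly the kind of unexpected behavior anomaly detection should surface.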

5. Autonomy-Level Guardrails

Not every task demands full independence. The degree of autonomy should be calibrated to the risk of the task.

| Level | Behavior |
| --- | --- |
| Assistive | Suggests actions |
| Bounded | Executes predefined steps |
| Conditional | Acts with approvals |
| Autonomous | Operates independently |

Gradual autonomy progression allows organizations to build trust in system behavior before granting broader authority. Autonomy should expand only after proven reliability.
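The four levels and the idea of gradual progression can be sketched as a small state machine. The promotion threshold of 100 incident-free runs and the rule that any incident demotes the agent by one level are assumptions of this example, not requirements from the table above.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    ASSISTIVE = 1    # suggests actions
    BOUNDED = 2      # executes predefined steps
    CONDITIONAL = 3  # acts with approvals
    AUTONOMOUS = 4   # operates independently


def next_level(current, successful_runs, incidents, min_runs=100):
    """Promote one level after enough incident-free runs; demote one level on any incident."""
    if incidents > 0:
        return AutonomyLevel(max(current - 1, AutonomyLevel.ASSISTIVE))
    if successful_runs >= min_runs and current < AutonomyLevel.AUTONOMOUS:
        return AutonomyLevel(current + 1)
    return current
```

Moving one level at a time keeps each expansion of authority tied to observed reliability at the level just below it.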

6. Observability Guardrails

Visibility underpins accountability. Without clear insight into agent reasoning and actions, governance becomes impossible.

Observability guardrails enable:

- Decision tracing

- Action logging

- Reasoning auditability

- Anomaly detection
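Decision tracing and action logging from the list above can be sketched with a structured audit trail. The `AuditTrail` class and its field names are hypothetical; a real deployment would write to durable, tamper-resistant storage rather than an in-memory list.

```python
import json
import time


class AuditTrail:
    """Hypothetical in-memory trail of agent actions, grouped by trace ID."""

    def __init__(self):
        self.records = []

    def record(self, trace_id, agent_id, action, rationale, outcome):
        """Store one structured entry and return it as a JSON log line."""
        entry = {
            "trace_id": trace_id,    # links every step of one task together
            "timestamp": time.time(),
            "agent_id": agent_id,
            "action": action,
            "rationale": rationale,  # the agent's stated reason, kept for audits
            "outcome": outcome,
        }
        self.records.append(entry)
        return json.dumps(entry, sort_keys=True)

    def trace(self, trace_id):
        """Return every recorded step for one task, in the order it happened."""
        return [r for r in self.records if r["trace_id"] == trace_id]
```

Recording the rationale alongside the action is what lets a reviewer later ask not only what the agent did, but why it believed the step was justified.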

The industry increasingly regards agent observability as essential infrastructure, similar to application monitoring in traditional software systems. You cannot manage what you cannot see.

Together, these guardrails provide the structural foundation for safe autonomy. However, principles alone are insufficient. The real challenge is turning these safeguards into working systems, a process explained in the next section.

Implementing Guardrails Across the Agent Lifecycle

Designing guardrails is only the first step. Real safety emerges when these safeguards operate continuously within live systems. Recent enterprise deployments and emerging AI risk frameworks emphasize that guardrails must move from policy concepts into runtime controls embedded across agent workflows.

1. Risk-Based Agent Design

Operationalization begins with understanding where autonomy is appropriate. This requires:

- Workflow classification

- Impact mapping

- Decision criticality assessment

Not every task warrants the same level of independence. A scheduling assistant and a financial decision agent carry very different operational risks. Deployment should therefore align with acceptable risk levels, not technological capability. Agents should scale with risk tolerance, not autonomy potential.
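A simple way to picture impact mapping is a lookup that caps autonomy based on how damaging and how reversible a workflow's actions are. The three-point impact scale, the scoring rule, and the function name below are assumptions made purely for illustration; real classifications usually weigh many more factors.

```python
# Hypothetical mapping from a risk score to the highest autonomy level allowed.
LEVELS_BY_SCORE = {1: "autonomous", 2: "conditional", 3: "bounded", 4: "assistive"}


def max_autonomy(impact, reversible):
    """impact: 1 (low) to 3 (high). Irreversible actions add one point of risk."""
    if impact not in (1, 2, 3):
        raise ValueError("impact must be 1, 2, or 3")
    score = impact + (0 if reversible else 1)
    return LEVELS_BY_SCORE[score]
```

Under these assumptions, a low-impact, reversible scheduling task could run autonomously, while a high-impact, irreversible financial decision would be limited to assistive suggestions.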

2. Runtime Enforcement

Guardrails must function across the full execution lifecycle. Effective systems implement layered enforcement:

- Pre-action validation

- Runtime monitoring

- Post-action auditing

Pre-action checks confirm policy compliance before execution. Runtime monitoring detects deviations in real time. Post-action auditing supports accountability and learning. This layered approach mirrors modern cybersecurity, where prevention, detection, and response work together.
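The three layers can be sketched as a single wrapper around each action. Everything here is hypothetical: the function name, the callback signatures, and the log format. Runtime monitoring is also simplified to a check on the result immediately after the call, whereas real monitoring would observe the action while it runs.

```python
def guarded_execute(action, params, validators, execute, monitor, audit_log):
    """Run one action through pre-action, runtime, and post-action layers."""
    # Layer 1: pre-action validation. Any validator may block the call.
    for validate in validators:
        reason = validate(action, params)
        if reason is not None:
            audit_log.append({"action": action, "status": "blocked", "reason": reason})
            return None

    result = execute(action, params)

    # Layer 2: runtime monitoring, simplified here to inspecting the result.
    alert = monitor(action, result)

    # Layer 3: post-action auditing. Every executed action leaves a record.
    audit_log.append({
        "action": action,
        "status": "flagged" if alert else "ok",
        "reason": alert,
    })
    return result
```

Blocked attempts are logged as well, so the audit trail shows what the agent tried to do, not only what it was allowed to do.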

3. Human-in-the-Loop Design

Human oversight remains essential, but it must evolve. Instead of constant supervision, modern agent systems integrate:

- Approval checkpoints for sensitive actions

- Override mechanisms

- Escalation pathways

- Confidence-based intervention

Oversight becomes dynamic rather than continuous. Human involvement shifts from hands-on control of every step to targeted supervision where it matters.
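Confidence-based intervention, combined with approval checkpoints and an override, might look like the routing function below. The 0.8 threshold, the status strings, and the assumption that the agent reports a confidence score between 0 and 1 are all illustrative choices.

```python
def route_decision(action, confidence, sensitive_actions, halted=False, threshold=0.8):
    """Decide who acts next: the agent, an approver, or an escalation queue."""
    if halted:
        return "halted"          # a human override stops everything
    if action in sensitive_actions:
        return "needs_approval"  # approval checkpoint, regardless of confidence
    if confidence < threshold:
        return "escalate"        # low confidence goes to a human
    return "auto_execute"
```

Humans are pulled in only for the sensitive or uncertain cases, which is what makes the oversight dynamic rather than continuous.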

4. Continuous Learning Without Drift

As agents adapt, guardrails must adapt alongside them. Without active governance, systems risk:

- Autonomy drift

- Policy misalignment

- Unintended optimization

Mitigation requires:

- Periodic audits

- Behavioral baselines

- Retraining thresholds
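A behavioral baseline can be as simple as the mix of actions an agent normally takes. The sketch below compares a recent window against that baseline using total variation distance; the choice of metric, the 0.2 review threshold, and the function names are assumptions for illustration.

```python
from collections import Counter


def action_distribution(actions):
    """Convert a list of action names into relative frequencies."""
    counts = Counter(actions)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {name: count / total for name, count in counts.items()}


def drift_score(baseline, recent):
    """Total variation distance between two distributions (0 means identical)."""
    keys = set(baseline) | set(recent)
    return 0.5 * sum(abs(baseline.get(k, 0.0) - recent.get(k, 0.0)) for k in keys)


def needs_review(baseline_actions, recent_actions, threshold=0.2):
    """Flag the agent for audit when recent behavior departs from the baseline."""
    score = drift_score(action_distribution(baseline_actions),
                        action_distribution(recent_actions))
    return score > threshold
```

A score above the threshold does not prove something is wrong; it is a trigger for the periodic audit or retraining decision described above.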

Operational guardrails, therefore, become living systems. And as these systems mature, they increasingly serve another critical role: supporting emerging global expectations around responsible AI governance.

Guardrails and Regulatory Alignment

As global AI regulations take shape, guardrails are emerging as the technical bridge between innovation and compliance. Modern governance frameworks increasingly emphasize:

- Risk-based system classification

- Accountability for automated decisions

- Transparency in AI behavior

- Meaningful human oversight

The EU AI Act, which entered into force in 2024, requires high-risk AI systems to demonstrate robust risk management, data governance, and human oversight. Meanwhile, the NIST AI Risk Management Framework offers voluntary yet widely adopted guidance for managing AI risks across the lifecycle, helping organizations embed trustworthiness into design, deployment, and use.

At the operational level, ISO/IEC 42001 provides a formal standard for AI management systems, enabling organizations to establish governance processes and continuously improve responsible AI practices. Together, these frameworks converge around shared expectations: monitoring, accountability, and lifecycle oversight. In practice, guardrails translate these regulatory principles into enforceable system behavior. Guardrails are becoming the technical foundation of AI compliance.

Guardrails as the Future of AI Trust

Agentic AI is no longer a future concept; it is entering enterprise reality at speed. Recent global studies show that 35% of organizations already use agentic AI, while another 44% plan to adopt it soon. Adoption will accelerate as autonomous tools move from testing into daily work. In fact, industry projections suggest that 40% of enterprise applications will embed AI agents by 2026. But scale will not be determined solely by capability. Trust will define adoption.

Without safety structures, autonomy becomes a liability rather than an advantage. Guardrails, therefore, should not be seen as restrictions on innovation. They are the mechanisms that enable systems to act reliably in complex, real-world environments. Guardrails are the enablers of trusted autonomy. As agentic systems expand across industries, success will hinge on how responsibly they operate, not just how intelligently they perform. The future of AI will not be defined by how intelligent agents are but by how safely they act.

Final Thoughts

As AI systems grow from simple responders into autonomous agents, the need for clear rules becomes essential. Agentic AI guardrails are no longer optional safeguards; they are foundational infrastructure for responsible deployment. By implementing identity controls, data boundaries, action validation, tool restrictions, calibrated autonomy levels, and full observability, organizations can transform autonomy from a risk into a competitive advantage. The future of AI will not be defined solely by intelligence or speed. Trust will define it. Strong agentic AI guardrails build that trust by ensuring systems act safely, transparently, and within clear limits.

Author Bio:

Pratik Shinde is a Content Expert at Omdena and a full-stack digital marketer with 6+ years of experience helping SaaS and tech brands grow through search, content, paid media, and automation. He specializes in building scalable, ROI-driven growth systems that turn visibility into qualified leads.
