What is Adversarial AI?

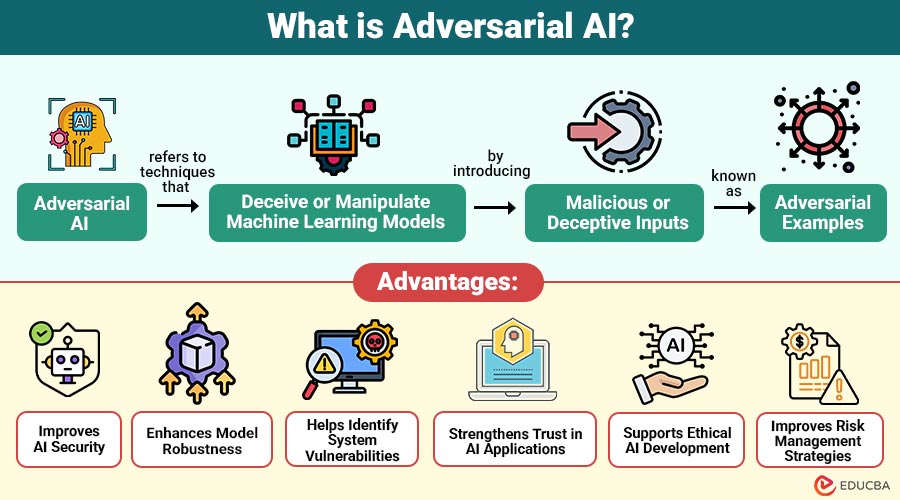

Adversarial AI refers to techniques that deceive or manipulate machine learning models by introducing malicious or deceptive inputs, known as adversarial examples. These inputs are designed to appear normal to humans but cause AI systems to make incorrect predictions or decisions.

For example, a slightly altered image of a stop sign might still look normal to a human but could be misclassified by an AI model as a speed limit sign, leading to potentially dangerous outcomes.

Table of Contents:

- Meaning

- Importance

- Working

- Types

- Key Components

- Techniques

- Advantages

- Challenges

- Real-World Examples

- Best Practices

- Future Trends

Key Takeaways:

- Adversarial AI exploits vulnerabilities in machine learning models by crafting malicious input data.

- Studying it improves AI security, robustness, and trust by helping teams identify and mitigate adversarial threats.

- Adversarial AI works through evasion, poisoning, inversion, and model extraction attacks.

- Adversarial AI impacts critical systems such as healthcare, finance, and autonomous vehicles, posing serious risks.

Importance of Adversarial AI

Here are key reasons why understanding adversarial AI is important for building secure and reliable artificial intelligence systems.

1. Protects Sensitive Data

Adversarial testing helps safeguard confidential information by exposing vulnerabilities before attackers find them, preventing data leaks and reducing the risk of unauthorized access or exposure.

2. Prevents System Failures

It minimizes risks of unexpected system failures by detecting adversarial threats early, ensuring AI systems operate accurately and consistently under diverse conditions.

3. Ensures Trust in AI Systems

By improving security and transparency, work on adversarial robustness builds user confidence that systems will behave reliably and ethically across real-world applications and environments.

4. Enhances Robustness and Reliability

Adversarial testing and training strengthen model resilience against attacks, improving stability, performance, and reliability even when models are exposed to malicious inputs or unpredictable scenarios.

5. Improves Model Generalization

Training on adversarial examples exposes models to more diverse patterns, which can improve generalization and performance on unseen data across different real-world scenarios.

6. Supports Regulatory Compliance

It helps organizations meet data protection and AI governance regulations by ensuring models are secure, transparent, and resistant to adversarial threats.

How Does Adversarial AI Work?

Adversarial AI exploits vulnerabilities in machine learning models, particularly deep learning systems. These models rely on patterns in data, and attackers can manipulate these patterns in subtle ways.

Key Mechanisms:

1. Adversarial Examples

Small, carefully crafted changes are made to input data, causing models to misclassify outputs while the changes remain almost invisible to humans.

2. Model Evasion

Attackers design inputs that appear normal but exploit vulnerabilities, enabling them to bypass AI systems such as spam filters or fraud-detection mechanisms.

3. Data Poisoning

Attackers add corrupted or mislabeled samples to the training data, so the model learns wrong patterns and produces unreliable or biased results.

4. Model Extraction

Attackers repeatedly query a model, analyze its responses, and reconstruct a similar version, potentially stealing intellectual property or sensitive model behavior.
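To make the first mechanism concrete, here is a minimal sketch of a gradient-sign perturbation (in the style of the fast gradient sign method) against a tiny logistic model. The weights, input values, and epsilon are made up for illustration and do not come from any real system.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict_proba(weights, bias, x):
    """Probability of class 1 under a linear logistic model."""
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

def sign(value):
    """Return -1, 0, or 1 depending on the sign of value."""
    return (value > 0) - (value < 0)

def gradient_sign_perturb(weights, bias, x, true_label, epsilon):
    """Move each feature by epsilon in the direction that increases the loss."""
    p = predict_proba(weights, bias, x)
    # For a logistic model with cross-entropy loss, the gradient of the
    # loss with respect to the input is (p - y) * w.
    grad = [(p - true_label) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]

# Hypothetical toy model and input (assumptions for this sketch).
weights = [2.0, -1.0, 0.5]
bias = 0.0
x = [0.3, 0.2, 0.1]

x_adv = gradient_sign_perturb(weights, bias, x, true_label=1, epsilon=0.2)
clean_prob = predict_proba(weights, bias, x)      # above 0.5: class 1
adv_prob = predict_proba(weights, bias, x_adv)    # below 0.5: class 0

print(f"clean: {clean_prob:.3f}, adversarial: {adv_prob:.3f}")
```

With these toy values, no feature moves by more than 0.2, yet the predicted class flips from 1 to 0. Real attacks on deep models follow the same idea but compute the gradient through the whole network.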

Types of Adversarial Attacks

Here are the main types of adversarial attacks used to exploit weaknesses in machine learning systems.

1. Evasion Attacks

Evasion attacks occur during inference when attackers subtly modify input data so machine learning models misclassify it without altering its intended meaning.

2. Poisoning Attacks

Poisoning attacks involve injecting malicious or misleading data into training datasets, causing models to learn incorrect patterns and significantly degrade overall performance.

3. Model Inversion Attacks

Model inversion attacks attempt to reconstruct sensitive training data by exploiting model outputs, revealing private information such as images, text, or personal attributes.

4. Membership Inference Attacks

Membership inference attacks aim to determine whether specific data points were used during training by analyzing model responses and confidence scores.

5. Transfer Attacks

Transfer attacks reuse adversarial examples crafted for one model to successfully attack another model, even when architectures, datasets, or parameters differ significantly.
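As a rough illustration of membership inference, the sketch below uses the simplest possible rule: guess that a record was in the training set whenever the model is very confident about it. The confidence scores, the ground-truth flags, and the 0.9 threshold are hypothetical values chosen for this example; practical attacks usually calibrate the threshold with shadow models.

```python
def infer_membership(confidences, threshold=0.9):
    """Guess 'member' when the model's top confidence reaches the threshold."""
    return [c >= threshold for c in confidences]

def attack_accuracy(guesses, is_member):
    """Fraction of records for which the membership guess was correct."""
    correct = sum(g == m for g, m in zip(guesses, is_member))
    return correct / len(is_member)

# Hypothetical top-class confidences returned by a target model.
confidences = [0.99, 0.97, 0.95, 0.62, 0.71, 0.93]
# Ground truth, known here only because this is a toy example.
is_member = [True, True, True, False, False, False]

guesses = infer_membership(confidences)
accuracy = attack_accuracy(guesses, is_member)
print(guesses, round(accuracy, 3))
```

The rule works here because models tend to be more confident on data they were trained on. That gap is what defenses such as regularization try to narrow.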

Key Components of Adversarial AI

Here are the components that define how adversarial AI systems and attacks function.

1. Target Model

The target model is the machine learning system under attack, designed to perform predictions, classifications, or decisions based on input data.

2. Adversary

The adversary is an attacker or entity that intentionally exploits weaknesses in AI systems to manipulate outputs or gain unauthorized advantages.

3. Attack Strategy

Attack strategy refers to the specific technique used to deceive models, such as perturbations, data poisoning, or carefully crafted adversarial examples.

4. Defense Mechanism

Defense mechanisms are methods and techniques implemented to detect, mitigate, and prevent adversarial attacks, ensuring the robustness, security, and reliability of models.

Techniques to Defend Against Adversarial AI

Here are key techniques for improving the security and robustness of AI systems against adversarial attacks.

1. Adversarial Training

Adversarial training involves augmenting datasets with adversarial examples, enabling models to learn robust patterns and effectively resist malicious input manipulations.

2. Input Validation

Input validation checks incoming data for unusual or suspicious patterns. This stops harmful data from reaching and misleading models.

3. Model Regularization

Model regularization techniques reduce sensitivity to minor input variations, helping models generalize better and remain stable against adversarial perturbations and noise.

4. Defensive Distillation

Defensive distillation trains a second model on the softened output probabilities of the first. This smooths the model's decision boundaries and makes it harder for attackers to use gradients to craft effective adversarial examples.

5. Ensemble Methods

Ensemble methods combine predictions from multiple models, reducing reliance on a single system and improving resistance against adversarial attacks or manipulations.
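The ensemble idea can be sketched with a simple majority vote. The three "models" below are hypothetical threshold rules standing in for independently trained classifiers, and the inputs are invented for illustration.

```python
from collections import Counter

def majority_vote(models, x):
    """Return the label predicted by most models for input x."""
    votes = [model(x) for model in models]
    label, _count = Counter(votes).most_common(1)[0]
    return label

# Hypothetical stand-ins for three separately trained classifiers,
# each relying on a different feature of the input.
def model_a(x):
    return int(x[0] > 0.5)

def model_b(x):
    return int(x[1] > 0.5)

def model_c(x):
    return int(x[2] > 0.5)

models = [model_a, model_b, model_c]

clean_input = [0.8, 0.9, 0.7]
# An input perturbed so that only the first feature crosses model_a's boundary.
perturbed_input = [0.4, 0.9, 0.7]

print(majority_vote(models, clean_input))      # all three models agree
print(model_a(perturbed_input))                # model_a alone is fooled
print(majority_vote(models, perturbed_input))  # the ensemble is not
```

An ensemble only helps when its members fail on different inputs. Because adversarial examples often transfer between models, voting should be treated as one layer of defense rather than a guarantee.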

Advantages of Adversarial AI

Here are the advantages of adversarial AI and its impact on modern machine learning systems.

1. Improves AI Security

Studying adversarial AI improves security. It helps find threats, reduce risks, and protect machine learning models from attacks.

2. Enhances Model Robustness

It enhances model robustness by enabling systems to handle noisy, manipulated, or unexpected inputs without significant performance or accuracy degradation.

3. Helps Identify System Vulnerabilities

Understanding adversarial techniques helps uncover hidden vulnerabilities in systems, allowing developers to address weaknesses and improve the overall resilience of AI systems.

4. Strengthens Trust in AI Applications

By improving reliability, security, and transparency, adversarial research builds user trust and helps ensure AI applications are dependable across critical industries and real-world scenarios.

5. Supports Ethical AI Development

Studying adversarial AI promotes ethical development by identifying biases, preventing misuse, and ensuring AI systems operate fairly and responsibly.

6. Improves Risk Management Strategies

It improves risk management by anticipating possible attacks, which helps organizations prepare and reduce losses.

Challenges of Adversarial AI

Here are the challenges faced in building and maintaining secure AI systems against adversarial threats.

1. Evolving Attack Techniques

Attackers constantly innovate new adversarial methods, making it difficult for security systems to keep pace and ensure long-term model protection.

2. Computational Complexity

Defending against adversarial attacks often demands high computational power, increasing costs, slowing performance, and requiring advanced infrastructure for effective implementation.

3. Lack of Standardization

There is no standard way to test adversarial robustness, so results are inconsistent and the security of different models is hard to compare.

4. Trade-off Between Accuracy and Security

Enhancing model robustness against adversarial attacks can reduce prediction accuracy, forcing developers to balance security measures with overall model performance.

5. Limited Explainability

Adversarial defenses often lack transparency, making it difficult to understand how models respond to attacks and why certain predictions fail.

6. Data Dependency Issues

Adversarial robustness heavily depends on training data quality, and biased or insufficient datasets can weaken defenses against sophisticated adversarial inputs.

Real-World Examples

Here are practical examples that show how adversarial AI affects different industries and systems in real-world scenarios.

1. Autonomous Vehicles

Adversarial attacks can alter road signs or lane markings, causing self-driving cars to misinterpret their environment and make unsafe navigation decisions.

2. Facial Recognition Systems

Special accessories or small image changes can fool facial recognition systems. This can cause wrong identification or allow unauthorized access.

3. Healthcare AI

Manipulated medical images can deceive AI systems, leading to incorrect diagnoses, delayed treatments, or serious risks to patient health outcomes.

4. Financial Fraud Detection

Attackers craft transactions that look legitimate to detection models, letting them evade fraud checks and cause financial loss.

Best Practices for Handling Adversarial AI

Here are essential guidelines that help strengthen AI systems against adversarial threats and improve overall model security.

1. Regularly Update and Retrain Models

Continuously update and retrain AI models with new data to adapt against evolving adversarial attacks and maintain strong system performance.

2. Use Secure and Clean Datasets

Keep datasets clean, checked, and safe from changes. This prevents data poisoning and ensures reliable model training.

3. Implement Monitoring and Anomaly Detection

Deploy real-time monitoring and anomaly detection systems to identify unusual inputs or behaviors, enabling quick response to potential adversarial threats.

4. Conduct Penetration Testing on AI Systems

Regularly test AI systems by simulating attacks. This helps find weaknesses and improve protection.

5. Combine AI with Traditional Security Methods

Integrate AI-based defenses with traditional cybersecurity techniques to create layered security, effectively improving resilience against diverse adversarial attack vectors.
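As one way to picture the monitoring practice above, the sketch below flags inputs whose value lies far from what the model saw in trusted reference data, using a plain z-score. The class name `InputMonitor`, the reference values, and the threshold of 3 standard deviations are assumptions made for this example, not a standard API.

```python
import statistics

class InputMonitor:
    """Flags values that deviate strongly from trusted reference data."""

    def __init__(self, reference_values, z_threshold=3.0):
        self.mean = statistics.mean(reference_values)
        self.stdev = statistics.stdev(reference_values)
        self.z_threshold = z_threshold

    def is_anomalous(self, value):
        # With zero spread, any value other than the mean is unusual.
        if self.stdev == 0:
            return value != self.mean
        z_score = abs(value - self.mean) / self.stdev
        return z_score > self.z_threshold

# Hypothetical feature values observed in trusted, validated traffic.
reference = [10.0, 12.0, 11.0, 9.0, 10.5, 11.5, 10.0, 9.5]
monitor = InputMonitor(reference)

print(monitor.is_anomalous(10.8))  # close to the reference data
print(monitor.is_anomalous(25.0))  # far outside the reference data
```

A check like this catches crude out-of-range inputs cheaply. Subtle adversarial perturbations are designed to stay within normal ranges, so it complements rather than replaces model-level defenses.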

Future Trends in Adversarial AI

Here are key emerging developments that are shaping how adversarial AI will evolve and be managed in the future.

1. AI-Driven Defense Systems

AI models will detect and stop attacks in real time. This helps respond faster and improves overall security.

2. Explainable AI (XAI)

Improvements in explainable AI will make systems more transparent. This helps organizations understand decisions, find weaknesses, and build more trustworthy AI.

3. Regulatory Frameworks

Governments and organizations may introduce standardized regulations and policies to ensure the security, accountability, and ethical use of AI across industries globally.

4. Automated Threat Detection

Advanced AI systems will find, analyze, and stop attacks automatically. This reduces human effort and improves speed and accuracy.

Final Thoughts

Adversarial AI presents both risk and opportunity in modern artificial intelligence. It reveals system weaknesses while encouraging stronger, more resilient model design. As adoption grows, addressing adversarial threats becomes critical for safety and reliability. Organizations investing in proactive defenses can reduce risks, enhance trust, and fully leverage AI’s transformative potential across industries and real-world applications.

Frequently Asked Questions (FAQs)

Q1. Can adversarial attacks be prevented?

Answer: While not completely preventable, they can be mitigated using robust training and defense techniques.

Q2. Which industries are most affected?

Answer: Healthcare, finance, cybersecurity, and autonomous systems are highly impacted.

Q3. How do companies detect adversarial attacks?

Answer: Companies use anomaly detection, input validation, and monitoring systems to identify suspicious or malicious inputs, and they use adversarial training to make models harder to fool.
