Updated April 5, 2023

Introduction to PyTorch Tensors

This article provides an outline of PyTorch tensors. Facebook released PyTorch as an open-source framework in 2017, and it has since become very popular among developers and the research community. PyTorch makes building deep neural network models easier by providing a simple programming model and fast computation. Its central feature is the tensor: a one-dimensional or multi-dimensional array whose elements all share a single data type.

Tensors are also used in the TensorFlow framework, which Google released. A tensor is essentially the counterpart of a NumPy array that can also be processed on GPUs or TPUs when training neural network models. PyTorch includes libraries for computing gradients through back-propagation and for gradient-descent optimization of feed-forward and other networks. PyTorch also integrates closely with Python libraries such as NumPy and SciPy.

PyTorch Tensors Dimensions

- In linear algebra operations, the data may be in vector, matrix or N-dimensional form. A vector is a one-dimensional tensor, a matrix is a two-dimensional tensor, and a color image is a 3-dimensional tensor in which the RGB channels form one dimension. A PyTorch tensor is a multi-dimensional array, like a NumPy array, and it acts as a container for numbers. In a deep learning model, every linear algebra operation is performed on tensors and transforms one tensor into a new tensor.

- PyTorch tensors were developed even though NumPy arrays already offered multi-dimensional arrays, because tensors have the advantage of running on a GPU. Tensors also integrate with Python libraries such as NumPy, scikit-learn and pandas. Tensors store data in the form of an array, and elements can be accessed by index. Tensors can represent many types of data with arbitrary dimensions, such as images, audio or time-series data.

Example:

Code:

import torch

tensor_1 = torch.rand(3, 3)

Here a random tensor of size 3*3 is created.
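The dimensions described above can be inspected directly. The following is a minimal sketch; the variable names and the 32*32 image size are illustrative assumptions, not values from the article.

```python
import torch

vector = torch.tensor([1.0, 2.0, 3.0])  # 1-dimensional tensor
matrix = torch.zeros(2, 3)              # 2-dimensional tensor
# Hypothetical RGB image laid out as (channels, height, width)
image = torch.rand(3, 32, 32)           # 3-dimensional tensor

for name, t in [("vector", vector), ("matrix", matrix), ("image", image)]:
    # ndim is the number of dimensions, shape is the size along each one
    print(name, t.ndim, tuple(t.shape))

# Elements are accessed by index, as with a NumPy array
first = vector[0].item()
```

Here `ndim` reports the number of dimensions and `shape` the size along each of them, which is usually the first thing to check when two tensors refuse to combine.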

How to Create PyTorch Tensors Using Various Methods

Before creating any tensor, import the torch package using the command below. The following sections then create PyTorch tensors in several different ways:

Code:

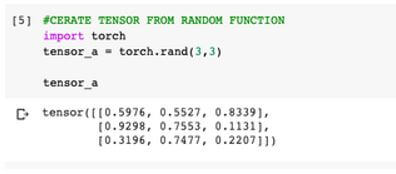

import torch

1. Create a tensor from pre-existing data in list or sequence form using torch.tensor().

The example below creates a 2*3 matrix with the values 0 and 1.

Syntax:

torch.tensor(data, dtype=None, device=None, requires_grad=False, pin_memory=False)

Code:

import torch

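# torch.tensor matches the syntax above; float32 keeps the floating-point values
tensor_b = torch.tensor([[0, 0, 0], [1, 1, 1]], dtype=torch.float32)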

tensor_b

Output:

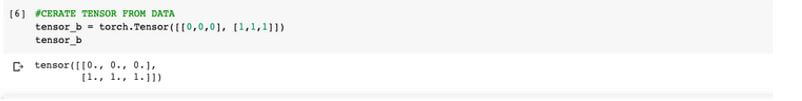
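Both the `torch.tensor` function and the `torch.Tensor` constructor accept nested lists, but they choose the data type differently. The sketch below illustrates this under default PyTorch settings; the variable names are illustrative.

```python
import torch

data = [[0, 0, 0], [1, 1, 1]]

inferred = torch.tensor(data)                       # dtype inferred from Python ints
explicit = torch.tensor(data, dtype=torch.float32)  # dtype set explicitly
legacy = torch.Tensor(data)                         # constructor uses the default float dtype

print(inferred.dtype, explicit.dtype, legacy.dtype)
```

Passing `dtype` explicitly is the safer habit when the tensor will later be combined with floating-point weights.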

2. Create an n*m tensor using a random function in torch.

Syntax:

torch.rand(data_size, dtype=None, layout=torch.strided, device=None)

Code:

import torch

tensor_a = torch.rand((3, 3))

tensor_a

Output:

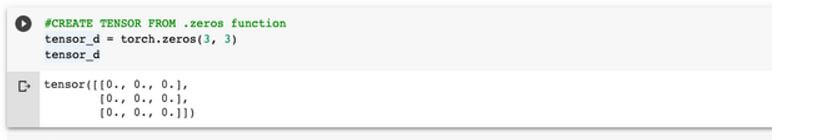
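PyTorch offers more than one random function. As a rough sketch, `torch.rand` draws from a uniform distribution on [0, 1) while `torch.randn` draws from a standard normal distribution, and `torch.manual_seed` makes the values reproducible. The seed value 0 is an arbitrary choice for illustration.

```python
import torch

torch.manual_seed(0)          # fix the seed so the run is repeatable
uniform = torch.rand(3, 3)    # uniform values in [0, 1)
normal = torch.randn(3, 3)    # standard normal values, may be negative

torch.manual_seed(0)          # resetting the seed replays the same sequence
repeat = torch.rand(3, 3)

print(torch.equal(uniform, repeat))
```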

3. Creating a tensor from numerical types using functions such as ones and zeros.

Syntax:

torch.zeros(data_size, dtype=None, layout=torch.strided, device=None)

Code:

tensor_d = torch.zeros(3, 3)

tensor_d

Output:

In the above, torch.zeros() is used to create a 3*3 matrix with all values set to 0 (zero).

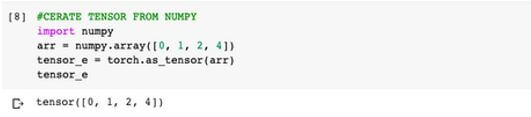
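The same pattern applies to `torch.ones`, and the `_like` variants copy the shape and data type of an existing tensor. A small sketch, with illustrative variable names:

```python
import torch

zeros = torch.zeros(3, 3)                   # 3*3 tensor of 0.0
ones = torch.ones(2, 4, dtype=torch.int64)  # 2*4 tensor of integer 1
like = torch.zeros_like(ones)               # zeros with the shape and dtype of `ones`

print(zeros.shape, ones.sum().item(), like.dtype)
```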

4. Creating a PyTorch tensor from a NumPy array.

To create a tensor from NumPy, create an array using NumPy and then convert it to a tensor using the torch.as_tensor() function.

Syntax:

torch.as_tensor(data, dtype=None, device=None)

Code:

import numpy

import torch

arr = numpy.array([0, 1, 2, 4])

tensor_e = torch.as_tensor(arr)

tensor_e

Output:

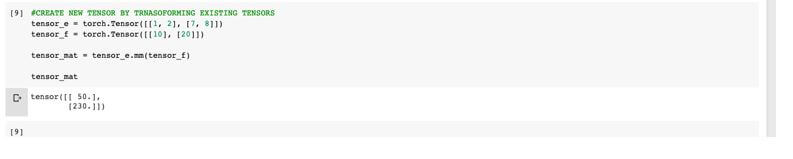
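One detail worth knowing about this conversion: when no data type or device change is needed, `torch.as_tensor` reuses the NumPy array's memory, whereas `torch.tensor` always copies. The sketch below demonstrates the difference on the CPU; the value 99 is arbitrary.

```python
import numpy as np
import torch

arr = np.array([0, 1, 2, 4])

shared = torch.as_tensor(arr)  # shares memory with `arr` on the CPU
copied = torch.tensor(arr)     # independent copy of the data

arr[0] = 99                    # modify the NumPy array in place

# The shared tensor sees the change, the copy does not
print(shared[0].item(), copied[0].item())
```

Sharing avoids a copy for large arrays, but it also means in-place edits on either side are visible on the other.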

5. Creating new tensors by applying a transformation to existing tensors.

Here is a basic tensor operation that performs a matrix product and returns a new tensor.

Code:

tensor_e = torch.Tensor([[1, 2], [7, 8]])

tensor_f = torch.Tensor([[10], [20]])

tensor_mat = tensor_e.mm(tensor_f)

tensor_mat

Output:

The result is a 2*1 tensor containing 50 and 220 (1*10 + 2*20 and 7*10 + 8*20).

Parameters:

The parameters used in the syntax above are:

- data: Data for the tensor, such as a list, sequence or NumPy array.

- dtype: Data type of the returned tensor.

- device: Device on which the returned tensor is placed, such as the CPU or a CUDA device.

- requires_grad: A boolean (True or False) that controls whether autograd records operations on the returned tensor.

- data_size: Shape of the returned tensor.

- pin_memory: If set to True, the returned tensor is allocated in pinned memory. This works only for CPU tensors.
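To show how these parameters fit together, here is a minimal sketch that picks a CUDA device when one is available and falls back to the CPU otherwise. The tensor values and the name `weights` are made up for illustration, and `pin_memory` is left at its default.

```python
import torch

# Use the GPU if PyTorch can see one, otherwise stay on the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

weights = torch.tensor(
    [[0.5, -1.0], [2.0, 0.0]],  # data
    dtype=torch.float64,        # data type of the returned tensor
    device=device,              # where the tensor is stored
    requires_grad=True,         # let autograd track operations on it
)

print(weights.dtype, weights.device.type, weights.requires_grad)
```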

See the Jupyter notebook below for the above operations to create tensors.

Importance of Tensors in PyTorch

The tensor is the building block of the PyTorch library and has a matrix-like structure. Tensors are important in the PyTorch framework because they support mathematical operations on the data.

Following are some of the key points about tensors in PyTorch:

- Tensors are the fundamental data structure in PyTorch. All neural network models are built from tensors because tensors support linear algebra operations.

- Tensors are similar to NumPy arrays, but they can perform their computation on a GPU as well as a CPU. On a GPU, large computations can run much faster than with the NumPy library.

- Tensors offer interoperability with Python libraries, so the programmer can use scikit-learn and SciPy with tensors. Functions such as as_tensor or from_numpy convert a NumPy array to a PyTorch tensor.

- A tensor can keep track of all the operations performed on it, which makes it possible to compute the gradient of an output automatically. This is done using the Autograd functionality of tensors.

- A tensor is a multi-dimensional array. It can hold image data as a 3-dimensional array with one dimension for the color channels, such as RGB (Red, Green and Blue), and it can also hold audio or time-series data. Unstructured data in general can be represented using tensors.
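The Autograd point can be illustrated with a very small sketch. The function y = sum(x^2) is chosen only because its gradient, 2x, is easy to verify by hand.

```python
import torch

# requires_grad=True asks autograd to record operations on x
x = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)

y = (x ** 2).sum()  # y = x1^2 + x2^2 + x3^2
y.backward()        # back-propagate to compute dy/dx

# The gradient of sum(x^2) is 2x
print(x.grad)
```

Training a neural network follows the same pattern: compute a loss from tensors that require gradients, call `backward()`, then use the gradients in a gradient-descent step.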

Conclusion

PyTorch is a framework for building deep learning models for computer vision, natural language processing or reinforcement learning, and tensors are the place to start learning it. This tutorial gives an idea of how useful and simple it is to learn and implement tensors in PyTorch. Tensors can be used in PyTorch as well as TensorFlow, and the basic idea behind them stays the same: process data faster on a CPU or on a GPU with CUDA cores. Which framework to use for building models is the developer's decision.
