Top Informatica Scenario Based Interview Questions

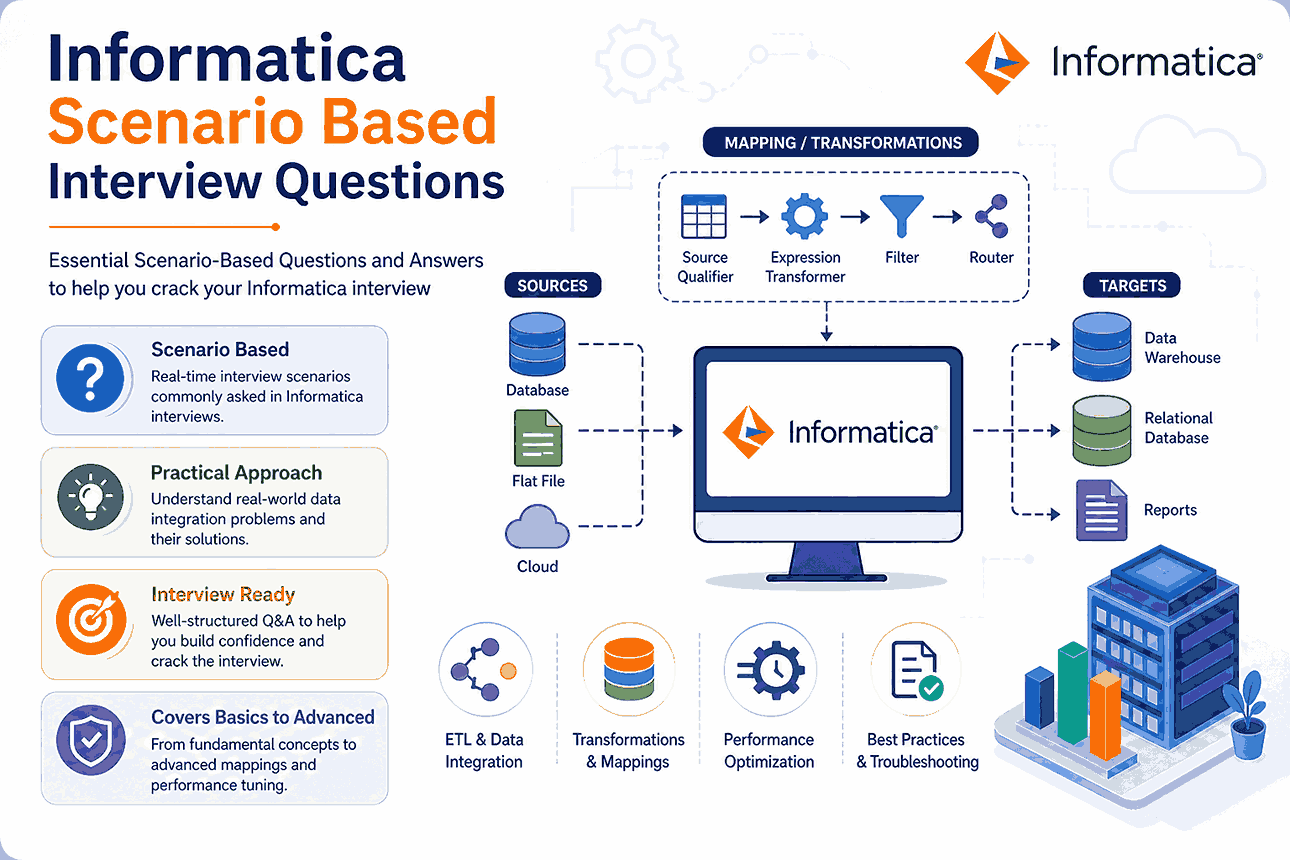

Informatica is a widely used data integration tool in data warehousing that helps manage large volumes of data and supports reporting and analysis. Below are some questions that will be helpful when you attend an Informatica interview.

You have finally found your dream Informatica job, but are wondering how to crack the 2026 Informatica interview and what the probable scenario-based questions could be. Every interview is different, and so is the scope of every job. Keeping this in mind, we have compiled the most common Informatica Scenario-Based Interview Questions and Answers to help you succeed in your interview.

Basic and Advanced Informatica Scenario-Based Interview Questions

Preparing for an Informatica interview? Here are the top scenario-based questions you are most likely to face in 2026.

Q1. How to remove duplicate records in Informatica?

Answer:

There are many ways of eliminating duplicates:

- If the duplicates are in a relational source, use the Source Qualifier. On the Properties tab, check the 'Select Distinct' option. Alternatively, use a SQL override for the same purpose: on the Properties tab, open the SQL Query property and write a SELECT DISTINCT query.

- Use an Aggregator and mark ports as group-by keys to get distinct values. To remove rows that are duplicated across every column, select all ports as group-by keys.

- Use a Sorter with the Distinct property enabled to get distinct values.

- Expression and Filter transformations can also identify and remove duplicates: the Expression compares each row's key with the previous row's key, and the Filter drops the repeats. If the data is not sorted on that key, it must be sorted first.

- Enabling the dynamic cache property in a Lookup transformation adds a new port, NewLookupRow. The cache is updated as rows are read. If the source has duplicate records, look each row up in the dynamic cache and use a Router to pass only the rows flagged as new inserts, so that only one copy of each record reaches the target.

Methods:

- Source Qualifier → Use SELECT DISTINCT

- Aggregator → Apply GROUP BY on columns

- Sorter → Enable Distinct property

- Lookup (Dynamic Cache) → Avoid duplicate inserts

- Expression + Filter → Custom duplicate removal logic

Real-World Tip: Use database-level DISTINCT when possible, because it usually performs faster than removing duplicates in Informatica transformations.
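The two row-by-row methods above can be sketched outside Informatica. The Python below is only an illustration, not Informatica code: `dedupe_sorted` mimics the Expression + Filter approach (sort, then compare each key with the previous one), and `dedupe_with_cache` mimics the dynamic Lookup cache (remember every key already seen). The function names and the sample rows are hypothetical.

```python
def dedupe_sorted(rows, key):
    """Expression + Filter style: sort, then keep a row only when
    its key differs from the key of the previous row."""
    result = []
    previous_key = object()  # sentinel that never equals a real key
    for row in sorted(rows, key=key):
        current_key = key(row)
        if current_key != previous_key:
            result.append(row)
        previous_key = current_key
    return result


def dedupe_with_cache(rows, key):
    """Dynamic-cache style: a key not yet in the cache is a new insert,
    a key already in the cache is a duplicate and is skipped."""
    seen = set()
    result = []
    for row in rows:
        current_key = key(row)
        if current_key not in seen:
            seen.add(current_key)
            result.append(row)
    return result


# Hypothetical sample data with one duplicated record.
rows = [
    {"emp_id": 2, "name": "Priya"},
    {"emp_id": 1, "name": "Aanchal"},
    {"emp_id": 2, "name": "Priya"},
]
by_id = lambda row: row["emp_id"]

print(dedupe_sorted(rows, by_id))      # output is ordered by emp_id
print(dedupe_with_cache(rows, by_id))  # output keeps the arrival order
```

The sorted variant needs only the previous key in memory but changes the row order, while the cache variant preserves order at the cost of holding every key seen so far.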

Q2. What is the difference between Source Qualifier and Filter Transformation?

Answer:

Source qualifier transformation represents rows that the Integration service reads in a session. It is an active transformation. Using source qualifier, the following tasks can be fulfilled:

- When two tables from the same source database have a primary key-foreign key relationship, both sources can be linked to one Source Qualifier transformation to join them.

- Filtering rows: the Integration Service adds a WHERE clause to the default query.

- When the user wants an outer join instead of an inner join, the join information in the default query is replaced by the join metadata the user specifies.

- When sorted ports are specified, the Integration Service adds an ORDER BY clause to the default query.

- When the user chooses Select Distinct, the Integration Service adds SELECT DISTINCT to the default query.

When the data to be filtered does not come from a relational source, the user should use a Filter transformation. It passes through the rows that meet the specified filter condition and drops the rows that do not. Multiple conditions can be combined.

Source Qualifier:

- Works only with relational sources

- Can perform joins, filtering, and sorting

- Uses SQL queries

Filter Transformation:

- Works with any source type (relational, flat file, and others)

- Filters records based on conditions

- Drops unwanted rows

Real-World Tip: Use Source Qualifier for early filtering to improve performance.
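The difference between filtering at the source and filtering in the pipeline can be illustrated with a small Python sketch using the standard `sqlite3` module. It is an analogy, not Informatica itself: the WHERE clause stands in for the Source Qualifier filter, and the list comprehension stands in for a Filter transformation. The `emp` table and its rows are hypothetical.

```python
import sqlite3

# Hypothetical in-memory source table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE emp (emp_id INTEGER, city TEXT, salary INTEGER)")
conn.executemany(
    "INSERT INTO emp VALUES (?, ?, ?)",
    [(1, "Pune", 20000), (2, "Nagpur", 35000), (3, "Mumbai", 15000)],
)

# Source Qualifier style: the database applies the filter,
# so only matching rows are ever read.
filtered_at_source = conn.execute(
    "SELECT emp_id, city, salary FROM emp "
    "WHERE salary >= 20000 ORDER BY emp_id"
).fetchall()

# Filter transformation style: every row is read first,
# then the condition is applied row by row.
all_rows = conn.execute(
    "SELECT emp_id, city, salary FROM emp ORDER BY emp_id"
).fetchall()
filtered_in_pipeline = [row for row in all_rows if row[2] >= 20000]

print(filtered_at_source)
print(len(all_rows), "rows were read before the pipeline filter ran")
```

Both approaches return the same rows; the source-side filter simply moves fewer rows, which is why it is usually preferred when the source is relational.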

Q3. How do you design a mapping to load the last 3 rows from a flat file?

Answer:

Suppose the flat file in consideration has the below data:

Column A

Aanchal

Priya

Karishma

Snehal

Nupura

Step 1: Assign row numbers to each record. Generate row numbers using expression transformation by creating a variable port and incrementing it by 1. After this, assign this variable port to the output port. After expression transformation, the ports will be as follows.

Variable_count= Variable_count+1

O_count=Variable_count

Create a dummy output port for the same expression transformation and assign 1 to that port. This dummy port will always return 1 for each row.

Finally, the transformation expression will be as follows:

Variable_count= Variable_count+1

O_count=Variable_count

Dummy_output=1

The output of this transformation will be:

| Column A | O_count | Dummy_output |
|---|---|---|
| Aanchal | 1 | 1 |
| Priya | 2 | 1 |
| Karishma | 3 | 1 |
| Snehal | 4 | 1 |
| Nupura | 5 | 1 |

Step 2: Pass the above output to an Aggregator and do not specify any group-by port. Create a new output port, O_total_records, in the Aggregator and assign the O_count port to it. With no group-by port, the Aggregator returns only the last row. This step's output therefore has the dummy port with a value of 1, and O_total_records holds the total number of records in the source. The aggregator output will be:

| O_total_records | Dummy_output |
|---|---|
| 5 | 1 |

Step 3: Pass this output to a Joiner transformation and join on the dummy port. The Sorted Input property must be checked in the Joiner transformation; only then can both the Expression and Aggregator transformations, which come from the same pipeline, be connected to it. The join condition will be as follows:

Dummy_output (port from aggregator transformation) = Dummy_output (port from expression transformation)

The output of the Joiner transformation will be:

| Column A | O_count | O_total_records |
|---|---|---|
| Aanchal | 1 | 5 |
| Priya | 2 | 5 |
| Karishma | 3 | 5 |
| Snehal | 4 | 5 |
| Nupura | 5 | 5 |

Step 4: After the joiner transformation, we can send this output to filter transformation and specify the filter condition as O_total_records (port from aggregator)-O_count(port from expression) <=2

The filter condition, as a result, will be

O_total_records - O_count <= 2

The final output of the Filter transformation will be:

| Column A | O_count | O_total_records |
|---|---|---|
| Karishma | 3 | 5 |
| Snehal | 4 | 5 |
| Nupura | 5 | 5 |

Steps:

- Expression Transformation: generate row numbers using a variable port

- Aggregator Transformation: calculate the total record count

- Joiner Transformation: join row-level data with the total count

- Filter Transformation: apply the condition Total_Records - Row_Number <= 2

Output:

Only the last 3 rows are loaded into the target.

Best Practice:

This approach works when source data has no built-in row numbering.
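The four-step mapping can be traced in plain Python to check the logic. This is a minimal sketch of the data flow, not Informatica code: each block mirrors one transformation, and the function name `last_n_rows` and its `n` parameter are hypothetical additions for illustration.

```python
def last_n_rows(names, n=3):
    # Expression: a running counter plays the role of the variable port,
    # and every row also gets the constant dummy port.
    numbered = []
    count = 0
    for name in names:
        count += 1
        numbered.append({"name": name, "o_count": count, "dummy": 1})
    if not numbered:
        return []

    # Aggregator without a group-by port: only the last row survives,
    # so its o_count is the total number of records.
    total = {"o_total_records": numbered[-1]["o_count"], "dummy": 1}

    # Joiner: attach the total to every row by joining on the dummy port.
    joined = [
        dict(row, o_total_records=total["o_total_records"])
        for row in numbered
        if row["dummy"] == total["dummy"]
    ]

    # Filter: O_total_records - O_count <= n - 1 keeps the last n rows.
    return [
        row for row in joined
        if row["o_total_records"] - row["o_count"] <= n - 1
    ]


rows = last_n_rows(["Aanchal", "Priya", "Karishma", "Snehal", "Nupura"])
print([row["name"] for row in rows])
```

With the default `n=3`, the filter condition is the same `<= 2` used in Step 4.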

Q4. How to load only NULL records into the target? Explain using mapping flow.

Answer:

Consider the below data as a source

Emp_Id Emp_Name Salary City Pincode

619101 Aanchal Singh 20000 Pune 411051

619102 Nupura Pattihal 35000 Nagpur 411014

NULL NULL 15000 Mumbai 451021

The target tables have the same structure as the source. We will have two targets: one for rows that contain NULL values and another for rows that do not.

The mapping can be as follows:

SQ -> EXP -> RTR -> TGT_NULL/TGT_NOT_NULL

EXP: Expression transformation. Create an output port:

O_FLAG = IIF((ISNULL(emp_id) OR ISNULL(emp_name) OR ISNULL(salary) OR ISNULL(City) OR ISNULL(Pincode)), 'NULL', 'NNULL')

RTR: Router transformation with two groups:

Group 1 connected to TGT_NULL (expression O_FLAG = 'NULL')

Group 2 connected to TGT_NOT_NULL (expression O_FLAG = 'NNULL')

Mapping Flow:

Source → Expression → Router → Target

Logic:

Expression Transformation:

O_FLAG = IIF(ISNULL(emp_id) OR ISNULL(emp_name) OR ISNULL(salary) OR ISNULL(city) OR ISNULL(pincode), 'NULL', 'NNULL')

Router Transformation:

- Group 1 → NULL records

- Group 2 → Non-NULL records

Real-World Tip:

Router is more efficient than multiple filters for multi-condition routing.
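The flag-and-route logic can be sketched in Python to show what each target receives. This is an illustration of the data flow only: `flag` plays the role of the O_FLAG expression and `route` plays the role of the Router groups. The function names are hypothetical, and Python's `None` stands in for a database NULL.

```python
COLUMNS = ("emp_id", "emp_name", "salary", "city", "pincode")


def flag(row):
    """Mirror of the O_FLAG expression: 'NULL' if any column is NULL."""
    return "NULL" if any(row[col] is None for col in COLUMNS) else "NNULL"


def route(rows):
    """Mirror of the Router: one pass over the rows, one list per group."""
    targets = {"NULL": [], "NNULL": []}
    for row in rows:
        targets[flag(row)].append(row)
    return targets


source = [
    {"emp_id": 619101, "emp_name": "Aanchal Singh", "salary": 20000,
     "city": "Pune", "pincode": 411051},
    {"emp_id": 619102, "emp_name": "Nupura Pattihal", "salary": 35000,
     "city": "Nagpur", "pincode": 411014},
    {"emp_id": None, "emp_name": None, "salary": 15000,
     "city": "Mumbai", "pincode": 451021},
]

targets = route(source)
print(len(targets["NULL"]), "row(s) for TGT_NULL")
print(len(targets["NNULL"]), "row(s) for TGT_NOT_NULL")
```

Each row is evaluated once and sent to exactly one group, which is the property that makes a single Router preferable to several separate filters reading the same rows.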

Q5. How to improve performance of Joiner Transformation?

Answer:

The performance of the Joiner transformation can be improved by following some simple steps.

- Perform joins in the database whenever possible. When this is not possible for some tables, the user can create a stored procedure and join the tables in the database.

- Sort the data before joining whenever possible.

- When the data is unsorted, the source with fewer rows should be designated as the master source.

- For a sorted Joiner transformation, the source with fewer duplicate key values should be designated as the master source.

Optimization Techniques:

- Perform joins at the database level whenever possible

- Use sorted input for Joiner transformation

- Select smaller dataset as Master source

- Reduce duplicate keys in master source

- Use indexes in source tables

Best Practice:

Database joins are faster than Informatica joins in most cases.
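Why the smaller source is the better master can be seen in a simplified Python model of an unsorted join. The sketch assumes the join caches all master rows by key and then streams the detail rows past that cache; it is a conceptual illustration, not Informatica's actual implementation, and the `joiner` function and sample tables are hypothetical.

```python
from collections import defaultdict


def joiner(master_rows, detail_rows, key):
    """Inner join: cache the master side, stream the detail side."""
    # Build phase: memory use grows with the size of the master source.
    cache = defaultdict(list)
    for master in master_rows:
        cache[master[key]].append(master)

    # Probe phase: each detail row is matched against the cache and
    # emitted immediately, so the detail side is never held in memory.
    for detail in detail_rows:
        for master in cache.get(detail[key], []):
            yield {**master, **detail}


# Hypothetical data: the small table is the master, the large one the detail.
departments = [
    {"dept_id": 10, "dept_name": "Sales"},
    {"dept_id": 20, "dept_name": "HR"},
]
employees = [
    {"emp_id": 1, "dept_id": 10},
    {"emp_id": 2, "dept_id": 20},
    {"emp_id": 3, "dept_id": 10},
    {"emp_id": 4, "dept_id": 30},  # no matching department, so dropped
]

joined = list(joiner(departments, employees, "dept_id"))
print(len(joined), "joined rows")
```

In this model only `departments` is cached, so swapping the two inputs would produce the same result but hold the larger table in memory instead.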
