What is Explainable AI?

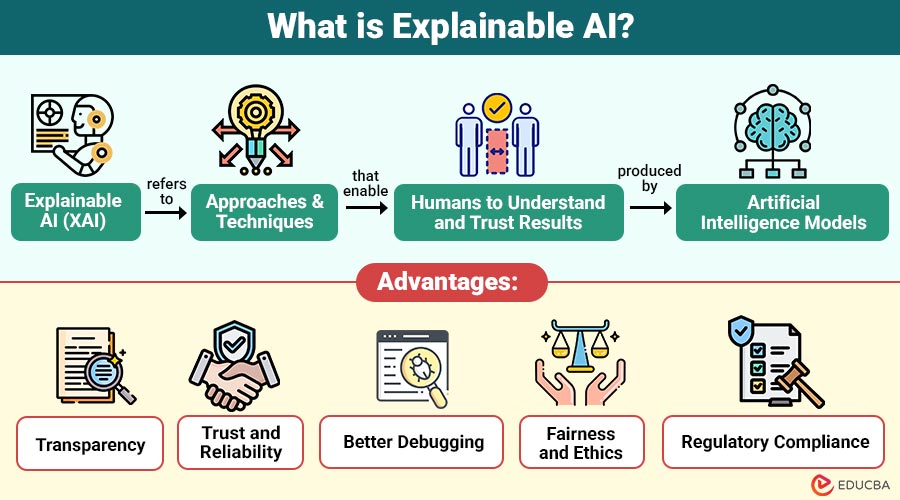

Explainable AI (XAI) refers to approaches and techniques that enable humans to understand and trust the results produced by artificial intelligence models. It makes AI systems more transparent, understandable, and accountable.

In simple terms, explainable AI answers questions like:

- Why did the AI make this decision?

- What data influenced the result?

- Can the decision be trusted?

- How will the model behave in different situations?

Traditional machine learning models, like decision trees, are easy to understand, whereas complex models, such as neural networks, are difficult to interpret. Explainable AI provides tools to make even complex models understandable.

Key Takeaways:

- Explainable AI makes artificial intelligence systems easier to understand by showing the reasons behind predictions and decisions.

- Different explanation methods allow complex machine learning models to be interpreted without changing their original structure or accuracy.

- Industries that rely on critical decisions use Explainable AI to ensure fairness, safety, transparency, and regulatory compliance.

- Even though it adds extra effort during development, Explainable AI helps build more reliable, ethical, and trustworthy AI systems.

Why is Explainable AI Important?

The following points explain why explainable AI is important in modern artificial intelligence systems.

1. Builds Trust in AI Systems

Users trust AI systems more when they clearly understand how decisions are made and which factors influenced the final output.

2. Helps in Regulatory Compliance

Industries such as banking, healthcare, and insurance must be able to explain their automated decisions in order to comply with legal rules, regulations, and ethical guidelines.

3. Detects Bias and Errors

Explainable AI helps identify biased data, incorrect logic, and unfair predictions, allowing developers to fix issues and improve model reliability.

4. Improves Model Performance

By understanding how the model makes predictions, developers can adjust features, algorithms, and training data to increase accuracy and efficiency.

5. Supports Human Decision-Making

Explainable AI provides clear insights that help humans verify results, make better decisions, and confidently rely on AI-generated predictions in practice.

6. Required in High-Risk Applications

In critical fields like medical diagnosis, fraud detection, and autonomous driving, explanations are necessary to ensure safety, accountability, and reliability.

How Does Explainable AI Work?

Explainable AI uses different techniques to show how an AI model makes decisions. These techniques can explain the model globally (overall behavior) or locally (for a single prediction).

1. Feature Importance

Feature importance identifies which input variables most affect the model's predictions and ranks them by impact, so users can see which factors drive the model's decisions.
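One common, model-agnostic way to measure feature importance is permutation importance: shuffle one feature's column, breaking its link to the target, and measure how much the model's error grows. The sketch below assumes a hypothetical `toy_model` and a tiny made-up dataset whose targets are the model's own outputs (so the baseline error is zero); in practice you would use held-out data with real labels.

```python
import random


def toy_model(row):
    # Hypothetical "trained" model: relies heavily on feature 0,
    # a little on feature 1, and ignores feature 2 entirely.
    return 3.0 * row[0] + 0.5 * row[1]


def mean_squared_error(model, X, y):
    return sum((model(row) - target) ** 2 for row, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=5, seed=0):
    """Average increase in error when one feature column is shuffled."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # break the link between feature j and the target
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            increases.append(mean_squared_error(model, X_perm, y) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


X = [[1, 4, 7], [2, 8, 1], [3, 1, 5], [4, 6, 2],
     [5, 2, 8], [6, 7, 3], [7, 3, 6], [8, 5, 4]]
y = [toy_model(row) for row in X]  # targets the model fits perfectly

scores = permutation_importance(toy_model, X, y)
ranking = sorted(range(len(scores)), key=lambda j: scores[j], reverse=True)
print("importance per feature:", scores)
print("features ranked by importance:", ranking)
```

Because the model never reads feature 2, shuffling it leaves the error unchanged and its importance is exactly zero.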

2. Decision Trees

Decision trees explain predictions using step-by-step branches. Each node shows a condition, making the decision process easy for humans to follow, understand, and verify.
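To illustrate how a tree's prediction can be traced back as a list of conditions, here is a minimal sketch. The tree, its features (`credit_score`, `income`), and its thresholds are hypothetical and hand-written rather than learned from data.

```python
# A tiny hand-built decision tree (hypothetical thresholds, not learned from data).
TREE = {
    "feature": "credit_score", "threshold": 650,
    "left": {"label": "reject"},                   # credit_score <= 650
    "right": {                                     # credit_score > 650
        "feature": "income", "threshold": 40000,
        "left": {"label": "review"},
        "right": {"label": "approve"},
    },
}


def predict_with_path(node, applicant):
    """Walk the tree and record every condition checked along the way."""
    path = []
    while "label" not in node:
        feature, threshold = node["feature"], node["threshold"]
        value = applicant[feature]
        if value <= threshold:
            path.append(f"{feature} = {value} <= {threshold}")
            node = node["left"]
        else:
            path.append(f"{feature} = {value} > {threshold}")
            node = node["right"]
    return node["label"], path


label, path = predict_with_path(TREE, {"credit_score": 710, "income": 52000})
print("prediction:", label)
for step in path:
    print("  because", step)
```

The returned path is itself the explanation: each entry is one branch condition the applicant satisfied.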

3. Rule-Based Models

Rule-based models use IF-THEN rules to explain logic. These rules clearly show why a prediction happened, making the system transparent, comprehensible, and easy to audit.
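A rule-based model can return the rule that fired alongside its decision, which is what makes it easy to audit. The sketch below assumes a hypothetical set of loan-screening rules evaluated in order, where the first matching rule wins.

```python
# Hypothetical IF-THEN rules for a loan screen, checked top to bottom (first match wins).
RULES = [
    ("missed_payments > 2", lambda a: a["missed_payments"] > 2, "reject"),
    ("debt_to_income > 0.45", lambda a: a["debt_to_income"] > 0.45, "reject"),
    ("credit_score >= 700", lambda a: a["credit_score"] >= 700, "approve"),
]
DEFAULT_OUTCOME = "manual review"


def decide(applicant):
    """Return the outcome together with the rule that produced it."""
    for text, condition, outcome in RULES:
        if condition(applicant):
            return outcome, f"IF {text} THEN {outcome}"
    return DEFAULT_OUTCOME, "no rule matched, so the default outcome applies"


outcome, reason = decide({"missed_payments": 0, "debt_to_income": 0.52, "credit_score": 720})
print(outcome, "|", reason)  # reject | IF debt_to_income > 0.45 THEN reject
```

Ordering matters in this design: the applicant's high credit score never gets a say because an earlier rejection rule already matched, and the explanation makes that visible.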

4. Visualization Methods

Visualization methods use charts, graphs, and plots to display model behavior. Visual explanations help users quickly understand patterns, relationships, and how predictions are generated.

5. Model Simplification

Model simplification explains complex models using simpler ones. A complicated algorithm is approximated with an easier model so humans can understand the overall decision behavior.
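One way to apply model simplification is a global surrogate: train a simple model on the *complex model's outputs* and report its fidelity, meaning how often the two agree. The minimal sketch below assumes a hypothetical `black_box` function of two features and uses a one-split decision stump as the surrogate.

```python
from collections import Counter
from itertools import product


def black_box(x):
    # Stand-in for a complex model whose internals we cannot read (hypothetical).
    return 1 if x[0] ** 2 + x[1] > 25 else 0


def majority(labels):
    return Counter(labels).most_common(1)[0][0] if labels else 0


def fit_stump(X, labels):
    """Find the single split (feature, threshold) that best mimics the labels."""
    best = None
    for j in range(len(X[0])):
        for t in sorted({row[j] for row in X}):
            left = [lab for row, lab in zip(X, labels) if row[j] <= t]
            right = [lab for row, lab in zip(X, labels) if row[j] > t]
            left_label, right_label = majority(left), majority(right)
            agree = (sum(lab == left_label for lab in left)
                     + sum(lab == right_label for lab in right))
            if best is None or agree > best[0]:
                best = (agree, j, t, left_label, right_label)
    return best


X = [list(p) for p in product(range(10), repeat=2)]
bb_labels = [black_box(x) for x in X]  # learn from the black box, not the ground truth
agree, feature, threshold, left_label, right_label = fit_stump(X, bb_labels)
fidelity = agree / len(X)
print(f"surrogate: IF x{feature} <= {threshold} THEN {left_label} ELSE {right_label}")
print(f"fidelity to black box: {fidelity:.2f}")
```

The surrogate is readable but only approximate: here it disagrees with the black box on one grid point, which is exactly the kind of gap a fidelity score is meant to expose.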

6. Post-hoc Explanation Methods

Post-hoc methods explain predictions after training. Techniques such as LIME, SHAP, saliency maps, and partial dependence plots help interpret complex models without altering the original model structure.

Types of Explainable AI

Below are the different types of Explainable AI.

1. Global Explainability

Global explainability describes how the entire AI model behaves across all data, revealing the overall logic, patterns, and decision rules used in predictions.

2. Local Explainability

Local explainability focuses on a single prediction and explains why the model made that specific decision.

3. Model-Specific Explainability

Model-specific explainability relies on the internal structure of a particular type of model, such as the splits of a decision tree or the coefficients of a linear regression model, so it works only with that kind of model.
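For a linear model, the explanation can be read straight from the model's parameters: each term `coefficient * value` is that feature's exact contribution to the prediction. The coefficients below are hypothetical and chosen for illustration, not fitted to real data.

```python
# Hypothetical linear model for house prices (coefficients are illustrative, not fitted).
INTERCEPT = 50_000.0
COEFFICIENTS = {"area_sqft": 120.0, "bedrooms": 8_000.0, "age_years": -1_500.0}


def predict_and_explain(house):
    # In a linear model, coef * value is each feature's exact additive contribution.
    contributions = {name: coef * house[name] for name, coef in COEFFICIENTS.items()}
    prediction = INTERCEPT + sum(contributions.values())
    return prediction, contributions


price, parts = predict_and_explain({"area_sqft": 1500, "bedrooms": 3, "age_years": 10})
print(f"predicted price: {price:,.0f}")
for name, amount in sorted(parts.items(), key=lambda kv: -abs(kv[1])):
    print(f"  {name}: {amount:+,.0f}")
```

This is what makes the approach model-specific: the same breakdown is not available for, say, a neural network, whose parameters do not map one-to-one onto features.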

4. Model-Agnostic Explainability

Model-agnostic explainability works with any machine learning model, including complex ones such as neural networks, without requiring internal model details.

Explainable AI Techniques

Below are some common techniques used in Explainable AI.

1. LIME

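LIME (Local Interpretable Model-agnostic Explanations) explains an individual prediction by perturbing the input, observing how the model's output changes, and fitting a simple interpretable model (typically a weighted linear model) to those nearby samples. The simple model shows which features drove that specific decision.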
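The core LIME idea can be sketched from scratch: sample points around one input, weight them by proximity, and fit a weighted linear model whose slopes serve as the local explanation. This is a simplified, LIME-style sketch, not the `lime` package's implementation (which adds steps such as feature selection and discretization); `black_box`, the noise `scale`, and the `kernel_width` are assumptions.

```python
import math
import random


def black_box(x0, x1):
    # Hypothetical non-linear model we want to explain around one input.
    return x0 ** 2 + 3.0 * x1


def solve(A, b):
    """Solve A w = b with Gaussian elimination and partial pivoting (small systems only)."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    w = [0.0] * n
    for r in reversed(range(n)):
        w[r] = (M[r][n] - sum(M[r][c] * w[c] for c in range(r + 1, n))) / M[r][r]
    return w


def lime_style_slopes(instance, n_samples=1000, scale=0.5, kernel_width=0.75, seed=0):
    rng = random.Random(seed)
    # 1. Perturb the instance with Gaussian noise and query the black box.
    samples = [[v + rng.gauss(0.0, scale) for v in instance] for _ in range(n_samples)]
    preds = [black_box(*s) for s in samples]
    # 2. Weight each sample by its proximity to the instance (exponential kernel).
    weights = [
        math.exp(-sum((a - b) ** 2 for a, b in zip(s, instance)) / kernel_width ** 2)
        for s in samples
    ]
    # 3. Fit pred ~ w0 + w1*x0 + w2*x1 by weighted least squares (normal equations).
    rows = [[1.0] + s for s in samples]
    k = len(rows[0])
    A = [[sum(w * r[i] * r[j] for w, r in zip(weights, rows)) for j in range(k)]
         for i in range(k)]
    rhs = [sum(w * r[i] * p for w, r, p in zip(weights, rows, preds)) for i in range(k)]
    _, *slopes = solve(A, rhs)
    return slopes  # local effect of each feature near `instance`


slopes = lime_style_slopes([2.0, 1.0])
print("local effect of x0, x1:", [round(s, 2) for s in slopes])
```

Around the point (2, 1) the true local slopes of `x0 ** 2 + 3 * x1` are 4 and 3, so the fitted slopes should land close to those values; the exact numbers depend on the random samples.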

2. SHAP

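SHAP (SHapley Additive exPlanations) assigns each feature a value that indicates its contribution to a prediction, based on Shapley values from cooperative game theory. The contributions add up to the difference between the model's prediction and its average (baseline) prediction, helping users see how each input pushed the final output up or down.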
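For a handful of features, Shapley values can be computed exactly by averaging each feature's marginal contribution over every coalition of the other features. The sketch below assumes a hypothetical `model` and, as a simplification, replaces "missing" features with a single baseline input; the `shap` library instead uses background data and much faster estimators.

```python
from itertools import combinations
from math import factorial


def model(x):
    # Hypothetical model with an interaction between features 1 and 2.
    return 2.0 * x[0] + x[1] * x[2]


def value(coalition, instance, baseline):
    # Features in the coalition take the instance's values; the rest use the baseline.
    mixed = [instance[i] if i in coalition else baseline[i] for i in range(len(instance))]
    return model(mixed)


def shapley_values(instance, baseline):
    n = len(instance)
    phis = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        phi = 0.0
        for size in range(n):
            for subset in combinations(others, size):
                s = set(subset)
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                phi += weight * (value(s | {i}, instance, baseline)
                                 - value(s, instance, baseline))
        phis.append(phi)
    return phis


instance, baseline = [1.0, 2.0, 3.0], [0.0, 0.0, 0.0]
phis = shapley_values(instance, baseline)
print("contributions:", [round(p, 6) for p in phis])
print("sum:", round(sum(phis), 6), "| f(x) - f(baseline):", model(instance) - model(baseline))
```

The contributions should come out as approximately 2, 3, and 3: feature 0 adds 2 on its own, and the interaction term worth 6 is split equally between features 1 and 2. Enumerating all coalitions grows exponentially with the number of features, which is why practical tools approximate it.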

3. Decision Trees

Decision trees are easy-to-understand models that show decisions step-by-step using branches, making it simple to trace how the model reached a prediction.

4. Rule-based Systems

Rule-based systems use logical IF-THEN rules to explain decisions clearly, making the model transparent and easy for humans to understand and verify.

5. Feature Importance

Feature importance indicates which input variables have the greatest effect on predictions, helping users understand which factors matter most to the model.

6. Saliency Maps

Saliency maps are used in image models to highlight the important areas of an image that the AI focuses on while making a prediction.
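A saliency map is usually the magnitude of the gradient of the model's output with respect to each pixel. Real implementations get that gradient by backpropagation through a neural network; the minimal sketch below swaps in central finite differences on a hypothetical 4x4 "image model" (the weight grid `W` is an assumption) that only looks at the centre of the image.

```python
import math

# Hypothetical 4x4 "image model": only the centre 2x2 patch has non-zero weights.
W = [[0.0, 0.0, 0.0, 0.0],
     [0.0, 0.8, 0.5, 0.0],
     [0.0, 0.5, 0.8, 0.0],
     [0.0, 0.0, 0.0, 0.0]]


def image_score(img):
    z = sum(W[r][c] * img[r][c] for r in range(4) for c in range(4))
    return math.tanh(z)


def saliency_map(img, eps=1e-4):
    """|d score / d pixel|, estimated with central finite differences."""
    sal = [[0.0] * 4 for _ in range(4)]
    for r in range(4):
        for c in range(4):
            up = [row[:] for row in img]
            down = [row[:] for row in img]
            up[r][c] += eps
            down[r][c] -= eps
            sal[r][c] = abs(image_score(up) - image_score(down)) / (2 * eps)
    return sal


img = [[0.1 * (r + c) for c in range(4)] for r in range(4)]
sal = saliency_map(img)
for row in sal:
    print(" ".join(f"{v:.3f}" for v in row))
```

Pixels the model ignores get a saliency of zero, and the centre pixels with larger weights light up most, which is the pattern a saliency map is meant to reveal.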

7. Partial Dependence Plots

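Partial dependence plots show how the model's average prediction changes as one feature varies. For each value of that feature, the other features keep their observed values from the dataset and the predictions are averaged, which reveals the overall relationship between that input and the output.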

Real-World Examples of Explainable AI

Below are some real-world examples of Explainable AI in different industries.

1. Banking and Finance

In banking and finance, explainable AI helps loan approval systems clearly show the reasons for a rejection, such as low income, poor credit history, or other risk factors.

2. Fraud Detection

In fraud detection, explainable AI helps banks see why a transaction was flagged by highlighting what made it unusual, such as its location, its amount, or a departure from the customer's normal spending behavior.
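A simple way to attach reasons to a fraud alert is to compare each feature of a transaction against the customer's own history and report the features that deviate most. The sketch below uses per-customer z-scores; the transaction history, the feature names, and the threshold of 3 standard deviations are hypothetical, and production systems typically combine learned models with richer explanations.

```python
from statistics import mean, stdev

# Hypothetical history of one customer's past transactions.
history = [
    {"amount": 42.0, "hour": 12, "km_from_home": 3.0},
    {"amount": 18.0, "hour": 18, "km_from_home": 5.0},
    {"amount": 65.0, "hour": 13, "km_from_home": 2.0},
    {"amount": 30.0, "hour": 19, "km_from_home": 8.0},
    {"amount": 55.0, "hour": 12, "km_from_home": 4.0},
    {"amount": 25.0, "hour": 17, "km_from_home": 6.0},
]


def explain_anomaly(txn, history, threshold=3.0):
    """List features more than `threshold` standard deviations from the customer's norm."""
    reasons = []
    for feature in txn:
        values = [h[feature] for h in history]
        mu, sigma = mean(values), stdev(values)
        z = (txn[feature] - mu) / sigma if sigma > 0 else 0.0
        if abs(z) > threshold:
            reasons.append((feature, round(z, 1)))
    return sorted(reasons, key=lambda r: -abs(r[1]))  # most unusual first


txn = {"amount": 900.0, "hour": 14, "km_from_home": 4800.0}
reasons = explain_anomaly(txn, history)
for feature, z in reasons:
    print(f"flagged because {feature} is {z} standard deviations from normal")
```

Here the transaction time is within the customer's usual range, so only the distance and the amount appear as reasons.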

3. Autonomous Vehicles

In autonomous vehicles, explainable AI shows why the car stopped, turned, or slowed down, helping engineers verify safety decisions and improve driving algorithms.

4. Hiring Systems

In hiring systems, explainable AI helps check that recruitment decisions are fair by showing which skills, qualifications, or experience influenced candidate selection, which makes discrimination or bias easier to detect.

5. Cybersecurity

In cybersecurity, explainable AI helps analysts understand detected threats by showing which activities, patterns, or anomalies caused the system to flag a security risk.

Advantages of Explainable AI

Below are the advantages of Explainable AI.

1. Transparency

Explainable AI provides transparency by showing how decisions are made, allowing users to clearly understand the model's logic, rules, and prediction behavior.

2. Trust and Reliability

People trust AI systems more when explanations are available because they can verify decisions, understand outcomes, and feel confident in the system’s reliability.

3. Better Debugging

Explainability makes debugging easier by revealing how predictions are made, helping developers quickly find errors, incorrect data, or wrong model assumptions.

4. Fairness and Ethics

Explainable AI helps detect bias in decisions by showing which features influenced results, allowing developers to correct unfair or unethical model behavior.

5. Regulatory Compliance

Many industries require explanations for automated decisions, so explainable AI helps organizations meet regulatory, audit, and legal requirements and avoid penalties.

Disadvantages of Explainable AI

Below are the disadvantages of Explainable AI.

1. Increased Complexity

Explainability techniques increase system complexity because extra algorithms are required to generate explanations, making development, testing, and maintenance more difficult.

2. Performance Trade-Off

Highly accurate models like deep learning networks are difficult to explain, and simplifying them for explainability may reduce prediction accuracy and performance.

3. Difficult for Deep Learning

Deep learning models contain many layers and parameters, making their decision process hard to interpret, even with advanced explainability techniques and visualization methods.

4. Extra Development Time

Building explainable AI requires additional design, testing, and validation, which increases development time, cost, and effort compared to creating standard machine learning models.

5. Not Always Perfect

Explainability methods sometimes provide approximate explanations that may not fully represent the internal workings of complex machine learning or deep learning models.

Final Thoughts

Explainable AI is an important part of modern artificial intelligence that improves transparency, trust, and accountability in automated systems. As AI becomes more widely used in critical fields, understanding model decisions is necessary for ethical and reliable use. Techniques such as feature importance, LIME, SHAP, and interpretable models help build AI systems that are accurate, understandable, and trustworthy in real-world applications.

Frequently Asked Questions (FAQs)

Q1. Where is Explainable AI used?

Answer: Healthcare, finance, cybersecurity, autonomous vehicles, and hiring systems.

Q2. Is Explainable AI required by law?

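Answer: In some industries and jurisdictions, yes. In the United States, for example, lenders must give applicants the specific reasons for denying credit, so automated credit decisions have to be explainable.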

Q3. Does Explainable AI reduce model accuracy?

Answer: It depends on the approach. Choosing a simpler, inherently interpretable model can reduce accuracy compared with a complex model. Post-hoc techniques like SHAP and LIME, however, explain an existing model without changing it, so its accuracy is unaffected; the main cost is extra computation.

Q4. Who needs Explainable AI?

Answer: Explainable AI is needed by data scientists, developers, businesses, and regulators who require transparency, trust, and fairness in AI-based decision-making systems.

Recommended Articles

We hope that this EDUCBA information on “Explainable AI” was beneficial to you. You can view EDUCBA’s recommended articles for more information.