What is Bias Mitigation?

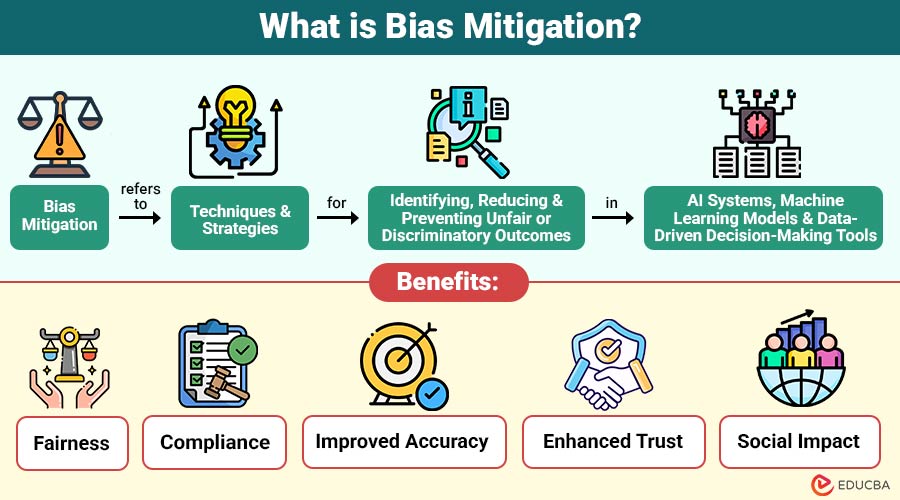

Bias mitigation refers to techniques and strategies for identifying, reducing, and preventing unfair or discriminatory outcomes in AI systems, machine learning models, and data-driven decision-making tools. It aims to promote fairness, transparency, and equal treatment across different groups in automated decisions.

Table of Contents:

- Meaning

- Importance

- Techniques

- Real-World Applications

- Benefits

- Challenges

- Tools and Frameworks

- Best Practices

Key Takeaways:

- Bias mitigation ensures fairness in AI by reducing discrimination and promoting equal outcomes across diverse groups.

- Applying preprocessing, in-processing, and post-processing techniques helps identify and minimize bias throughout the entire AI lifecycle.

- Continuous monitoring, diverse datasets, and transparency are essential practices for maintaining fairness and improving model performance.

- Effective bias mitigation enhances trust, ensures compliance, improves accuracy, and supports ethical decision-making in real-world applications.

Why is Bias Mitigation Important?

Here are the reasons that highlight the importance of bias mitigation in AI systems:

1. Ethical Responsibility

Ensuring fairness in AI decisions helps prevent discrimination, protect users, and uphold ethical standards in technology systems.

2. Legal Compliance

Following regulations such as the GDPR and EEOC guidelines helps organizations avoid penalties, ensure fairness, and maintain lawful AI-driven decision-making processes.

3. Business Reputation

Biased AI systems can damage brand reputation, reduce customer trust, and lead to negative perception and financial losses.

4. Accuracy and Robustness

Reducing bias in models improves prediction accuracy, enhances generalization, and ensures performance across diverse and underrepresented data groups.

Bias Mitigation Techniques

Bias mitigation techniques can be broadly categorized into three stages of the AI lifecycle:

1. Preprocessing Techniques

Preprocessing techniques focus on improving the dataset before training the model by correcting imbalances, enhancing representation, and removing sensitive biases, ensuring the data is fair, diverse, and suitable for unbiased learning.

The following techniques help create a balanced and unbiased foundation for model training.

- Data Balancing: Ensuring equal representation of different groups through oversampling or undersampling.

- Data Augmentation: Generating additional data for underrepresented groups.

- Reweighting: Assigning weights to data points to reduce imbalance.

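- Data Anonymization: Removing sensitive attributes such as gender or ethnicity. Correlated features (for example, a postal code) can still act as proxies, so removing the attribute alone may not remove the bias.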
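
As one illustration of reweighting, the sketch below uses a commonly described scheme in which each (group, label) combination receives the weight P(group) × P(label) / P(group, label), so that group membership and label look statistically independent in the weighted data. The function name `reweighing_weights` and the toy data are assumptions made for this example, not part of any specific library.

```python
from collections import Counter

def reweighing_weights(groups, labels):
    """Return one weight per sample: P(group) * P(label) / P(group, label)."""
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    # Probabilities are estimated from counts, so the n terms simplify to one n.
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]

# Hypothetical toy data: group "a" receives the positive label more often than "b".
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels = [1, 1, 1, 0, 1, 0, 0, 0]
weights = reweighing_weights(groups, labels)

for g, y, w in zip(groups, labels, weights):
    print(g, y, round(w, 3))
```

Rare combinations (a negative label in group "a", a positive label in group "b") get larger weights, and the weighted positive rate becomes the same for both groups. The weights can then be passed to any training routine that accepts per-sample weights.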

2. In-processing Techniques

In-processing techniques are applied during model training to incorporate fairness into the learning process, ensuring the model minimizes bias while optimizing performance by enforcing fairness constraints and reducing discriminatory patterns.

The following techniques ensure that fairness is embedded directly into the model’s learning process.

- Fairness Constraints: Incorporating fairness metrics directly into the optimization process.

- Adversarial Debiasing: Training the model to make accurate predictions while an adversary tries, and ideally fails, to infer sensitive attributes from those predictions.

- Regularization Techniques: Penalizing biased outcomes during training.
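
To make the regularization idea concrete, here is a minimal sketch, assuming a logistic model with exactly two groups and a demographic-parity style penalty (the squared gap between the groups' mean predicted scores). The function name `fairness_penalized_loss`, the penalty weight `lam`, and the toy data are illustrative assumptions; a real pipeline would minimize this loss with an optimizer rather than only evaluate it.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fairness_penalized_loss(weights, bias, features, labels, groups, lam=1.0):
    """Average log loss plus lam * (gap in mean predicted score between two groups)^2."""
    scores = [
        sigmoid(sum(w * x for w, x in zip(weights, row)) + bias)
        for row in features
    ]
    eps = 1e-12  # guards against log(0)
    log_loss = -sum(
        y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
        for y, p in zip(labels, scores)
    ) / len(labels)

    group_names = sorted(set(groups))
    if len(group_names) != 2:
        raise ValueError("this sketch assumes exactly two groups")
    means = []
    for name in group_names:
        member_scores = [p for p, g in zip(scores, groups) if g == name]
        means.append(sum(member_scores) / len(member_scores))
    gap = means[0] - means[1]
    return log_loss + lam * gap ** 2

# Hypothetical toy data: the single feature is strongly tied to group membership.
X = [[1.0], [1.0], [-1.0], [-1.0]]
y = [1, 0, 1, 0]
g = ["a", "a", "b", "b"]

plain = fairness_penalized_loss([2.0], 0.0, X, y, g, lam=0.0)
penalized = fairness_penalized_loss([2.0], 0.0, X, y, g, lam=5.0)
print(plain, penalized)
```

Raising `lam` makes score gaps between groups more expensive, which pushes training toward parameters that treat the groups more alike, usually at some cost in raw log loss.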

3. Post-processing Techniques

Post-processing techniques adjust model outputs after training to improve fairness by modifying predictions, thresholds, or decision rules, ensuring equitable outcomes across different groups without changing the underlying model structure.

The following techniques refine model predictions to achieve fair outcomes without retraining the model.

- Threshold Adjustment: Changing decision thresholds for different groups.

- Output Calibration: Modifying predictions to ensure fairness metrics are met.

- Reject Option Classification: Revising uncertain predictions to favor fairness.
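
Threshold adjustment can be sketched as follows, assuming the goal is for each group to have roughly the same selection rate. The helper names `group_thresholds` and `predict`, the `target_rate` parameter, and the scores are hypothetical and chosen only for illustration.

```python
def group_thresholds(scores, groups, target_rate):
    """Pick, per group, the score cutoff that selects about target_rate of that group."""
    thresholds = {}
    for name in set(groups):
        member_scores = sorted(
            (s for s, g in zip(scores, groups) if g == name), reverse=True
        )
        # Number of members to select, at least one.
        k = max(1, round(target_rate * len(member_scores)))
        thresholds[name] = member_scores[k - 1]
    return thresholds

def predict(scores, groups, thresholds):
    """Apply each sample's group-specific threshold to its score."""
    return [int(s >= thresholds[g]) for s, g in zip(scores, groups)]

# Hypothetical model scores: group "b" tends to receive lower scores.
scores = [0.9, 0.8, 0.7, 0.4, 0.6, 0.5, 0.3, 0.2]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

shared = [int(s >= 0.65) for s in scores]           # one cutoff for everyone
thresholds = group_thresholds(scores, groups, 0.5)  # one cutoff per group
adjusted = predict(scores, groups, thresholds)
print(shared, thresholds, adjusted)
```

With the shared cutoff, three members of group "a" and none of group "b" are selected; with group-specific cutoffs, two members of each group are selected. Tied scores at the cutoff would select slightly more than the target, which this sketch does not handle.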

Real-World Applications of Bias Mitigation

Here are some key real-world applications where bias mitigation plays a crucial role in ensuring fairness and equality:

1. Recruitment and Hiring

Organizations use AI to screen resumes and recommend candidates. Bias mitigation ensures that underrepresented groups receive fair consideration, reducing gender or racial disparities.

2. Credit and Lending

ML models are used by banks and other financial organizations to approve loans. Bias mitigation helps prevent discrimination against applicants based on socioeconomic background, race, or gender.

3. Healthcare

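AI systems in healthcare, such as diagnostic or risk-prediction models, can perform worse for groups that are underrepresented in their training data. Bias mitigation helps deliver equitable care and more accurate results across patient groups.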

4. Law Enforcement

Predictive tools used in policing can disproportionately target certain groups. Bias mitigation helps support fair and just decisions.

Benefits of Bias Mitigation

Below are the key benefits of implementing bias mitigation in AI systems:

1. Fairness

Bias mitigation reduces discrimination against sensitive groups by correcting unfair patterns, ensuring equitable, inclusive, and unbiased decision-making across systems.

2. Compliance

Organizations meet legal, regulatory, and ethical standards by mitigating bias, reducing risk, ensuring transparency, and aligning with responsible AI practices.

3. Improved Accuracy

Balanced and representative datasets improve model performance, enabling systems to generalize better across diverse populations and produce more accurate results.

4. Enhanced Trust

Fair, unbiased systems earn user trust, which encourages adoption and strengthens relationships, especially when it is clear how decisions are made.

5. Social Impact

Bias mitigation promotes equality in healthcare, hiring, finance, and education, ensuring technology creates fair opportunities and benefits society more inclusively.

Challenges in Bias Mitigation

Below are the key challenges faced when implementing bias mitigation in AI systems:

1. Data Limitations

Access to high-quality, representative datasets remains limited, making it difficult to identify and correct biases effectively in AI systems.

2. Complexity of Fairness Metrics

Fairness metrics such as demographic parity and equal opportunity can conflict with one another, so no single metric suits every application, and choosing how to measure and reduce bias requires judgment.
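
A small example shows how two metrics can disagree. The sketch below, built on made-up labels and predictions, compares the selection-rate gap (a demographic parity measure) with the true-positive-rate gap (an equal opportunity measure). The helper names are assumptions for this illustration.

```python
def selection_rate(preds):
    """Share of samples that receive a positive prediction."""
    return sum(preds) / len(preds)

def true_positive_rate(labels, preds):
    """Share of truly positive samples that receive a positive prediction."""
    positives = [p for y, p in zip(labels, preds) if y == 1]
    return sum(positives) / len(positives)

# Hypothetical data: both groups are selected at the same rate,
# but the groups have different shares of truly positive cases.
labels_a, preds_a = [1, 1, 1, 0], [1, 1, 0, 0]
labels_b, preds_b = [1, 0, 0, 0], [1, 1, 0, 0]

dp_gap = selection_rate(preds_a) - selection_rate(preds_b)
eo_gap = true_positive_rate(labels_a, preds_a) - true_positive_rate(labels_b, preds_b)

print("demographic parity gap:", dp_gap)
print("equal opportunity gap:", round(eo_gap, 3))
```

Here the model looks fair by demographic parity (gap of zero) but not by equal opportunity, because qualified members of group "a" are selected less often than qualified members of group "b".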

3. Dynamic Systems

AI systems continuously evolve, and new biases can emerge, requiring constant monitoring, updates, and adaptive mitigation strategies.

4. Trade-offs

Balancing fairness and model performance can be difficult, as improving fairness may sometimes reduce overall accuracy or efficiency.

5. Human Oversight

Effective bias mitigation requires skilled experts to interpret fairness metrics correctly and ensure ethical decisions are implemented consistently across systems.

Tools and Frameworks for Bias Mitigation

Several tools have emerged to help organizations identify and mitigate bias in AI:

1. IBM AI Fairness 360 (AIF360)

An open-source toolkit providing comprehensive algorithms and metrics to detect, evaluate, and mitigate bias across machine learning models effectively.

2. Microsoft Fairlearn

A toolkit designed to assess fairness issues and apply mitigation techniques, enabling developers to build more equitable machine learning models.

3. Google What-If Tool

An interactive visualization tool that lets users explore what-if scenarios, identify bias, and evaluate model performance with little or no coding.

4. Fairness Indicators

A framework for monitoring fairness metrics like demographic parity and equal opportunity, helping evaluate model performance across different population groups.

Best Practices for Bias Mitigation

Here are the key best practices to effectively reduce and manage bias in AI systems:

1. Diversify Data Sources

Include diverse demographic groups in datasets to ensure balanced representation, reduce bias, and improve fairness in model outcomes.

2. Continuous Monitoring

Regularly audit models and update them to detect, prevent, and address emerging biases throughout the system lifecycle effectively.
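
A recurring audit could be sketched as below, assuming predictions arrive in batches together with group labels. The function names and the `min_ratio` default of 0.8 are assumptions for illustration; the 0.8 value echoes the commonly cited four-fifths rule of thumb and is not a recommendation for any particular system.

```python
def selection_rate_ratio(preds, groups):
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    totals, selected = {}, {}
    for p, g in zip(preds, groups):
        totals[g] = totals.get(g, 0) + 1
        selected[g] = selected.get(g, 0) + p
    rates = [selected[g] / totals[g] for g in totals]
    highest = max(rates)
    # If nobody is selected in any group, treat the batch as being at parity.
    return 1.0 if highest == 0 else min(rates) / highest

def audit_batches(batches, min_ratio=0.8):
    """Return the indices of batches whose ratio falls below min_ratio."""
    return [
        i for i, (preds, groups) in enumerate(batches)
        if selection_rate_ratio(preds, groups) < min_ratio
    ]

# Hypothetical batches of (predictions, group labels) collected over time.
batches = [
    ([1, 0, 1, 0], ["a", "a", "b", "b"]),  # both groups selected at rate 0.5
    ([1, 1, 1, 0], ["a", "a", "b", "b"]),  # group "b" selected half as often
]
flagged = audit_batches(batches)
print("batches needing review:", flagged)
```

In practice the flagged batches would feed a review process rather than trigger automatic changes, since a low ratio can have several causes.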

3. Transparency

Document datasets, model decisions, and fairness metrics clearly to ensure accountability, explainability, and trust in AI systems and processes.

4. Stakeholder Engagement

Engage ethicists, domain experts, and affected communities to ensure inclusive decision-making and to identify potential biases early.

5. Algorithm Selection

Choose models and methods that maintain fairness while still performing well, delivering ethical results without sacrificing much accuracy.

Final Thoughts

Bias mitigation is no longer optional in modern AI development; it is essential for fairness, ethics, compliance, and trust. Addressing bias systematically before, during, and after model training helps reduce unfair results. It also improves model reliability and supports fairness in society. Using the right tools and best practices helps AI create positive and inclusive outcomes instead of reinforcing existing inequalities.

Frequently Asked Questions (FAQs)

Q1. Can bias ever be completely eliminated?

Answer: While it is challenging to remove bias entirely, mitigation strategies can significantly reduce unfair outcomes and improve equity.

Q2. Which stage is most effective for bias mitigation?

Answer: All stages—preprocessing, in-processing, and post-processing—are important. A multi-stage approach usually yields the best results.

Q3. Are bias mitigation tools suitable for all AI models?

Answer: Yes, but effectiveness varies depending on the model type, data complexity, and domain. Tool selection should align with the use case.

Q4. How often should bias mitigation be applied?

Answer: Bias mitigation should be ongoing, with continuous monitoring and periodic audits as the system and data evolve.
