What is Chain-of-Thought (CoT) Prompting?

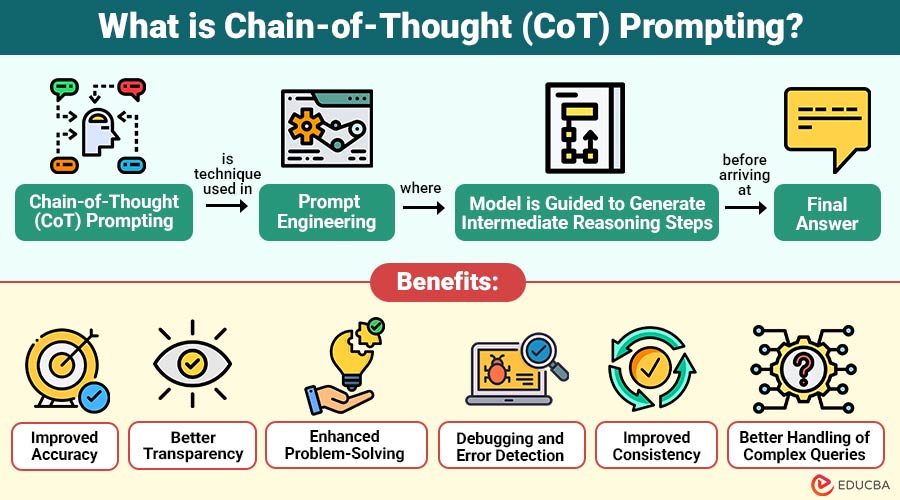

Chain-of-Thought (CoT) prompting is a prompt engineering technique in which the model is guided to generate intermediate reasoning steps before arriving at a final answer.

Instead of asking:

“What is 25 × 12?”

You guide the AI like this:

“Break this down step by step: What is 25 × 12?”

The model then produces:

- 25 × 10 = 250

- 25 × 2 = 50

- Total = 300

This structured reasoning improves both accuracy and transparency.
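The decomposition above can be reproduced and checked in a few lines of Python. This is only a minimal sketch: the helper name `split_multiply` is hypothetical, and it assumes the second factor is split into tens and ones, as in the example.

```python
def split_multiply(a: int, b: int) -> list[str]:
    """Return reasoning steps for a × b, splitting b into tens and ones."""
    tens, ones = divmod(b, 10)
    part_tens = a * tens * 10   # e.g. 25 × 10
    part_ones = a * ones        # e.g. 25 × 2
    return [
        f"{a} × {tens * 10} = {part_tens}",
        f"{a} × {ones} = {part_ones}",
        f"Total = {part_tens + part_ones}",
    ]


steps = split_multiply(25, 12)
for step in steps:
    print(step)
```

Each printed line corresponds to one intermediate step, which is what makes the final total easy to audit.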

Table of Contents:

- Meaning

- Why Does Chain-of-Thought Prompting Matter?

- Working

- Types

- Benefits

- Limitations

- Difference

- Use Cases

- Real-World Example

Key Takeaways:

- Chain-of-Thought prompting improves accuracy by encouraging structured step-by-step reasoning for complex tasks.

- It enhances transparency by clearly revealing the intermediate reasoning steps behind final answers.

- Chain-of-Thought prompting is most useful for complex mathematics, logic, programming, and decision-making scenarios.

- It may increase length and cost, making it less efficient for simple queries.

Why Does Chain-of-Thought Prompting Matter?

Here are the key reasons why Chain-of-Thought prompting is important and widely used:

1. Reasoning Ability

Enhances reasoning ability by encouraging step-by-step thinking, helping models break down problems and reach consistent conclusions.

2. Accuracy in Complex Tasks

Improves accuracy in complex tasks by guiding structured reasoning, reducing guesswork, and enabling reliable, context-aware problem-solving.

3. Explainability of Responses

Makes responses easier to understand by showing each step clearly, so readers can see how the answer was reached.

4. Error Reduction

Reduces mistakes by having the model reason step by step, so problems surface early and unsupported guesses are avoided.

5. Better Problem Decomposition

Helps break complex problems into smaller, manageable parts, making it easier to analyze each step and arrive at solutions.

6. Improved Consistency

Promotes consistent outputs by following structured reasoning paths, reducing response variability, and ensuring more dependable results over time.

How Does Chain-of-Thought Prompting Work?

Here is a step-by-step breakdown of how Chain-of-Thought prompting functions:

1. Breaking Down the Problem

The model is guided to split complex problems into smaller logical parts, making them more manageable to understand and solve.

2. Sequential Reasoning

Each step in the reasoning process builds on the one before it, creating a logical chain that strengthens the solution’s coherence and dependability.

3. Final Answer Generation

After processing all reasoning steps, the model delivers a final answer supported by structured thinking and logical justification.
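The three stages above can be made concrete by splitting a step-by-step response into its reasoning steps and its final answer. The sketch below is illustrative only: the function name `split_reasoning` is hypothetical, and it assumes one step per line with the final answer on the last non-empty line, which real model output does not guarantee.

```python
def split_reasoning(response: str) -> tuple[list[str], str]:
    """Separate a step-by-step response into (reasoning steps, final answer).

    Assumption: one step per line; the last non-empty line is the answer.
    """
    # Drop leading bullet markers and surrounding whitespace from each line.
    lines = [line.strip().lstrip("-• ").strip() for line in response.splitlines()]
    lines = [line for line in lines if line]
    if not lines:
        return [], ""
    return lines[:-1], lines[-1]


response = """
- 25 × 10 = 250
- 25 × 2 = 50
- Total = 300
"""
steps, answer = split_reasoning(response)
print(steps)
print(answer)
```

Keeping the steps separate from the answer makes it possible to inspect the chain without changing how the final result is consumed.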

Types of Chain-of-Thought Prompting

Here are the main types of Chain-of-Thought prompting used to guide structured reasoning:

1. Zero-Shot CoT Prompting

Zero-shot CoT prompting uses no worked examples. It relies on a simple reasoning cue, such as “Let’s think step by step,” to encourage step-by-step thinking in the response.

2. Few-Shot CoT Prompting

Few-shot CoT prompting provides sample problems with step-by-step solutions, guiding the model to consistently follow similar reasoning patterns.

Example:

Q: If 3 apples cost $6, how much does 1 apple cost?

A: 3 apples = $6 → 1 apple = $6 ÷ 3 = $2

3. Automatic CoT Prompting

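Automatic CoT (Auto-CoT) prompting builds the worked examples automatically instead of relying on hand-written ones. The model first generates reasoning chains for a sample of questions, typically using a zero-shot cue, and those chains are then reused as demonstrations for new questions.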
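The difference between the zero-shot and few-shot variants comes down to how the prompt string is assembled. The following sketch shows one possible layout. The function names, the `Q:`/`A:` format, and the wording of the apple question are assumptions made for illustration, and no model is called.

```python
ZERO_SHOT_CUE = "Let's think step by step."


def zero_shot_cot(question: str) -> str:
    """Zero-shot CoT: no examples, only a reasoning cue after the question."""
    return f"Q: {question}\nA: {ZERO_SHOT_CUE}"


def few_shot_cot(examples: list[tuple[str, str]], question: str) -> str:
    """Few-shot CoT: worked (question, reasoning) pairs precede the new question."""
    blocks = [f"Q: {q}\nA: {a}" for q, a in examples]
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


# The question text here is assumed; only the worked answer is taken from the article.
examples = [
    (
        "If 3 apples cost $6, how much does 1 apple cost?",
        "3 apples = $6 → 1 apple = $6 ÷ 3 = $2",
    )
]

zero_shot_prompt = zero_shot_cot("What is 25 × 12?")
few_shot_prompt = few_shot_cot(examples, "If 4 pens cost $8, how much does 1 pen cost?")
print(zero_shot_prompt)
print()
print(few_shot_prompt)
```

An automatic variant would fill `examples` with model-generated reasoning chains rather than hand-written ones.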

Benefits of Chain-of-Thought Prompting

Here are the major benefits that make Chain-of-Thought prompting highly effective:

1. Improved Accuracy

Breaking problems into smaller steps improves accuracy by reducing errors, especially in mathematics, logical reasoning, and multi-step calculations.

2. Better Transparency

Provides greater transparency by showing how answers are derived, enabling users to understand the reasoning process rather than only the final outcome.

3. Enhanced Problem-Solving

Enhances problem-solving capabilities by enabling AI to handle tasks involving deductive reasoning, cause-and-effect analysis, and informed decision-making effectively.

4. Debugging and Error Detection

Supports debugging and error detection by revealing intermediate steps, helping identify mistakes and correct flawed reasoning before final answers are produced.

5. Improved Consistency

Encourages consistent outputs by following structured reasoning paths, reducing random variation, and ensuring more stable, dependable results across tasks.

6. Better Handling of Complex Queries

Enables better handling of complex queries by systematically analyzing multiple variables, relationships, and conditions before arriving at well-reasoned conclusions.
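Because the intermediate steps are visible, simple arithmetic steps can be checked mechanically. The sketch below is one hedged way to do that: the name `find_bad_steps` and the `a op b = c` step format are assumptions, and any step that does not match the pattern is skipped rather than verified.

```python
import re

# Matches simple steps such as "25 × 10 = 250" (whole numbers only).
STEP_PATTERN = re.compile(r"(\d+)\s*([×÷+\-])\s*(\d+)\s*=\s*(\d+)")

OPERATIONS = {
    "×": lambda a, b: a * b,
    "÷": lambda a, b: a / b,
    "+": lambda a, b: a + b,
    "-": lambda a, b: a - b,
}


def find_bad_steps(steps: list[str]) -> list[str]:
    """Return the steps whose stated result does not match the arithmetic."""
    bad = []
    for step in steps:
        match = STEP_PATTERN.search(step)
        if match is None:
            continue  # not a simple "a op b = c" step; left unchecked
        a, op, b, claimed = match.groups()
        if op == "÷" and int(b) == 0:
            bad.append(step)  # division by zero can never be a valid step
            continue
        if OPERATIONS[op](int(a), int(b)) != int(claimed):
            bad.append(step)
    return bad


chain = ["25 × 10 = 250", "25 × 2 = 60", "Total = 310"]
print(find_bad_steps(chain))
```

In this chain only the second step is flagged. The wrong total that follows from it is not caught, because it is not in the `a op b = c` form.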

Limitations of Chain-of-Thought Prompting

Here are key limitations to consider when using chain-of-thought prompting:

1. Increased Response Length

Step-by-step reasoning can make responses longer by adding extra details, which may reduce readability and efficiency when the question is simple or direct.

2. Slower Processing

Additional reasoning steps can increase response time, because the model must generate each intermediate step before producing the final response.

3. Not Always Necessary

Chain-of-thought prompting may be unnecessary for simple queries, where direct answers are sufficient and detailed reasoning adds no real value.

4. Risk of Incorrect Reasoning

If a mistake happens in any step along the way, it can carry forward and affect later steps, which may result in a wrong conclusion even if the overall approach is structured.

5. Higher Computational Cost

Requires more computational resources because of longer reasoning chains, which increases cost and makes it less efficient for large-scale or real-time applications.

6. Over-Reliance on Structure

Following strict step-by-step reasoning can sometimes reduce creativity and intuition, making the model less flexible when dealing with open-ended or unclear problems.
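The length and cost limitations can be illustrated by comparing the size of a direct answer with a step-by-step one. This is a rough sketch only: it counts whitespace-separated words as a crude stand-in for tokens, which is an assumption, since real tokenizers and pricing vary by provider.

```python
def rough_length(text: str) -> int:
    """Crude size estimate: whitespace-separated words, not real tokens."""
    return len(text.split())


direct_answer = "180 km"
cot_answer = (
    "Distance in 1 hour = 60 km\n"
    "Time = 3 hours\n"
    "Total distance = 60 × 3 = 180 km"
)

overhead = rough_length(cot_answer) / rough_length(direct_answer)
print(f"Direct: {rough_length(direct_answer)} words")
print(f"CoT: {rough_length(cot_answer)} words")
print(f"Overhead: {overhead:.1f}x")
```

For a question this simple, the extra output buys little, which is why direct answers are often the better choice for simple queries.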

Difference Between Chain-of-Thought Prompting and Standard Prompting

Here is a clear comparison to understand how they differ:

| Feature | Chain-of-Thought Prompting | Standard Prompting |
| --- | --- | --- |
| Approach | Step-by-step reasoning | Direct answer |
| Accuracy | High (for complex tasks) | Moderate |
| Transparency | High | Low |
| Use Case | Complex problem-solving | Simple queries |
| Error Handling | Easier to trace | Difficult |

Use Cases of Chain-of-Thought Prompting

Here are some common use cases where Chain-of-Thought prompting proves highly effective:

1. Mathematics and Calculations

Helps solve math problems step by step, making answers more accurate in algebra, arithmetic, and word problems.

2. Programming and Debugging

Helps developers analyze code logic step by step, identify bugs efficiently, and understand complex algorithms through structured reasoning.

3. Business Decision-Making

Supports business decisions by analyzing risks, comparing costs and benefits, and projecting possible outcomes in a structured way, so that choices are clear and well-informed.

4. Education and Learning

Enhances learning by providing step-by-step explanations, improving concept clarity, and guiding students through structured problem-solving processes effectively.

5. Logical Reasoning Tasks

Helps solve puzzles and tricky problems step by step, making the reasoning clearer and the answers more accurate.

Real-World Example

Here is a simple example to illustrate the difference between standard prompting and Chain-of-Thought prompting:

Without CoT Prompting:

Question: A train travels 60 km in 1 hour. How far will it travel in 3 hours?

Answer: 180 km

With CoT Prompting:

Question: A train travels 60 km in 1 hour. How far will it travel in 3 hours? Let’s think step by step.

Answer:

- Distance in 1 hour = 60 km

- Time = 3 hours

- Total distance = 60 × 3 = 180 km

Both versions reach the same answer, but the step-by-step version is clearer, more educational, and easier to verify.
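To compare the two answers programmatically, the final value can be pulled out of each one. The sketch below assumes the answer is the last "number km" pair in the text. The helper name `final_distance` is hypothetical, and the pattern is deliberately limited to this example's unit.

```python
import re


def final_distance(answer: str) -> str:
    """Return the last 'number km' pair in an answer, or '' if none is found."""
    matches = re.findall(r"\d+\s*km", answer)
    return matches[-1] if matches else ""


direct_answer = "180 km"
cot_answer = (
    "Distance in 1 hour = 60 km\n"
    "Time = 3 hours\n"
    "Total distance = 60 × 3 = 180 km"
)

same_result = final_distance(direct_answer) == final_distance(cot_answer)
print(final_distance(cot_answer), same_result)
```

The two answers agree on the result. What the step-by-step version adds is the visible path to it.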

Final Thoughts

Chain-of-Thought prompting changes how we interact with AI: instead of asking only for an answer, we ask for the reasoning behind it. Encouraging models to think step by step improves accuracy, transparency, and problem-solving capabilities. While it may not be necessary for every query, it is invaluable for complex tasks that require structured reasoning. As AI continues to integrate into everyday workflows, mastering CoT prompting can give users a powerful edge in extracting more reliable and meaningful outputs.

Frequently Asked Questions (FAQs)

Q1. When should we use CoT prompting?

Answer: Use it for complex problems involving logic, calculations, or multi-step reasoning.

Q2. Does CoT prompting always improve accuracy?

Answer: No. It tends to improve accuracy on complex, multi-step tasks, but it adds little for simple queries, and an error in one step can still lead to a wrong conclusion.

Q3. Is chain-of-thought prompting used in real AI systems?

Answer: Yes, it is widely used in advanced AI models to improve reasoning and explainability.

Q4. Does Chain-of-Thought prompting work for non-technical tasks?

Answer: Yes, it helps in tasks like writing, decision-making, and planning by improving clarity and structure through step-by-step reasoning.
