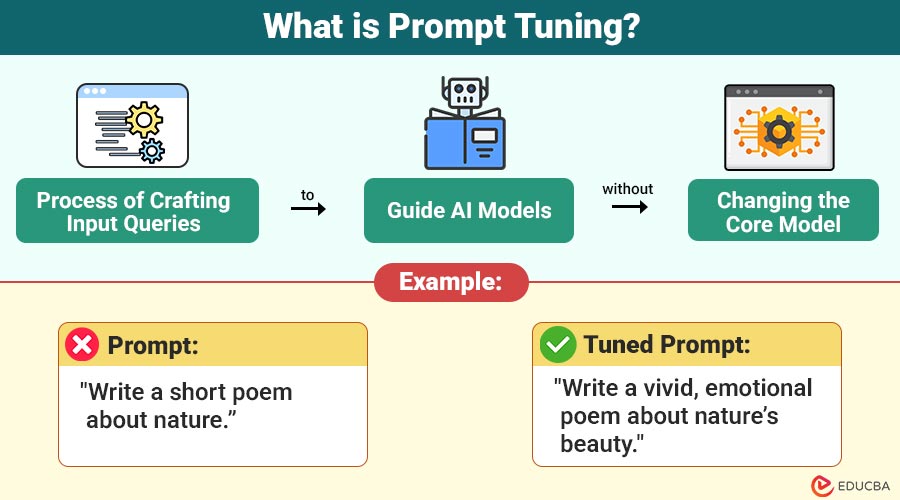

What is Prompt Tuning?

Prompt tuning refers to the process of adjusting the prompts given to an AI model, whether hand-written text or a small set of learned prompt parameters, to guide it toward more accurate, creative, or context-aware outputs. Unlike full fine-tuning, where you retrain the model's weights, prompt tuning optimizes what goes into the model and leaves the model itself unchanged.

In simpler terms, think of a large model as a well-educated person. Instead of retraining them from scratch for every new job, you simply provide them with better instructions for each task.

Example:

- Poor Prompt: “Tell me about AI.”

- Better Prompt: “Explain the concept of artificial intelligence in simple terms with 3 real-life examples.”

The second prompt is more specific, enabling the AI to provide a structured and meaningful answer.

Table of Contents

- Introduction

- Why is Prompt Tuning Important?

- How Does Prompt Tuning Work?

- Real-World Examples

- Types of Prompt Tuning

- Advanced Prompt Tuning Techniques

- Prompt Tuning vs. Fine-Tuning vs. Prompt Engineering

- Common Mistakes

Why is Prompt Tuning Important?

With AI becoming integral to industries like content creation, healthcare, education, and customer support, effective prompt tuning can provide:

- Accuracy: Correctly tuned prompts yield precise, relevant results.

- Consistency: Reduces variations in responses, especially for repetitive tasks.

- Efficiency: Saves time and computational resources by avoiding unnecessary iterations.

- Task-Specific Customization: Helps adapt LLMs to specialized domains such as legal advice, medical summaries, or coding solutions without retraining them.

Without prompt tuning, even advanced models may give generic or off-target responses, making the results unreliable for critical applications.

How Does Prompt Tuning Work?

Here is a simple step-by-step breakdown of how it functions:

- Pre-Trained Model Foundation: Large language models, such as GPT or T5, are trained on vast amounts of text data to learn general language patterns and meanings.

- Prompt Embedding Layer Added: Instead of modifying the model itself, prompt tuning introduces a small set of additional parameters known as soft prompts. These are learned vectors in the model's embedding space that are prepended to the input. Unlike ordinary token embeddings, they do not have to correspond to any real word, and the model learns them during a short training process.

- Task-Specific Learning: Researchers train these soft prompts on a small labeled dataset specific to the target task, such as sentiment analysis, translation, or summarization.

- Fixed Model, Tuned Prompts: The model keeps its main weights frozen and updates only the prompt embeddings. This makes tuning lightweight, efficient, and less resource-intensive than full fine-tuning.

- Inference (Prediction) Phase: During actual use, the model takes both the tuned prompt and the user’s input. The tuned prompt helps guide the model to produce task-optimized responses.
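
The steps above can be sketched in a few lines of plain Python. This is a toy illustration, not how a real LLM is tuned: the "model" here is a hypothetical fixed linear scorer over mean-pooled embeddings, and every name (`FROZEN_WEIGHTS`, `predict`, `tune_soft_prompt`) and every number in the dataset is an assumption made for the example. What it does show is the core idea: the model weights never change, and gradient descent updates only the soft prompt vector that is prepended to each input.

```python
import math

# Frozen "model": a fixed linear scorer over mean-pooled embeddings.
# A toy stand-in for a pre-trained LLM; it is never updated below.
FROZEN_WEIGHTS = (1.0, -1.0)


def predict(soft_prompt, token_embeddings):
    """Prepend the soft prompt vectors to the input and return P(label = 1)."""
    sequence = list(soft_prompt) + list(token_embeddings)
    dim = len(FROZEN_WEIGHTS)
    pooled = [sum(vec[i] for vec in sequence) / len(sequence) for i in range(dim)]
    logit = sum(w * x for w, x in zip(FROZEN_WEIGHTS, pooled))
    return 1.0 / (1.0 + math.exp(-logit))


def loss(soft_prompt, dataset):
    """Mean binary cross-entropy over (token_embeddings, label) pairs."""
    total = 0.0
    for tokens, label in dataset:
        p = predict(soft_prompt, tokens)
        total -= label * math.log(p) + (1 - label) * math.log(1 - p)
    return total / len(dataset)


def tune_soft_prompt(dataset, prompt_len=1, steps=200, lr=1.0):
    """Gradient descent on the soft prompt only; the model stays frozen."""
    dim = len(FROZEN_WEIGHTS)
    soft_prompt = [[0.0] * dim for _ in range(prompt_len)]
    for _ in range(steps):
        grads = [[0.0] * dim for _ in range(prompt_len)]
        for tokens, label in dataset:
            error = predict(soft_prompt, tokens) - label  # d(loss)/d(logit)
            seq_len = prompt_len + len(tokens)
            for vec_grad in grads:
                for i in range(dim):
                    # Each prompt vector contributes 1/seq_len to the pooled input.
                    vec_grad[i] += error * FROZEN_WEIGHTS[i] / seq_len / len(dataset)
        for vec, vec_grad in zip(soft_prompt, grads):
            for i in range(dim):
                vec[i] -= lr * vec_grad[i]
    return soft_prompt


# Tiny labeled dataset (made up for illustration): one "token" per input.
dataset = [([[0.5, 0.0]], 0), ([[2.0, 0.0]], 1)]
untuned = [[0.0, 0.0]]
tuned = tune_soft_prompt(dataset)

print("loss before tuning:", round(loss(untuned, dataset), 3))
print("loss after tuning: ", round(loss(tuned, dataset), 3))
print("frozen weights unchanged:", FROZEN_WEIGHTS)
```

At inference time, the same `predict` call is used with the tuned prompt and a new input, which mirrors the last step in the list: the tuned prompt and the user's input go into the unchanged model together.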

Real-World Examples

Here is how prompt tuning works across different applications:

1. Content Creation

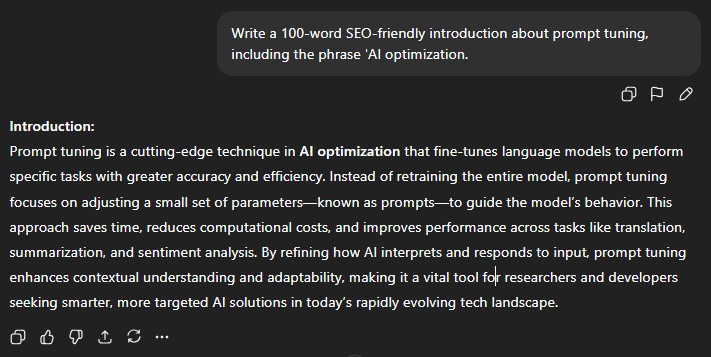

- Prompt: “Write a 100-word SEO-friendly introduction about prompt tuning, including the phrase ‘AI optimization.’”

- Result: A concise, keyword-rich paragraph ready for a blog post.

2. Customer Support

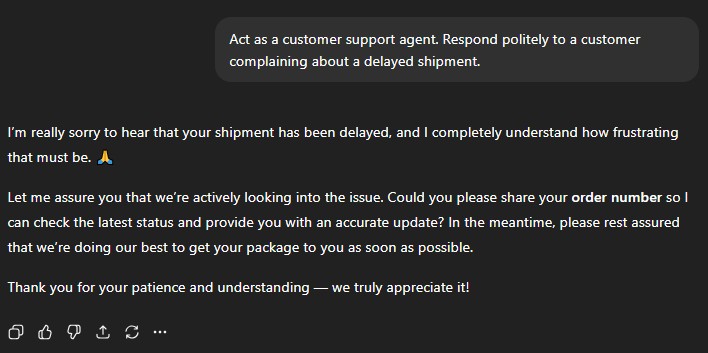

- Prompt: “Act as a customer support agent. Respond politely to a customer complaining about a delayed shipment.”

- Result: AI generates a professional and empathetic response.

3. Education

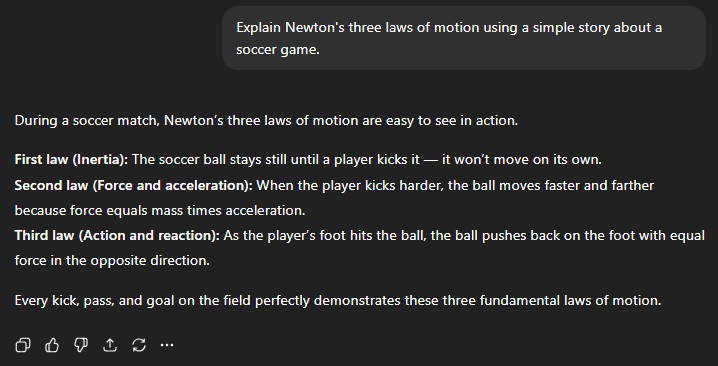

- Prompt: “Explain Newton’s three laws of motion using a simple story about a soccer game.”

- Result: Students receive an engaging, easy-to-understand explanation.

Types of Prompt Tuning

Prompt tuning has evolved into several specialized forms depending on how prompts are structured, trained, or integrated with the model. Let us explore the major types of prompt tuning with simple examples.

1. Manual (Textual) Prompt Tuning

The most basic form involves manually crafting textual prompts to guide the AI’s behavior. It does not require any training, only creativity and a clear idea of what you want.

Example:

❌ “Tell me about dogs.”

✅ “Write a short paragraph describing the behavior and health benefits of owning a Labrador dog.”

Use Case:

Perfect for everyday users, marketers, educators, and writers who interact with models like ChatGPT, Claude, or Gemini.

2. Soft Prompt Tuning

Instead of using human-written text, the model learns virtual (soft) prompt embeddings: continuous vectors that the AI interprets as specific instructions. Only these embeddings are trained, not the entire model.

Example:

You train a few soft prompt embeddings that enable the model to focus on summarizing medical reports.

Now, even if you only type:

“Summarize the following report.”

The tuned model automatically understands that the task involves clinical summaries, not generic text.

Use Case:

Ideal for developers who need domain-specific AI behavior (e.g., legal, financial, or scientific tasks).

3. Instruction Tuning

Instruction tuning teaches a model to follow general instructions more effectively by fine-tuning it on a large dataset of question-answer or task-response pairs.

Example:

If trained on examples like:

“Explain photosynthesis in simple terms.”

“Summarize this passage.”

“Translate this text.”

The model improves its ability to understand human instructions across various tasks.

Use Case:

Used by companies (like OpenAI and Google) to make LLMs more instruction-following and conversational.

Advanced Prompt Tuning Techniques

Here are the most effective strategies used by professionals to enhance model outputs.

1. Few-Shot and One-Shot Prompting

Few-shot and one-shot prompting involve showing the AI a few examples (or even just one) of the desired input-output pattern within the prompt. This helps the model understand the expected response style and structure without full retraining.
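
In code, a few-shot prompt is just a string assembled from example pairs plus the new query. Here is a minimal sketch; the helper name `build_few_shot_prompt` and its bullet-and-arrow layout are assumptions chosen to match the translation example in this section, not a required format.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble an instruction, worked examples, and an unanswered query."""
    lines = [instruction]
    for source, target in examples:
        lines.append(f"- {source} → {target}")
    # The final line leaves the answer open for the model to complete.
    lines.append(f"- {query} → ?")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate the following English sentences to French:",
    [("Hello", "Bonjour"), ("How are you?", "Comment ça va?")],
    "I love reading books",
)
print(prompt)
```

Passing a single pair in `examples` gives a one-shot prompt; the rest of the code stays the same.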

Example:

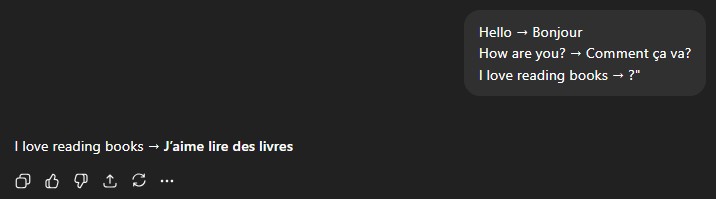

“Translate the following English sentences to French:

- Hello → Bonjour

- How are you? → Comment ça va?

- I love reading books → ?”

Output:

2. Chain-of-Thought Prompting

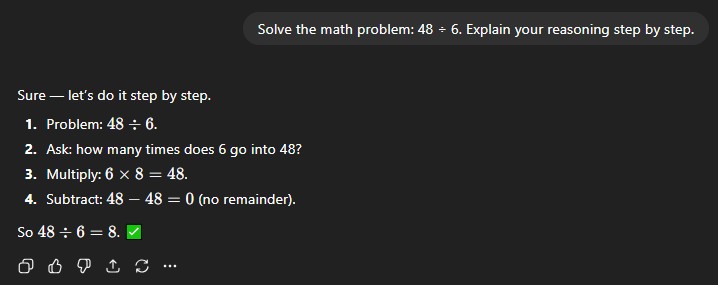

Chain-of-thought prompting asks the model to reason step by step before giving a final answer. This approach enhances logical accuracy and transparency in tasks that require critical thinking and reasoning.
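
If you use chain-of-thought prompts in a script, it helps to ask for the final answer in a fixed place so you can separate it from the reasoning. The sketch below assumes a convention where the model ends with a line starting with `Answer:`. That convention, the helper names, and the sample response (hand-written here, not real model output) are all illustrative assumptions.

```python
import re


def build_cot_prompt(question):
    """Wrap a question with a step-by-step instruction and an answer format."""
    return (
        f"{question}\n"
        "Explain your reasoning step by step, then give the result "
        "on a final line in the form 'Answer: <value>'."
    )


def extract_final_answer(response):
    """Return the text after the last 'Answer:' line, or None if there is none."""
    matches = re.findall(r"^Answer:\s*(.+)$", response, flags=re.MULTILINE)
    return matches[-1].strip() if matches else None


prompt = build_cot_prompt("Solve the math problem: 48 ÷ 6.")

# Hand-written stand-in for a model response, used only to show the parsing.
sample_response = (
    "48 ÷ 6 asks how many groups of 6 fit into 48.\n"
    "6 × 8 = 48, so there are 8 groups.\n"
    "Answer: 8"
)
print(extract_final_answer(sample_response))
```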

Example:

Solve the math problem: 48 ÷ 6. Explain your reasoning step by step.

Output:

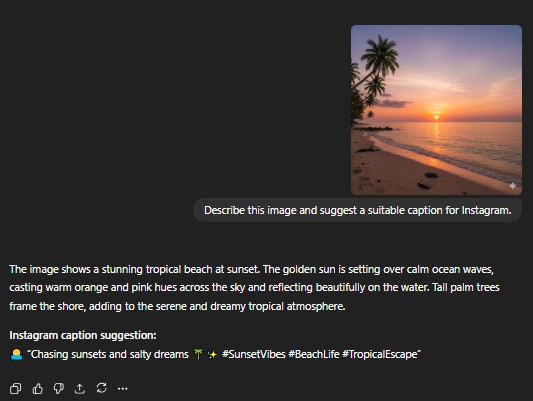

3. Multimodal Prompt Tuning

Multimodal prompt tuning integrates multiple input types, including text, images, and audio, to guide AI behavior. This technique is common in advanced models that support mixed data formats.

Example:

Describe this image and suggest a suitable caption for Instagram.

Output:

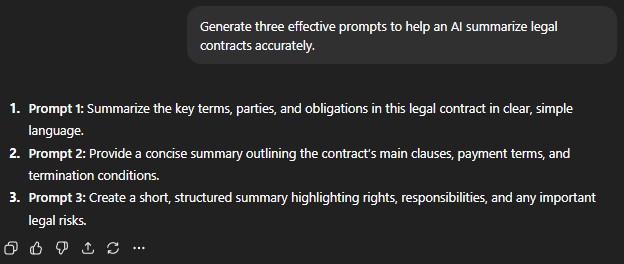

4. Meta-Prompting

Meta-prompting involves designing prompts that instruct the AI on how to construct or evaluate other prompts. Essentially, you are teaching the model to think about prompting itself.

Example:

“Generate three effective prompts to help an AI summarize legal contracts accurately.”


Prompt Tuning vs. Fine-Tuning vs. Prompt Engineering

It is essential to understand the relationship between prompt tuning and other adaptation methods.

| Method | What’s modified | Compute / Storage Cost | Best Use Case |
| --- | --- | --- | --- |
| Fine-Tuning | Model weights (often all or most layers) | High | Customizing a model deeply for one task |
| Prompt Tuning | Prompt parameters only; model weights frozen | Moderate to low | Multi-task flexibility, limited computing |
| Prompt Engineering | No training; manual crafting of input prompt | Minimal | Quick adaptation, user-side usage |

- In fine-tuning, you modify the whole model; this can yield high performance, but it is expensive, and you need to maintain separate models for each task.

- In prompt engineering, you craft special input prompts for an off-the-shelf model: you are not training anything.

- In prompt tuning, you get a “middle ground”: there is still some training, but it is much lighter, and you reuse the same model across tasks.

If you want a scalable and efficient way to adapt a large model to multiple tasks, prompt tuning is often a strong choice.
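
To get a feel for the cost difference, here is a rough back-of-the-envelope calculation. All three numbers (a 1-billion-parameter model, a hidden size of 2048, and a 20-vector soft prompt) are hypothetical values picked for illustration; real models and prompt lengths vary.

```python
def soft_prompt_params(prompt_length, hidden_size):
    """Trainable parameters in a soft prompt: one vector per virtual token."""
    return prompt_length * hidden_size


# Hypothetical figures, chosen only to illustrate the scale difference.
TOTAL_MODEL_PARAMS = 1_000_000_000
HIDDEN_SIZE = 2048
PROMPT_LENGTH = 20

trainable = soft_prompt_params(PROMPT_LENGTH, HIDDEN_SIZE)  # 20 * 2048 = 40,960
fraction = trainable / TOTAL_MODEL_PARAMS

print(f"Full fine-tuning: up to {TOTAL_MODEL_PARAMS:,} trainable parameters per task")
print(f"Prompt tuning:    {trainable:,} trainable parameters per task")
print(f"Share of the model that is trained: {fraction:.4%}")
```

Under these assumptions, each additional task costs one more small set of prompt vectors to store rather than another full copy of the model, which is where the multi-task advantage in the table comes from.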

Common Mistakes

Even experts can slip up. Avoid these pitfalls:

- Vague Prompts: Lead to irrelevant or generic outputs.

- Overloading the Prompt: Packing too much information into one prompt can bury the actual instruction.

- Ignoring Iteration: Not testing multiple prompt variants reduces effectiveness.

- Forgetting Context: Leaving out necessary background information makes responses less relevant.

Final Thoughts

Prompt tuning bridges the gap between a generic AI and one tailored to your specific needs. From beginners using clear instructions and role-playing to advanced users applying prefixes and soft prompts, it is a versatile and essential technique in modern AI workflows.

By following this guide, experimenting with examples, and iteratively refining your prompts, you can unlock the true potential of large language models, achieving outputs that are precise, relevant, and actionable.
