Updated March 15, 2023

Introduction to TensorFlow Layers

The following article provides an outline for TensorFlow Layers. TensorFlow’s tf.layers module provides a high-level API for quickly building a neural network. It includes tools for creating dense (fully connected) and convolutional layers, and for adding activation functions and dropout regularisation.

What are TensorFlow layers?

In TensorFlow 1.x, the tf.layers module offered a Keras-like API for building layers. In TensorFlow 2, it survives only as the legacy tf.compat.v1.layers, and tf.keras.layers is the recommended layers API.

| Operation | Description |
|---|---|
| Add | Computes element-wise addition |
| AvgPool | Performs average pooling on the input |
| BatchToSpaceND | Rearranges data from batch into blocks of spatial data |
| BiasAdd | Adds a bias to a tensor |
| Const | Creates a constant tensor |
| Conv2D | Computes a 2-D convolution |
| Conv2DBackpropInput | Computes the gradients of a convolution with respect to its input |
| Identity | Returns a tensor with the same shape and contents as the input |
| Maximum | Computes the element-wise maximum |
| MaxPool | Performs max pooling on the input |
| Mean | Computes the mean of elements across dimensions of a tensor |
| Mul | Computes element-wise multiplication |
| Neg | Computes the numerical negative value element-wise |
| Pad | Pads a tensor |
| Placeholder | Inserts a placeholder for a tensor that will always be fed |
| Prod | Computes the product of elements across dimensions of a tensor |
| RandomUniform | Outputs random values from a uniform distribution |
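To make a few of these operations concrete, here is a plain-Python sketch (no TensorFlow required) of what Add, Mul, Maximum, Neg, Mean, Prod, and 2×2 MaxPool/AvgPool compute on small lists. The function names are hypothetical helpers for illustration, not TensorFlow APIs; in TensorFlow you would apply operations such as tf.add or tf.reduce_mean to tensors instead.

```python
import math

# Hypothetical helpers, not TensorFlow APIs.
# Element-wise operations: combine two equally sized lists position by position
def add(a, b):
    return [x + y for x, y in zip(a, b)]

def mul(a, b):
    return [x * y for x, y in zip(a, b)]

def maximum(a, b):
    return [max(x, y) for x, y in zip(a, b)]

def neg(a):
    return [-x for x in a]

# Reductions: collapse a list into a single value
def mean(a):
    return sum(a) / len(a)

def prod(a):
    return math.prod(a)  # requires Python 3.8+

# 2x2 pooling with stride 2 over a 2-D grid (a list of rows);
# `reduce` is max for MaxPool or mean for AvgPool
def pool2x2(grid, reduce):
    out = []
    for i in range(0, len(grid) - 1, 2):
        row = []
        for j in range(0, len(grid[0]) - 1, 2):
            window = [grid[i][j], grid[i][j + 1], grid[i + 1][j], grid[i + 1][j + 1]]
            row.append(reduce(window))
        out.append(row)
    return out

grid = [[1, 2, 5, 6],
        [3, 4, 7, 8],
        [9, 10, 13, 14],
        [11, 12, 15, 16]]
print(add([1, 2, 3], [4, 5, 6]))  # [5, 7, 9]
print(maximum([1, 5], [4, 2]))    # [4, 5]
print(pool2x2(grid, max))         # MaxPool: [[4, 8], [12, 16]]
print(pool2x2(grid, mean))        # AvgPool: [[2.5, 6.5], [10.5, 14.5]]
```

Pooling keeps one value per 2×2 window, so a 4×4 grid shrinks to 2×2.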

Different types of layers include:

1. Layer API
2. Custom layers
3. Dense layer (a basic layer)
4. Convolutional
5. Flatten
6. Dropout
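Of the layer types above, Dropout is the least obvious. During training, a Keras Dropout layer with a given `rate` randomly sets that fraction of its inputs to zero and scales the remaining values by 1 / (1 - rate), so the expected sum of the inputs is unchanged; at inference time it passes inputs through untouched. The plain-Python function below is a minimal sketch of that behaviour; the name `dropout` and the `rng` parameter are our own, not part of TensorFlow.

```python
import random

def dropout(inputs, rate, training, rng=random):
    """Hypothetical sketch of inverted dropout (not the Keras implementation)."""
    if not training or rate == 0:
        return list(inputs)  # inference: identity
    if not 0 <= rate < 1:
        raise ValueError("rate must be in [0, 1)")
    scale = 1.0 / (1.0 - rate)  # keeps the expected sum unchanged
    # Each value is dropped independently with probability `rate`
    return [0.0 if rng.random() < rate else x * scale for x in inputs]

rng = random.Random(0)  # seeded so the run is reproducible
print(dropout([1.0, 2.0, 3.0, 4.0], rate=0.5, training=True, rng=rng))
print(dropout([1.0, 2.0, 3.0, 4.0], rate=0.5, training=False))  # [1.0, 2.0, 3.0, 4.0]
```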

Creating models with layers

Keras (tf.keras), a concise, fast, and flexible high-level neural network API, is the recommended way to build TensorFlow models; it is officially part of TensorFlow and fully supported. Model and Layer are the two fundamental concepts in Keras. Layers encapsulate computations and their variables (for example, fully connected layers, convolutional layers, and pooling layers), whereas a model connects the layers and describes how input data flows through the layers and operations to produce the result.

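import tensorflow as tf

class MyModel(tf.keras.Model):
    def __init__(self):
        super().__init__()
        # Create the layers once, as attributes, so Keras tracks their weights
        self.l1 = tf.keras.layers.Dense(16, activation="relu")  # e.g. a built-in layer
        self.l2 = tf.keras.layers.Dense(1)  # could also be a custom layer, e.g. MyCustomLayer()

    def call(self, inputs):
        # Describe how the input flows through the layers
        a = self.l1(inputs)
        result = self.l2(a)
        return result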
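The division of labour between layers and models can be mimicked without TensorFlow. In the plain-Python sketch below, each toy layer creates its variable once in `__init__` and does its computation in `__call__`, while the model only wires the layers together in `call`, mirroring the MyModel skeleton. The classes `Scale`, `Shift`, and `TwoLayerModel` are hypothetical stand-ins, not Keras classes.

```python
class Scale:
    """Hypothetical toy layer whose single variable is a multiplicative weight."""
    def __init__(self, w):
        self.w = w  # the layer's variable, created once

    def __call__(self, inputs):
        return [self.w * x for x in inputs]


class Shift:
    """Hypothetical toy layer whose single variable is an additive bias."""
    def __init__(self, b):
        self.b = b

    def __call__(self, inputs):
        return [x + self.b for x in inputs]


class TwoLayerModel:
    """Connects the layers and defines how data flows through them."""
    def __init__(self):
        self.l1 = Scale(2.0)
        self.l2 = Shift(1.0)

    def call(self, inputs):
        a = self.l1(inputs)
        return self.l2(a)

    def __call__(self, inputs):
        return self.call(inputs)


model = TwoLayerModel()
print(model([1.0, 2.0, 3.0]))  # [3.0, 5.0, 7.0]
```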

An example

To demonstrate the model-building process in TensorFlow 2, we use the simplest multilayer perceptron (MLP), also known as a “multilayer fully connected neural network.” This part takes the following steps:

1. Use tf.keras.datasets to load and preprocess the dataset.
2. Use tf.keras.Model and tf.keras.layers to build the model.
3. Define the training procedure: use tf.keras.losses to compute the loss and tf.keras.optimizers to update the model.
4. Evaluate the model: compute evaluation metrics (e.g., accuracy) with tf.keras.metrics.
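For steps 3 and 4, it helps to know what the usual loss and metric compute for a classifier like this one. The plain-Python sketch below assumes integer class labels and one probability vector per example: sparse categorical cross-entropy averages -log(p[label]) over the batch, and accuracy is the fraction of examples whose highest-probability class equals the label. These roughly mirror tf.keras.losses.SparseCategoricalCrossentropy and tf.keras.metrics.SparseCategoricalAccuracy, but the functions here are our own hypothetical helpers.

```python
import math

# Hypothetical helpers, not the Keras implementations
def sparse_categorical_crossentropy(labels, probs):
    """Mean of -log(probability assigned to the true class)."""
    losses = [-math.log(p[y]) for y, p in zip(labels, probs)]
    return sum(losses) / len(losses)

def argmax(values):
    return max(range(len(values)), key=lambda i: values[i])

def accuracy(labels, probs):
    """Fraction of examples whose most probable class matches the label."""
    correct = sum(1 for y, p in zip(labels, probs) if argmax(p) == y)
    return correct / len(labels)

labels = [0, 2]
probs = [[0.7, 0.2, 0.1],  # predicts class 0 (correct)
         [0.5, 0.3, 0.2]]  # predicts class 0, but the label is 2 (wrong)
print(accuracy(labels, probs))                                   # 0.5
print(round(sparse_categorical_crossentropy(labels, probs), 4))  # 0.9831
```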

MNIST image sample

Here, an MNIST loader is used to read the data. The model takes a vector as input (in this case, a flattened 784-pixel image of a handwritten digit) and produces a 10-dimensional vector giving the probability that the image belongs to each of the ten digit classes.
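The 784 comes from flattening each 28 × 28 MNIST image into a single vector, which is what a Flatten layer does (row by row, for every image in the batch). A plain-Python sketch for one image, using a hypothetical `flatten` helper rather than TensorFlow:

```python
def flatten(image):
    """Hypothetical helper: concatenate the rows of a 2-D image (row-major order)."""
    return [pixel for row in image for pixel in row]

# A dummy 28 x 28 "image" whose pixel values are just their positions
image = [[r * 28 + c for c in range(28)] for r in range(28)]
vector = flatten(image)
print(len(vector))              # 784
print(vector[:3], vector[28])   # [0, 1, 2] 28
```

The second line shows the first row's pixels followed by the start of the second row at index 28.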

import tensorflow as tf

class MLP(tf.keras.Model):
    def __init__(self):
        super().__init__()
        self.flatten = tf.keras.layers.Flatten()  # flattens each image into a 784-element vector
        self.de1 = tf.keras.layers.Dense(units=100, activation=tf.nn.relu)  # hidden layer
        self.de2 = tf.keras.layers.Dense(units=10)  # one output per digit class

    def call(self, inputs):
        a = self.flatten(inputs)
        a = self.de1(a)
        a = self.de2(a)
        result = tf.nn.softmax(a)  # turn the 10 outputs into probabilities
        return result
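The final tf.nn.softmax call turns the ten raw outputs (logits) of `de2` into probabilities that are all positive and sum to 1. Below is a plain-Python sketch of the same formula, softmax(z)_i = exp(z_i) / Σ_j exp(z_j), using the common trick of subtracting the largest logit first for numerical stability (the result is mathematically unchanged). The `softmax` function is our own helper, not the TensorFlow implementation.

```python
import math

def softmax(logits):
    """Hypothetical helper: convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtracting the max avoids overflow in exp()
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

logits = [2.0, 1.0, 0.1] + [0.0] * 7  # ten scores, one per digit class
probs = softmax(logits)
print(round(sum(probs), 6))                    # 1.0
print(max(range(10), key=lambda i: probs[i]))  # 0, the class with the largest logit
```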

Custom layers

Layers are the building blocks of a model. If a model needs a custom computation, we can define a custom layer that interacts seamlessly with the other layers. The following TensorFlow.js (JavaScript) example creates a custom layer that computes the sum of the cubes of its input:

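class cubesum extends tf.layers.Layer {
  constructor() {
    super({});
  }
  // The output is a scalar, so its shape is []
  computeOutputShape(inputShape) { return []; }
  // call() does the computation: cube every element, then sum them
  call(input, kwargs) { return input.cube().sum(); }
  // Every layer needs a unique class name
  getClassName() { return 'cubesum'; }
}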
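For readers working in Python rather than JavaScript, here is what the cubesum layer's call() computes, written as a plain-Python sketch: cube every element, then add everything up, producing a scalar (hence the output shape []). `CubeSum` is a hypothetical class that only imitates the layer's interface; it is not a tf.keras.layers.Layer subclass.

```python
class CubeSum:
    """Hypothetical plain-Python stand-in for the cubesum layer: returns sum(x**3)."""

    def compute_output_shape(self, input_shape):
        return ()  # the result is a scalar

    def __call__(self, inputs):
        # Inputs may be nested lists (like a tensor), so walk them recursively
        def values(x):
            if isinstance(x, (list, tuple)):
                for item in x:
                    yield from values(item)
            else:
                yield x
        return sum(v ** 3 for v in values(inputs))

layer = CubeSum()
print(layer([1, 2, 3]))          # 36  (1 + 8 + 27)
print(layer([[1, -1], [2, 0]]))  # 8   (1 - 1 + 8 + 0)
```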

TensorFlow Layers and Models

With the functional API, models are defined by creating layers and connecting them directly to each other in pairs, then defining a Model that specifies which layers act as its input and output. With the Keras API, we can define the model layer by layer. A layer encapsulates a computation together with its associated weights. Each layer takes a tensor as input, namely the tensor produced by the previous layer. The layer's internal operation then performs a computation on the input tensor and its internal weight tensors, and the resulting tensor is passed to the next layer in the network.
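The tensor-in, weights, tensor-out pattern is easiest to see in a dense layer, which computes output = activation(W·x + b). The sketch below implements that in plain Python for a single input vector and chains two layers so that one layer's output feeds the next. The `dense` and `relu` helpers are hypothetical, and the weight values are arbitrary numbers chosen for illustration.

```python
def relu(v):
    return [max(0.0, x) for x in v]

def dense(x, weights, bias, activation=None):
    """Hypothetical helper: weights[j][i] connects input i to unit j; returns activation(W.x + b)."""
    out = [sum(w_ji * x_i for w_ji, x_i in zip(row, x)) + b_j
           for row, b_j in zip(weights, bias)]
    return activation(out) if activation else out

# Layer 1: 3 inputs -> 2 units with ReLU; layer 2: 2 inputs -> 1 unit, no activation
W1, b1 = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]], [0.0, -1.0]
W2, b2 = [[2.0, 1.0]], [0.5]

x = [1.0, 2.0, 3.0]
h = dense(x, W1, b1, activation=relu)  # layer 1 output feeds layer 2
y = dense(h, W2, b2)
print(h, y)  # [0.0, 2.0] [2.5]
```

Keras stores the kernel transposed relative to this sketch (shape input width × units), but the computation is the same.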

Keras model construction

Besides subclassing tf.keras.Model, there are two common ways to create models with tf.keras:

1. Sequential API

We can use a Sequential model when the model is simple and each layer is connected in order from the input layer to the output layer. Such a model is a plain chain of layers from input to output, with no layer that takes multiple inputs. This approach also requires a single input and a single output.

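from tensorflow.keras.models import Sequential
from tensorflow.keras.layers import Dense

model = Sequential()
# Add layers one at a time, in order (the layer sizes are only examples)
model.add(Dense(8, activation="relu"))
model.add(Dense(1))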

The procedure for Sequential models is straightforward:

- Begin by creating the Sequential model.
- Add layers in the correct order with model.add().
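Conceptually, a Sequential model is an ordered list of layers: add() appends a layer, and calling the model feeds the input through each layer in turn. The toy class below sketches that idea in plain Python; `ToySequential` is hypothetical, and its "layers" are ordinary functions rather than Keras layers.

```python
class ToySequential:
    """Hypothetical ordered container: each layer's output is the next layer's input."""

    def __init__(self, layers=None):
        self.layers = list(layers or [])

    def add(self, layer):
        self.layers.append(layer)

    def __call__(self, x):
        for layer in self.layers:
            x = layer(x)
        return x

model = ToySequential()
model.add(lambda v: [2 * a for a in v])      # first layer: double
model.add(lambda v: [a - 1 for a in v])      # second layer: subtract 1
model.add(lambda v: [max(0, a) for a in v])  # third layer: ReLU
print(model([0, 1, 2]))  # [0, 1, 3]
```

Because the layers run strictly in insertion order, adding them in the wrong order changes the result, which is why the order of add() calls matters.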

2. Functional API

from tensorflow.keras.layers import Input, Dense, Add
from tensorflow.keras.models import Model

# Two inputs, each with 2 features
in1 = Input((2,))
in2 = Input((2,))

# Branch 1 processes in1, branch 2 processes in2
den1 = Dense(3, activation='relu')(in1)
den1 = Dense(3, activation='relu')(den1)
den2 = Dense(3, activation='relu')(in2)
den2 = Dense(3, activation='relu')(den2)

# Merge the branches element-wise, then continue along a shared path
add_l = Add()([den1, den2])
dmain = Dense(3, activation='relu')(add_l)
dmain = Dense(3, activation='relu')(dmain)
dmain = Dense(3, activation='relu')(dmain)
output_layer = Dense(1, activation='sigmoid')(dmain)

# The Model is defined by its inputs and its output
model = Model([in1, in2], output_layer)
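One way to check that the functional model is wired as intended is to count its trainable parameters: a Dense layer with n inputs and m units has n·m weights plus m biases, and Add has none. The plain-Python tally below walks the same layers as the functional code (inputs of width 2, hidden widths of 3); `dense_params` is our own helper. Once the Keras model is built, model.summary() or model.count_params() should report the same total.

```python
def dense_params(n_in, units):
    """Hypothetical helper: weights (n_in * units) plus one bias per unit."""
    return n_in * units + units

branch1 = dense_params(2, 3) + dense_params(3, 3)  # in1 -> den1 -> den1
branch2 = dense_params(2, 3) + dense_params(3, 3)  # in2 -> den2 -> den2
add_l = 0                                          # Add has no weights
main = 3 * dense_params(3, 3)                      # the three dmain layers
output = dense_params(3, 1)                        # sigmoid output unit
total = branch1 + branch2 + add_l + main + output
print(total)  # 82
```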

Use for classification

TensorFlow can be used to build a very simple neural network classifier. Such a model classifies images of handwritten digits from the MNIST dataset, which has ten classes.

TensorFlow Layers Examples

An example is given below:

Example

Importing the libraries

import tensorflow as tf

# Three Dense layers; no input shape is given, so the weights are created on the first call
model = tf.keras.Sequential([
    tf.keras.layers.Dense(3, activation="relu", name="first"),
    tf.keras.layers.Dense(4, activation="tanh", name="second"),
    tf.keras.layers.Dense(3, name="last"),
])

x = tf.random.normal((1, 4))  # one sample with 4 features ("in" is a reserved word in Python)
out = model(x)

# Iterate over the model's layers and print each layer's name and object
for layer in model.layers:
    print(layer.name, layer)

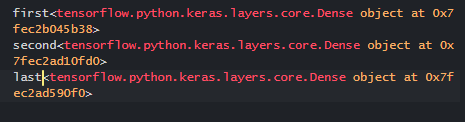

Explanation

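The code above builds a Sequential model of three Dense layers and calls it on a random input of shape (1, 4); this first call creates the layers' weights. Finally, the loop iterates over all the layers in the model and prints each layer's name and object.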
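Keras can only size a layer's kernel once it knows the width of the layer's input, so layers created without an input shape are built lazily on their first call. The plain-Python sketch below imitates this deferred build. `LazyDense` is a hypothetical class, not a Keras API: it allocates a kernel of shape (input width, units) on its first call and, to keep the run deterministic, fills it with zeros instead of random initial values (it also omits the bias).

```python
class LazyDense:
    """Hypothetical toy dense layer that creates its kernel on first use (deferred build)."""

    def __init__(self, units, name):
        self.units = units
        self.name = name
        self.kernel = None  # unknown until the input width is seen

    def build(self, input_width):
        # Zeros instead of a random initializer, to keep the sketch deterministic
        self.kernel = [[0.0] * self.units for _ in range(input_width)]

    def __call__(self, x):
        if self.kernel is None:
            self.build(len(x))
        return [sum(x[i] * self.kernel[i][j] for i in range(len(x)))
                for j in range(self.units)]

layers = [LazyDense(3, "first"), LazyDense(4, "second"), LazyDense(3, "last")]
x = [0.1, 0.2, 0.3, 0.4]  # one input with 4 features, like shape (1, 4)
for layer in layers:
    x = layer(x)
for layer in layers:
    print(layer.name, len(layer.kernel), "x", layer.units)
# first 4 x 3
# second 3 x 4
# last 4 x 3
```

Each kernel's first dimension is the previous layer's number of units, which is exactly the information that is only available once data flows through the model.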


Conclusion

In this article, we looked at the layers TensorFlow provides and used them to build a simple neural network for classification. TensorFlow includes a Model class that we can use to assemble the layers we create into a model.
