What you'll get

- 2h 41m

- 13 Videos

- Course Level - All Levels

- Course Completion Certificates

- One-Year Access

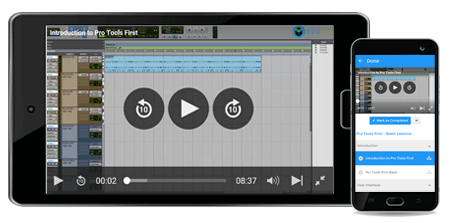

- Mobile App Access

Curriculum:

About Big Data and Hadoop:

- Big data is a term for any collection of data sets so large and complex that it becomes difficult to handle using database management tools or regular data processing applications.

- Storing such data is a very difficult task. The challenges include capturing the data, searching for particular data in a database, sharing and transferring such complex data, and analyzing and visualizing it.

- Running additional analysis on such a single, complex data set is also very difficult.

- Big data is difficult to work with using relational database management systems and typical statistics and visualization packages; it instead calls for software running in parallel on hundreds or even thousands of servers.

- What counts as big data depends on the size of the organization managing the data set and on the capabilities of the applications it uses to analyze and process that data. For some organizations, handling even a thousand gigabytes of data is a very complex task for their data management systems.

- Big data has increased the demand for information management systems. Many software companies have spent heavily on specialized data management and analytics systems.

- As a result, Hadoop is now in high demand at software companies and other organizations that deal with big data.

- Hadoop has become a reference architecture for big data analysis. It provides a highly flexible analytical platform for processing large, complex volumes of structured and unstructured data.

- It can handle petabytes of data stored across hundreds or thousands of nodes and physical storage servers.

- Hadoop is an open-source project of the Apache Software Foundation, with origins dating back to 2005. To handle big data, it uses the MapReduce concept.

- MapReduce takes the input data and breaks it up for processing across various nodes within the Hadoop instance. These nodes are called worker nodes, and they may break the data down further for the next stage of processing.

- The processed data is then collected in the reduce step and returned as the answer to the original query.

- Hadoop is built for data analysis at massive scale. It is implemented on a scale-out architecture of low-cost physical servers, across which the data processing is distributed during map operations.

- The logic behind the Hadoop system is to reduce execution time by processing many parts of a query in parallel.

- This contrasts with legacy structured database design, which scales up within a single server by using a faster processor, more memory, and faster storage.

- To implement the data storage layer, Hadoop uses a feature called HDFS, which stands for Hadoop Distributed File System. HDFS is not a file system in the usual sense, and it is not normally mounted for a user to browse, which can make the concept hard to grasp; it may be better to think of it simply as the Hadoop data store.

- An HDFS instance has two kinds of components: the NameNode, which maintains metadata to keep track of where physical data is placed across the Hadoop instance, and DataNodes, which store the data.

- You can run multiple logical DataNodes on a single server, but a typical deployment runs only one per server across an instance. HDFS supports a single file system namespace, which stores data in a traditional hierarchical format of directories and files. Across an instance, data is divided into blocks (64 MB by default in early Hadoop releases) that are replicated three times across the cluster to provide resilience. In a very large Hadoop cluster, component or even entire server failures will inevitably occur, so duplicating data across many servers is a key design requirement of HDFS.
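
To make the HDFS storage idea more concrete, here is a minimal Python sketch of splitting a file into fixed-size blocks and recording three replicas per block, roughly the kind of metadata a NameNode keeps. It is a toy model, not Hadoop code: the function names, the DataNode names (`dn1` to `dn4`), and the round-robin placement are assumptions made for illustration, and real HDFS uses a more sophisticated placement policy.

```python
BLOCK_SIZE_MB = 64   # block size mentioned in the text
REPLICATION = 3      # each block is stored three times


def split_into_blocks(file_size_mb, block_size_mb=BLOCK_SIZE_MB):
    """Return the size in MB of each block a file would be divided into."""
    full_blocks, remainder = divmod(file_size_mb, block_size_mb)
    sizes = [block_size_mb] * full_blocks
    if remainder:
        sizes.append(remainder)  # the last block may be smaller
    return sizes


def place_replicas(num_blocks, datanodes, replication=REPLICATION):
    """Toy NameNode metadata: map each block index to distinct DataNodes.

    Placement is simple round-robin (an assumption for this sketch).
    """
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")
    return {
        block: [datanodes[(block + r) % len(datanodes)] for r in range(replication)]
        for block in range(num_blocks)
    }


def readable_after_failure(placement, failed_nodes):
    """True if every block still has at least one replica on a live node."""
    return all(
        any(node not in failed_nodes for node in nodes)
        for nodes in placement.values()
    )


blocks = split_into_blocks(200)  # a hypothetical 200 MB file
placement = place_replicas(len(blocks), ["dn1", "dn2", "dn3", "dn4"])
print(blocks)
print(placement)
print(readable_after_failure(placement, {"dn1", "dn2"}))
```

In this toy layout, the file stays readable when any two of the four nodes fail, because every block has three replicas on distinct nodes. That is the reason the text calls duplication a key design requirement.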

Why should you take Big Data and Hadoop training?

- This online Big Data and Hadoop training course teaches you the essentials of the Hadoop framework.

- Hadoop is a software framework that allows for the distributed processing of big data sets across clusters of computers using simple programming models.

- It is designed to scale up from single servers to thousands of machines, each offering local computation and storage. Rather than relying on hardware to deliver high availability, the library is designed to detect and handle failures at the application layer, thereby delivering a highly available service on top of a cluster of computers.

- Map/Reduce is a programming paradigm, made popular by Google, in which a task is divided into small chunks and distributed to a large number of nodes for processing (map), and the results are then combined into the final answer (reduce). Google and Yahoo use this technology for their search engines, among other things.

- Hadoop is a generic framework for implementing this kind of analysis scheme. It is popular mostly because it provides features such as fault tolerance and lets you bring together any kind of hardware to do the processing. It also scales extremely well, provided your problem fits the paradigm.

- This course presents an integrated approach to Big Data, starting from the fundamentals of big data and Hadoop.
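
The map and reduce steps described in this section can be sketched in plain Python using the classic word-count example. This is only an in-memory illustration of the paradigm, not the Hadoop API: the function names are hypothetical, and the "nodes" are simulated by looping over chunks of text in a single process.

```python
from collections import defaultdict


def map_phase(chunk):
    """Map: turn one chunk of text into (word, 1) pairs."""
    return [(word.lower(), 1) for word in chunk.split()]


def shuffle(mapped_pairs):
    """Group all values by key so each word's counts end up together."""
    grouped = defaultdict(list)
    for key, value in mapped_pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped):
    """Reduce: combine the grouped values into one total per word."""
    return {word: sum(counts) for word, counts in grouped.items()}


def word_count(chunks):
    mapped = []
    # In a real cluster each chunk would be handled by a different worker
    # node in parallel; here the chunks are processed one after another.
    for chunk in chunks:
        mapped.extend(map_phase(chunk))
    return reduce_phase(shuffle(mapped))


counts = word_count(["big data is big", "hadoop handles big data"])
print(counts)
```

Because each chunk is mapped independently, the map step can be spread across many machines, and only the grouped intermediate results need to be brought together for the reduce step.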

Course Objective:

- The course emphasizes that we study Big Data to gain insight that helps people throughout the enterprise run the business better and maintain its databases properly.

- Rather than focusing on the implementation of a single open-source system such as Hadoop, the course recommends that Big Data be processed on a platform that can handle the variety and volume of data using a family of components, which in turn require integration and data governance.

- Big Data is NoHadoop (“not only Hadoop”) as well as NoSQL (“not only SQL”).

Target Audience for Big Data and Hadoop Training:

- Those who want to build a career in data management systems.

- Anyone who wants to learn about the Hadoop framework.

- Anyone with a data analysis background.

- Students who want to learn the role of big data in the corporate world.

Prerequisites for Hadoop Training:

- Basic knowledge of Big data concepts

- Passion to learn

- A computer with internet connection
