Updated March 14, 2023

Introduction to Avro converter

The Avro converter allows us to convert Apache Avro objects into a preferred data format such as JSON, XML, or CSV. It was created to support data conversion and serialization based on Apache Avro. It is an excellent option for those using an event-driven architecture on a distributed platform, because Avro supports compression techniques such as deflate and its schemas can be managed with a schema registry. It can also be combined with Spring MVC, which helps reuse Avro objects from existing event-based systems by exposing them through a RESTful interface.

Overview of Avro converter

The Avro converter converts data from one format into another: it transforms Avro objects into a specified data format and can handle data written with Apache Avro. To use the Avro converter, specify type = “avro” in the converter definition.

Converters are kept separate from connectors so that they can be reused naturally across connectors. A converter can accept input at the source and produce output in a variety of formats at the destination. For example, in a source connector the converter can take input from JDBC, transform it to Avro, and send it through Kafka; in the same way, at the sink a converter can take Avro input from Kafka and send it to OCS, so the data flows from JDBC to OCS. The Avro converter is the most commonly used and recommended converter because the Avro format is considered more robust.

How to use Avro converter?

Kafka Connect is an extensible and reliable tool for streaming data between Apache Kafka and other systems. When creating a new dedicated cluster, we can choose to include Kafka Connect as an optional component. We often need to move data between Kafka and commonly used systems, and Kafka Connect provides prebuilt connectors for many of them. There are two types of connectors: a source connector ingests data from a source system and persists it into topics, and a sink connector delivers data from topics to downstream systems.

Kafka Connect and Schema Registry work together to record schema information from connectors. With that information, a sink connector can understand the structure of the data it receives, which enables further capabilities.
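Here is a hedged illustration of how a converter and Schema Registry cooperate. Confluent's Avro converter (‘io.confluent.connect.avro.AvroConverter’) registers each schema under a subject. By default the subject is the topic name followed by ‘-key’ or ‘-value’. The converter also prefixes every serialized message with a magic byte 0 and a 4-byte schema ID, so the sink side can look the schema up again. The stdlib-only Java sketch below reproduces only that framing. The class and method names are hypothetical, and it does not contact a registry.

```java
import java.nio.ByteBuffer;

// Hypothetical helper reproducing the framing used with Schema Registry:
// magic byte 0, a 4-byte big-endian schema ID, then the Avro-encoded payload.
class WireFormatSketch {

    static final byte MAGIC_BYTE = 0;

    // Default TopicNameStrategy: subject = topic name + "-key" or "-value".
    static String subjectFor(String topic, boolean isKey) {
        return topic + (isKey ? "-key" : "-value");
    }

    // Prepends the magic byte and schema ID to an Avro payload.
    static byte[] frame(int schemaId, byte[] avroPayload) {
        ByteBuffer buf = ByteBuffer.allocate(1 + 4 + avroPayload.length); // big-endian by default
        buf.put(MAGIC_BYTE).putInt(schemaId).put(avroPayload);
        return buf.array();
    }

    // Reads the schema ID back, as a sink-side converter would before fetching the schema.
    static int schemaIdOf(byte[] framed) {
        ByteBuffer buf = ByteBuffer.wrap(framed);
        if (buf.get() != MAGIC_BYTE) {
            throw new IllegalArgumentException("unknown magic byte");
        }
        return buf.getInt();
    }
}
```

Only the small ID travels with each message. The full schema is stored once in the registry rather than repeated in every record.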

Let us see how to use the Avro converter in Kafka Connect with Schema Registry:

• To do this, define the ‘key.converter’ or ‘value.converter’ property in the connector configuration or in the Connect worker configuration. Additional configuration is also needed, as shown below:

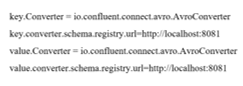

- We have to set avro converter properties as given below,

- Below is the extra configuration that can be done with an avro converter,

![]()

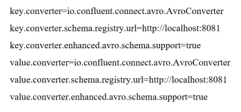

- When these extra properties are set in the worker or connector configuration, they must carry the prefix ‘key.converter.’ or ‘value.converter.’, for example ‘value.converter.schema.registry.url’.

- When we are utilizing primary authentication then we need to add the below properties,

![]()

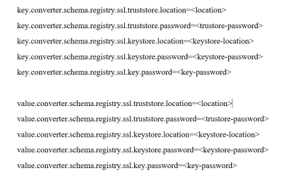

- When Avro is used in a secured (SSL) environment, add the Schema Registry SSL settings with the prefix ‘value.converter.schema.registry.ssl.’ (or ‘key.converter.schema.registry.ssl.’ for the key converter).
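The configuration steps above can be sketched in Java with ‘java.util.Properties’. This is a minimal sketch that assumes Confluent's ‘io.confluent.connect.avro.AvroConverter’. The registry URL, credentials and truststore path are placeholders. The property names follow Confluent's documentation as we understand it, so check them against your version. The helper ‘withPrefixStripped’ is hypothetical. It imitates how the Connect worker passes the converter only the properties under its prefix, with the prefix removed.

```java
import java.util.Map;
import java.util.Properties;
import java.util.TreeMap;

// Sketch of worker/connector converter settings; all values are placeholders.
class AvroConverterConfigSketch {

    static Properties connectProperties() {
        Properties p = new Properties();
        String converter = "io.confluent.connect.avro.AvroConverter";
        String registry = "https://schema-registry.example.com:8081"; // placeholder URL
        for (String side : new String[] {"key.converter", "value.converter"}) {
            p.setProperty(side, converter);
            p.setProperty(side + ".schema.registry.url", registry);
            // Basic authentication against Schema Registry (placeholder credentials).
            p.setProperty(side + ".basic.auth.credentials.source", "USER_INFO");
            p.setProperty(side + ".basic.auth.user.info", "user:secret");
            // TLS settings use the schema.registry.ssl. prefix after the converter prefix.
            p.setProperty(side + ".schema.registry.ssl.truststore.location", "/path/to/truststore.jks");
            p.setProperty(side + ".schema.registry.ssl.truststore.password", "changeit");
        }
        return p;
    }

    // Keeps only keys under the prefix and strips it, so the converter sees
    // e.g. "schema.registry.url" instead of "value.converter.schema.registry.url".
    static Map<String, String> withPrefixStripped(Properties p, String prefix) {
        Map<String, String> out = new TreeMap<>();
        for (String key : p.stringPropertyNames()) {
            if (key.startsWith(prefix)) {
                out.put(key.substring(prefix.length()), p.getProperty(key));
            }
        }
        return out;
    }
}
```

In a real deployment these lines would normally go in the worker or connector properties file. Building them in code makes the prefix rule easy to see.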

Classes of Avro converter

1. XmlToAvroConverter:

This converter class transforms an XML schema and its data into the equivalent Avro format, which lets us transmit and store the same data in Avro form. The process can also be reversed to recover the original XML data. ‘XmlToAvroConverter’ uses Avro's ‘ReflectData’ class to create the schema from a class on the classpath. The class can be used as follows:

‘new XmlToAvroConverter<DummyObject>().convert(xmlString, DummyObject.class)’
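Avro's own ‘ReflectData.get().getSchema(DummyObject.class)’ derives a schema from a class by reflection. The simplified, stdlib-only sketch below shows the idea without the Avro library. It walks the components of a Java record (Java 16 or later) and emits an Avro record schema as JSON. ‘DummyObject’ and its fields are hypothetical, and only a few primitive types are mapped. Unlike ‘ReflectData’, the sketch adds no namespace.

```java
import java.lang.reflect.RecordComponent;
import java.util.Map;
import java.util.StringJoiner;

// Simplified imitation of reflection-based schema derivation; not Avro's ReflectData.
class ReflectSchemaSketch {

    // Hypothetical example type.
    record DummyObject(int id, String name, boolean active) {}

    private static final Map<Class<?>, String> AVRO_TYPES = Map.of(
            int.class, "int",
            long.class, "long",
            float.class, "float",
            double.class, "double",
            boolean.class, "boolean",
            String.class, "string");

    static String schemaFor(Class<? extends Record> type) {
        StringJoiner fields = new StringJoiner(",");
        // getRecordComponents() returns the components in declaration order.
        for (RecordComponent c : type.getRecordComponents()) {
            String avroType = AVRO_TYPES.get(c.getType());
            if (avroType == null) {
                throw new IllegalArgumentException("unsupported field type: " + c.getType());
            }
            fields.add("{\"name\":\"" + c.getName() + "\",\"type\":\"" + avroType + "\"}");
        }
        return "{\"type\":\"record\",\"name\":\"" + type.getSimpleName()
                + "\",\"fields\":[" + fields + "]}";
    }
}
```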

2. JsonToAvroConverter:

This converter is used to convert a single instance of the model, for example when building a REST API. The conversion can also be reversed, from Avro to JSON. It is used as follows:

‘new JsonToAvroConverter<DummyObject>().convert(jsonString, DummyObject.class)’

We can use the below code for validating this conversion,
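The following hedged sketch shows one check such validation could perform. It tests whether a JSON object, already parsed into a ‘Map’ by some JSON library, supplies every field of a simple Avro schema with a compatible value. The schema is represented as a hypothetical map from field name to Avro primitive type name. Unions, nested records and default values are out of scope.

```java
import java.util.Map;

// Hypothetical pre-check for a JSON-to-Avro conversion: the parsed JSON object must
// supply every schema field with a value whose Java type fits the Avro primitive type.
class JsonAgainstSchemaSketch {

    // schemaFields maps field name -> Avro primitive type name (e.g. "int", "string").
    static boolean matches(Map<String, String> schemaFields, Map<String, Object> json) {
        for (Map.Entry<String, String> field : schemaFields.entrySet()) {
            Object value = json.get(field.getKey());
            // A missing field or JSON null fails, since these fields are not nullable unions.
            if (value == null || !compatible(field.getValue(), value)) {
                return false;
            }
        }
        return true;
    }

    private static boolean compatible(String avroType, Object value) {
        switch (avroType) {
            case "int":
                return value instanceof Integer;
            case "long":
                return value instanceof Integer || value instanceof Long;
            case "float":
            case "double":
                return value instanceof Number;
            case "boolean":
                return value instanceof Boolean;
            case "string":
                return value instanceof String;
            default:
                return false; // types outside this sketch are rejected
        }
    }
}
```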

3. AvroToXmlConverter:

This converter uses the Avro schema to produce structured XML data, and the reverse is also possible: the XmlToAvro conversion creates an Avro schema from an existing Java class. The syntax is:

‘new AvroToXmlConverter<DummyObject>().convert(DummyObject);’

4. AvroToJsonConverter:

For this type of conversion, we can use ‘ConvertRecord’ or ‘ConvertAvroToJSON’ (processors in Apache NiFi) to convert Avro data to JSON. If the Avro file does not have an embedded schema, we have to provide one to ‘ConvertRecord’ or ‘ConvertAvroToJSON’. For class-based conversion, use the following syntax:

‘new AvroToJsonConverter<DummyObject>().convert(DummyObject);’
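The minimal sketch below makes the output of an Avro-to-JSON conversion concrete. It renders an already decoded Avro record as a JSON object. The record is given as field names mapped to values, in schema order. The sketch handles only null, boolean, numeric and string values. Avro's JSON encoding also wraps non-null union values in an object keyed by the branch type, which this sketch ignores. The class name is hypothetical.

```java
import java.util.Map;
import java.util.StringJoiner;

// Renders a decoded record (field name -> value, in schema order) as a JSON object.
class AvroRecordToJsonSketch {

    static String toJson(Map<String, Object> record) {
        StringJoiner out = new StringJoiner(",", "{", "}");
        for (Map.Entry<String, Object> e : record.entrySet()) {
            out.add(quote(e.getKey()) + ":" + value(e.getValue()));
        }
        return out.toString();
    }

    private static String value(Object v) {
        if (v == null) {
            return "null";
        }
        if (v instanceof Boolean || v instanceof Number) {
            return v.toString();
        }
        // CharSequence also covers Avro's Utf8 string type.
        if (v instanceof CharSequence) {
            return quote(v.toString());
        }
        throw new IllegalArgumentException("unsupported value type: " + v.getClass());
    }

    // Escapes quotes, backslashes and control characters as JSON requires.
    private static String quote(String s) {
        StringBuilder b = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            if (c == '"' || c == '\\') {
                b.append('\\').append(c);
            } else if (c < 0x20) {
                b.append(String.format("\\u%04x", (int) c));
            } else {
                b.append(c);
            }
        }
        return b.append('"').toString();
    }
}
```

Passing a ‘LinkedHashMap’ keeps the fields in the order they were inserted, which should match the schema order.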

We can validate the conversion by using the below code,

![]()

-

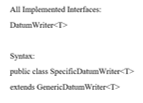

SpecificDatum writer class:

The ‘SpecificDatumWriter’ class, in the ‘org.apache.avro.specific’ package, converts Java objects into Avro's serialized in-memory format. It implements the ‘DatumWriter’ interface, which acts as the converter. One of its constructors is ‘SpecificDatumWriter(Schema schema)’. Its methods include ‘SpecificData getSpecificData()’, which returns the ‘SpecificData’ implementation used by the writer.
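In Avro's API, a ‘SpecificDatumWriter’ is typically paired with an encoder from ‘EncoderFactory.get().binaryEncoder(out, null)’. Calling ‘writer.write(object, encoder)’ and then ‘encoder.flush()’ produces the bytes. The stdlib-only sketch below shows what that writing step amounts to. It is not Avro's implementation. It uses a hypothetical record with the fields ‘id’ (long) and ‘name’ (string) and follows the binary encoding in the Avro specification:

- Longs are written as zig-zag variable-length integers.
- Strings are written as a length followed by UTF-8 bytes.
- Record fields are written in schema order, with no names or tags.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

// Sketch of Avro binary encoding for a record {id: long, name: string}.
class AvroBinarySketch {

    // Zig-zag maps signed to unsigned (0->0, -1->1, 1->2, ...), then emits 7-bit groups,
    // setting the high bit on every byte except the last.
    static void writeLong(ByteArrayOutputStream out, long n) {
        long z = (n << 1) ^ (n >> 63);
        while ((z & ~0x7FL) != 0) {
            out.write((int) ((z & 0x7F) | 0x80));
            z >>>= 7;
        }
        out.write((int) z);
    }

    // A string is its UTF-8 byte length (as a long) followed by the bytes.
    static void writeString(ByteArrayOutputStream out, String s) {
        byte[] bytes = s.getBytes(StandardCharsets.UTF_8);
        writeLong(out, bytes.length);
        out.write(bytes, 0, bytes.length);
    }

    // Record fields are concatenated in schema order.
    static byte[] writeRecord(long id, String name) {
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        writeLong(out, id);
        writeString(out, name);
        return out.toByteArray();
    }
}
```

For example, a record with ‘id’ 1 and ‘name’ "ab" encodes to the four bytes 02 04 61 62.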

Conclusion

In this article, we saw that the Avro converter is used to convert Avro objects into a preferred data format, and that it also helps reuse Avro objects from existing event-based systems. This article should help in understanding the concept of the Avro converter.
