Updated March 20, 2023

Introduction to Hadoop YARN Architecture

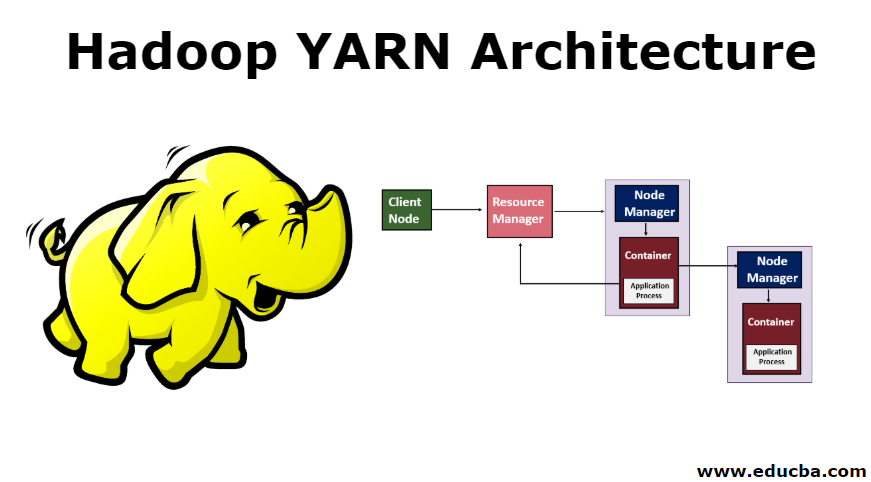

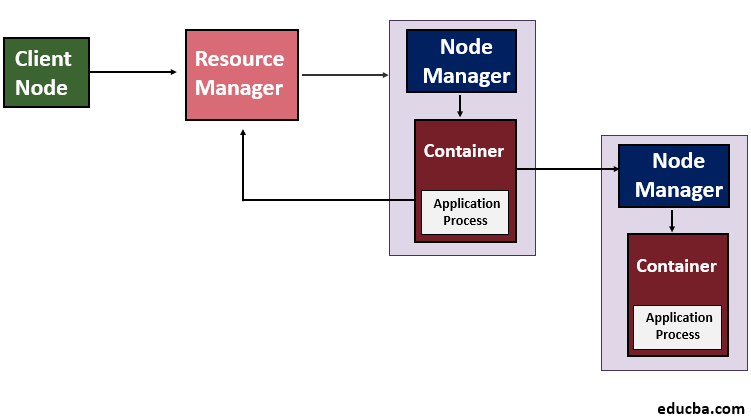

Hadoop YARN architecture is the reference architecture for resource management across Hadoop framework components. YARN, which stands for Yet Another Resource Negotiator, is the cluster management component introduced in Hadoop 2.0. It consists of the Resource Manager, Node Managers, Containers, and Application Masters. The Resource Manager is the central component; it arbitrates cluster resources among applications and schedules their jobs. A Node Manager runs on each worker node, where it launches and monitors containers. A Container is an allocation of resources, such as CPU and RAM, on a single node that YARN grants to an application. An Application Master monitors and manages the lifecycle of a single application in the Hadoop cluster.

Explain Hadoop YARN Architecture with Diagram

[Architecture of Hadoop YARN]

YARN introduced the concepts of a Resource Manager and an Application Master in Hadoop 2.0. The Resource Manager tracks resource usage across the Hadoop cluster, whereas the Application Master supervises the life cycle of an application running on the cluster. In other words, the Application Master negotiates with the Resource Manager for cluster resources. Those resources are granted in the form of containers, each of which has a fixed memory limit. The containers run the application-specific processes and are supervised by the Node Managers running on the nodes of the cluster. This ensures that an application uses no more than the resources allocated to it.
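The enforcement idea in the last sentence can be sketched in a few lines of Python. This is an illustrative model only, not YARN code: the `Container` class, the `check_container` function, and the state strings are hypothetical names chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class Container:
    """Hypothetical stand-in for a YARN container allocation."""
    container_id: str
    memory_limit_mb: int  # memory granted by the Resource Manager


def check_container(container: Container, used_mb: int) -> str:
    """Mimic a Node Manager check: stop a process that exceeds its allocation."""
    if used_mb > container.memory_limit_mb:
        return "KILLED"
    return "RUNNING"


container = Container("container_01", memory_limit_mb=1024)
print(check_container(container, used_mb=900))   # within the allocation
print(check_container(container, used_mb=2048))  # over the allocation
```

The point of the sketch is only that the limit belongs to the container, and the check happens on the node where the container runs.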

Various Components of YARN

Below are the various components of YARN.

1. Resource Manager

YARN works through a Resource Manager, of which there is one per cluster, and Node Managers, one of which runs on each worker node. The Resource Manager manages the resources used across the cluster, and the Node Manager launches and monitors the containers. The Scheduler and the Application Manager are the two components of the Resource Manager.

- Scheduler: Scheduling is performed based on the resource requirements of the applications. YARN provides several schedulers to choose from, including the Fair Scheduler and the Capacity Scheduler. The Scheduler does not guarantee that tasks which fail because of a hardware or application failure are restarted. It allocates resources to the running applications subject to capacity and queue constraints.

- Application Manager: It accepts job submissions, manages the running of the Application Masters in the cluster, and helps restart the Application Master container if it fails.
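To make "capacity and queue constraints" more concrete, here is a minimal Python sketch of queue-based allocation. It is a toy model under stated assumptions: each queue gets a fixed percentage of cluster memory, and a request is refused once the queue is full. The `QueueScheduler` class and the queue names are hypothetical, and the real Capacity Scheduler has many features (such as letting queues borrow idle capacity) that are left out here.

```python
from typing import Dict


class QueueScheduler:
    """Toy scheduler: each queue owns a fixed share of cluster memory."""

    def __init__(self, cluster_memory_mb: int, queue_percent: Dict[str, int]):
        # Integer percentages keep the capacity arithmetic exact.
        self.capacity_mb = {
            queue: cluster_memory_mb * percent // 100
            for queue, percent in queue_percent.items()
        }
        self.used_mb = {queue: 0 for queue in queue_percent}

    def allocate(self, queue: str, memory_mb: int) -> bool:
        """Grant the request only if the queue stays within its capacity."""
        if self.used_mb[queue] + memory_mb > self.capacity_mb[queue]:
            return False
        self.used_mb[queue] += memory_mb
        return True


scheduler = QueueScheduler(10000, {"prod": 70, "dev": 30})
print(scheduler.allocate("dev", 2000))   # fits in the dev queue
print(scheduler.allocate("dev", 2000))   # would exceed the dev queue's share
print(scheduler.allocate("prod", 4000))  # the prod queue is unaffected
```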

2. Node Manager

The Node Manager is responsible for executing tasks on its data node. By default, it periodically sends a heartbeat to the Resource Manager that reports the running containers and the resources available for new containers. It looks after an individual node in the cluster and manages the workflow and user jobs on that node. Chiefly, it manages the application containers assigned by the Resource Manager. The Node Manager starts containers by creating the requested container processes, and it kills containers when asked to by the Resource Manager.
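The heartbeat described above can be pictured with a small Python model. This is a sketch, not the actual YARN protocol: the `NodeManager` class, its method names, and the fields of the heartbeat dictionary are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class NodeManager:
    """Toy Node Manager that tracks containers on a single node."""
    node_id: str
    total_memory_mb: int
    running: Dict[str, int] = field(default_factory=dict)  # container id -> MB

    def launch(self, container_id: str, memory_mb: int) -> None:
        self.running[container_id] = memory_mb

    def kill(self, container_id: str) -> None:
        self.running.pop(container_id, None)

    def heartbeat(self) -> dict:
        """Report running containers and the memory left for new ones."""
        used_mb = sum(self.running.values())
        return {
            "node_id": self.node_id,
            "containers": sorted(self.running),
            "available_mb": self.total_memory_mb - used_mb,
        }


nm = NodeManager("node-1", total_memory_mb=8192)
nm.launch("c1", 2048)
nm.launch("c2", 1024)
print(nm.heartbeat())
nm.kill("c1")  # as if requested by the Resource Manager
print(nm.heartbeat())
```

Each heartbeat gives the Resource Manager an up-to-date picture of the node, which is what lets it decide where the next container can be placed.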

3. Containers

A Container is a set of resources, such as memory (RAM) and CPU, on a single node. Containers are scheduled by the Resource Manager and monitored by the Node Manager. YARN containers are managed through a Container Launch Context, which describes how to launch the container; a container gives the application the right to use a specific amount of resources on a particular host.

4. Application Master

The Application Master monitors the execution of tasks and manages the lifecycle of an application running on the cluster. An individual Application Master is associated with each job when it is submitted to the framework. Its chief responsibility is to negotiate resources from the Resource Manager. It works with the Node Managers to execute and monitor the tasks.

To run an application through YARN, the following steps are performed.

- The client contacts the Resource Manager and asks it to run the application process, i.e. it submits the YARN application.

- The Resource Manager then finds a Node Manager that can launch the Application Master in a container.

- The Application Master can either run the computation in its own container and return the result to the client, or request more containers from the Resource Manager, which amounts to distributed computing.

- The client then contacts the Resource Manager to monitor the status of the application.
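The steps above can be traced with a simplified Python simulation. Everything in it is an assumption for illustration: the `ResourceManager` class, its method names, the first-fit placement rule, and the memory figures are not taken from YARN, and only two application states are modelled.

```python
from typing import Dict, List, Optional


class ResourceManager:
    """Toy Resource Manager that places containers on the first node that fits."""

    def __init__(self, node_free_mb: Dict[str, int]):
        self.node_free_mb = dict(node_free_mb)  # node id -> free memory in MB
        self.apps: Dict[str, str] = {}          # application id -> state

    def _place(self, memory_mb: int) -> Optional[str]:
        for node, free_mb in self.node_free_mb.items():
            if free_mb >= memory_mb:
                self.node_free_mb[node] = free_mb - memory_mb
                return node
        return None

    def submit(self, app_id: str, am_memory_mb: int) -> Optional[str]:
        """Steps 1 and 2: accept the application and start its Application Master."""
        node = self._place(am_memory_mb)
        self.apps[app_id] = "RUNNING" if node else "ACCEPTED"
        return node

    def request_containers(self, count: int, memory_mb: int) -> List[str]:
        """Step 3: the Application Master asks for more containers."""
        placed = (self._place(memory_mb) for _ in range(count))
        return [node for node in placed if node is not None]

    def status(self, app_id: str) -> str:
        """Step 4: the client polls for the application state."""
        return self.apps.get(app_id, "UNKNOWN")


rm = ResourceManager({"node-1": 4096, "node-2": 4096})
am_node = rm.submit("app-1", am_memory_mb=1024)
workers = rm.request_containers(count=3, memory_mb=2048)
print(am_node, workers, rm.status("app-1"))
```

In this model the Application Master lands on one node while its worker containers spread across whichever nodes still have room, which is the distributed case described in the third step.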

With MapReduce in Hadoop version 1.0 (MRv1), the numbers of map and reduce slots were defined per node. Because hardware capabilities varied across a Hadoop cluster, the number of tasks on a specific node had to be limited manually. YARN overcomes this shortcoming: the Resource Manager knows the capacity of each node because it communicates with the Node Manager running on that node.
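A small worked comparison shows why fixed slots can waste capacity. The numbers and function names below are made up for illustration, and the model deliberately ignores CPU and any scheduler policy: it only counts how many tasks could run at once on one node.

```python
def mrv1_running_tasks(map_slots: int, reduce_slots: int,
                       pending_maps: int, pending_reduces: int) -> int:
    """Fixed slots: a map task cannot use an idle reduce slot, and vice versa."""
    return min(map_slots, pending_maps) + min(reduce_slots, pending_reduces)


def yarn_running_tasks(node_memory_mb: int, container_mb: int,
                       pending_tasks: int) -> int:
    """Generic containers: any task can use any free memory on the node."""
    return min(node_memory_mb // container_mb, pending_tasks)


# Hypothetical node: 12 GB of memory, configured in MRv1 as 4 map + 2 reduce slots.
# Ten map tasks are waiting and no reduce tasks have started yet.
print(mrv1_running_tasks(4, 2, pending_maps=10, pending_reduces=0))
print(yarn_running_tasks(12288, 2048, pending_tasks=10))
```

Under these assumptions the slot model leaves the two reduce slots idle, while the container model can fill the whole node with whatever work is pending.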

Conclusion

YARN helps overcome the scalability issue of MapReduce in Hadoop 1.0 by splitting the two duties of the Job Tracker, job scheduling and monitoring the progress of tasks, into separate components. It also addresses availability, since in Hadoop 1.0 a Job Tracker failure led to tasks being restarted. YARN brings further benefits, such as better resource utilization, because it provides central resource management with no fixed slots for tasks. With YARN, many of the issues faced in the earlier version of Hadoop are overcome, as it separates data processing from scheduling and resource management. YARN also makes it possible to run interactive queries independently and provides better real-time analysis.
